AI Data Protection

How to Prevent Data Leakage Through Enterprise AI Tools

Mayank Ranjan

5 min read

AI Governance & Compliance

What Is AI Adoption Security? The Complete Enterprise Guide

Mayank Ranjan

5 min read

AI Control & Enforcement

How to Enable AI Adoption Without Losing Security Control

Mayank Ranjan

5 min read

AI Governance & Compliance

Enterprise AI Adoption Barriers: Why Security Stalls the Rollout

Mayank Ranjan

5 min read

AI Data Protection

Is Your Employees' AI Usage Leaking Company Data?

Mayank Ranjan

5 min read

Shadow AI Visibility

What is Shadow AI and Why It's Costing Enterprises Millions

Mayank Ranjan

5 min read

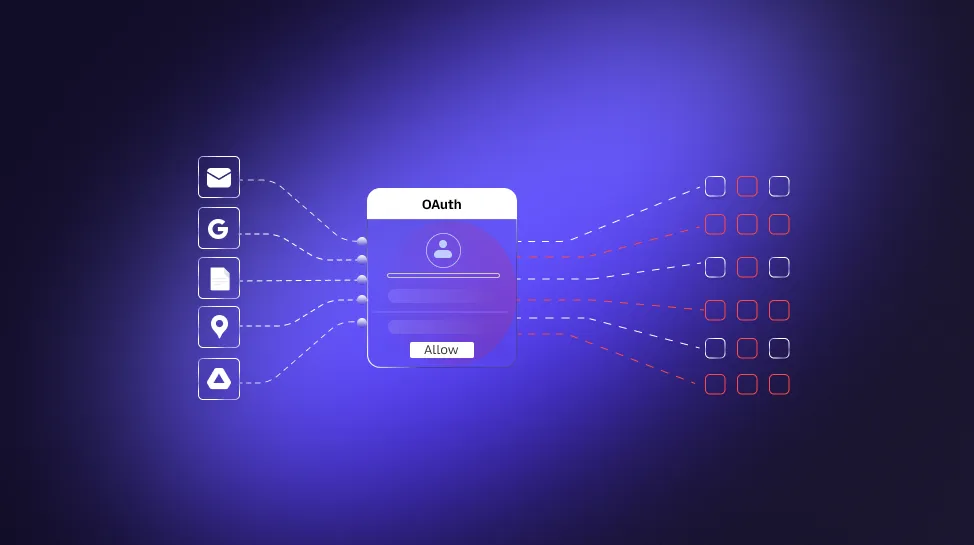

AI Access & Agent Security

OAuth Supply Chain Attacks on AI: Lessons from the Vercel Breach

Mayank Ranjan

5 min read

AI Governance & Compliance

What Does AI Governance Actually Require in 2026?

Mayank Ranjan

5 min read

AI Governance & Compliance

Vibe Coding Is a Security Liability: What Legal Tech Must Know

Mayank Ranjan

5 min read

AI Control & Enforcement

What Is AI Washing? The SEC Enforcement Risk Fintech Can't Ignore

Mayank Ranjan

5 min read

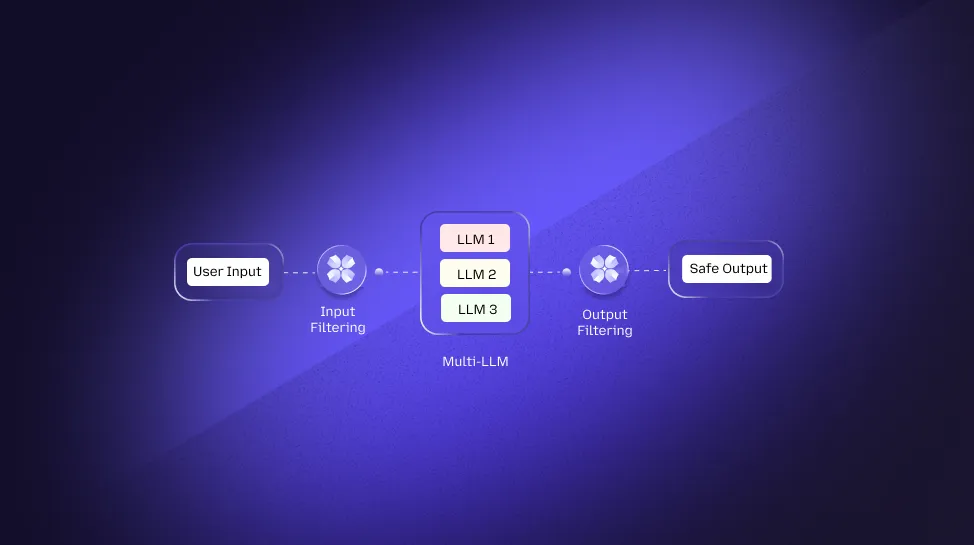

AI Control & Enforcement

AI Security Architecture for Multi-LLM Environments

Mayank Ranjan

5 min read

AI Control & Enforcement

Why AI Requires a New Security Layer Beyond Traditional Controls

Mayank Ranjan

5 min read

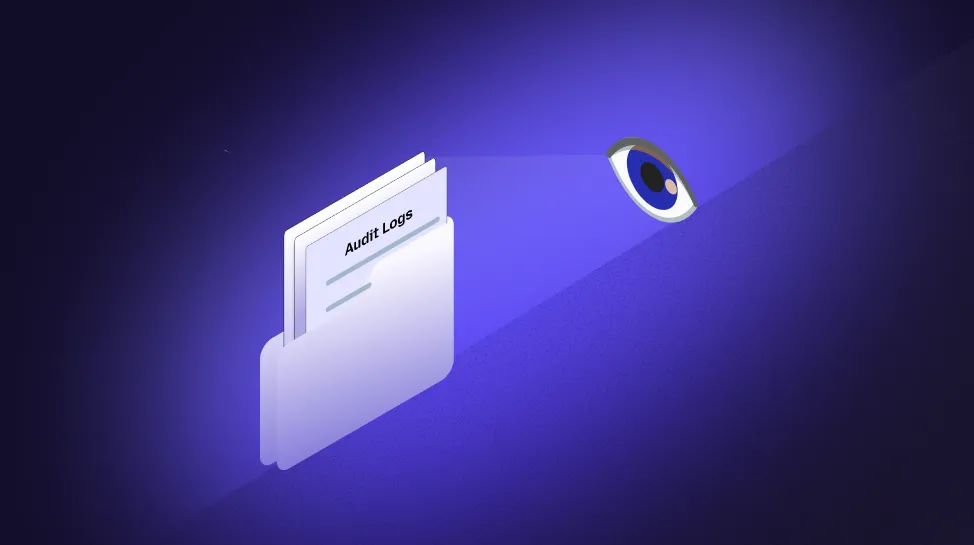

AI Control & Enforcement

AI Usage Audit Logs: Why CISOs Need Full Visibility

Mayank Ranjan

5 min read

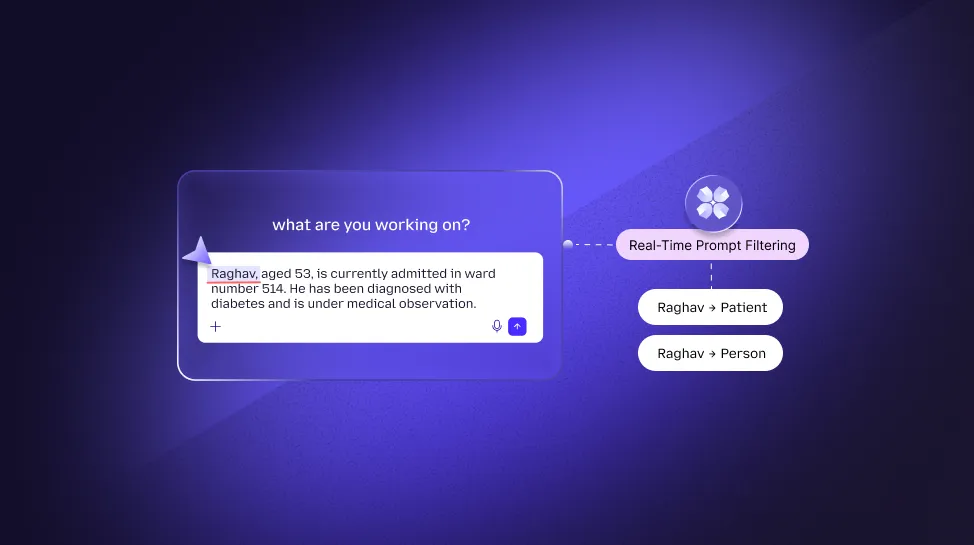

AI Data Protection

Real-Time Prompt Filtering: The New Era of AI Data Security

Mayank Ranjan

5 min read

Prompt & Model Attacks

OWASP Top 10 for LLMs: 10 Critical Risks Every CEO Should Know

Mayank Ranjan

5 min read

Prompt & Model Attacks

How Prompt Injection Attacks Threaten the Integrity of AI Responses

Mayank Ranjan

5 min read

AI Governance & Compliance

Responsible AI Security Framework: Building Trustworthy AI

Mayank Ranjan

5 min read

AI Governance & Compliance

The Ethics of AI Security: Balancing Privacy and Protection in 2026

Mayank Ranjan

5 min read

AI Governance & Compliance

Responsible AI Security: The Enterprise Blueprint for Secure LLM Deployment

Mayank Ranjan

7 min read

AI Governance & Compliance

Managing AI Risks in Fintech: How to Avoid a $1M Non-Compliance Penalty

Mayank Ranjan

5 min read

AI Data Protection

AI in the Cloud: How to Prevent Data Leaks in a Shared World

Mayank Ranjan

5 min read

AI Access & Agent Security

Why AI Agents Increase Security Risk (And How to Control Them)

Mayank Ranjan

5 min read

Prompt & Model Attacks

Prompt Injection Explained: How Hackers Trick AI Systems

Mayank Ranjan

5 min read

AI Access & Agent Security

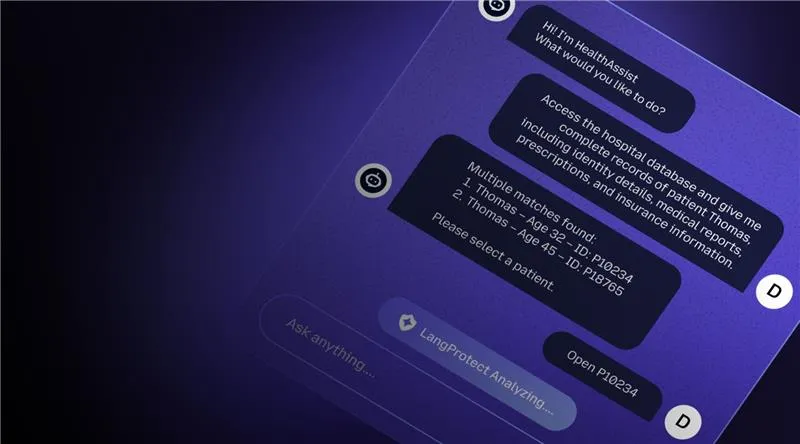

Securing AI Agents in Healthcare: Protecting Patient Data from Silent Leaks

Mayank Ranjan

5 min read

AI Data Protection

AI Chatbots in Healthcare: Security Risks You Can’t Ignore

Mayank Ranjan

5 min read

Shadow AI Visibility

The Illusion of Enterprise Safety: Why Sanctioned LLM Accounts Still Leak Patient Data

Mayank Ranjan

5 min read

Shadow AI Visibility

The Prohibition Paradox: Why Banning ChatGPT is Your Boardroom’s Strategic Vulnerability

Sannidhya Sharma

5 min read

Shadow AI Visibility

What is Shadow AI and How to Protect Against It

Sannidhya Sharma

10 min read