AI Usage Audit Logs: Why CISOs Need Full Visibility

A support engineer is trying to move faster. A customer escalation comes in, urgent, messy, and filled with context. Instead of rewriting it manually, they paste the entire thread into ChatGPT and ask for a cleaner version.

Inside that prompt sits everything you would normally protect: the customer’s name, their account ID, and internal issue details that were never meant to leave controlled systems.

From a security perspective, nothing looks unusual. The employee is logged in. The browser session is active. A request goes out to an external domain. All of this is captured and stored exactly as expected.

But the one thing that actually matters, the interaction itself, is invisible.

There is no record of what was pasted. No visibility into whether sensitive data was included. No trace of what the model generated in response. No indication of whether that response introduced additional risk.

Two weeks later, during a routine compliance review, a question surfaces:

Was any sensitive customer data exposed through AI tools?

Security teams start digging.

They can see that the tool was accessed.

They can see when it happened.

They can see which user was involved.

But they cannot answer the only questions that matter:

- What data left the system?

- Was it personally identifiable or regulated data?

- Was it blocked, redacted, or fully exposed?

There is no audit trail to reconstruct the event. No way to prove what happened, or what did not.

And that’s the problem.

This is not a detection failure.

This is a visibility failure.

And today, most CISOs are operating inside it, trying to secure systems where the most critical interactions are never recorded.

What Are AI Usage Audit Logs?

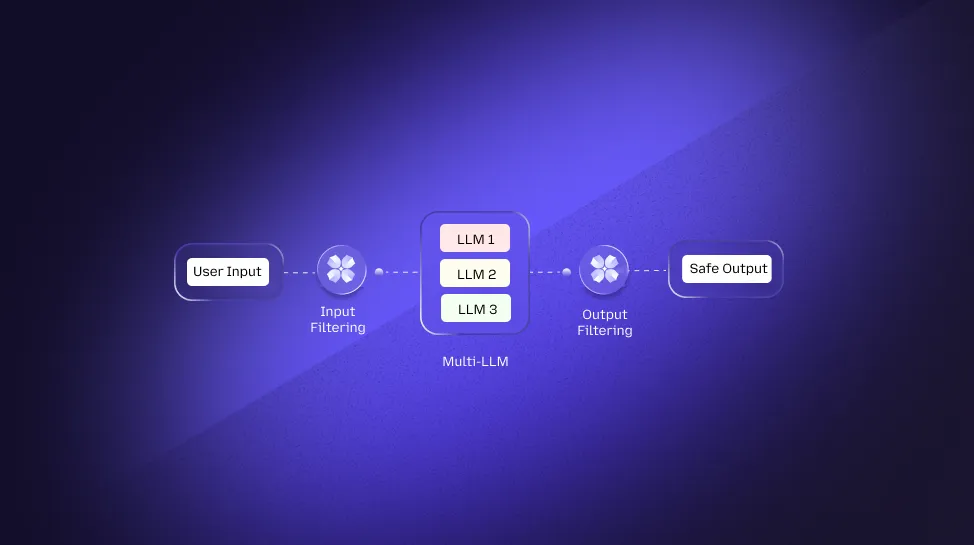

AI usage audit logs capture prompts, responses, user identity, intent, and data classification across AI tools, providing a complete record of AI interactions.

They exist to capture what actually happens inside AI interactions: the moment a user types a prompt, the data included in it, the response generated by the model, and the context around that exchange. They do not just record that a tool was used. They record how it was used and what was exposed during that interaction.

This distinction is critical.

Because in AI, risk does not move through files or APIs in a way that traditional tools can easily detect. It moves through text, often in a single prompt. A user can paste sensitive information, receive a processed response, and move on, all within a session that appears completely normal in conventional logs.

Nothing looks broken. Nothing is flagged.

And yet, something important may have already been exposed.

What AI Audit Logs Actually Capture

Each log entry covers the full lifecycle of one AI interaction: the prompt, the model response, the user's identity, the inferred intent, and the data classification. Together these form a reconstructable record of what actually happened inside the AI tool.

This includes identifying whether the prompt contained PII or PHI, understanding the intent behind the request, and recording the enforcement outcome: whether the interaction was blocked, redacted, or allowed.

Instead of isolated signals, you get a complete, contextual record of the interaction.
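As a rough illustration, one interaction could be stored as a single structured record. The sketch below is a minimal Python example, not LangProtect's actual schema: the `AuditRecord` class, its field names, and the three action values are assumptions chosen to mirror the elements described above.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

# Assumed enforcement outcomes, mirroring "blocked, redacted, or allowed".
ACTIONS = {"allowed", "redacted", "blocked"}


@dataclass
class AuditRecord:
    user_id: str
    tool: str                   # e.g. "chatgpt"
    prompt: str
    response: Optional[str]     # None when the interaction was blocked
    intent: str                 # hypothetical label such as "rewrite_ticket"
    data_classes: List[str]     # e.g. ["PII"]; empty if nothing was detected
    action: str
    request_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        # Reject outcomes outside the assumed vocabulary.
        if self.action not in ACTIONS:
            raise ValueError(f"unknown action: {self.action!r}")

    def to_json(self) -> str:
        # One JSON object per interaction, ready to ship to a log store.
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    user_id="u-1042",
    tool="chatgpt",
    prompt="Rewrite this escalation for [PERSON], account [ACCOUNT_ID]",
    response="Here is a cleaner version...",
    intent="rewrite_ticket",
    data_classes=["PII"],
    action="redacted",
)
print(record.to_json())
```

Keeping identity, content, classification, and outcome in one record is what lets an investigator answer "what was shared, and what happened to it" from a single lookup.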

What This Means for Security and Accountability

For a CISO, the difference between traditional logs and AI audit logs is not technical. It is operational.

Without AI audit logs, you can confirm that an interaction happened.

With AI audit logs, you can explain that interaction in detail.

That means being able to answer questions that matter during an incident or audit:

- What exactly was shared?

- Was it sensitive or regulated data?

- What did the system generate in response?

- Were any controls applied?

Without those answers, investigations stall and compliance becomes difficult to prove.

Why Do CISOs Lack Visibility into AI Usage Today?

Most AI interactions happen outside traditional security controls, which means SIEM, DLP, and CASB tools never capture the part that actually matters: the interaction itself.

On the surface, nothing has changed. Employees are still working inside browsers. Traffic is still flowing through known domains. Authentication systems are still logging access. From a traditional security lens, everything looks normal.

But AI introduces a new layer that sits between user action and system visibility, and that layer is largely invisible.

The problem is not that organisations do not have tools.

The problem is that those tools were never designed to see conversational interactions.

How This Actually Happens in Real Environments

This gap does not come from a single failure. It builds up through everyday usage patterns that quietly bypass existing controls.

1. Shadow AI Usage

An employee opens ChatGPT or Gemini in a browser to speed up a task. There is no malicious intent, just efficiency. The tool is not formally integrated into enterprise systems, so it sits completely outside the logging pipeline.

From a security perspective, the only visible signal is that a known domain was accessed.

What remains invisible is everything inside that session: the prompt entered, the data included, and the response generated.

This is what makes shadow AI different from traditional shadow IT.

It is not just an unapproved application. It is an unobservable interaction layer.

2. Prompt-Based Data Leakage

Traditional data protection relies heavily on detecting file movement: uploads, downloads, attachments, and transfers.

AI breaks that assumption.

Sensitive data is no longer moved as a file. It is typed or pasted directly into a prompt. A user can include customer details, internal documents, or proprietary logic in plain text, and the system treats it as just another input field.

No file is transferred.

No upload is triggered.

No file-based DLP rule fires.

The data leaves the organisation as language, not as an asset.

And most security systems are not built to inspect meaning at that level.

This is exactly why real-time prompt filtering and interaction-level controls matter.
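To make the idea concrete, here is a minimal Python sketch of interaction-level filtering: it scans prompt text for sensitive patterns and redacts them before the prompt leaves the organisation. The two regular expressions, the `ACC-` account ID format, and the function names are illustrative assumptions. Real detection would need much broader coverage, typically with trained entity models rather than regexes alone.

```python
import re

# Illustrative patterns only. The account ID format is hypothetical.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ACCOUNT_ID": re.compile(r"\bACC-\d{6}\b"),
}


def scan_prompt(prompt: str) -> list:
    """Return findings with entity type and character offsets."""
    findings = []
    for entity_type, pattern in PATTERNS.items():
        for match in pattern.finditer(prompt):
            findings.append(
                {"type": entity_type, "start": match.start(), "end": match.end()}
            )
    return sorted(findings, key=lambda f: f["start"])


def redact(prompt: str, findings: list) -> str:
    """Replace each finding with a placeholder such as [EMAIL]."""
    # Work from the end of the string so earlier offsets stay valid.
    for f in sorted(findings, key=lambda f: f["start"], reverse=True):
        prompt = prompt[: f["start"]] + f"[{f['type']}]" + prompt[f["end"]:]
    return prompt


text = "Customer jane@example.com, account ACC-123456, reports login failure."
found = scan_prompt(text)
print(redact(text, found))
```

The point of the sketch is that the check runs on the text of the prompt itself, so it does not depend on a file transfer or upload event ever occurring.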

3. The SaaS Visibility Gap

AI tools are delivered as browser-based SaaS platforms. That makes them easy to adopt and extremely difficult to monitor at the interaction level.

Security teams can see that a tool like ChatGPT is being used. They can track frequency, users, and access patterns. But they cannot see what is happening inside the session.

There is no visibility into:

- what was entered

- how the model responded

- whether sensitive data was involved

The result is partial visibility that looks complete on dashboards but lacks the depth needed for real security.

Where Traditional Security Breaks

This is where the limitations of existing security architecture become clear.

SIEM platforms are designed to aggregate logs, but they depend on the data being available in the first place. If prompts and responses are never captured, there is nothing meaningful to correlate.

DLP systems are built to detect structured data movement. They struggle to identify sensitive information embedded within conversational text, especially when no file transfer occurs.

CASB solutions can monitor application usage and enforce access policies, but they stop at the application boundary. They do not track what happens inside user interactions.

And logs, in general, lack the ability to reconstruct context. They can show that something happened, but not what that “something” actually was.

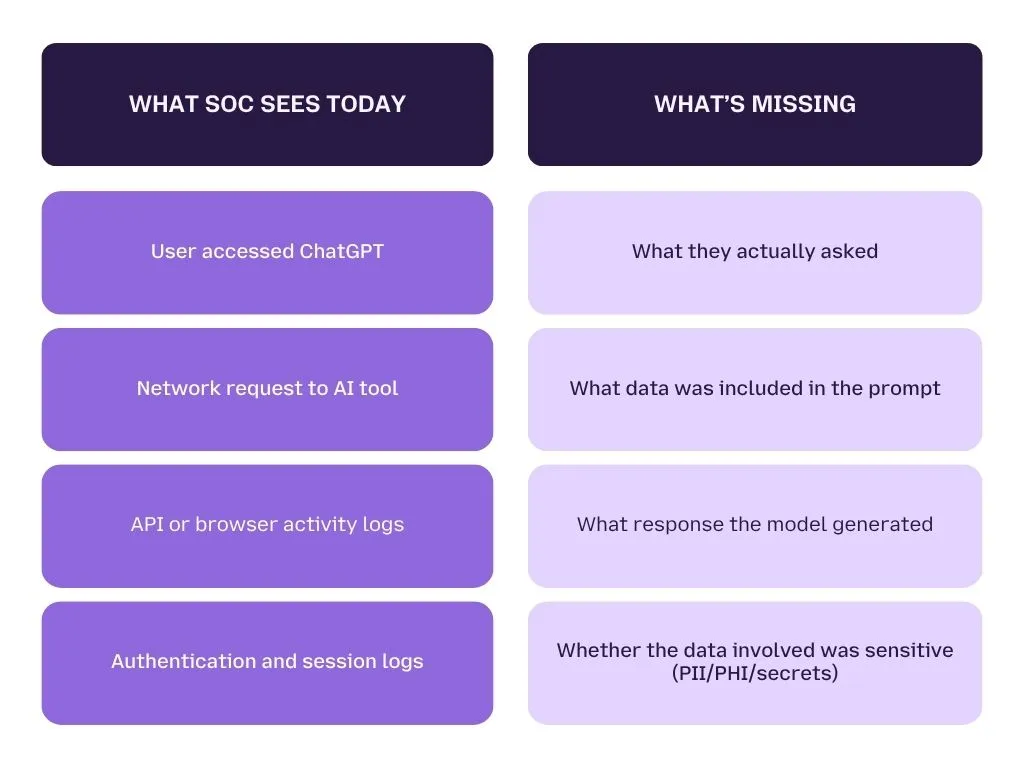

What Does Your SOC Actually See During an AI Incident?

During an AI incident, most SOC teams only see fragmented signals, not the interaction that actually caused the risk.

When an alert comes in, the SOC does what it always does. It starts with logs.

There is a user. There is a timestamp. There is a request to an external AI tool. Everything looks familiar, structured, traceable, and aligned with existing workflows.

But within minutes, the investigation hits a wall.

Because the one thing that matters, the interaction itself, was never captured.

The SOC is not dealing with a lack of data.

It is dealing with the wrong kind of data.

What the SOC Sees vs What It Needs

At first glance, the logs look complete. But when mapped against what’s required to investigate an AI incident, the gaps become obvious.

Where the Investigation Breaks

An alert is raised, maybe from unusual usage patterns, maybe from a compliance trigger, or even from a manual report. The analyst pulls up logs and starts correlating events.

They can confirm:

- the user accessed an AI tool

- the session duration

- the time of the interaction

But when they try to go deeper, the investigation stalls.

There is no record of:

- the prompt that was entered

- the data contained within it

- the response generated by the model

- whether any controls were applied

At this point, the SOC is forced to rely on assumptions instead of evidence.

And that is a dangerous place to be, especially in regulated environments.

What This Means for Incident Response

In traditional incidents, whether malware, unauthorised access, or data exfiltration, there is usually a trail. Logs may be complex, but they exist. With enough effort, the SOC can piece together the sequence of events.

AI changes that dynamic.

Here, the most critical part of the incident happens inside a conversation that leaves no trace unless explicitly logged. There is no packet capture that reveals intent. No file hash to analyse. No endpoint artifact to reconstruct.

The interaction itself is the incident.

And if that interaction is not recorded, the investigation becomes incomplete by default.

See What Your Security Stack Is Missing

Most AI interactions never make it into your logs, and that’s where the real risk lives. Get full visibility into prompts, responses, and sensitive data exposure across your organisation.

How AI Usage Patterns Create Hidden Security Risk Across Your Organisation

AI usage across organisations is not just increasing. It is creating continuous, measurable exposure through everyday interactions, where sensitive data flows through prompts without consistent visibility or control.

At a surface level, AI adoption looks like a success story.

Usage is growing. Teams are productive. Dashboards show steady activity: prompts generated, users active, tools accessed. It feels measurable, even controlled.

But that view only captures how often AI is used, not how it is used.

And that distinction is where the real risk sits.

Because once you move beyond usage metrics and start examining interaction patterns, a very different picture emerges, one where sensitive data is routinely embedded into prompts, controls are inconsistently applied, and exposure happens quietly within normal workflows.

AI Adoption Is Expanding Faster Than Security Visibility

AI tools are being adopted across teams at a pace that traditional security models cannot keep up with.

Support teams use AI to rewrite escalations. Engineers use it to debug code. Product and marketing teams rely on it for content and research. What begins as productivity quickly becomes dependency.

This leads to a steady increase in prompt volume, but more importantly, it increases the likelihood that real business data enters those prompts.

Not as structured uploads, but as natural language.

Customer identifiers. Internal case notes. Operational details. Fragments of sensitive information that, on their own, may seem harmless, but collectively represent regulated or confidential data.

Sensitive Data Exposure Is Embedded in Everyday AI Workflows

What makes AI risk difficult to detect is not its scale. It is its subtlety.

Sensitive interactions are not isolated incidents. They are embedded within normal workflows.

A user rewriting a support ticket includes a customer reference.

A developer debugging an issue pastes internal logs.

A legal draft includes contextual details from an ongoing case.

None of these actions trigger traditional alarms.

There is no file transfer. No external attachment. No obvious breach pattern.

And yet, sensitive data has already moved beyond controlled systems, simply because it was entered as part of a prompt.

Over time, these interactions accumulate, creating a pattern of exposure that remains invisible unless specifically tracked at the interaction level.

AI Usage Is Fragmented Across Multiple Tools and Environments

Another layer of complexity comes from how AI tools are actually used.

Even in organisations with approved platforms, employees rarely stick to a single tool. They move across ChatGPT, Gemini, Claude, and others depending on the task.

This creates a fragmented environment where:

- usage is distributed across platforms

- policies are inconsistent

- visibility is incomplete

From a dashboard perspective, this appears as diversified usage. From a security perspective, it creates multiple blind spots operating simultaneously.

AI Risk Concentrates Around Specific Users and Workflows

A recurring pattern in AI usage analysis is that risk is not evenly distributed.

A relatively small group of users, those deeply involved in operational workflows, generates a disproportionate share of sensitive interactions.

These are not malicious actors. They are often the most efficient, most engaged users. They rely on AI to accelerate tasks, which naturally increases their exposure surface.

Without visibility into their interactions, these users become unintentional risk concentration points.
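One simple way to check whether this holds in your own environment is to measure how much of the sensitive activity comes from the top few users. The Python sketch below assumes you already have one user ID per sensitive interaction from your audit logs. The function name and the choice of "top N users" as the measure are illustrative, not a standard metric definition.

```python
from collections import Counter


def top_user_share(sensitive_user_ids, top_n=1):
    """Share of sensitive interactions generated by the top_n busiest users.

    sensitive_user_ids holds one user ID per sensitive interaction.
    Returns None when there are no sensitive interactions at all.
    """
    counts = Counter(sensitive_user_ids)
    total = sum(counts.values())
    if total == 0:
        return None
    top = sum(count for _, count in counts.most_common(top_n))
    return top / total


# Ten sensitive interactions spread across four users.
ids = ["ana"] * 6 + ["ben"] * 2 + ["cal", "dee"]
print(top_user_share(ids, top_n=1))
print(top_user_share(ids, top_n=2))
```

A high share for a small `top_n` suggests that targeted guidance or tighter policies for a handful of workflows could reduce exposure more than organisation-wide restrictions.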

Why Metrics Alone Do Not Provide Security Visibility

Dashboards can show growth. They can show activity. They can even show flagged interactions.

But metrics alone cannot explain:

- what kind of data is being shared repeatedly

- which workflows are driving exposure

- whether controls are effectively reducing risk or being bypassed

Without context, numbers create awareness, but not control.

This is where most organisations stop. They see AI usage. They measure it. But they cannot interpret the risk inside it.

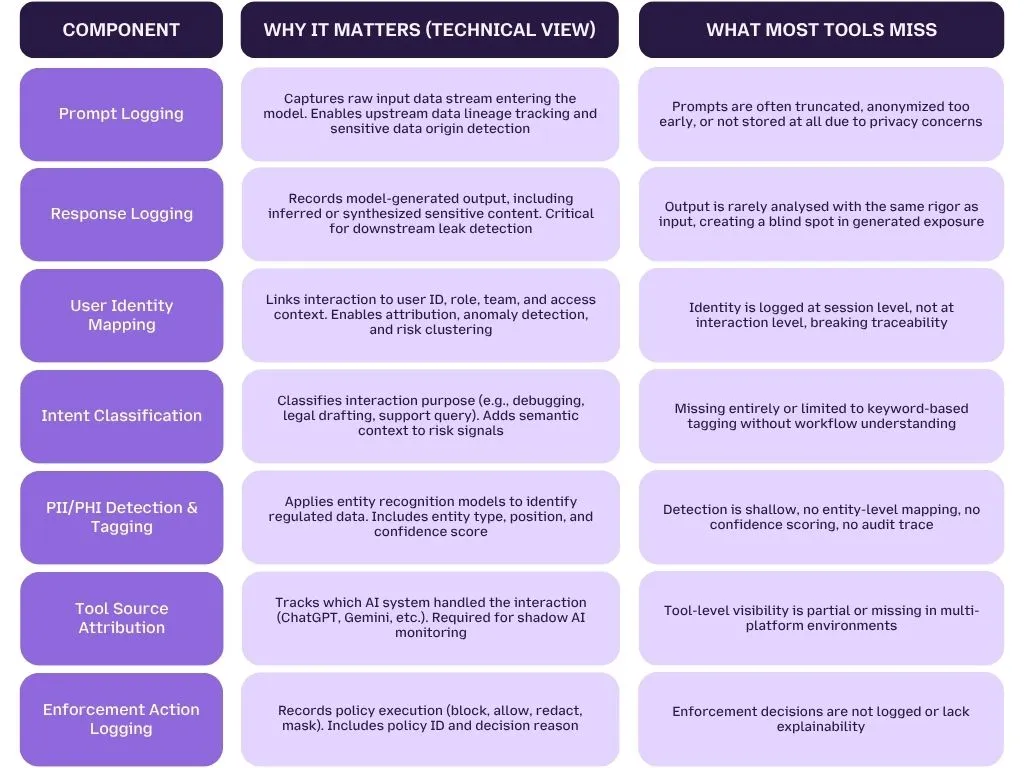

What Should a Complete AI Audit Log Include?

A complete AI audit log must capture every stage of an interaction: prompt, model processing, response, detection signals, and enforcement actions. It must also preserve the context, identity, and traceability required for security, compliance, and investigation.

Most logging systems today are still built for APIs and infrastructure.

AI, however, operates as a semantic layer on top of infrastructure, where the risk is embedded inside natural language. That changes what needs to be logged.

Core Components of a Complete AI Audit Log

The table below outlines what a technically complete audit log must include, and where most systems fall short.

How the AI Interaction Lifecycle Should Be Logged

A complete audit log is not a single record. It is a sequence of linked states.

A typical interaction should be captured as:

1. Input Stage (Prompt Ingestion)

The raw prompt is recorded with a timestamp, user identity, and tool source. Initial scanning for sensitive entities is triggered.

2. Detection Stage (Risk Analysis)

Entity detection models identify PII/PHI and assign:

- entity type, such as PERSON or MEDICAL_RECORD

- position in text

- confidence score

3. Transformation Stage (Sanitization / Policy Execution)

Based on policy rules, the system may:

- redact sensitive fields

- mask identifiers

- block the interaction entirely

4. Output Stage (Response Generation)

The final response, original or modified, is logged along with:

- transformation metadata

- policy applied

- decision outcome

5. Audit Layer (Traceability Record)

All stages are linked via a unique request ID, enabling full replay of the interaction lifecycle.
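The five stages above can be sketched as a sequence of events that share one request ID. This is a simplified Python illustration under stated assumptions: the event field names, the `log_interaction` and `replay` functions, and the pluggable `detect`, `policy`, and `call_model` callables are all hypothetical stand-ins for whatever detection engine, policy engine, and model client an organisation actually uses.

```python
import uuid
from datetime import datetime, timezone


def _event(request_id, stage, **details):
    """Build one audit event; every event carries the shared request ID."""
    return {
        "request_id": request_id,
        "stage": stage,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        **details,
    }


def log_interaction(user_id, tool, prompt, detect, policy, call_model):
    """Run one interaction and return its ordered audit events."""
    request_id = str(uuid.uuid4())
    # 1. Input stage: raw prompt, identity, tool source.
    events = [_event(request_id, "input", user_id=user_id, tool=tool, prompt=prompt)]
    # 2. Detection stage: entity type, position, confidence.
    entities = detect(prompt)
    events.append(_event(request_id, "detection", entities=entities))
    # 3. Transformation stage: decision is "allow", "redact", or "block".
    decision, outbound = policy(prompt, entities)
    events.append(
        _event(request_id, "transformation", decision=decision, outbound_prompt=outbound)
    )
    # 4. Output stage: a blocked prompt never reaches the model.
    response = None if decision == "block" else call_model(outbound)
    events.append(_event(request_id, "output", decision=decision, response=response))
    return events


def replay(store, request_id):
    """5. Audit layer: pull every stage of one interaction, in order."""
    return [e for e in store if e["request_id"] == request_id]


# Stand-in components, for demonstration only.
def detect(prompt):
    idx = prompt.find("Jane Doe")
    if idx < 0:
        return []
    return [{"type": "PERSON", "start": idx, "end": idx + 8, "confidence": 0.9}]


def policy(prompt, entities):
    if not entities:
        return "allow", prompt
    for e in sorted(entities, key=lambda e: e["start"], reverse=True):
        prompt = prompt[: e["start"]] + f"[{e['type']}]" + prompt[e["end"]:]
    return "redact", prompt


def call_model(prompt):
    return f"(model reply to: {prompt})"


store = log_interaction(
    "u-1042", "chatgpt", "Summarise the case for Jane Doe", detect, policy, call_model
)
for event in replay(store, store[0]["request_id"]):
    print(event["stage"], event.get("decision"))
```

Logging each stage as its own event, rather than overwriting a single row, preserves both the original prompt and the version that was actually sent, which is what makes replay possible.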

Key Technical KPIs for AI Audit Logging

To evaluate whether an AI audit system is truly complete, you need to measure coverage, accuracy, and enforcement effectiveness.

1. Detection Coverage Rate

Measures how many interactions are scanned for sensitive data.

Formula:

Detection Coverage = Scanned Prompts / Total Prompts

Expected benchmark:

Coverage should approach 100%, because unscanned prompts create blind spots.

2. Sensitive Interaction Rate

Indicates how frequently sensitive data appears in prompts.

In practice, a significant portion of prompts can contain sensitive entities, often driven by operational workflows.

3. Enforcement Effectiveness Rate

Measures how many detected risks are successfully controlled.

Formula:

Enforcement Effectiveness = (Blocked + Redacted Interactions) / Detected Sensitive Interactions

This KPI reflects whether policies are actually reducing exposure, not just detecting it.

4. Residual Risk Rate

Captures interactions where sensitive data passed through without enforcement.

Formula:

Residual Risk = Exposed Interactions / Detected Sensitive Interactions

Even a small residual percentage represents real data exposure risk at scale.

5. User Risk Concentration Index

Measures how risk is distributed across users.

A small group of users typically generates a disproportionate share of high-risk interactions, making user-level visibility critical.

6. Cross-Tool Visibility Ratio

Tracks how much AI usage is covered across all tools.

Formula:

Visibility Ratio = Tracked AI Interactions / Total AI Interactions Across Tools

Low ratios indicate shadow AI blind spots.
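The ratio-based KPIs above can be computed directly from audit records. The Python sketch below is one possible reading of the formulas, with several assumptions: each record is a dict with `scanned`, `sensitive`, and `action` keys (hypothetical names), the sensitive interaction rate is taken over scanned prompts because no formula is given for it, and both enforcement effectiveness and residual risk use detected sensitive interactions as the denominator. The total interaction count for the visibility ratio cannot come from the audit log itself, so it is passed in as an outside estimate.

```python
from collections import Counter


def _ratio(numerator, denominator):
    """Return a ratio, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def audit_kpis(records, estimated_total_interactions=None):
    """Compute coverage, sensitivity, enforcement, and residual risk ratios.

    records: dicts with 'scanned' (bool), 'sensitive' (bool), and
    'action' ("allowed", "redacted", or "blocked").
    """
    records = list(records)
    scanned = [r for r in records if r["scanned"]]
    sensitive = [r for r in scanned if r["sensitive"]]
    actions = Counter(r["action"] for r in sensitive)
    controlled = actions["blocked"] + actions["redacted"]
    exposed = actions["allowed"]
    return {
        "detection_coverage": _ratio(len(scanned), len(records)),
        "sensitive_interaction_rate": _ratio(len(sensitive), len(scanned)),
        "enforcement_effectiveness": _ratio(controlled, len(sensitive)),
        "residual_risk": _ratio(exposed, len(sensitive)),
        # Tracked interactions versus an external estimate of all AI usage.
        "visibility_ratio": _ratio(len(records), estimated_total_interactions),
    }


sample = (
    [{"scanned": False, "sensitive": False, "action": "allowed"}] * 2
    + [{"scanned": True, "sensitive": False, "action": "allowed"}] * 4
    + [{"scanned": True, "sensitive": True, "action": "blocked"}] * 2
    + [{"scanned": True, "sensitive": True, "action": "redacted"}]
    + [{"scanned": True, "sensitive": True, "action": "allowed"}]
)
for name, value in audit_kpis(sample, estimated_total_interactions=40).items():
    print(name, value)
```

With only three possible outcomes, enforcement effectiveness and residual risk sum to one, so tracking either is enough to see whether policies are reducing exposure over time.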

Why Incomplete Logs Break Security and Compliance

From a technical standpoint, missing even one component breaks the audit chain.

- Without prompt logging → no input trace

- Without response logging → no output validation

- Without identity → no accountability

- Without detection → no compliance evidence

- Without enforcement logs → no proof of control

This results in systems that can alert but not explain, and detect but not prove.
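A basic completeness check can surface these breaks before an auditor does. The Python sketch below assumes each audit record is a dict and that the five components map to the hypothetical keys shown. It checks only that each key was recorded, since a blocked interaction legitimately has no response and a clean prompt legitimately has no detections.

```python
# Hypothetical record keys mapped to the consequence of their absence.
REQUIRED_COMPONENTS = {
    "prompt": "no input trace",
    "response": "no output validation",
    "user_id": "no accountability",
    "detection": "no compliance evidence",
    "enforcement": "no proof of control",
}


def audit_gaps(record: dict) -> list:
    """List the audit-chain components missing from one record."""
    return [
        f"{key} missing: {impact}"
        for key, impact in REQUIRED_COMPONENTS.items()
        if key not in record
    ]


complete = {
    "prompt": "Rewrite this ticket",
    "response": None,          # recorded as None because the prompt was blocked
    "user_id": "u-1042",
    "detection": [],           # scanned, nothing found
    "enforcement": "blocked",
}
partial = {"prompt": "Rewrite this ticket", "user_id": "u-1042", "detection": []}

print(audit_gaps(complete))
print(audit_gaps(partial))
```

Running a check like this across a sample of records gives a quick view of which part of the chain a logging pipeline is dropping.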

For regulated environments, this is a critical gap, because compliance requires auditability, not just visibility.

Build an Audit Log That Goes Beyond Visibility

Most tools stop at detection.

LangProtect captures the full interaction lifecycle, from prompt to enforcement, with complete traceability.

See how your AI interactions actually behave, and where your real risks are.

Where Existing AI Security Approaches Fall Short

Most current AI security approaches focus on monitoring usage or blocking risk, but they fail to capture the full interaction context required for investigation, accountability, and compliance.

At a high level, organisations are not starting from zero.

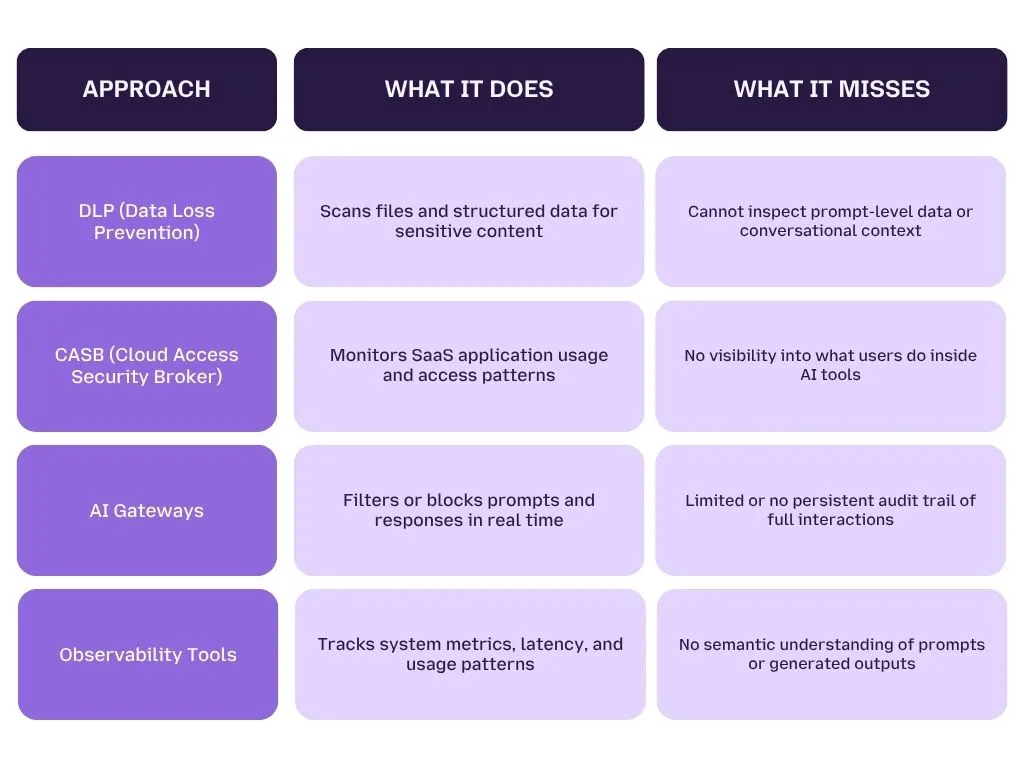

They already have DLP, CASB, observability tools, and in some cases, AI gateways layered into their stack. Each of these plays a role in managing risk.

But none of them were built for language-driven systems where risk lives inside prompts and responses.

Comparison of Current Approaches vs AI Interaction Needs

How AI Audit Logs Enable Real Security and Compliance

AI audit logs enable investigation, prevention, and compliance by providing complete visibility into every AI interaction, from prompt to response to enforcement decision.

Once interaction-level logging is in place, the role of security shifts.

Instead of reacting to fragmented signals, teams can operate with full context, allowing them to understand not just that an event occurred, but exactly how and why it happened.

This changes how incidents are investigated, how risks are controlled, and how compliance is demonstrated.

1. Investigation: From Guesswork to Reconstruction

In a typical AI incident without logs, teams rely on indirect signals: timestamps, access logs, or user reports.

With complete audit logs, the interaction becomes fully reconstructable.

Security teams can:

- review the exact prompt entered

- identify whether sensitive data was included

- analyse the model’s response

- trace the interaction back to a specific user and session

This transforms investigation from inference-based to evidence-based.

2. Prevention: From Detection to Control

Detection alone does not reduce risk.

The real value comes from enforcement tied to context.

With complete audit logging, organisations can:

- block prompts containing regulated data before they are processed

- redact sensitive entities in real time

- trigger alerts based on intent and risk level

More importantly, every action is recorded.

This creates a feedback loop where security teams can evaluate:

- which policies are effective

- where users are repeatedly triggering risk

- how exposure patterns are evolving

3. Compliance: From Reporting to Audit Readiness

Regulatory frameworks like HIPAA and GDPR are not just concerned with detection. They require traceability and accountability.

AI audit logs provide:

- a record of how sensitive data was handled

- evidence of enforcement actions

- traceability across the full interaction lifecycle

This enables organisations to move from reactive reporting to proactive audit readiness.

The Shift from Visibility to Governance

With audit logs in place, AI security evolves into governance.

Teams can:

- define policies based on real usage patterns

- enforce controls consistently across tools

- continuously refine their security posture

This is not just monitoring. It is active control over AI interactions.

How LangProtect Closes the AI Visibility Gap

LangProtect closes the AI visibility gap by capturing every AI interaction end-to-end (prompt, response, intent, risk signals, and enforcement) across all tools in real time.

Most security tools show that AI is being used.

LangProtect shows what is actually happening inside each interaction.

Instead of fragmented signals like tool access or API calls, it records the full lifecycle of an interaction: what the user entered, how the model responded, whether sensitive data was involved, and what action was taken.

This creates a complete, traceable record that security teams can investigate, analyse, and act on.

What makes this important is not just visibility, but context.

Each interaction is enriched with:

- who performed it

- what they were trying to do

- whether sensitive data such as PII, PHI, or secrets was present

- how the system responded, whether allowed, blocked, or redacted

This allows teams to move beyond raw logs and understand risk at a behavioural level.

LangProtect also brings consistency across a fragmented AI environment. Whether users are working in ChatGPT, Gemini, or other tools, all interactions are captured within a single audit layer, eliminating blind spots created by shadow AI usage.

At the same time, enforcement is built into the system. Sensitive prompts can be blocked, data can be redacted, and every decision is logged with context, making controls verifiable, not assumed.

Can You See What Your AI Is Exposing?

If you can’t track prompts, responses, and data flow, you’re operating without control.

LangProtect helps you monitor, audit, and secure every AI interaction in real time.