Secure AI Governance for Government & Public Sector

AI adoption in the public sector is scaling rapidly, introducing unique risks to citizen data and national security. LangProtect provides the resilient guardrails needed to govern AI workflows, redact sensitive information in real time, and automate compliance with federal mandates, ensuring your mission remains secure and trustworthy.

Safeguarding the Public Mission in an AI-First World

While GenAI empowers agencies to serve the public with unprecedented speed, it fundamentally alters the government attack surface. As workflows move from simple "chat" to autonomous "execution," traditional security leaves a governance vacuum. Securing the modern agency requires more than monitoring; it requires architectural sovereignty over every AI-driven action to protect national interests and public trust.

Unprecedented Surface Expansion

71%

of public sector entities accelerated their AI integration in 2024, creating a massive, highly privileged footprint that traditional IT controls were never designed to oversee.

The Tactical Readiness Deficit

<15%

of agency security leaders believe their current frameworks are robust enough to mitigate non-deterministic risks like multi-turn "Crescendo" jailbreaks or indirect prompt injection.

Workforce Knowledge Gaps

65%

of government staff admit to a lack of formal training in AI security, leading to a surge in sensitive data entering unmanaged personal accounts and browser-based "Shadow AI" tools.

Accelerating Incident Volume

56.4%

increase in AI-specific security breaches within government networks, ranging from unintended misinformation cascades to sophisticated credential siphoning.

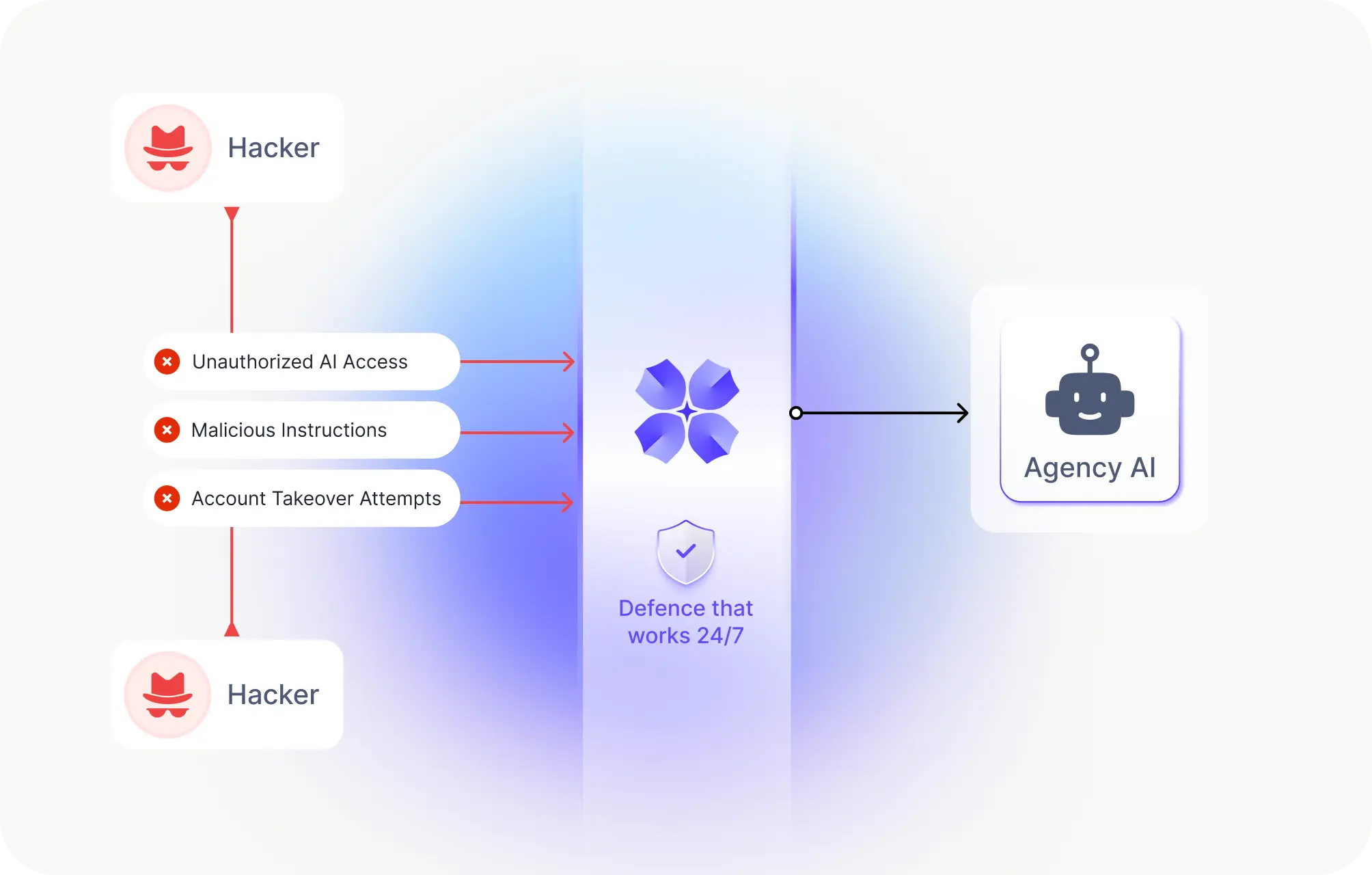

Securing Government AI from Modern Threats

Unsanctioned AI on Civil Workstations

Government employees frequently use personal AI accounts (like ChatGPT or Claude) to draft sensitive reports and summarize data. This moves protected agency information and citizen PII into unmonitored third-party environments.

Impact: Unauthorized data exfiltration, privacy law violations.

High-Scrutiny Data & Intelligence Research

Sensitive data sets used for policy analysis or public health research often bypass federal safety checks when passed through unsecured AI agents, exposing classified intelligence or PII to the model's context window.

Impact: IP theft, compromised national sovereignty.

Lack of Control in Municipal Functions

Local administrative teams are adopting AI for zoning, permitting, and housing queries without a central audit layer. Without guardrails, public services are left open to dangerous "misinformation cascades."

Impact: Inaccurate public guidance, reputational and political damage.

Siloed AI Across Government Departments

High-stakes agencies from Defense to Revenue are building separate "pocket-deployments" of AI. This creates a fragmented security posture where it is impossible for the CISO to maintain a unified, secure baseline.

Impact: Inconsistent safety policies, hidden infrastructure breaches.

The Compliance Gap During Federal Audits

Under mandates like OMB M-24-10, agencies must be able to demonstrate human oversight and risk management of their AI use. If your AI usage is untracked and not auditable at the prompt level, satisfying federal or state auditors becomes nearly impossible.

Impact: Audit failures, legal penalties, loss of federal funding.

The Infrastructure for Secure Public AI

Government agencies need more than a simple filter. LangProtect provides a full security layer that watches every prompt, validates every action, and ensures every department follows federal safety rules in real time.

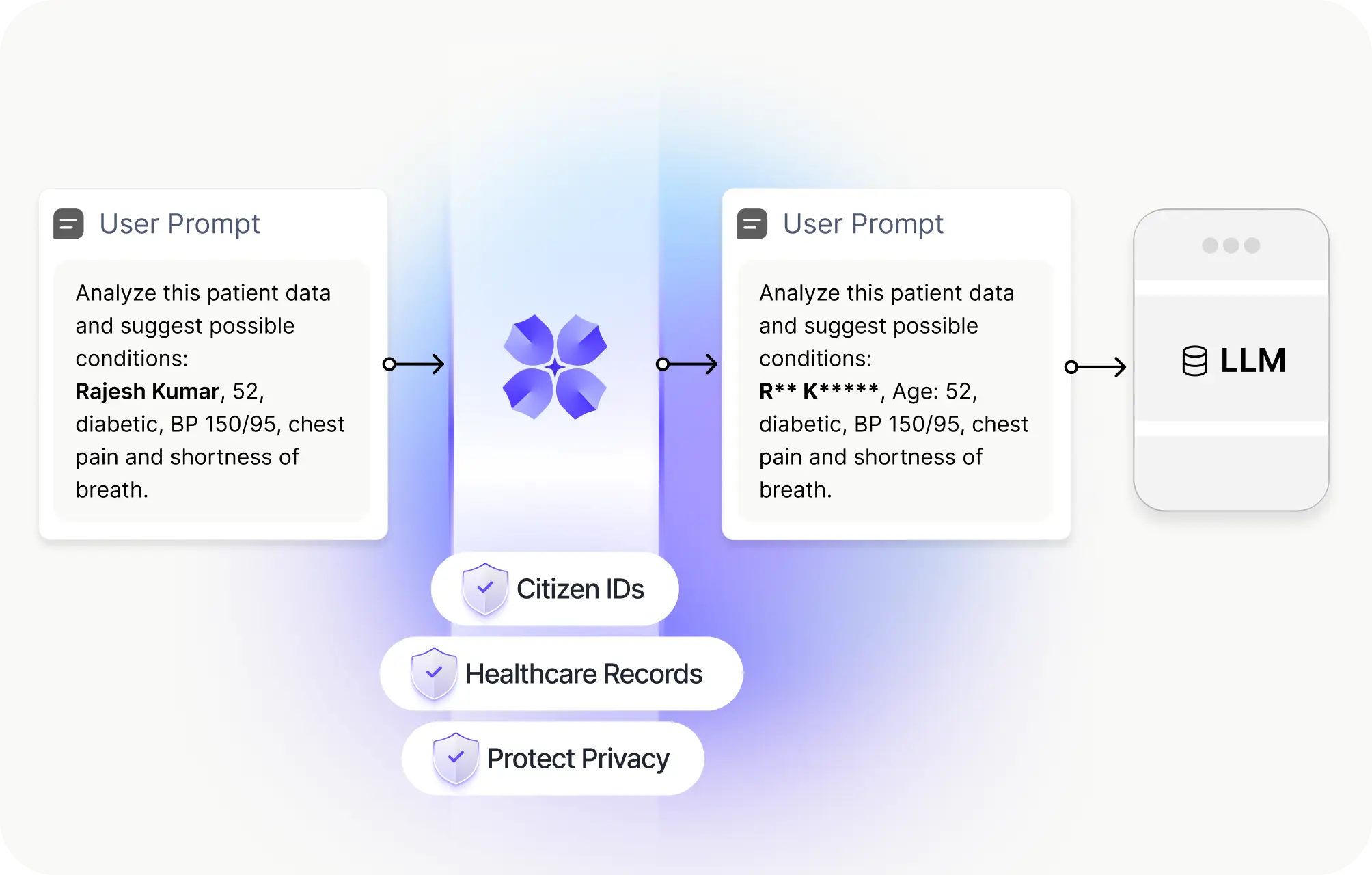

Citizen Data Protection & PII Redaction

We automatically identify and mask sensitive information, such as Social Security numbers, home addresses, and citizen health records, before it is sent to any AI model.

- Auto-scrubs citizen IDs in milliseconds.

- Prevents sensitive data from leaking into public AI logs.

- Protects the privacy of every individual your agency serves.
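As a rough illustration of pre-send redaction (not LangProtect's actual implementation), the Python sketch below replaces a few structured identifiers with typed placeholders before a prompt leaves the agency. The `redact_pii` function and its patterns are hypothetical and only cover SSN-, email-, and phone-shaped strings; unstructured items such as home addresses or health details generally need entity recognition on top of simple patterns like these.

```python
import re

# Hypothetical patterns for a few structured identifiers only.
# Free-text PII (addresses, medical details) would need an NER model as well.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact_pii(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each matched identifier with a typed placeholder like [SSN].

    Returns the redacted prompt and a count of replacements per label,
    which can be recorded for auditing without storing the raw values.
    """
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        if n:
            counts[label] = n
    return prompt, counts


if __name__ == "__main__":
    text, found = redact_pii("SSN 123-45-6789, call 555-123-4567")
    print(text, found)
```

For example, `redact_pii("SSN 123-45-6789, call 555-123-4567")` returns `("SSN [SSN], call [PHONE]", {"SSN": 1, "PHONE": 1})`. Typed placeholders keep the prompt readable for the model while the raw values never leave the agency boundary.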

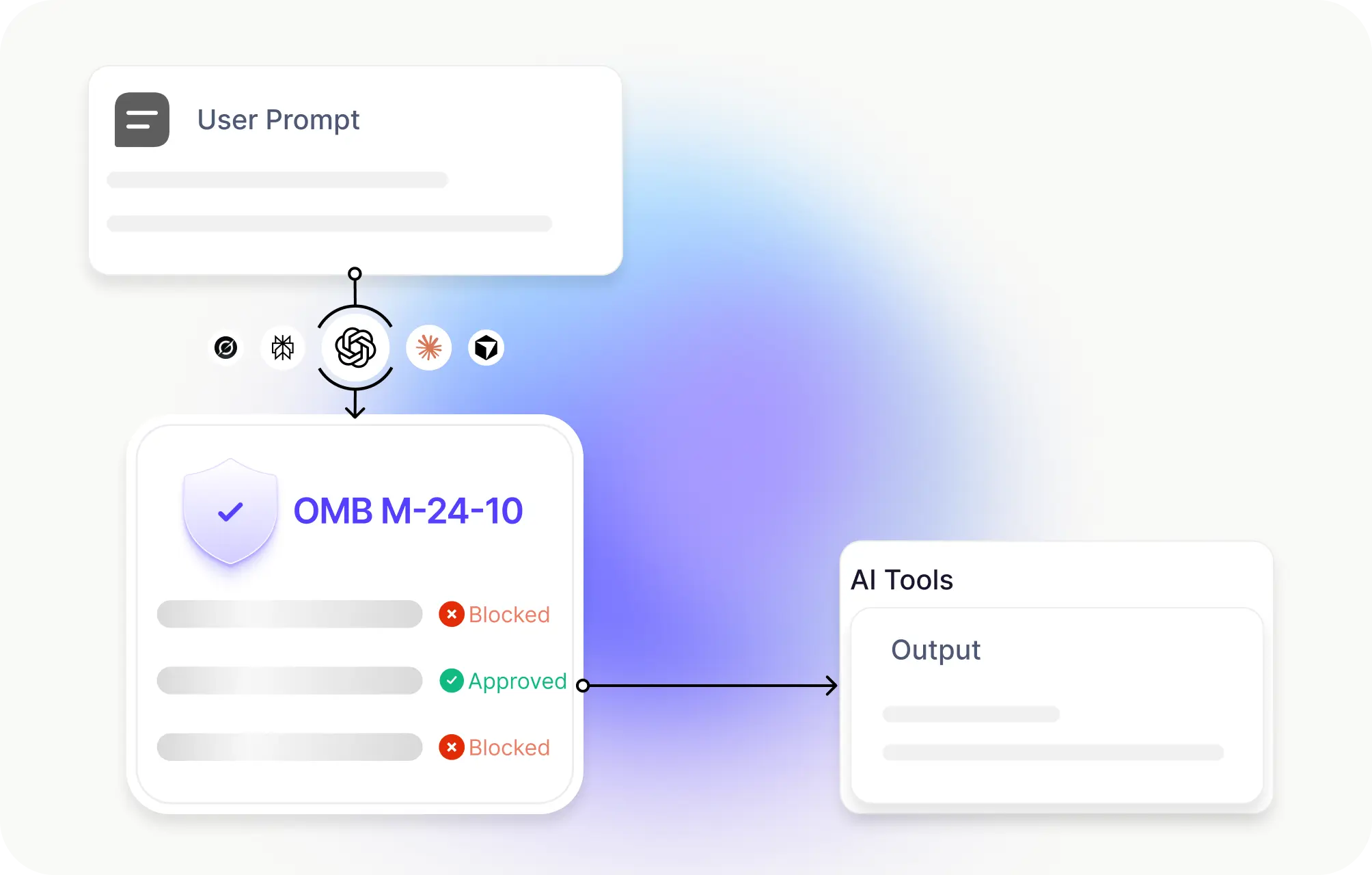

Federal Safety & Policy Enforcement

Our system applies the latest government safety rules (such as OMB M-24-10) automatically. We ensure your AI assistants never share prohibited information or follow manipulated instructions that override agency rules.

- Stops adversarial "tricks" or jailbreak attempts instantly.

- Enforces ethical AI usage across all departments.

- Blocks risky prompts before they cause a policy breach.

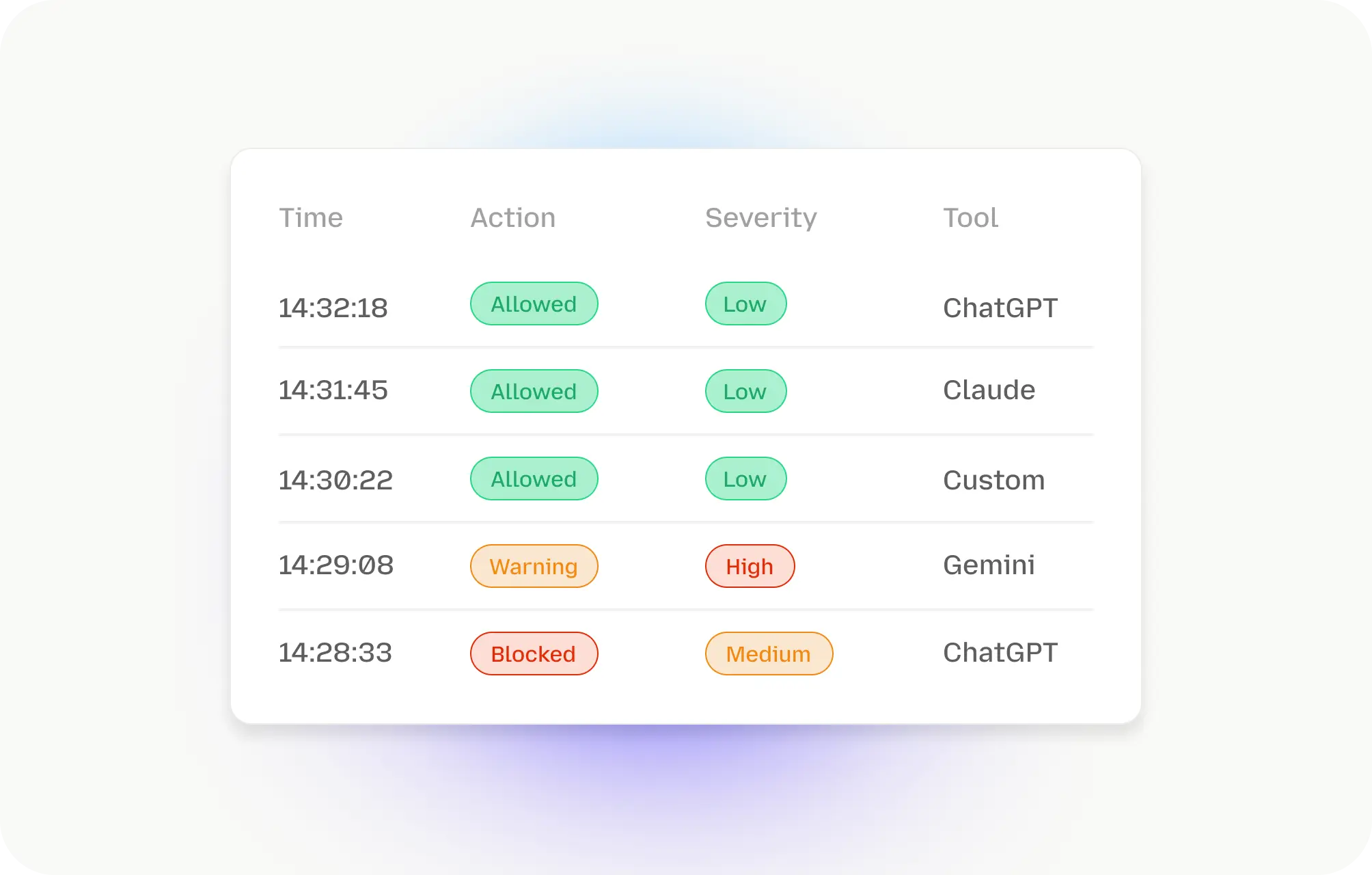

Defensible Logs for Agency Audits

Most AI interactions are a "black box" that auditors hate. LangProtect turns every AI conversation into a human-readable audit trail, making it simple to prove you are managing AI risk properly.

- Provides clear evidence for SOC 2, HIPAA, or federal reviews.

- Tracks exactly what was said and how it was protected.

- Simplifies high-stakes investigations with structured forensics.
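One way to make such a trail defensible, sketched here under our own assumptions rather than as LangProtect's actual log format, is to write each AI interaction as a structured record and chain the records together with SHA-256 hashes, so that any later edit or reordering is detectable. The `AuditTrail` class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


class AuditTrail:
    """Append-only, hash-chained log of AI interactions (illustrative only)."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, user_role: str, redacted_prompt: str,
               decision: str, redactions: dict[str, int]) -> dict:
        prev_hash = self.records[-1]["hash"] if self.records else GENESIS_HASH
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_role": user_role,
            "prompt": redacted_prompt,  # store only the already-redacted text
            "decision": decision,       # e.g. "allowed" or "blocked"
            "redactions": redactions,   # what was masked, by type
            "prev_hash": prev_hash,
        }
        # Hash the canonical JSON form so verification is deterministic.
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered record breaks the chain."""
        prev_hash = GENESIS_HASH
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True
```

Storing only the redacted prompt keeps the audit log itself from becoming a new repository of citizen PII, while the redaction counts still show auditors what was protected.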

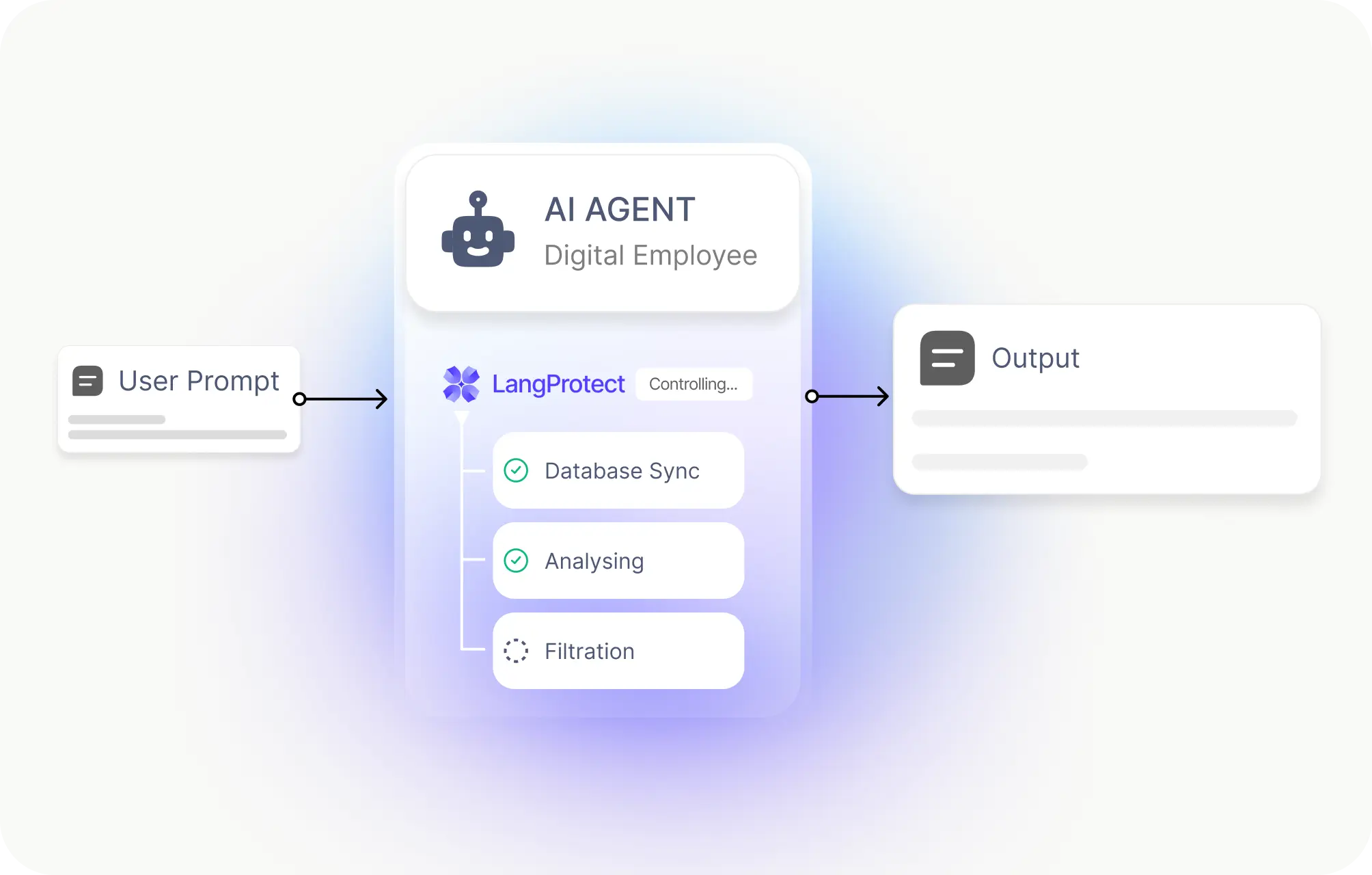

Controlling "Digital Employees" (AI Agents)

As you build autonomous AI agents, you need to make sure they aren't too powerful. We manage the "actions" these agents can take, ensuring they only touch the data they are authorized to see.

- Enforces "least privilege" for autonomous systems.

- Ensures agents cannot delete or change sensitive databases.

- Links AI work to specific authorized human roles for accountability.
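A minimal deny-by-default check illustrates the least-privilege idea: every agent acts on behalf of a named human, and it may perform only the actions that person's role explicitly allows. The role names, action strings, `AgentContext`, and `authorize` helper below are hypothetical examples, not LangProtect's API.

```python
from dataclasses import dataclass

# Hypothetical policy: each human role maps to the only tool actions an
# agent acting on its behalf may perform. Anything not listed is denied.
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "permit_clerk": frozenset({"permits.read", "permits.search"}),
    "permit_supervisor": frozenset({"permits.read", "permits.search", "permits.update"}),
}


@dataclass(frozen=True)
class AgentContext:
    agent_id: str
    owner: str       # the accountable human on whose behalf the agent acts
    owner_role: str


class ActionDenied(Exception):
    """Raised when an agent attempts an action outside its owner's role."""


def authorize(ctx: AgentContext, action: str) -> None:
    """Deny by default: raise unless the owner's role explicitly allows the action."""
    allowed = ROLE_PERMISSIONS.get(ctx.owner_role, frozenset())
    if action not in allowed:
        raise ActionDenied(
            f"{ctx.agent_id} (acting for {ctx.owner}) may not perform {action}"
        )
```

Because unknown roles fall back to an empty permission set, a misconfigured or newly created agent can do nothing until someone grants it access, and the `owner` field ties every attempted action back to a specific person.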

Guarding Your Agency's AI Budget

Hackers often try to "jack" an agency's AI account to run up huge bills or crash the system with fake traffic. We protect your API keys and keep your AI resources available for real public service.

- Detects and blocks "AI-jacking" from stolen credentials.

- Stops expensive fake traffic from flooding your systems.

- Secures your AI investment and ensures 24/7 mission uptime.
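Capping traffic per API key is one common building block for this kind of protection. The hypothetical token-bucket sketch below (not LangProtect's implementation) gives each key its own budget that refills at a fixed rate, so a single stolen key cannot flood the system. Detecting that a credential was stolen in the first place would require additional signals not shown here.

```python
import time
from typing import Callable


class TokenBucket:
    """Refills `rate` tokens per second, holding at most `capacity` tokens."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock          # injectable so the behavior can be tested
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Add tokens for the time elapsed since the last check, up to capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class KeyRateLimiter:
    """Keeps a separate bucket per API key so one key cannot drain the shared budget."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, api_key: str, cost: float = 1.0) -> bool:
        bucket = self.buckets.get(api_key)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[api_key] = bucket
        return bucket.allow(cost)
```

Setting `cost` to an estimate of the model tokens a request will consume, rather than a flat 1, would let the same mechanism cap spend as well as request volume.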

AI Governance Built for Public Trust and Compliance

Protect citizen data, safeguard sensitive agency information, and maintain defensible oversight across GenAI usage. See how LangProtect helps government agencies and public sector teams govern AI in line with federal mandates and leading security frameworks.

OMB M-24-10 Compliance

OMB M-24-10 sets the baseline for how federal agencies govern and manage risk from AI. LangProtect helps your agency meet these requirements by ensuring your AI respects civil rights, uses high-quality data, and always keeps a human in the loop for high-stakes decisions.

GDPR & Privacy Standards

Government agencies handle massive amounts of personal data. LangProtect enforces data residency and privacy controls, ensuring that citizen information is never shared with unauthorized models and remains protected as the law requires.

NIST AI Risk Management Framework

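The National Institute of Standards and Technology's AI Risk Management Framework (AI RMF) provides a voluntary roadmap for trustworthy AI, organized around four functions: Govern, Map, Measure, and Manage. LangProtect uses this framework to map, measure, and manage AI risks, turning complex government guidelines into a clear, manageable security plan.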

SOC 2 Compliance

Trust is the currency of the public sector. LangProtect helps agencies reach and maintain SOC 2 standards, providing third-party proof that your AI systems are private, secure, and available for the public when they need them.

ISO 27001 Compliance

For internationally recognized security management, LangProtect aligns with ISO/IEC 27001. We provide a systematic way to manage your agency's information security, protecting your digital assets from advanced threats around the clock.