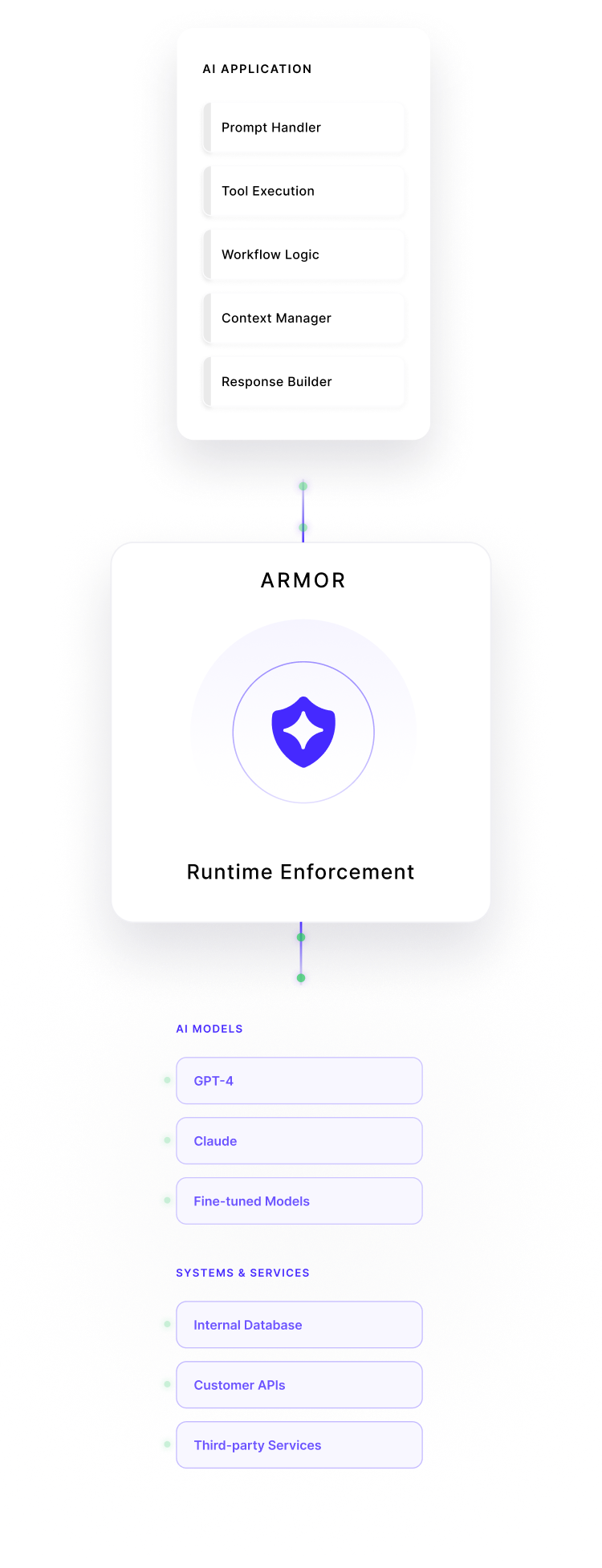

ArmorRuntime Enforcement for Homegrown AI Applications

Armor is an inline security layer for AI applications your organization builds and operates. It enforces policy decisions on prompts, model outputs, and execution paths at runtime, before AI systems access internal data, tools, or downstream services.

Why AI Apps Need Runtime Guardrails

AI applications introduce a new execution layer where traditional controls have limited visibility. Guardrails must operate at runtime, when prompts, context, and outputs interact with real data and real systems.

Risk in Open-Ended Inputs

AI applications accept free-form text and dynamically generated context. Unlike traditional software, inputs cannot be fully validated ahead of time, increasing the risk of unexpected behavior at runtime.

Data Can Propagate

Once internal data is introduced into prompts or context, model outputs can unintentionally propagate that data into logs, responses, or downstream systems.

Model Behavior Can Be Manipulated

Application inputs can be crafted to influence how a model reasons or responds, leading to outputs that deviate from intended product or security constraints.

Security Controls Lack Coverage

Traditional security tools are not designed to inspect prompts, responses, or AI-driven decisions, leaving a critical execution layer unprotected.

Outputs Can Trigger Downstream Actions

AI responses are increasingly passed directly to tools, APIs, and services. Without control, unsafe outputs can lead to unintended system behavior.

Failures Surface in Production

Most AI issues do not appear during development or testing. They emerge under real inputs, real data, and real workloads in production environments.

How Armor Works

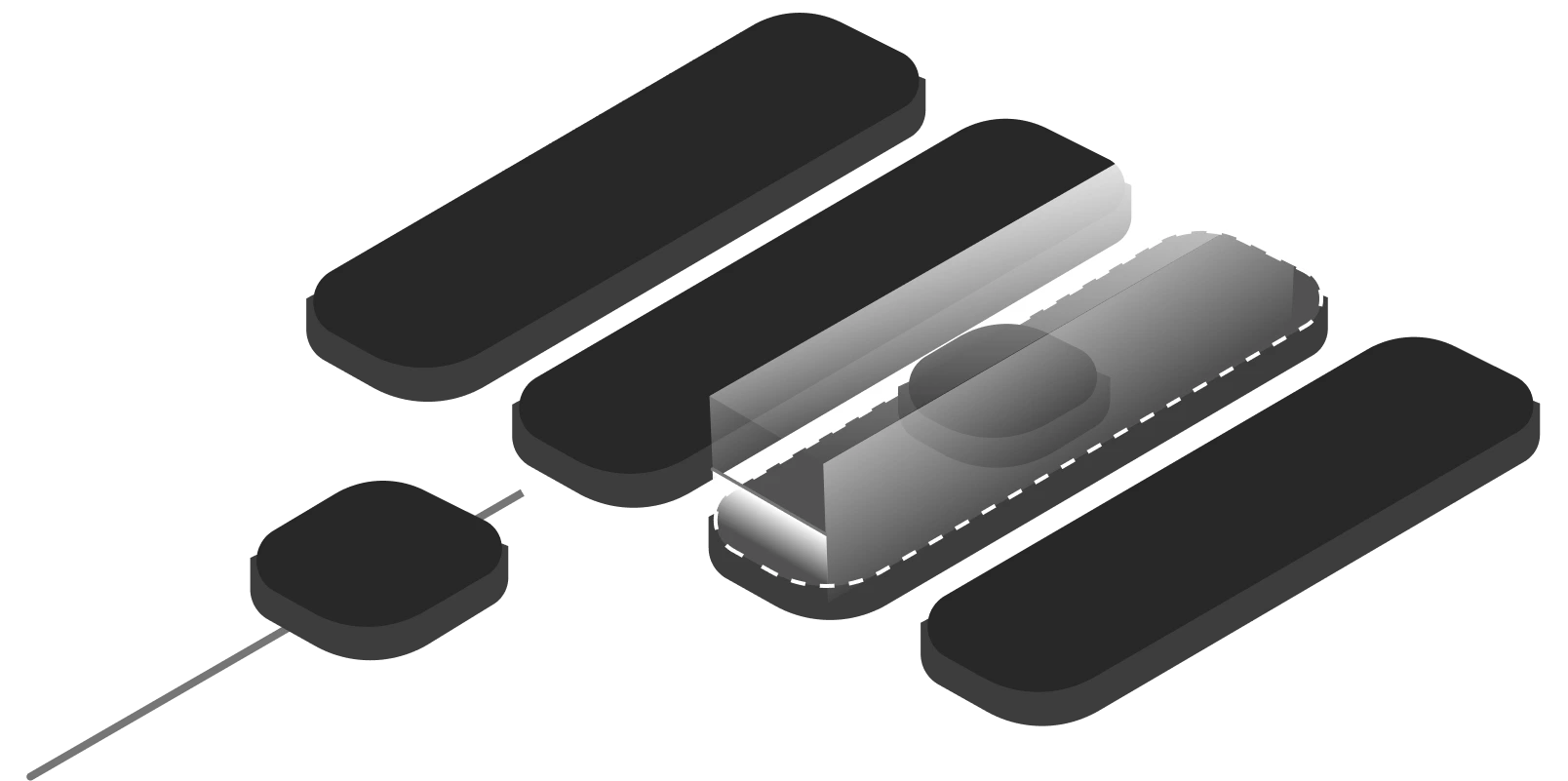

Armor enforces security decisions at execution time, when AI applications interact with real inputs, real data, and real systems.

Intercept Runtime Interaction

Armor operates inline with the AI application’s request–response flow, observing interactions as they occur during execution rather than after the fact.

Evaluate Prompt, Context, And Output

Each interaction is evaluated in context, considering the prompt, associated application state, and generated output against defined policies.

Apply Policy Decision

Based on policy evaluation, Armor enforces a deterministic decision allow, restrict, or block before the interaction reaches internal data, tools, or downstream services.

Record Decision For Review

All enforcement decisions are recorded with relevant context, enabling review, investigation, and audit without interrupting application workflows.

What Armor Protects Against

Armor enforces controls at runtime to prevent AI applications from performing unsafe or unintended actions as they interact with data and systems.

Sensitive Data Exposure

Prevents AI-generated outputs from returning or propagating confidential, regulated, or proprietary information beyond approved boundaries.

Unauthorized Data Access

Blocks AI-driven access to internal databases, services, or resources that fall outside defined access policies.

Prompt Injection via App Inputs

Detects and constrains manipulated or malformed inputs designed to alter application behavior or bypass intended controls.

Unsafe or Non-Compliant Outputs

Restricts responses that violate internal rules, compliance requirements, or product-defined constraints.

Tool / API Misuse

Controls how AI applications invoke internal tools, functions, and external APIs to prevent unintended or unsafe actions.

Unexpected Execution Paths

Identifies and constrains abnormal runtime behavior that deviates from expected application logic or execution flow.

Built for Enterprise Security Teams

Armor is designed to fit into enterprise security environments, giving security teams control and accountability over how AI applications behave in production.

Centralized Policy Control

Define and manage AI security and data-access policies in one place, applied consistently across protected AI applications.

Role-Aware Access

Limit who can view, manage, or modify AI enforcement policies and logs based on organizational roles.

Audit-Ready Decision Records

Maintain structured records of enforcement decisions, including what was allowed, restricted, or blocked during execution.

Integration-Friendly Architecture

Designed to integrate with existing security workflows and tooling without requiring changes to AI models or application logic.

Clear Ownership Boundaries

Separate product responsibility from security enforcement, allowing teams to move fast without bypassing controls.

Enterprise-Grade Operation

Built to support production environments where stability, predictability, and accountability matter.

Use Cases

Armor supports real-world AI usage across teams by preventing common risks before they turn into incidents.

AI Applications Accessing Internal Databases

When AI applications retrieve or reason over internal datasets, Armor enforces runtime policies to prevent unauthorized access and unintended data exposure during execution.

Agent or Tool-Based Workflows

For AI agents that invoke internal tools, services, or APIs, Armor constrains execution paths to ensure actions remain within approved operational boundaries.

AI Responses Passed to Downstream Systems

When generated outputs are consumed by other services or automation layers, Armor evaluates responses before they propagate, reducing the risk of unsafe or invalid downstream actions.

Compliance-Sensitive AI Features

For AI functionality operating under regulatory or internal policy requirements, Armor enforces consistent controls during execution to support compliance and governance needs.

Production AI Incident Prevention

By enforcing policies at runtime, Armor helps prevent unsafe interactions from escalating into production incidents that impact systems, data, or users.

Production AI Incident Prevention

By enforcing policies at runtime, Armor helps prevent unsafe interactions from escalating into production incidents that impact systems, data, or users.

Built-In Runtime Scanners for AI Execution

Armor applies a set of built-in scanners at runtime to evaluate AI inputs and outputs, with the flexibility to tailor enforcement to application-specific and organizational requirements.

Frequently Asked Questions

See How Runtime Enforcement Works in Production

Understand how Armor applies layered controls across prompts and outputs to reduce AI execution risk.

100,000+

Prompts Detected

7000+

AI Tools monitored

20+

Policies Applied

99%

Sensitive Data Coverage

<5ms

Risk Detection