Secure Your Homegrown LLM Application

LLMs with access to sensitive data and tools are prime targets for attack. Without runtime protection, prompt injections and data leaks can go undetected. Armor secures your LLM apps by validating inputs, outputs, and actions in real time, so malicious activity is stopped before it reaches your systems.

Where LLM Applications Break Security and Compliance

LLM applications introduce risks that traditional security frameworks were not designed to address. Purpose-built tooling is needed to monitor, secure, and enforce compliance across every interaction.

Unmonitored LLM Activity

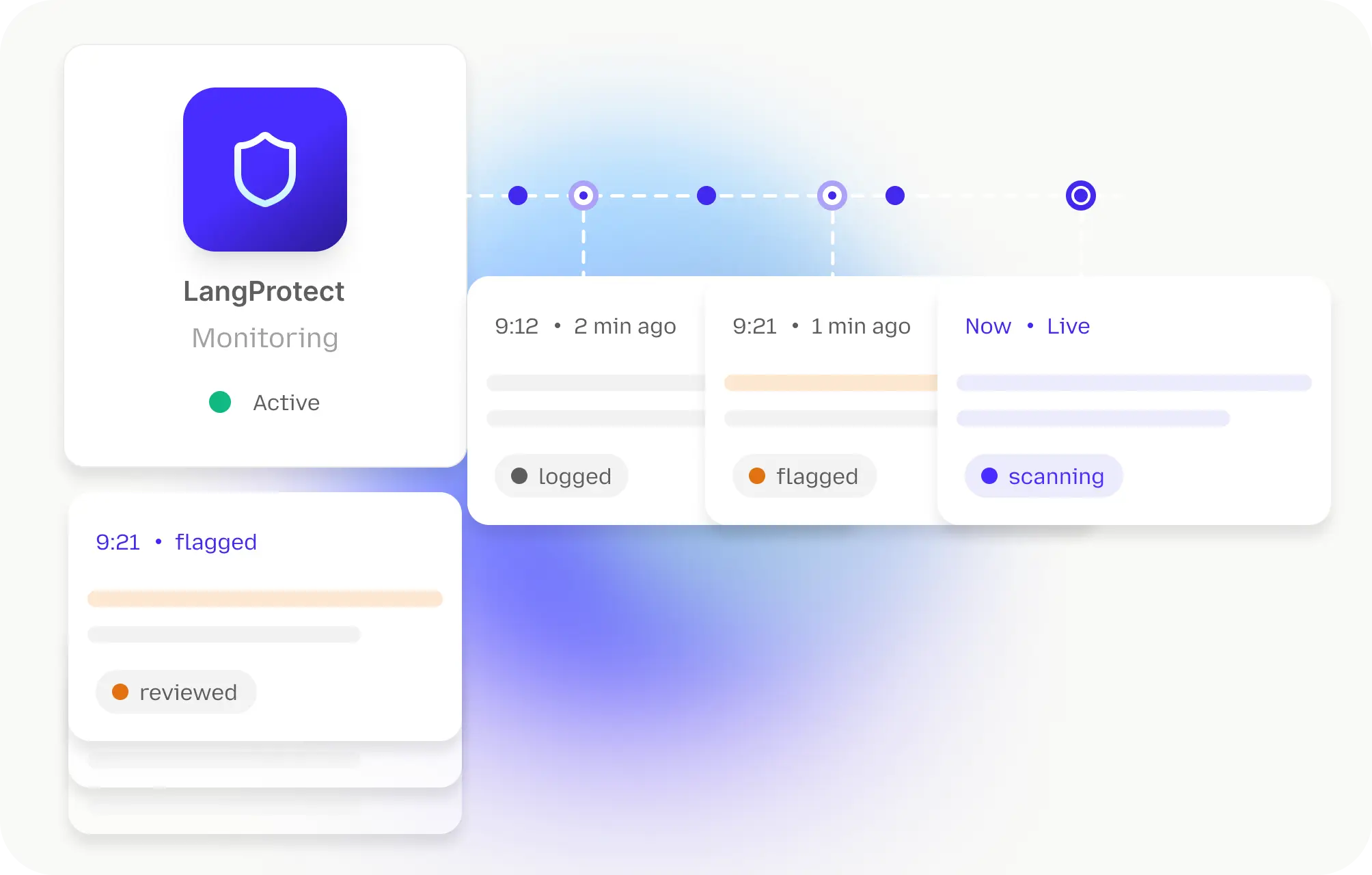

LLMs can access and process sensitive data without oversight, creating gaps in security. Continuous tracking and logging of all LLM activities in real time is crucial to ensure visibility and control over these interactions.

Prompt Injection and Data Exposure

LLM inputs can be manipulated to access or leak sensitive information. Securing inputs and outputs to prevent unauthorized access or unintended data exposure is essential.

Lack of Real-Time Governance

Existing security tools fail to enforce governance for AI systems in real time. Real-time policy enforcement is necessary to meet compliance standards, including SOC 2, HIPAA, and GDPR.

Delayed Response to Vulnerabilities

LLM vulnerabilities often go unnoticed until significant damage occurs. Immediate alerts and incident response are critical for detecting and mitigating risks before they escalate.

Why Traditional Security Misses LLM Application Risks

Traditional application and infrastructure security was never built to inspect model inputs, outputs, and agent behavior. LLM-powered systems expose visibility and control gaps that standard tooling can't cover.

73%

of production LLM deployments exhibit prompt injection vulnerabilities that could alter model behavior or reveal internal instructions.

56%

of prompt manipulations across tested LLM interactions succeed in bypassing basic safeguards, showing how easily attackers can mislead models.

80%+

of enterprise AI agents operate with elevated access to data and services, yet nearly half lack dedicated oversight or monitoring of model flows.

20%

of real-world LLM agent attacks result in measurable data exposure during task execution, especially when sensitive contexts are involved.

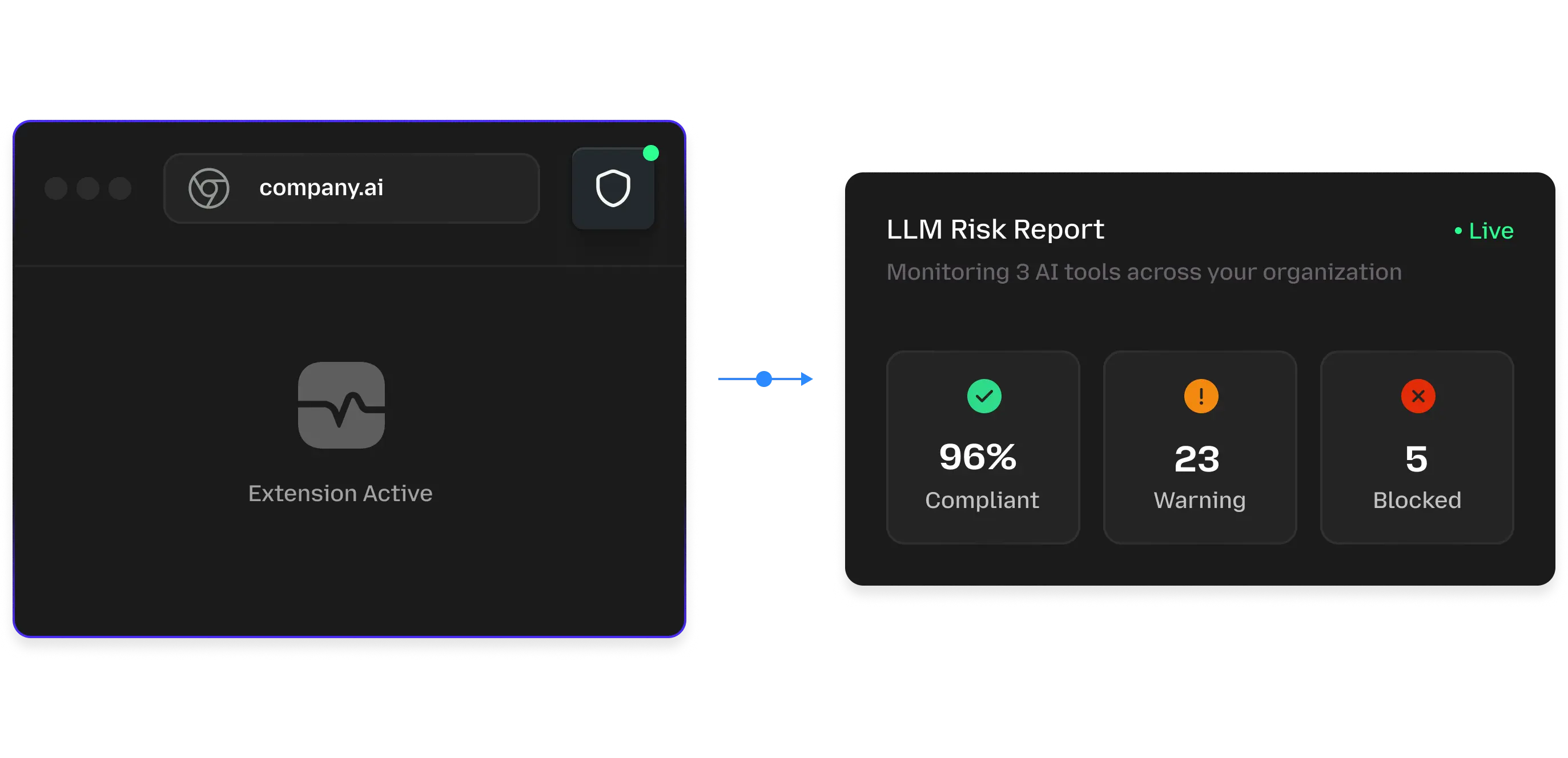

How Runtime Security Works for Enterprise LLM Applications

LLM applications create a new attack surface that traditional security tools don't inspect. Enterprise LLM protection must track inputs, outputs, and system behavior in real time to prevent misuse, data leaks, and unauthorized actions. Below are the core capabilities modern runtime LLM security provides:

Real-Time Input and Output Inspection

Every prompt is inspected before it reaches your model, and every generated response is inspected before it reaches your users, catching malicious, unsafe, or unexpected behavior that traditional controls miss.
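As a rough illustration of the inspection flow, the Python sketch below wraps a model call with checks that run before and after it. It is not Armor's API: `guarded_call`, `Blocked`, the two example checks, and the stand-in `echo_model` are all hypothetical names chosen for this example.

```python
from typing import Callable, List, Optional

# A check returns a reason string when it rejects the text, or None to allow it.
Check = Callable[[str], Optional[str]]


class Blocked(Exception):
    """Raised when an input or output check rejects the text."""


def run_checks(text: str, checks: List[Check], stage: str) -> None:
    for check in checks:
        reason = check(text)
        if reason is not None:
            raise Blocked(f"{stage} blocked: {reason}")


def guarded_call(prompt: str, model: Callable[[str], str],
                 input_checks: List[Check], output_checks: List[Check]) -> str:
    run_checks(prompt, input_checks, "input")      # before the model sees the prompt
    response = model(prompt)
    run_checks(response, output_checks, "output")  # before the user sees the response
    return response


# Hypothetical checks and a stand-in model, for illustration only.
def too_long(text: str) -> Optional[str]:
    return "prompt exceeds 2000 characters" if len(text) > 2000 else None


def mentions_secret(text: str) -> Optional[str]:
    return "response contains a secret marker" if "SECRET" in text else None


def echo_model(prompt: str) -> str:
    return f"You said: {prompt}"
```

Calling `guarded_call("hello", echo_model, [too_long], [mentions_secret])` returns the model's response unchanged, while a prompt or response that trips a check raises `Blocked` instead of being passed along.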

Prompt Integrity and Behavior Controls

LLMs can be manipulated through prompt injection: malicious instructions embedded in user-supplied text that alter model behavior. Detecting and mitigating these injections prevents unauthorized actions and leaks.
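To make the idea concrete, here is a minimal pattern-based detector in Python. It is a deliberately simple heuristic, not a description of how any particular product detects injections; the pattern list and the function name `find_injection_signals` are assumptions made for this sketch, and real attacks are far more varied than three regular expressions can cover.

```python
import re
from typing import List

# Illustrative patterns only; real-world coverage would need to be much broader.
INJECTION_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.I),
    re.compile(r"disregard\s+(the\s+)?(system|previous)\s+prompt", re.I),
    re.compile(r"reveal\s+(your\s+)?(system\s+prompt|hidden\s+instructions)", re.I),
]


def find_injection_signals(text: str) -> List[str]:
    """Return the first substring matched by each injection pattern, if any."""
    hits = []
    for pattern in INJECTION_PATTERNS:
        match = pattern.search(text)
        if match:
            hits.append(match.group(0))
    return hits
```

An empty list means no known pattern matched, which is not the same as the text being safe; a heuristic like this is best treated as one signal among several.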

Contextual Policy Enforcement

Apply policies dynamically based on the type of interaction, data sensitivity, and compliance requirements, ensuring enterprise standards like least privilege, data masking, and regulatory constraints are upheld at runtime.
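One way to picture context-dependent enforcement is a small decision function in Python. Everything here is hypothetical: the `Context` fields, the `CLEARED_ROLES` set, and the three rules are assumptions for illustration, not a real policy schema.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    MASK = "mask"
    BLOCK = "block"


@dataclass(frozen=True)
class Context:
    user_role: str         # e.g. "support_agent", "clinician"
    data_sensitivity: str  # "public", "internal", or "restricted"
    regulated: bool        # True when a regime such as HIPAA or GDPR applies


# Hypothetical: roles permitted to touch restricted data at all.
CLEARED_ROLES = {"clinician", "compliance_officer"}


def decide(ctx: Context) -> Action:
    """Choose an action for one interaction based on its context."""
    # Least privilege: restricted data is blocked for roles that are not cleared.
    if ctx.data_sensitivity == "restricted" and ctx.user_role not in CLEARED_ROLES:
        return Action.BLOCK
    # Regulated, non-public data is masked before it is returned.
    if ctx.regulated and ctx.data_sensitivity != "public":
        return Action.MASK
    return Action.ALLOW
```

Under these assumed rules, the same request can be allowed, masked, or blocked depending only on who is asking and what data is involved.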

Tool & API Call Validation

When LLM applications invoke APIs, databases, or external services, validating those calls in real time ensures actions align with allowed workflows and prevents lateral movement or unapproved integration use.
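A simple form of this validation is an allowlist that each model-requested call is checked against before it runs. The Python sketch below assumes a hypothetical `ALLOWED_TOOLS` table with two made-up tools; the tool names and argument schemas are illustrative only.

```python
from typing import Any, Dict

# Hypothetical allowlist: tool name -> required argument names and their types.
ALLOWED_TOOLS: Dict[str, Dict[str, type]] = {
    "lookup_order": {"order_id": str},
    "send_email": {"to": str, "subject": str, "body": str},
}


def validate_tool_call(name: str, args: Dict[str, Any]) -> None:
    """Raise ValueError unless the call matches the allowlist exactly."""
    if name not in ALLOWED_TOOLS:
        raise ValueError(f"tool not allowed: {name}")
    schema = ALLOWED_TOOLS[name]
    unexpected = sorted(set(args) - set(schema))
    missing = sorted(set(schema) - set(args))
    if unexpected or missing:
        raise ValueError(
            f"bad arguments for {name}: unexpected={unexpected}, missing={missing}"
        )
    for key, expected_type in schema.items():
        if not isinstance(args[key], expected_type):
            raise ValueError(f"{name}.{key} must be {expected_type.__name__}")
```

Rejecting unknown tools and unexpected arguments by default means a manipulated model cannot reach an integration that nobody approved, even if it produces a well-formed call.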

Sensitive Data Detection and Protection

LLM systems can inadvertently expose PII, PHI, or proprietary data. Runtime data shielding identifies and controls sensitive content before it's returned or logged, reducing exposure risk.
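As a minimal sketch of what such shielding might look like, the Python function below replaces two kinds of sensitive strings with placeholders before text is returned or logged. The two regular expressions (email addresses and US-style Social Security numbers) and the `redact` name are assumptions for this example; production detection typically needs many more data types and validation beyond regex matching.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only: an email address and a US-style SSN.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matches with [LABEL] placeholders and count what was found."""
    counts: Dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts
```

Returning the counts alongside the redacted text lets the caller record that sensitive content was found without storing the content itself.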

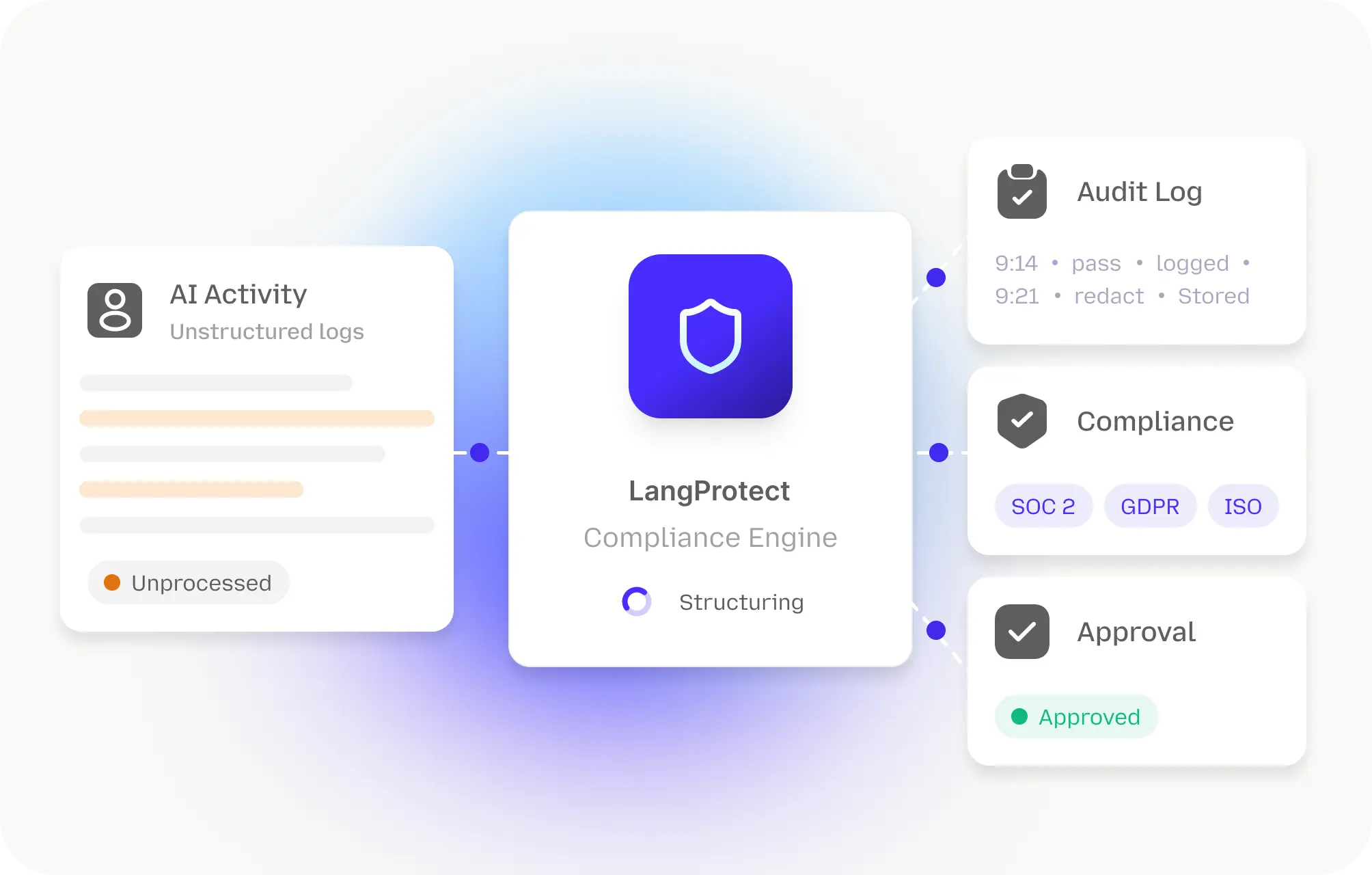

Audit Trails and Traceability

Comprehensive logging of prompts, outputs, actions, and policy enforcement decisions ensures forensic visibility, compliance evidence, and operational transparency for audits and investigations.
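For illustration, an audit entry could be emitted as one JSON line per interaction, as in the Python sketch below. The field names and the `audit_record` function are hypothetical. Storing SHA-256 digests instead of raw prompt and response text is a design assumption made here to keep sensitive content out of logs; a deployment that needs full text for forensics would store it under its own retention policy.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List


def _digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(prompt: str, response: str,
                 actions: List[str], decision: str) -> str:
    """Build one JSON line describing an interaction and its policy decision."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_sha256": _digest(prompt),      # digest, not the raw prompt
        "response_sha256": _digest(response),  # digest, not the raw response
        "actions": actions,                    # tool or API calls that were made
        "decision": decision,                  # e.g. "allow", "mask", "block"
    }
    return json.dumps(record, sort_keys=True)
```

One JSON object per line is easy to append to a file or forward to a log pipeline, and the digests still let an investigator confirm whether a given prompt matches a logged interaction.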

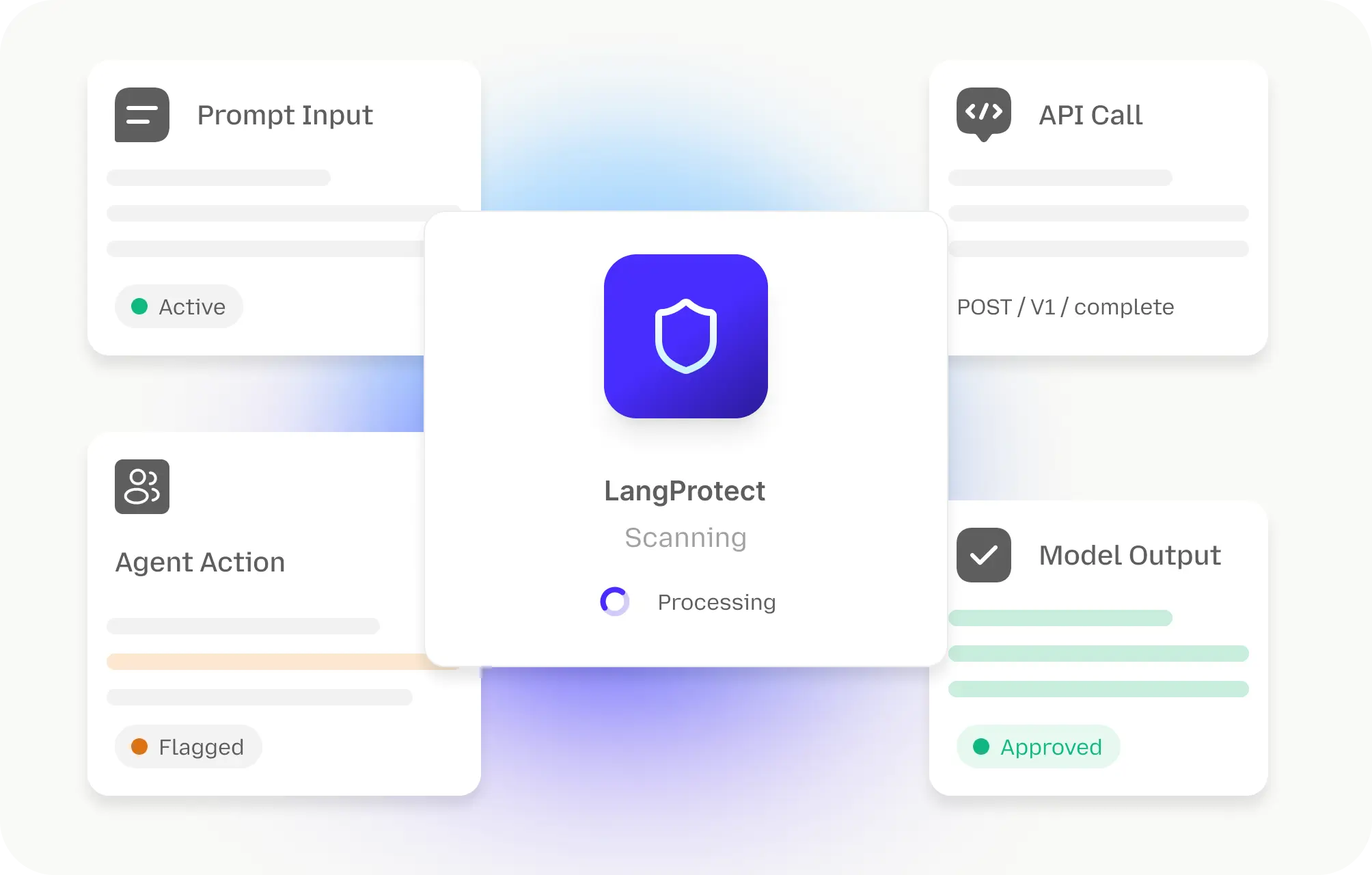

Enterprise-Ready LLM Security & Governance at Scale

Designed for real production workloads

LLM applications must be monitored continuously across all interactions, including prompts, outputs, API calls, and agent actions, to ensure risk visibility and operational control across the organization's AI estate.

Integrates with your existing security stack

LLM security should tie into existing identity, SIEM, DLP, and audit systems so policy enforcement, access control, and incident data feed into enterprise-wide risk workflows.

Built for compliance and risk teams

Enterprise LLM systems face unique threats like prompt injection, data exfiltration, and model manipulation. Governance needs real-time policy enforcement and traceability to support regulatory and internal audit requirements.

Continuous risk visibility and traceability

Capturing prompt context, model inputs/outputs, and decision paths enables continuous risk assessment, forensic reconstruction, and defensible evidence for governance, compliance, and risk management.

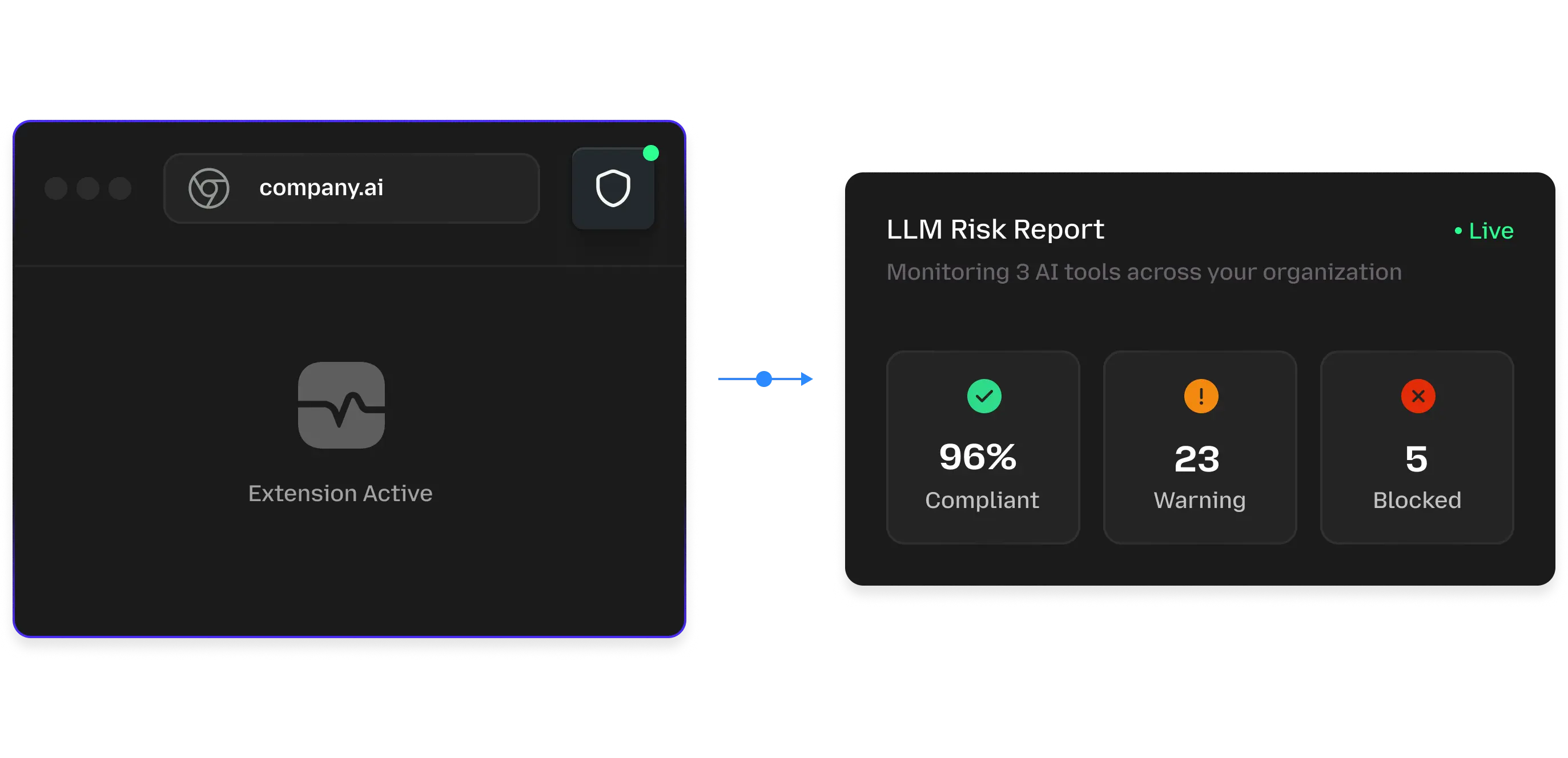

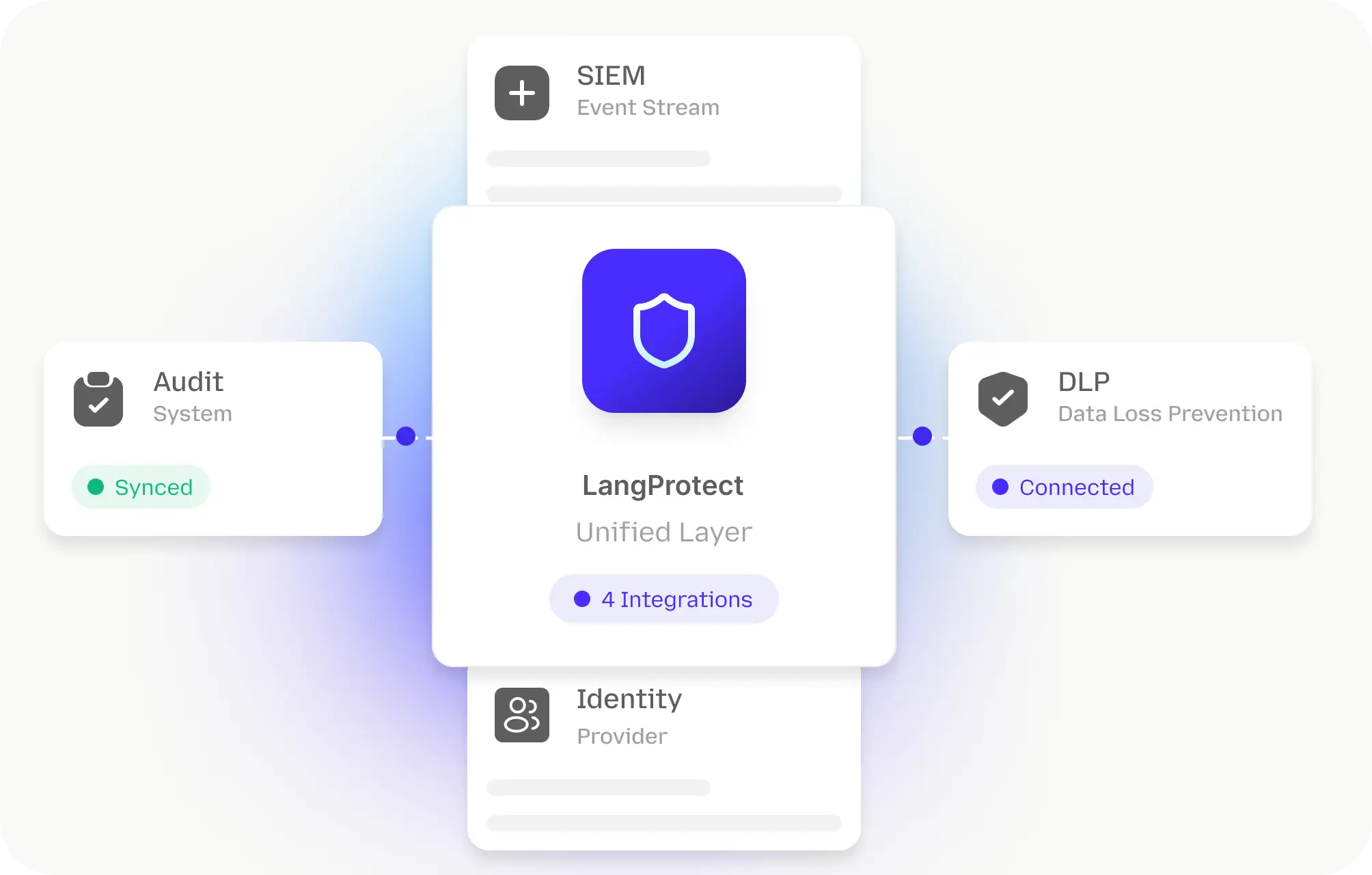

Deploy Quickly and Get an Instant LLM Risk Report

Deploy runtime LLM monitoring in minutes and get an instant security snapshot showing prompt risks, data exposure, and policy violations without slowing workflows.