GenAI Chatbot Governance & Control

GenAI chatbots are now used across customer support and internal workflows. Without governance, however, prompts can leak sensitive data, shadow bots go unnoticed, and audits lack defensible evidence. LangProtect gives security and AI platform teams real-time visibility and control over chatbot usage across both approved and unmanaged deployments.

Where GenAI Chatbots Break Governance Controls

GenAI chatbots don't usually trigger immediate security alerts. Instead, they create governance gaps that surface when teams need visibility, enforcement, and proof.

Invisible Chatbot Usage

Chatbots are accessed through browser sessions, personal accounts, or embedded tools that sit outside approved systems. These interactions bypass SSO, IAM, and logging.

Prompt-Level Data Disclosure Outside DLP

Sensitive information is shared through conversational prompts and uploads, not traditional file transfers. Most DLP tools aren't designed to inspect or control data inside AI conversations.

Policy Enforcement Without Evidence

Chatbot usage policies may be defined, but enforcement varies by tool and model. Without runtime controls, organizations can't verify that policies were applied consistently.

Discovery Happens Too Late

Unapproved chatbot usage is often uncovered during audits, investigations, or customer diligence. By then, conversations can't be fully reconstructed.

Why It's Hard to Catch GenAI Chatbots

Most enterprise security stacks were built to monitor files, endpoints, identities, and sanctioned SaaS tools. GenAI chatbots run through conversational prompts and unmanaged sessions, breaking those assumptions.

72%

of chatbot interactions occur outside federated identity controls, including personal accounts, shared sessions, or embedded tools.

68%

of sensitive information shared with GenAI chatbots moves through prompts and conversational input, not file transfers.

59%

of widely used GenAI chatbot services do not expose enforcement-grade APIs for CASB or inline inspection.

77%

of organizations report they cannot fully reconstruct chatbot usage during audits beyond basic access logs.

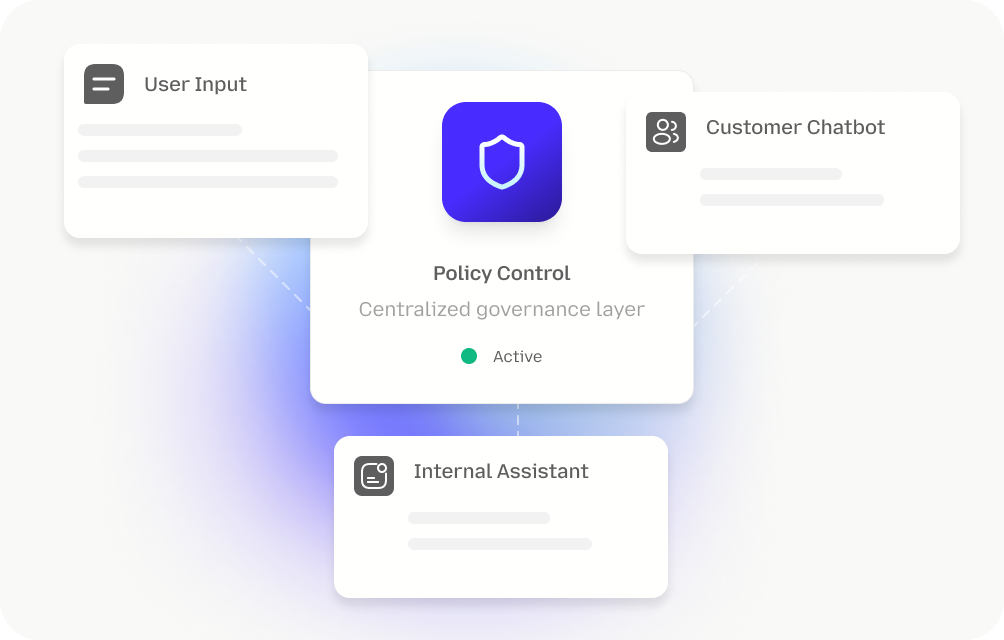

How LangProtect Secures GenAI Conversations

LangProtect secures GenAI conversations at runtime: it monitors prompts and uploads, enforces policies in context, and generates audit-ready evidence across approved and shadow chatbot usage.

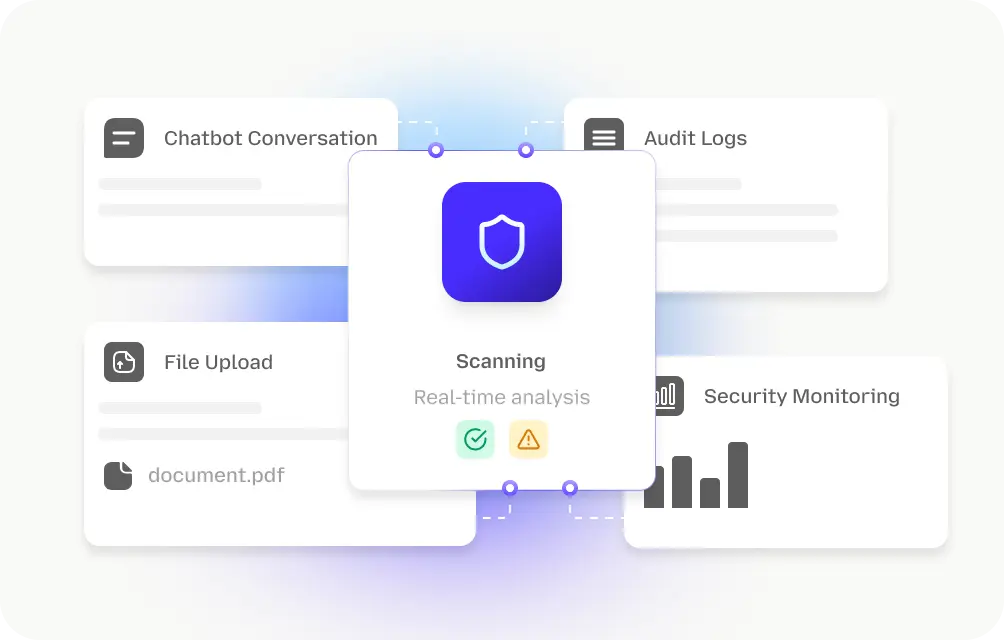

Visibility Into Chatbot Usage

Guardia provides continuous visibility into chatbot usage across internal copilots and customer-facing bots. It detects when chatbots are accessed through browsers, extensions, or unmanaged accounts, even when usage bypasses sanctioned platforms or identity systems.

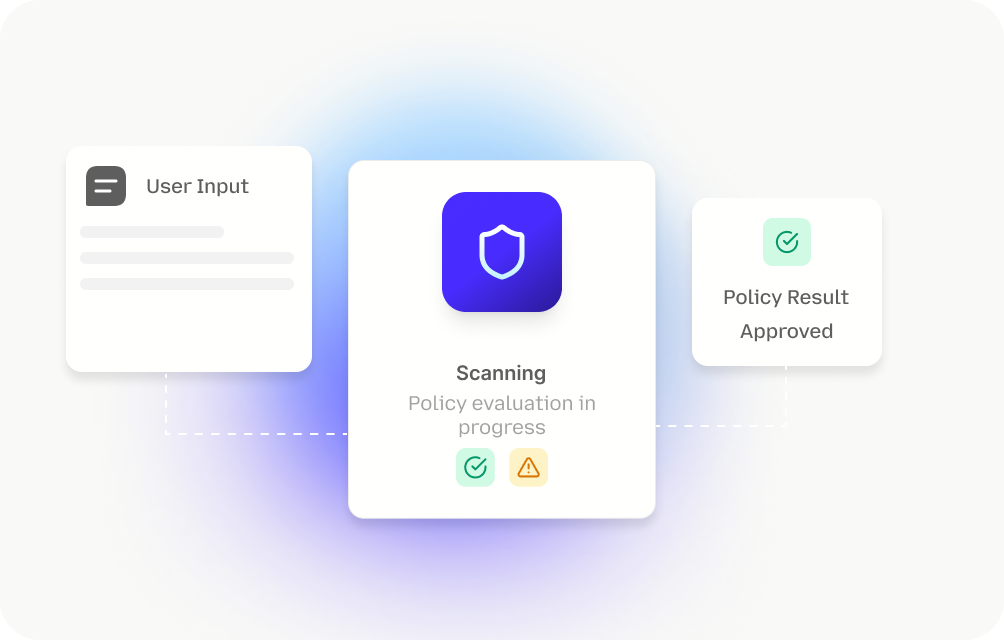

Prompt-Level Data Governance

Guardia inspects chatbot prompts and uploads in real time to identify sensitive data before it is shared. Because chatbot risk travels through prompts, not files, Guardia focuses on conversational inputs, where leakage actually occurs.
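
To make prompt-level inspection concrete, here is a minimal sketch of scanning a prompt for sensitive data before it is sent. It is an illustration only, not Guardia's implementation: the `PATTERNS` table and the `scan_prompt` function are hypothetical names, and the two regular expressions are deliberately simple stand-ins for the richer detection a production system would need.

```python
import re

# Hypothetical detectors for illustration; real deployments would use
# far richer classifiers than two regular expressions.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_prompt(prompt: str) -> list[str]:
    """Return the sorted names of sensitive-data categories found in a prompt."""
    return sorted(
        name for name, pattern in PATTERNS.items() if pattern.search(prompt)
    )


if __name__ == "__main__":
    findings = scan_prompt("My SSN is 123-45-6789, email me at jo@example.com")
    print(findings)
```

The point of the sketch is the placement of the check: it runs on the conversational input itself, before submission, rather than on a file transfer.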

Policy Enforcement During Chatbot Interactions

Guardia enforces AI usage policies at the moment chatbot interactions occur. Controls adapt based on who is using the chatbot, the type of chatbot involved, and the sensitivity of the data being shared.
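
A context-aware policy decision of this kind could look roughly like the sketch below. Everything in it is an assumption made for illustration: the `Interaction` fields, the role and sensitivity labels, and the three outcomes (`allow`, `redact`, `block`) are hypothetical and do not describe Guardia's actual policy model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    user_role: str     # hypothetical roles, e.g. "engineer" or "contractor"
    chatbot_type: str  # "approved" or "unmanaged"
    sensitivity: str   # "none", "internal", or "restricted"


def decide(interaction: Interaction) -> str:
    """Return "allow", "redact", or "block" for a single chatbot interaction."""
    if interaction.sensitivity == "none":
        # Low-risk usage continues uninterrupted.
        return "allow"
    if interaction.sensitivity == "restricted":
        # Restricted data reaches approved chatbots only after redaction.
        return "redact" if interaction.chatbot_type == "approved" else "block"
    # Internal data: allowed for non-contractors on approved chatbots,
    # redacted in every other case.
    if interaction.chatbot_type == "approved" and interaction.user_role != "contractor":
        return "allow"
    return "redact"


if __name__ == "__main__":
    print(decide(Interaction("engineer", "unmanaged", "restricted")))
```

The same prompt can produce different outcomes depending on who sends it and where it is going, which is what distinguishes runtime enforcement from a static allow or deny list.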

Coverage Beyond Managed Accounts

Guardia maintains accountability even when chatbots are accessed via personal or unmanaged accounts. It correlates chatbot activity across sessions so usage remains attributable, governed, and reviewable.

Audit-Ready Evidence for Chatbot Usage

Guardia records chatbot interactions and policy outcomes to provide defensible audit evidence. Security and compliance teams can demonstrate how chatbots were used, what data was involved, and what controls were applied.
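
As a rough illustration of what one piece of audit evidence might contain, the sketch below builds a single record covering who used which chatbot, what data categories were detected, and what control was applied. The field names and the `build_audit_record` function are hypothetical, and storing a SHA-256 digest of the prompt instead of its raw text is a design choice of this sketch, not a documented Guardia behavior.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(user_id, chatbot, prompt, findings, decision, timestamp=None):
    """Serialize one chatbot interaction and its policy outcome as JSON."""
    ts = timestamp or datetime.now(timezone.utc)
    record = {
        "timestamp": ts.isoformat(),
        "user_id": user_id,
        "chatbot": chatbot,
        # Assumption: keep a digest so the record can be matched to a prompt
        # without duplicating the sensitive text in the audit log.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "findings": sorted(findings),
        "decision": decision,
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    print(build_audit_record("u-42", "unmanaged-bot", "My SSN is 123-45-6789",
                             ["us_ssn"], "block"))
```

A record in this shape answers the three audit questions named above: how the chatbot was used, what data was involved, and which control was applied.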

Governance Without Blocking Productivity

Guardia applies controls in proportion to risk. Low-risk chatbot usage continues uninterrupted, while higher-risk interactions are governed in real time, allowing teams to use chatbots safely without slowing work.

Enterprise-Ready AI Governance at Scale

Built for Organization-Wide Chatbot Deployment

Guardia is designed for enterprise rollout across browsers, teams, and regions. It supports staged adoption, role-based policy segmentation, and consistent governance for both internal and customer-facing chatbots, without disrupting daily workflows.

Works With Your Existing Security & Audit Stack

Guardia integrates into existing security and audit workflows, feeding chatbot governance events into tools teams already use. This enables faster investigation, centralized visibility, and audit reporting without creating a new operational silo.

Designed for Governance & Compliance Programs

Guardia supports AI governance programs that require clear controls and defensible evidence. It helps organizations demonstrate how chatbot usage is monitored, how policies are enforced, and how exceptions are handled in environments under constant compliance scrutiny.

Take Control of GenAI Chatbot Risk

LangProtect gives security and platform teams real-time visibility into chatbot usage, enforces prompt-level controls, and produces audit-ready evidence, so chatbots stay governed and defensible at scale.