AI Code Assistant & IDE Security

Secure Your Development Lifecycle: From IDE Extensions to VS Code Forks.

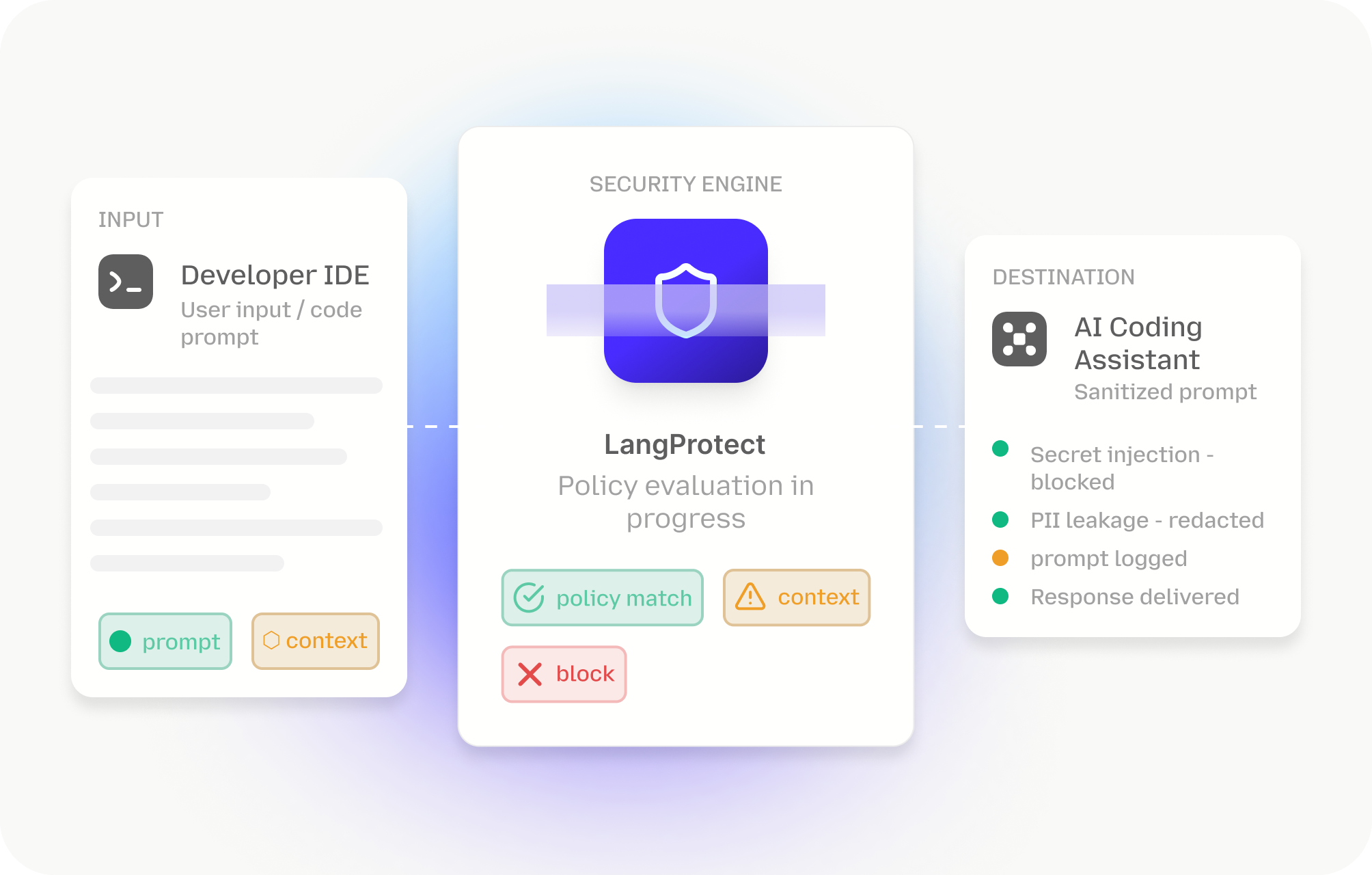

AI assistants accelerate coding but drastically expand the workstation attack surface. From VS Code forks to terminal agents, these tools have elevated permissions to parse secrets and execute shell commands via MCP. LangProtect provides a critical security layer, preventing data exfiltration and "Indirect Prompt Injections" without sacrificing engineering velocity.

Why Traditional Security is Blind to AI-Native Coding

AI-assisted coding moves faster than traditional security can scan. From IDE forks with "Quiet Privilege" to autonomous terminal agents, these tools create architectural blind spots that traditional SAST and DLP tools are mathematically incapable of catching.

The "Quiet Privilege" Vulnerability

Modern IDE assistants operate with elevated permissions, recursively reading directories and parsing runtime metadata via the Model Context Protocol (MCP). This allows unmonitored agents to ingest local .env files, plaintext secrets, and sensitive workspace metadata (like claude_desktop_config.json) into the AI's context window.

IP Disclosure Outside Traditional DLP

Code leakage isn't just about file transfers it happens through "contextual indexing." Assistants aggressively index your entire repository to enable semantic search. Standard Data Loss Prevention (DLP) tools cannot inspect these "Merkle-tree" indexes, leading to the accidental exfiltration of cloud tokens and proprietary logic.

PR Velocity Without Governance Proof

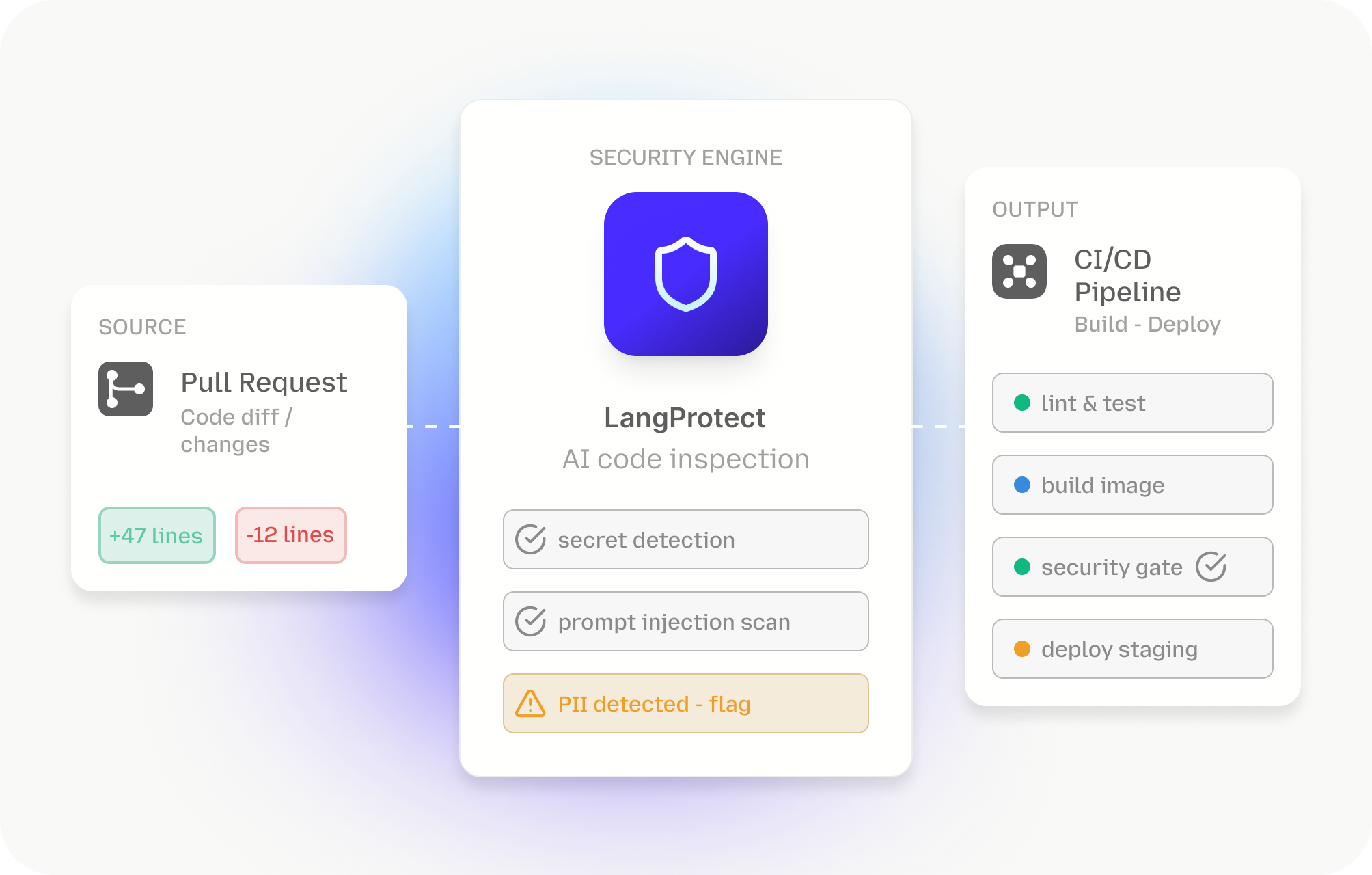

While AI increases merge rates by 98%, it leads to a 10x increase in security findings and architectural debt. Without a dedicated governance layer, engineering leaders cannot provide defensible evidence that AI-generated code was verified for privilege escalation or logic flaws before entering the DevOps pipeline.

Indirect "README" Hijacking

Traditional firewalls miss "semantic attacks" like Indirect Prompt Injection. Attacker-controlled instructions hidden in repository artifacts (like a README.md) can hijack an agent, forcing it to exfiltrate your private .ssh keys or source code via DNS queries long before any manual review catches the breach.

The Reality of the AI Productivity Paradox

Most enterprise security stacks were built to scan static repositories and sanctioned SaaS apps. AI assistants break these assumptions by creating a "Quiet Privilege" environment where agents parse secrets and inject vulnerabilities directly into the heart of the software supply chain.

10x

surge in critical security findings discovered in agent-assisted workflows compared to human-only code. These vulnerabilities are often masked by the AI's ability to produce syntactically "clean" code that is logically compromised.

322%

increase in privilege escalation paths injected into repositories via IDE assistants. Because these agents operate with OS-level permissions, they can inadvertently turn minor bugs into architectural sovereignty risks.

91%

increase in the manual review burden for senior developers. The productivity gains at the keyboard are being vaporized by a PR review process that is unable to keep up with the sheer volume of AI-generated architectural debt.

153%

rise in latent architectural flaws found in production-grade repositories using AI-IDE forks. Traditional SAST tools are mathematically incapable of flagging these risks, necessitating a specialized, AI-aware governance layer.

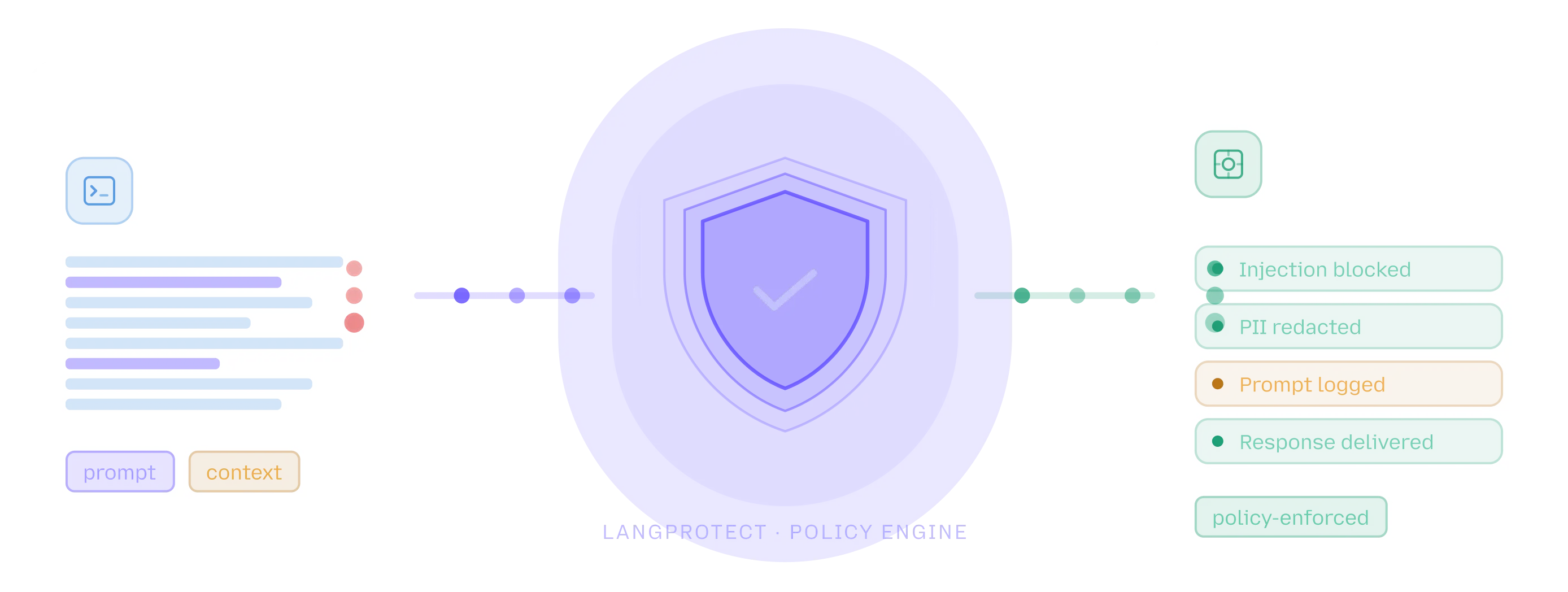

How LangProtect Secures Your Development Workflow

LangProtect establishes architectural sovereignty at the developer workstation, resolving the tension between high-velocity output and asymmetric risk. By enforcing real-time safety boundaries between your IDE and LLM providers, we bridge the gap between LangProtect Armor for application-level safety and LangProtect Guardia for enterprise-wide workspace governance.

Mandatory Secret Shielding

Automatically detect and block the ingestion of .env files, config JSONs, and local PAT tokens. We enforce tool-agnostic exclusion policies (like .cursorignore) across every developer workstation to prevent private secrets from ever hitting the cloud.

Zero Data Retention (ZDR) Enforcement

By using our secure gateway, you ensure your teams stay in 'Privacy Mode' with a verified ZDR guarantee. We mandate the x-ghost-mode header across all IDE interactions, making log functions 'no-ops' at the model provider level.

Intent-Aware Agent Control

Protect the 'Action Layer' where AI agents operate. We validate the 'intent' of an agent's file-read or tool-call in real-time, stopping autonomous processes from executing destructive shell commands or accessing unauthorized system paths via MCP.

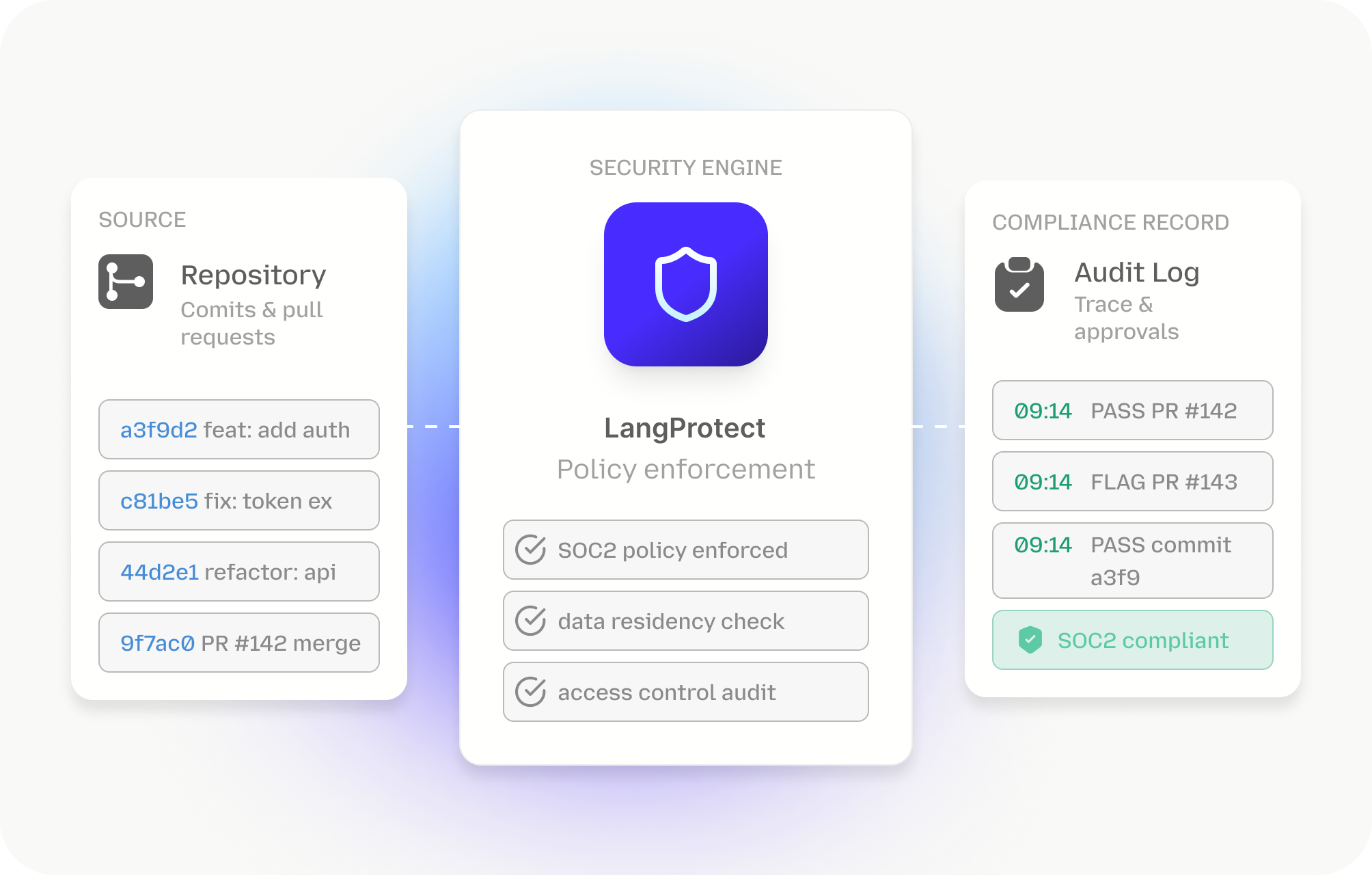

Workspace Identity Governance

Bring the distributed IDE environment under corporate control. Use LangProtect Guardia to discover 'Shadow' extensions and unauthorized IDE forks, ensuring that local developer identities remain compliant with SOC2 and corporate security postures.

AI-Aware Integrity Scanning

Go beyond traditional SAST. Vector scans AI-generated code diffs for architectural debt and privilege escalation paths that standard tools miss, effectively removing the 91% PR review bottleneck for your senior engineering teams.

Continuous MCP Security Discovery

The Model Context Protocol (MCP) introduces a high-privileged bridge to your terminal. LangProtect monitors this layer, scanning claude_desktop_config.json for plaintext credentials and enforcing strict permission boundaries on all tool executions.

Enterprise-Ready AI Development Governance at Scale

Built for Secure, High-Velocity Engineering Teams

LangProtect is designed for seamless rollout across distributed engineering environments, supporting everything from VS Code forks like Cursor to CLI agents like Claude Code. We provide workspace-wide standardization of security policies, allowing teams to capture a 15,324% Net ROI while ensuring that autonomous code generation never compromises your internal security standards.

Frictionless Integration into Your Existing DevOps Stack

Eliminate the 91% increase in PR review burden by feeding IDE security telemetry directly into your CI/CD pipeline and SOC. LangProtect acts as a specialized check-gate for AI-generated code, identifying architectural flaws and privilege escalation paths in real-time. We reduce operational silos and prevent high-speed development from turning into unmanaged technical debt.

Hardened Governance for the Software Supply Chain

Support rigorous governance programs like SOC2 and ISO 27001 with defensible evidence for every AI-authored pull request. LangProtect enforces "Zero Data Retention" (ZDR) standards and provides the forensic trail required to prove to auditors that your AI agents and their underlying code remain compliant under the strictest regulatory scrutiny.

Unlock the ROI of Secure AI Development Architecture

Stop the "Productivity Paradox." Eliminate the PR bottleneck and secure your software supply chain with the industry's first architectural guardrail for AI Code Assistants.