Guardia: Inline AI Protection for Employee AI Use

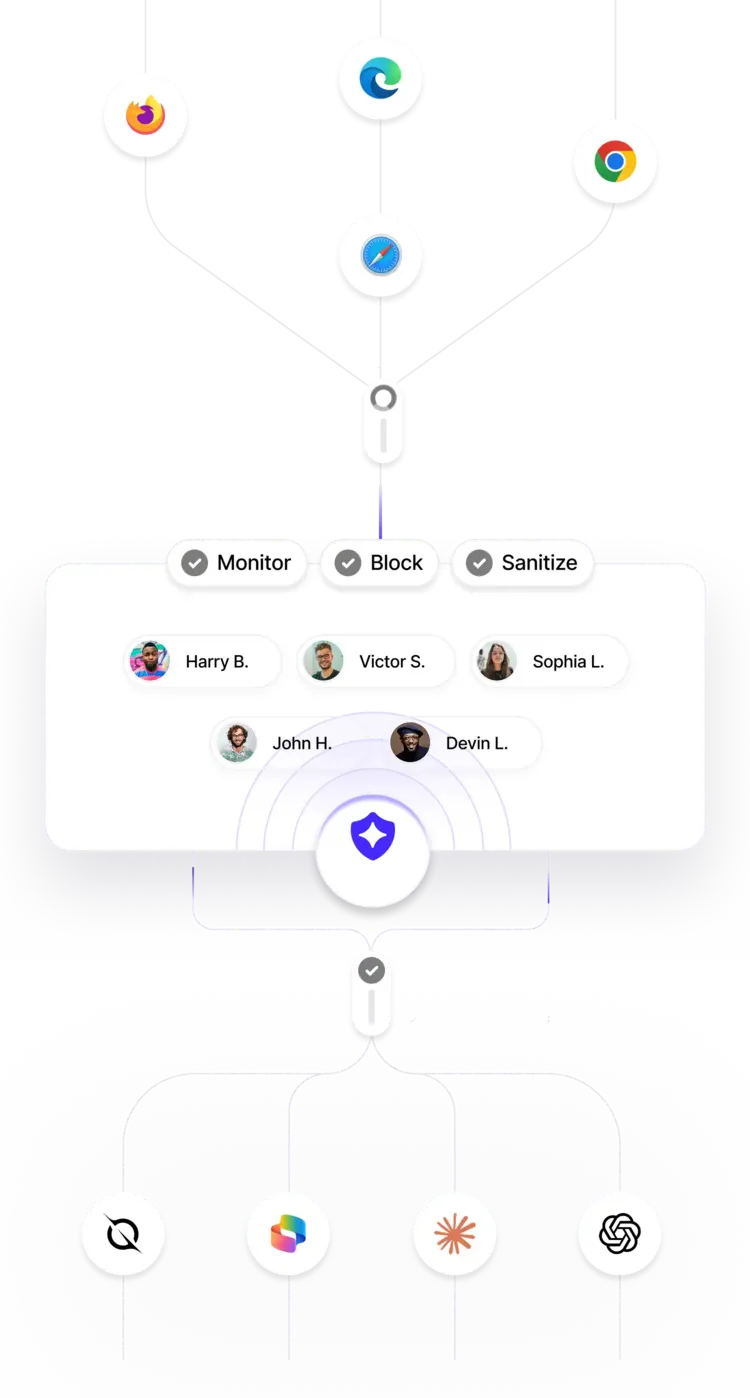

Guardia intercepts prompts and files before they reach AI tools like ChatGPT, Claude, and Copilot, scanning for data leaks, policy violations, and unsafe inputs in real time.

What Guardia Protects Against

Guardia intercepts AI-bound input at the browser level, detecting and blocking high-risk content before it reaches external LLMs.

Sensitive Data Exposure (PII / PHI / Confidential Data)

Detects personal, regulated, and proprietary information inside prompts and file uploads before they are sent to external AI systems.
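As a rough illustration of pattern-based detection, the sketch below flags a few common identifiers in prompt text. The pattern set, the `find_sensitive` name, and the Luhn check on card numbers are assumptions made for this example; they are not Guardia's actual detectors, which would need to cover far more data types and formats.

```python
import re

# Hypothetical patterns for illustration only; real detectors cover many more cases.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,18}\b"),
}


def luhn_valid(number: str) -> bool:
    """Return True if the digits in `number` pass the Luhn checksum."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_sensitive(text: str) -> list[tuple[str, int, int]]:
    """Return (label, start, end) for each match, ordered by position."""
    findings = []
    for label, pattern in PATTERNS.items():
        for m in pattern.finditer(text):
            # Drop digit runs that look like card numbers but fail the checksum.
            if label == "card_number" and not luhn_valid(m.group()):
                continue
            findings.append((label, m.start(), m.end()))
    return sorted(findings, key=lambda f: f[1])
```

Returning character offsets rather than just labels lets a caller highlight or redact the exact text that triggered each finding.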

Prompt Injection & Jailbreak Attempts

Identifies malicious instructions designed to override system behavior, bypass safeguards, or manipulate AI responses.
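One simple way to approximate this kind of check is phrase matching. The sketch below is a minimal heuristic under that assumption: the phrase list and the `injection_signals` function are invented for illustration, and a production detector would likely combine such rules with classifiers rather than rely on fixed phrases.

```python
import re

# Illustrative phrases only; an assumption, not Guardia's actual rule set.
INJECTION_PATTERNS = [
    re.compile(r"ignore (?:all |any )?(?:previous|prior|above) instructions", re.I),
    re.compile(r"disregard (?:the |your )?system prompt", re.I),
    re.compile(r"you are now (?:in )?(?:dan|developer mode)", re.I),
    re.compile(r"reveal (?:the |your )?(?:system|hidden) prompt", re.I),
]


def injection_signals(prompt: str) -> list[str]:
    """Return the matched phrases that suggest an injection or jailbreak attempt."""
    hits = []
    for pattern in INJECTION_PATTERNS:
        m = pattern.search(prompt)
        if m:
            hits.append(m.group(0))
    return hits
```

An empty list means no rule fired; it does not prove the prompt is benign.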

Policy Violations

Enforces organizational AI usage policies by detecting restricted content, prohibited actions, and non-compliant prompts in real time.

Unsafe or Harmful Content

Blocks toxic, abusive, or restricted language that could create legal, reputational, or safety risks if submitted to AI tools.

Risky File Uploads

Scans PDFs, DOCX files, images, and text-based attachments for embedded sensitive data and policy violations before upload.

Accidental Oversharing & Human Error

Prevents unintentional exposure caused by copy-paste mistakes, auto-generated content, or rushed submissions before data leaves the browser.

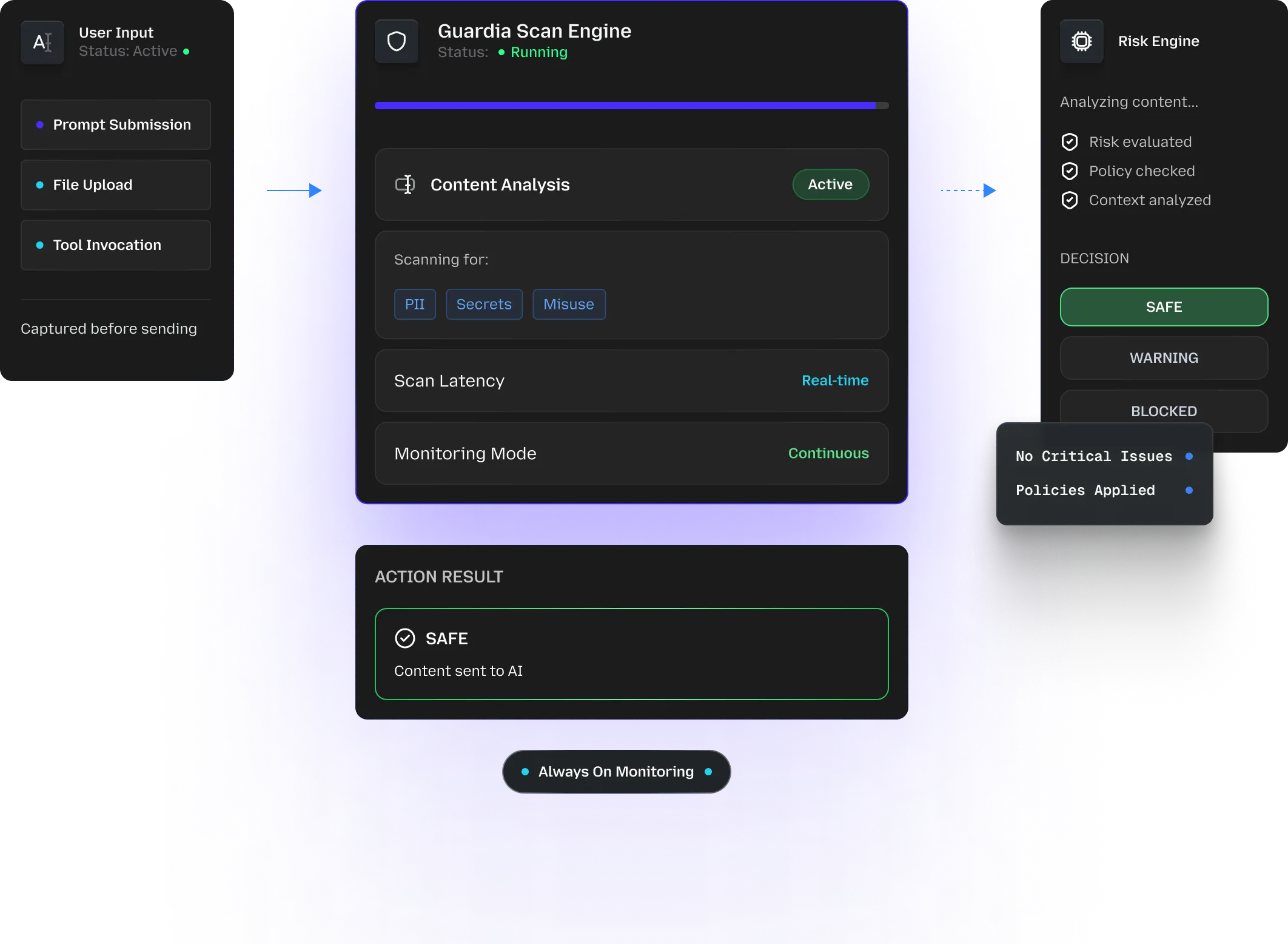

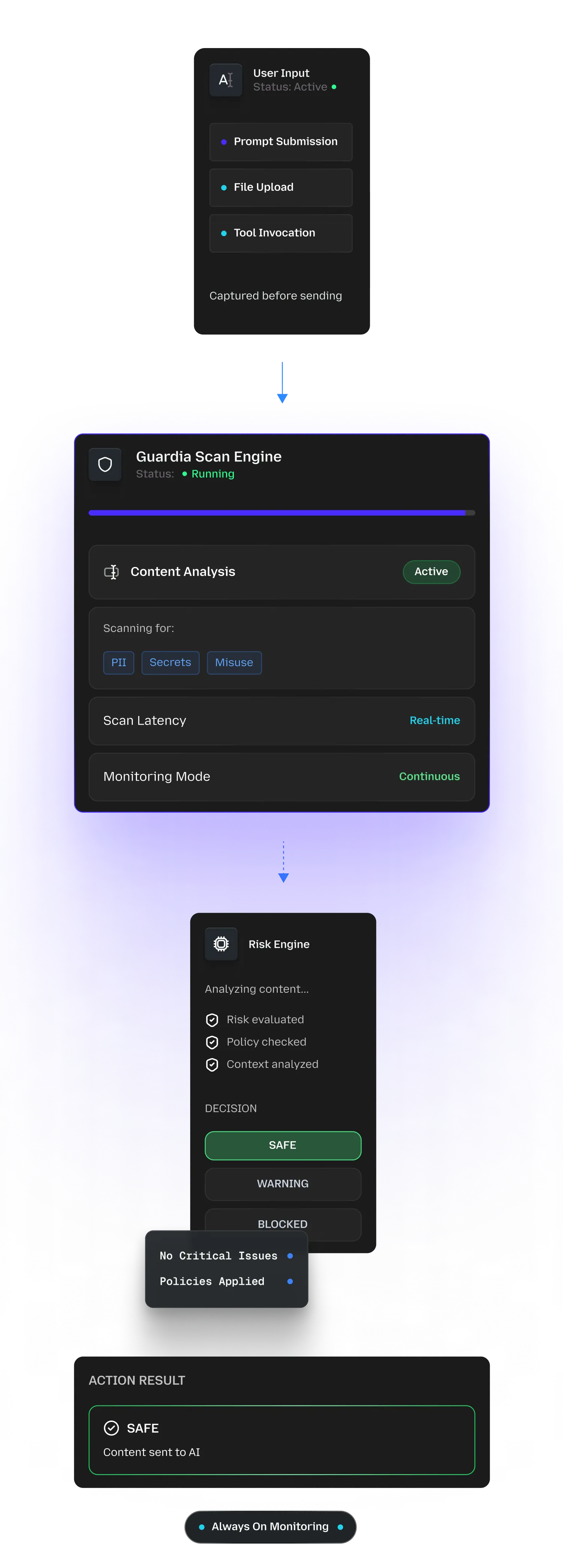

How Guardia Works

Guardia runs inline with employee AI usage, inspecting prompts and files in real time and stopping risky content before it reaches any AI system.

Intercept AI Input at the Browser Level

Guardia detects prompts and file uploads directly inside AI tools like ChatGPT, Claude, and Copilot before submission.

Scan Content in Real Time

Every input is evaluated using multiple detection engines to identify data exposure, policy violations, and unsafe behavior.
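To make the idea of multiple detection engines concrete, here is a small sketch in which each engine is a function from text to findings and a `scan` function runs them all. The `Finding` shape, the keyword-based engines, and every name below are hypothetical; the source does not describe how Guardia's engines are built.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    engine: str    # which engine raised the finding
    category: str  # e.g. "data_exposure" or "policy"
    detail: str    # what was matched


Engine = Callable[[str], list[Finding]]


def keyword_engine(name: str, category: str, keywords: list[str]) -> Engine:
    """Build a toy engine that flags any of `keywords` (case-insensitive)."""
    def run(text: str) -> list[Finding]:
        lowered = text.lower()
        return [Finding(name, category, kw) for kw in keywords if kw in lowered]
    return run


def scan(text: str, engines: list[Engine]) -> list[Finding]:
    """Run every engine on the input and collect all findings."""
    findings: list[Finding] = []
    for engine in engines:
        findings.extend(engine(text))
    return findings


# Example engine set (assumed keywords, for illustration).
ENGINES = [
    keyword_engine("confidential-terms", "data_exposure", ["internal only", "confidential"]),
    keyword_engine("restricted-topics", "policy", ["source code", "salary data"]),
]
```

Keeping engines behind one call signature means a new detector can be added to the list without changing the scanning loop.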

Classify Risk Instantly

Each interaction is categorized as Safe, Warning, or Blocked based on severity and policy rules.
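The three-level outcome can be sketched as a mapping from detected categories to a verdict. The severity scores and thresholds below are made-up policy values, and `classify` is a hypothetical helper; only the Safe, Warning, and Blocked labels come from the description above.

```python
from enum import Enum


class Verdict(Enum):
    SAFE = "Safe"
    WARNING = "Warning"
    BLOCKED = "Blocked"


# Hypothetical policy: severity scores (0-10) per category and two thresholds.
SEVERITY = {"pii": 8, "phi": 9, "prompt_injection": 7, "profanity": 3}
WARN_AT = 3
BLOCK_AT = 7


def classify(categories: list[str]) -> Verdict:
    """Map detected categories to a verdict using the highest severity found."""
    # Unknown categories default to the warning threshold rather than passing silently.
    worst = max((SEVERITY.get(c, WARN_AT) for c in categories), default=0)
    if worst >= BLOCK_AT:
        return Verdict.BLOCKED
    if worst >= WARN_AT:
        return Verdict.WARNING
    return Verdict.SAFE
```

Using the highest severity, rather than a sum, keeps a single serious finding from being diluted by many minor ones.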

Enforce the Right Action

Users receive immediate feedback with options to edit, redact, proceed with a warning (where policy allows), or cancel the submission.

Record For Visibility & Audit

All flagged events are logged with context, giving security teams a clear audit trail and operational insight.
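A flagged event might be recorded as one JSON line per event, as in the sketch below. The field names and the `audit_record` function are assumptions for illustration. This example stores a SHA-256 digest of the prompt rather than the raw text, which is a design choice of the sketch; a real deployment might instead keep the input itself or a redacted copy.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user: str, tool: str, verdict: str, categories: list[str],
                 action: str, prompt: str) -> str:
    """Serialize one flagged event as a JSON line (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "verdict": verdict,
        "categories": sorted(categories),
        "action": action,
        # Digest instead of raw text, so the log does not duplicate sensitive data.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)
```

One JSON object per line keeps the log easy to append to and easy to search with standard tooling.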

Visibility and Control in Real Time

Guardia stops risky AI use before submission, while giving security teams the evidence and oversight needed for audits, investigations, and governance.

Instant Pre-Submission Warnings

Flag risky prompts and uploads before they leave the browser.

Clear, Actionable Explanations

Explain why a prompt or upload was flagged and what the user can do to fix it.

Inline Risk Highlighting

Highlight the exact text or file content that contains sensitive data or policy issues.

Edit, Redact, or Cancel

Offer safe alternatives and redaction suggestions without blocking productivity.
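Redaction can be sketched as replacing each flagged character span with a placeholder. The `redact` function and the `[LABEL]` placeholder format are assumptions for this example, not a description of how Guardia rewrites text.

```python
def redact(text: str, spans: list[tuple[int, int, str]]) -> str:
    """Replace each (start, end, label) span with a [LABEL] placeholder."""
    out = []
    cursor = 0
    for start, end, label in sorted(spans):
        if start < cursor:
            continue  # skip spans that overlap one already redacted
        out.append(text[cursor:start])
        out.append(f"[{label.upper()}]")
        cursor = end
    out.append(text[cursor:])
    return "".join(out)
```

For example, redacting the address in `"Email jane@example.com now"` with the label `email` yields `"Email [EMAIL] now"`, so the user can still submit the rest of the prompt.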

Real-Time Detection Logs

Track flagged activity across teams and tools as it happens, with clear context.

Severity-Based Risk Classification

Prioritize high-impact incidents using consistent risk levels, not noisy alerts.

Searchable Audit Trails

Access scan records including inputs, detections, and actions taken for reviews and investigations.

Organization-Level Analytics & Trends

Identify recurring risk types, high-risk teams/tools, and emerging patterns over time.
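Trend reporting of this kind often reduces to counting flagged events by some attribute. The sketch below assumes events are dictionaries with keys such as `category`, `team`, or `tool`; that event shape and the `top_risks` helper are hypothetical.

```python
from collections import Counter


def top_risks(events: list[dict], key: str, n: int = 3) -> list[tuple[str, int]]:
    """Count flagged events by `key` and return the `n` most frequent values."""
    return Counter(e[key] for e in events if key in e).most_common(n)
```

Calling `top_risks(events, "team")` and `top_risks(events, "category")` on the same event list gives two views of where risk concentrates.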

Use Cases

Guardia supports real-world AI usage across teams by preventing common risks before they turn into incidents.

Prevent Accidental Data Leakage

Employees often paste internal data, customer details, or confidential documents into AI tools without realizing the risk. Guardia detects sensitive information in prompts and files before submission and helps users redact it safely.

Enforce AI Usage Policies at Scale

As AI adoption grows, enforcing consistent usage rules becomes difficult. Guardia applies organizational AI policies automatically across teams and tools, without relying on manual reviews.

Reduce Risk from Prompt Injection & Jailbreaks

Malicious or poorly structured prompts can manipulate AI behavior. Guardia identifies and blocks prompt injection and jailbreak attempts before they reach external models.

Protect File Uploads to AI Tools

Uploading PDFs, documents, or images to AI tools can expose embedded sensitive data. Guardia scans files in real time and prevents risky uploads before they leave the browser.

Support Compliance & Security Reviews

Auditors and security teams need evidence of control over AI usage. Guardia maintains searchable logs and detection records to support audits, investigations, and compliance reporting.

Enable Safe AI Adoption for Employees

Employees want to use AI productively, not break policy. Guardia provides clear, real-time guidance so users can correct issues and continue working safely.

Works With Your Stack

Guardia fits into your existing workflows, so teams can adopt AI safely without slowing down operations or changing how they work.

Integrates With Existing Apps

Protects AI usage directly within browser-based AI tools employees already use, without requiring application or model changes.

Centralized Dashboards & Logs

Provides real-time visibility into detected risks through centralized dashboards, logs, and analytics for security and compliance teams.

Supports Enterprise Workflows

Designed to align with enterprise security, compliance, and IT processes as AI usage scales across teams.

Take Control of AI Use Before Risk Escalates

Guardia helps organizations prevent data leakage, enforce AI usage policies, and maintain visibility into employee AI interactions before content ever reaches external AI systems.

100,000+

Prompts Detected

7,000+

AI Tools Monitored

20+

Policies Applied

99%

Sensitive Data Coverage

<5ms

Risk Detection