Shadow AI Detection & Governance

Employees who use AI tools without oversight put data security at risk. When these tools bypass standard controls such as Single Sign-On (SSO), Data Loss Prevention (DLP), and audit logging, sensitive data can leave the organization unnoticed. Proactive monitoring and governance let you track, control, and demonstrate how AI tools are used in your organization.

“AI moved faster than security ever did.” When we started working with AI inside real businesses, we noticed something no one was addressing. Employees were adopting powerful AI tools daily. Teams were shipping AI-driven products faster than ever. But visibility, control, and security didn’t keep up. LangProtect was built to close that gap. We give enterprises real-time visibility into how AI is actually used, enforce the right policies as it happens, and stop risks before they become incidents, without slowing teams down. Our goal is simple: enable AI adoption that is safe, compliant, and built to last.

Suny

Co-Founder at LangProtect

Where Shadow AI Breaks Governance Controls

Shadow AI rarely triggers a visible incident. Instead, it surfaces as a governance failure at the moment control and evidence are required.

Missing AI Usage Monitoring

Employees use AI tools with personal or unmanaged accounts, so AI activity goes untracked and leaves no reliable records or accountability.

Prompt-Level Data Disclosure Outside DLP

Sensitive data is exposed through AI prompts and file uploads, not just file transfers. Existing DLP systems aren't designed to monitor or control data shared at the prompt level.

Unverifiable Policy Enforcement

AI usage policies may exist, but they are not always enforced in real time, which makes compliance hard to prove during audits.

Delayed Detection Under Regulatory Scrutiny

Shadow AI usage is frequently discovered only during audits, legal inquiries, or customer due diligence. At that point, usage reconstruction is incomplete and remediation options are limited.

Why Existing Security Controls Don’t Catch Shadow AI

Most enterprise security stacks were built to monitor files, endpoints, networks, and sanctioned SaaS applications. Shadow AI operates outside those assumptions.

70%

of enterprise GenAI usage occurs outside federated identity systems, including personal or unmonitored accounts.

65%

of sensitive data shared with AI tools is transmitted via prompts or the clipboard rather than through file uploads.

60%

of public GenAI tools lack enterprise-grade APIs required for CASB inspection and enforcement.

75%

of organizations cannot reconstruct AI usage history beyond high-level access logs during audits or investigations.

How LangProtect Works Against Shadow AI

LangProtect addresses Shadow AI by monitoring AI usage in real time, enforcing policies, and providing audit-ready evidence of employee AI interactions.

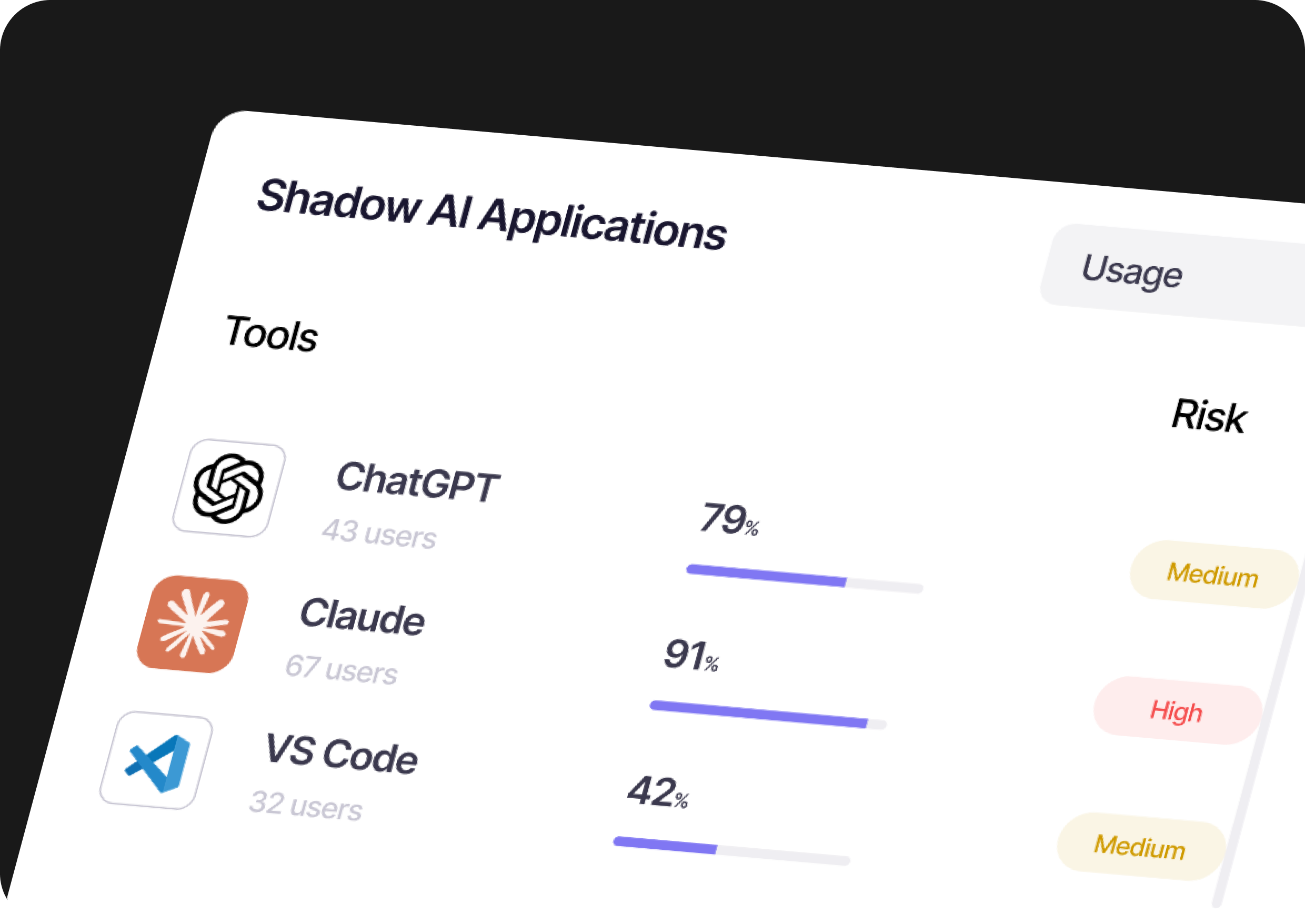

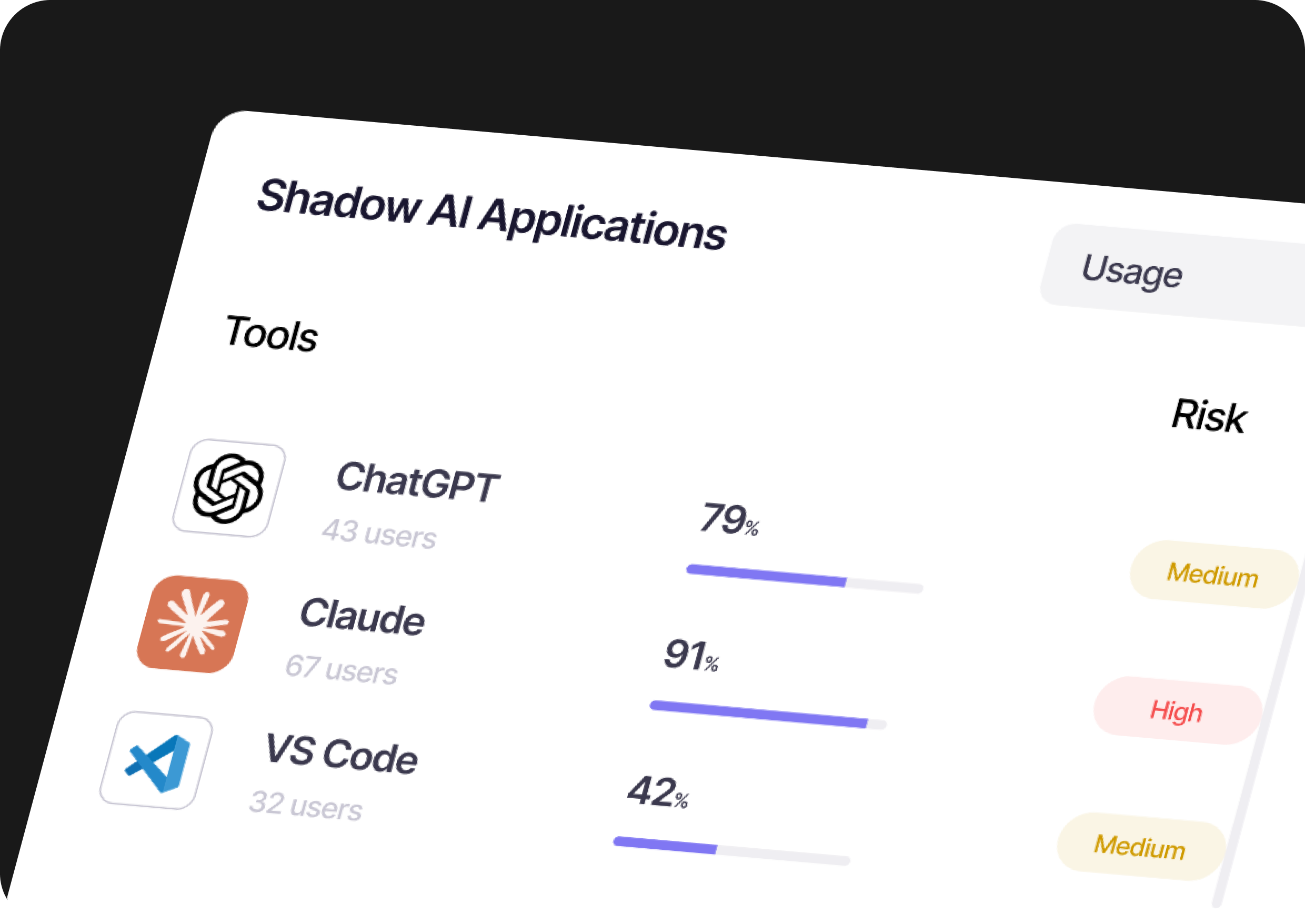

Runtime Discovery of AI Tool Usage

LangProtect continuously monitors AI tool usage at the browser level. It tracks both approved and unapproved AI tools, ensuring visibility into all AI interactions, even when they aren't officially sanctioned.
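To make the idea of discovery concrete, here is a minimal sketch of how visited URLs could be classified as sanctioned or unsanctioned AI tools. It is an illustration only, not LangProtect's implementation: the domain lists, the `classify_visit` function, and the three labels are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical inventories; a real deployment would maintain these centrally.
KNOWN_AI_DOMAINS = {"chat.ai-tool-a.example", "app.ai-tool-b.example"}
SANCTIONED_AI_DOMAINS = {"chat.ai-tool-a.example"}


def classify_visit(url: str) -> str:
    """Return 'sanctioned_ai', 'unsanctioned_ai', or 'not_ai' for a visited URL."""
    host = (urlparse(url).hostname or "").lower()
    if host not in KNOWN_AI_DOMAINS:
        return "not_ai"
    if host in SANCTIONED_AI_DOMAINS:
        return "sanctioned_ai"
    return "unsanctioned_ai"
```

An exact-match lookup like this misses new or renamed tools, so it only works if the inventory is kept current.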

Prompt and Upload-Level Inspection

LangProtect inspects both AI prompts and file uploads in real time to detect sensitive data being shared. This helps prevent data leaks that traditional DLP systems can't catch.
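As a rough sketch of what prompt-level inspection involves, the example below scans prompt text for a few sensitive-data patterns before submission. The patterns, category names, and `scan_prompt` function are assumptions chosen for illustration. They are not LangProtect's detectors, and production detection typically needs validation and tuning well beyond simple regular expressions.

```python
import re
from typing import Dict

# Illustrative patterns only (assumed for this sketch).
PATTERNS = {
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan_prompt(text: str) -> Dict[str, int]:
    """Count matches per category; categories with no match are omitted."""
    findings = {}
    for name, pattern in PATTERNS.items():
        count = len(pattern.findall(text))
        if count:
            findings[name] = count
    return findings
```

For example, `scan_prompt("Contact jane.doe@example.com, SSN 123-45-6789")` reports one `email` and one `us_ssn` finding, which a policy layer could then act on.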

Contextual Policy Enforcement at Runtime

LangProtect enforces AI usage policies in real time based on context, such as the AI tool being used, the user's role, and the sensitivity of the data involved. This makes governance operational, not just theoretical.
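A context-based decision of this kind can be sketched as a small function over the three inputs named above: the tool, the user's role, and data sensitivity. The `Context` fields, the role names, the sensitivity tiers, and the allow/warn/block outcomes are hypothetical and do not describe LangProtect's actual policy model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    tool_sanctioned: bool
    user_role: str         # e.g. "engineer", "legal" (assumed role names)
    data_sensitivity: str  # "public", "internal", or "restricted" (assumed tiers)


def decide(ctx: Context) -> str:
    """Return 'allow', 'warn', or 'block' for a single AI interaction."""
    if ctx.data_sensitivity == "restricted":
        # Restricted data: only approved roles on sanctioned tools, with a warning.
        if ctx.tool_sanctioned and ctx.user_role in {"legal", "security"}:
            return "warn"
        return "block"
    if ctx.data_sensitivity == "internal" and not ctx.tool_sanctioned:
        return "warn"
    return "allow"
```

Returning a graded outcome rather than a binary block is one way to keep low-risk work moving while still stopping high-risk disclosures.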

Identity-Aware Governance Beyond SSO

LangProtect ensures accountability for AI usage, even when employees use personal or unmanaged accounts. It correlates AI activity across both corporate and unmanaged accounts, ensuring consistent policy enforcement.

Audit-Ready Evidence and Traceability

LangProtect logs every AI interaction, including policy enforcement decisions, creating an audit trail that can be used during investigations or regulatory reviews. This provides transparency and accountability for AI usage.
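One common technique for making an audit trail tamper-evident is hash chaining, where each record stores the hash of the one before it. The sketch below shows the idea using only the Python standard library. Whether LangProtect uses this technique is not stated here, and the record fields and function names are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(log: list, event: dict) -> dict:
    """Append an event, linking it to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(record)
    return record


def verify(log: list) -> bool:
    """Recompute every hash; return False if any record was altered or reordered."""
    prev_hash = GENESIS
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if _digest(record["event"], prev_hash) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Editing any stored event after the fact changes its recomputed hash, so `verify` fails for the altered log.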

Governance Without Disrupting Productivity

LangProtect ensures AI governance without blocking workflows or restricting productivity. Policies are enforced based on risk and context, allowing organizations to adopt AI tools safely.

Deploy Quickly in Your Browser and Get an Instant AI Risk Visibility Report

Install the extension, scan live AI activity, and instantly uncover hidden risks across your organization’s AI usage.

Enterprise-Ready AI Governance at Scale

Designed for real enterprise rollout


Integrates with your existing security and audit stack

LangProtect can feed telemetry and governance events into existing monitoring and investigation workflows so security teams don't create a new silo. This supports faster triage, reporting, and audit evidence collection using the tools teams already operate.
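Many log pipelines and SIEM tools can ingest newline-delimited JSON, so one plausible shape for such an integration is to serialize each governance event as a single JSON line. The field names and the `to_json_line` helper below are hypothetical and do not represent LangProtect's event schema or export format.

```python
import json
from datetime import datetime, timezone


def to_json_line(event_type: str, user: str, tool: str, decision: str, when: datetime) -> str:
    """Serialize one governance event as a single-line JSON record in UTC."""
    record = {
        "timestamp": when.astimezone(timezone.utc).isoformat(),
        "event_type": event_type,
        "user": user,
        "tool": tool,
        "decision": decision,
    }
    # sort_keys gives a stable field order, which simplifies diffing and testing.
    return json.dumps(record, sort_keys=True)
```

Normalizing timestamps to UTC at the source avoids ambiguity when events from different offices are correlated downstream.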

Built for governance and compliance demands

LangProtect is designed to support AI governance programs that require defensible controls and evidence. It helps teams demonstrate monitoring, enforcement, and exception handling in environments where compliance and audit scrutiny are non-negotiable.

Take Control of Shadow AI Risk Today

LangProtect provides real-time visibility, policy enforcement, and audit-ready evidence to govern AI usage across your organization.