How to Enable AI Adoption Without Losing Security Control

By January 2026, the era of cautious AI experimentation had effectively closed. What began as a handful of employees using consumer AI tools to speed up routine tasks had quietly scaled into something far more consequential: a distributed network of third-party models, browser-based extensions, and SaaS integrations that now sits at the centre of how knowledge work actually gets done across finance, legal, HR, and engineering functions.

For most enterprise security teams, the first genuine reckoning with this reality arrives not through a breach or a board directive, but through a shadow AI discovery scan, the kind that returns forty-seven active tools when the security register lists four.

In a representative pattern we see across financial services organisations in 2026, that scan typically surfaces 47 tools that have never been through a formal security review, 6 with direct integrations into internal databases or CRM systems, and at least one browser extension in active use by a finance or legal team member that is transmitting sensitive data to a third-party model operating under no data processing agreement, no BAA, and no documented retention policy.

None of it was flagged by the SIEM. None of it triggered a DLP alert. The exposure existed in plain sight, inside the conversation layer that traditional security infrastructure was never designed to read.

This is the position most CISOs occupy today: not at the beginning of an AI governance journey, but at an urgent inflection point within one that began without them. The question is no longer whether to enable AI adoption. It is whether to govern what is already running before a regulator, an auditor, or an adversary surfaces the gap first.

This post provides a structured framework for diagnosing where your organisation currently stands, establishing the controls that matter most in the immediate term, and building the compliance evidence that the EU AI Act and DORA now require you to demonstrate, without pausing the AI adoption that your business depends on.

How Do You Find Out Which AI Tools Are Already Running in Your Organisation?

Most CISOs discover their shadow AI problem not through an alert, but through the first time someone actually looks. A discovery scan operating at the browser and network level, rather than a software inventory check, will surface tools your SIEM, your DLP, and your asset register have no record of, because those systems were built to monitor known applications, not conversational AI endpoints that employees reach directly through a browser tab or a quietly enabled SaaS feature.

What comes back when you run that scan is almost always more than expected, and the risk is rarely where headcount suggests it should be.

What a Real Shadow AI Discovery Scan Actually Surfaces

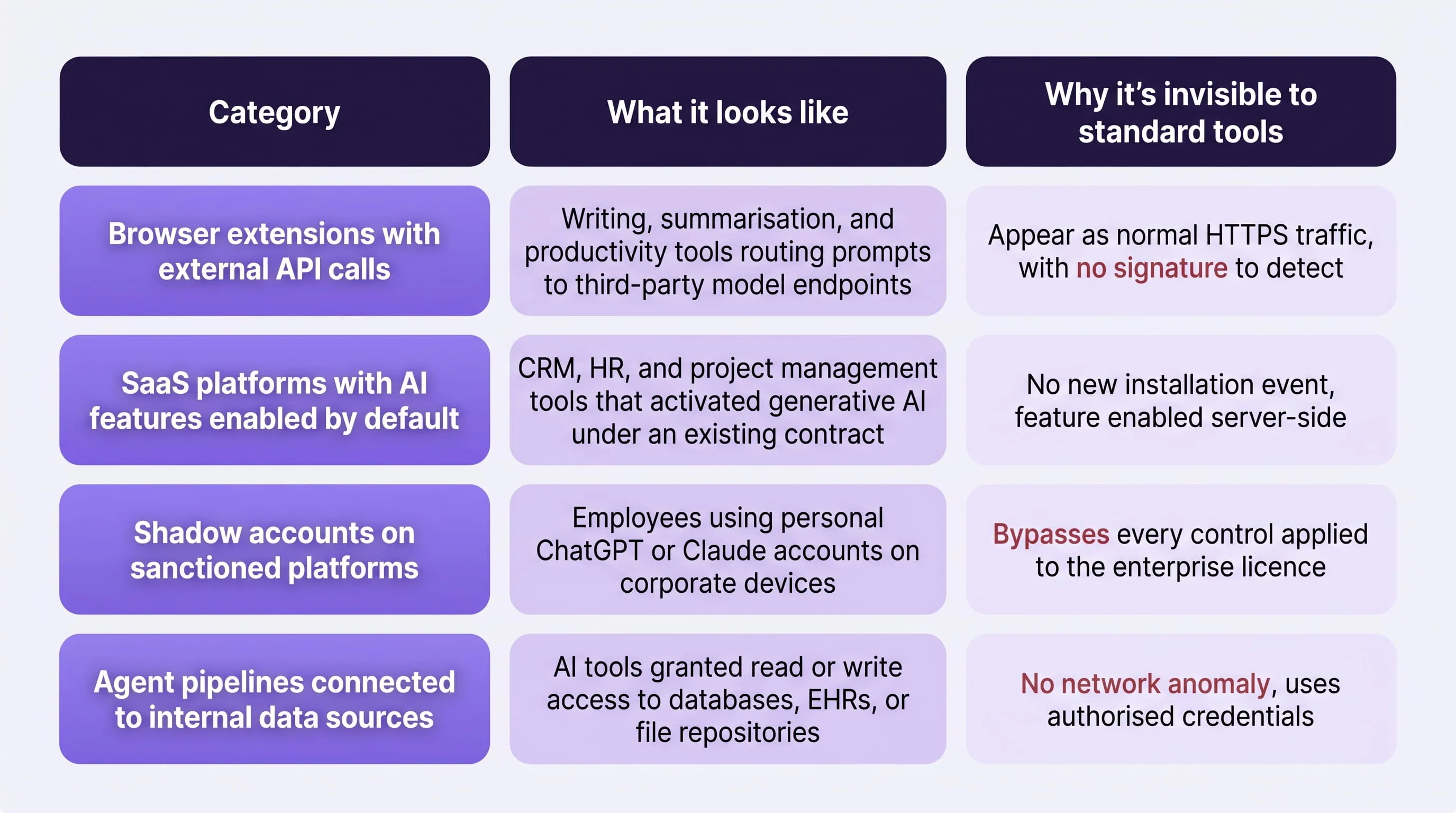

A discovery scan returns four categories of AI activity that most enterprise security teams currently have no visibility into:

The table above is not a theoretical risk catalogue. It represents the consistent pattern of what shadow AI discovery returns across enterprise environments in 2026, particularly in financial services, legal, and healthcare, the sectors where the data being routed through these channels carries the highest regulatory consequence.

What Are the Three Data Points That Determine Real Risk Exposure?

When a scan returns results, the instinct is to sort by user count, to identify the tools the most people are using. This is the wrong starting point, and acting on it consistently underweights the highest-risk tools while overweighting the lowest-risk ones.

The three data points that determine actual exposure are data access permissions, external API connections, and department of primary use.

- Data access permissions tell you which tools have been granted access to internal systems: CRMs, document repositories, financial databases, EHRs. A tool used by two people with write access to an accounts payable system is categorically more dangerous than a tool used by two hundred people drafting marketing copy.

- External API connections tell you which tools are routing data to model endpoints outside your environment. Every external API call is a potential data transfer to a third party whose retention policy, security posture, and regulatory standing you have not reviewed and cannot currently verify.

- Department of primary use determines the data classification risk. AI tools concentrated in finance, legal, HR, or engineering are handling the exact data categories (financial records, PII, source code, privileged communications) that EU AI Act Annex III and GDPR classify as highest-risk for automated processing.

Why User Count Is a Misleading Risk Signal

A browser extension used by one analyst in the finance team that connects directly to your accounts payable data represents more regulatory exposure than a writing assistant used by forty people across marketing. Sorting discovery results by user count produces a triage list that protects the wrong things first, and in an EU AI Act audit, the tools at the bottom of that list are often the ones the regulator finds most significant.
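To make the triage order concrete, here is a minimal Python sketch that ranks discovery results by data access, external routing, and department, and uses user count only as a final tie-breaker. The `DiscoveredTool` fields, the department set, and the ordering of the sort key are illustrative assumptions, not the output format of any particular scanner.

```python
from dataclasses import dataclass

# Hypothetical set of departments treated as handling the most sensitive data.
SENSITIVE_DEPARTMENTS = {"finance", "legal", "hr", "engineering"}


@dataclass(frozen=True)
class DiscoveredTool:
    name: str
    user_count: int
    internal_write_access: bool  # data access permissions
    internal_read_access: bool
    external_api: bool           # routes data to a model endpoint outside the environment
    department: str              # department of primary use


def risk_key(tool: DiscoveredTool) -> tuple:
    """Sort key: data access first, then external routing, then department.
    User count only breaks ties between otherwise equal tools."""
    if tool.internal_write_access:
        access = 2
    elif tool.internal_read_access:
        access = 1
    else:
        access = 0
    return (
        access,
        tool.external_api,
        tool.department.lower() in SENSITIVE_DEPARTMENTS,
        tool.user_count,
    )


def triage(tools):
    """Return tools ordered from highest to lowest assumed exposure."""
    return sorted(tools, key=risk_key, reverse=True)


tools = [
    DiscoveredTool("writing-assistant", 40, False, False, True, "marketing"),
    DiscoveredTool("ap-browser-extension", 1, True, True, True, "finance"),
]

for tool in triage(tools):
    print(tool.name)  # ap-browser-extension is listed before writing-assistant
```

Sorting the same two records by `user_count` alone would put the marketing writing assistant first, which is the inversion described above.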

Why Continuous Discovery Is Not the Same as a Quarterly Audit

A quarterly audit produces a point-in-time snapshot. It tells you what tools were running on the day the audit ran. By the time the next review is scheduled, the environment it assessed no longer exists, because the shadow AI landscape changes on a weekly cadence, not a quarterly one.

Consider what changes between a January audit and an April review in a typical enterprise environment:

- A SaaS vendor ships an AI feature update enabled by default for all existing users, no new installation, no alert

- An employee installs a browser extension after a peer recommendation, no IT approval required

- A developer connects an internal agent pipeline to a new data source to accelerate a sprint, no security review triggered

- An AI vendor silently updates the underlying model, changing its data handling terms and safety properties, no notification sent

None of these events generate an alert under a quarterly audit cadence. All of them change your risk and compliance posture in ways that matter to a regulator.

The NIST AI Risk Management Framework's Govern function defines continuous monitoring of AI systems as a foundational governance requirement, and DORA's ICT risk management obligations, applicable since 17 January 2025, explicitly require ongoing oversight of third-party technology providers. A quarterly audit does not satisfy either standard.

The practical implication is that your first discovery scan is not the deliverable; it is the baseline.

What you build on top of it, the continuous monitoring layer that keeps that inventory current and flags changes as they happen, is what satisfies both the security requirement and the regulatory one.
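One way to picture the difference between a baseline and a monitoring layer is a snapshot diff. The sketch below compares two inventory snapshots and reports new tools, removed tools, and changed attributes. The snapshot structure (a dict keyed by tool name) and the attribute names are assumptions made for illustration; a real monitoring layer would draw on whatever fields its discovery source provides.

```python
def diff_inventory(baseline: dict, current: dict) -> list[str]:
    """Compare two inventory snapshots keyed by tool name.

    Each value is a dict of observed attributes (for example a model
    version or a list of connected data sources).
    """
    changes = []
    for name in sorted(current.keys() - baseline.keys()):
        changes.append(f"NEW tool: {name}")
    for name in sorted(baseline.keys() - current.keys()):
        changes.append(f"REMOVED tool: {name}")
    for name in sorted(baseline.keys() & current.keys()):
        before, after = baseline[name], current[name]
        for field in sorted(before.keys() | after.keys()):
            if before.get(field) != after.get(field):
                changes.append(
                    f"CHANGED {name}.{field}: "
                    f"{before.get(field)!r} -> {after.get(field)!r}"
                )
    return changes


# Hypothetical snapshots taken a few weeks apart.
baseline = {
    "crm-suite": {"ai_features_enabled": False},
    "agent-pipeline": {"data_sources": ["wiki"]},
}
current = {
    "crm-suite": {"ai_features_enabled": True},
    "agent-pipeline": {"data_sources": ["wiki", "billing-db"]},
    "summariser-extension": {"model": "unknown"},
}

for change in diff_inventory(baseline, current):
    print(change)
```

Run on a schedule measured in hours or days rather than quarters, a diff like this turns each of the silent changes listed earlier into an event someone can review.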

For a detailed look at how AI tools reach sensitive data through channels a perimeter-based stack cannot see, the cloud AI security and data leak prevention guide covers the technical exposure pathways in full.

What Is the Difference Between an AI Security Policy and AI Security Enforcement?

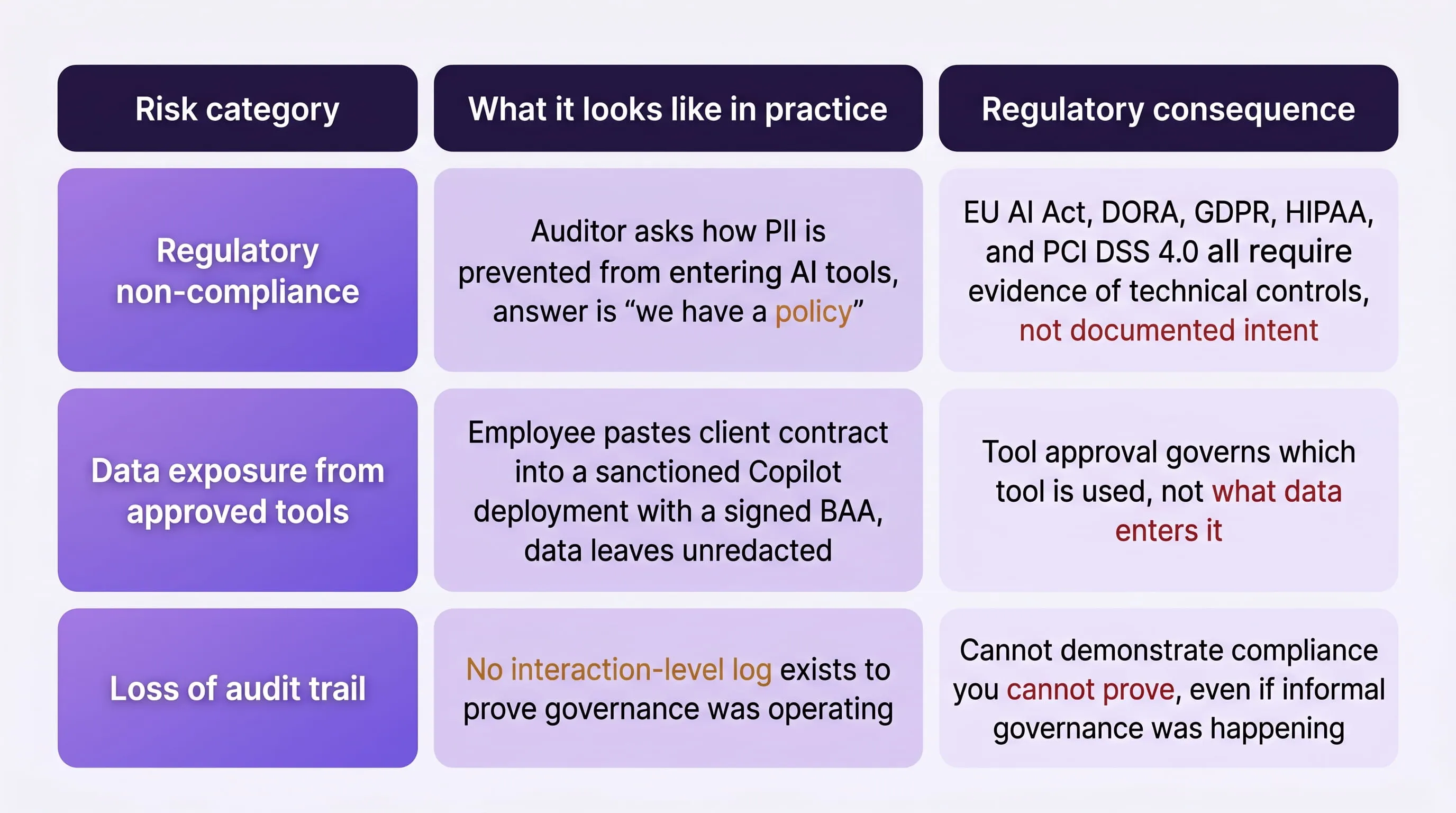

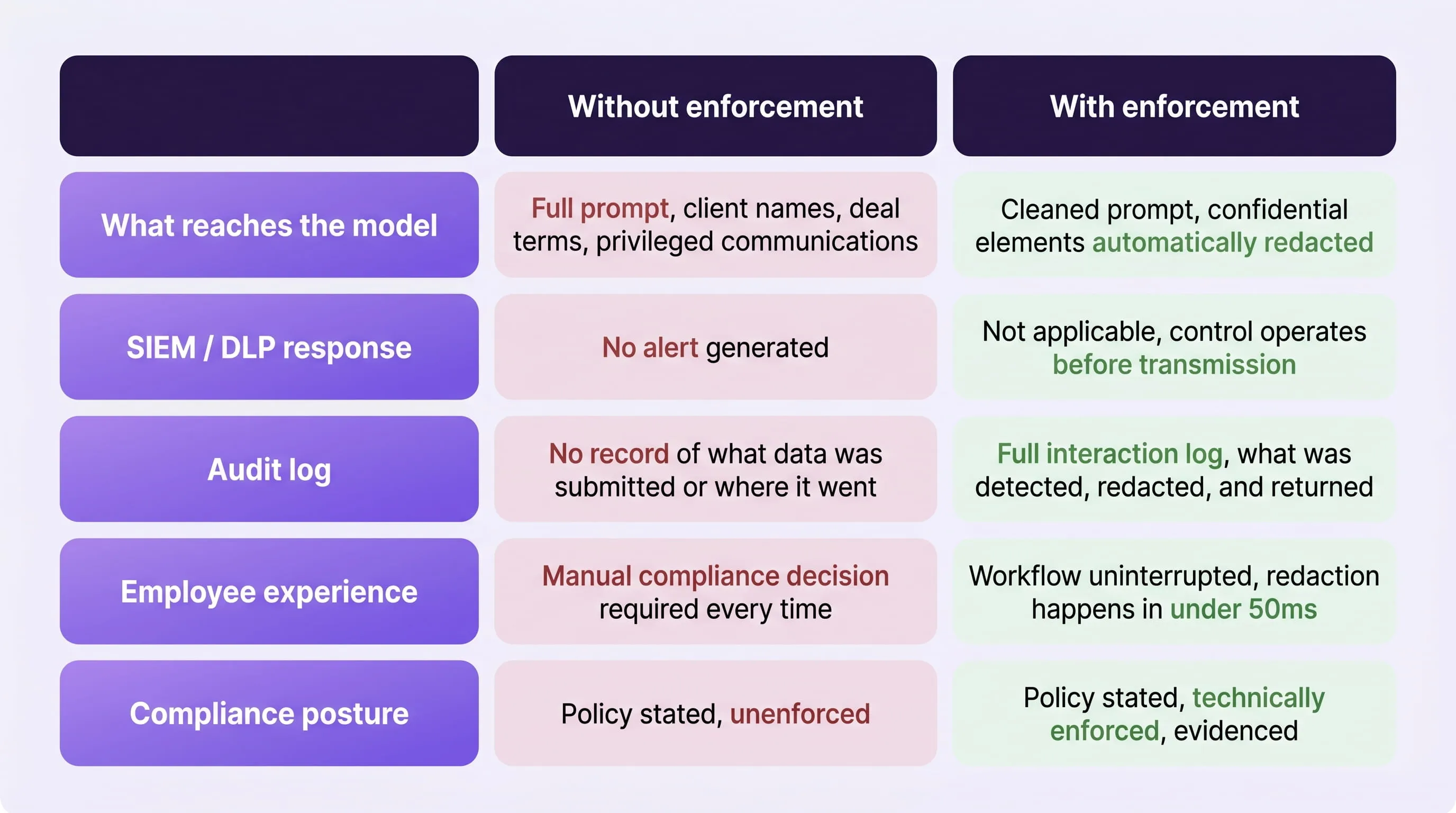

An AI security policy is a document. AI security enforcement is the technical layer that applies that document at the moment a prompt is submitted, automatically, consistently, and regardless of which tool, which employee, or which device is involved. Most enterprises in 2026 have the document. Very few have the enforcement layer. This gap, between what your policy states and what your environment actually enforces, is the first thing a regulator finds in an audit, and the primary reason organisations with formally documented AI governance programmes still experience material data exposure.

Why Having an AI Policy Is Not the Same as Having AI Governance

The distinction becomes clear the moment you test it. A standard enterprise AI usage policy includes a rule along the lines of: employees must not submit PII, PHI, or confidential financial data to external AI tools.

That rule is clear, reasonable, and almost universally violated, not because employees are careless, but because compliance requires them to make a data classification judgment, under deadline pressure, every single time they use an AI tool.

Without a technical enforcement layer, three things are true simultaneously:

- Your policy exists in a document your employees have read once and largely forgotten

- Every AI interaction in your environment is a manual compliance decision made by an individual

- You have no evidence of governance, even when governance was informally happening

With an enforcement layer, the classification happens automatically. Sensitive data is detected and redacted before the prompt leaves the organisation, without interrupting the employee's workflow. The policy stops being a statement of intent and becomes an operational control. This is precisely what EU AI Act Article 9 mandates, not a documented policy, but a functioning risk management system. The regulation uses the word "system" deliberately. A PDF does not qualify.

Where Does the Policy-to-Enforcement Gap Create the Most Exposure?

The highest exposure sits at the intersection of approved tools and ungoverned data flows, where an organisation has completed vendor review and signed a BAA, but has no control over what data employees actually submit through that approved tool.

This gap produces three compounding risk categories:

For a detailed breakdown of how these regulatory frameworks map to specific technical enforcement requirements across financial services, the AI risks in fintech guide covers the compliance obligations and enforcement gaps that most financial institutions are currently carrying.

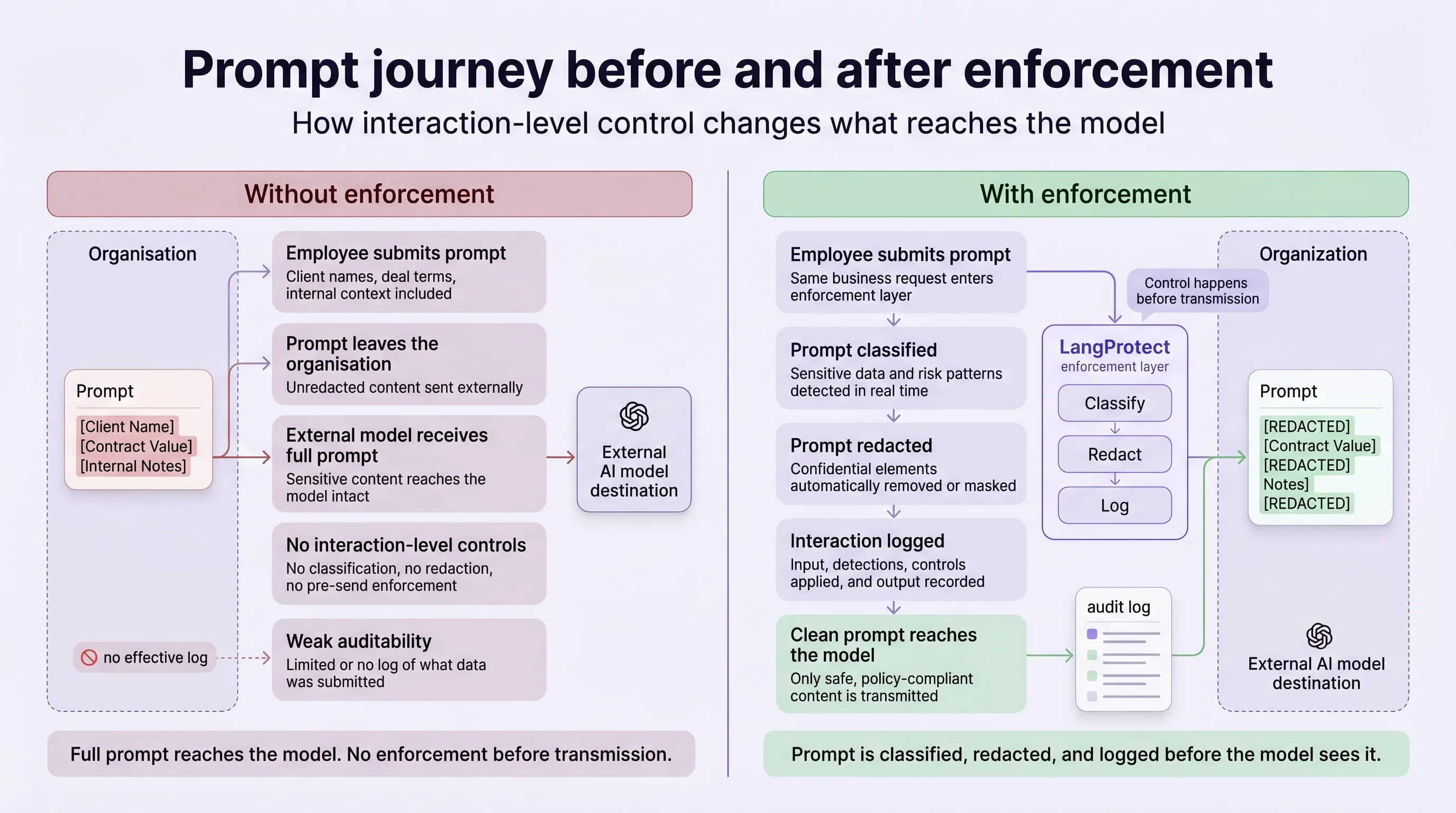

What Does Enforcement at the Interaction Layer Actually Look Like?

Enforcement at the interaction layer operates at the point where a prompt is submitted, before it reaches any model, whether inside a sanctioned enterprise tool or an unsanctioned browser extension. This means the enforcement layer covers your entire AI environment by default, not just the tools on your approved list.
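As a rough illustration of what "before it reaches any model" means, the following Python sketch redacts two kinds of sensitive value from a prompt before it would be forwarded. It is a deliberate simplification: the two regular expressions are illustrative assumptions, and pattern matching of this kind is far narrower than the semantic detection a production enforcement layer would need.

```python
import re

# Illustrative patterns only; real detection needs much broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "IBAN": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
}


def redact(prompt: str):
    """Return (redacted_prompt, findings), where findings maps each
    category to the number of values replaced."""
    findings = {}
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings[label] = count
    return prompt, findings


raw = "Summarise the dispute for jane.doe@example.com, IBAN DE89370400440532013000."
safe, found = redact(raw)
print(safe)   # Summarise the dispute for [EMAIL], IBAN [IBAN].
print(found)  # {'EMAIL': 1, 'IBAN': 1}
```

The point of the sketch is the position of the control, not the detection technique: the same `redact` step runs regardless of which tool the prompt is destined for.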

Without Enforcement vs. With Enforcement, Legal Team, Client Contract

For a technical walkthrough of how semantic intent detection differs from keyword filtering at this layer, the real-time prompt filtering guide covers the architecture in full.

The OWASP LLM Top 10 identifies sensitive information disclosure as one of the highest-priority risks in enterprise LLM deployments, and the policy-to-enforcement gap is the primary mechanism through which that disclosure occurs in practice.

For organisations operating across financial services, healthcare, legal, and public sector environments, LangProtect Guardia applies this enforcement layer at the browser level, covering every AI interaction an employee initiates regardless of which platform or tool they are using. For a complete overview of how the full control stack maps to your compliance obligations, the LangProtect Solution Brief covers Guardia, Armor, and Vector in detail.

What This Means for Your Next Board Presentation

The question your board and your auditors will ask is not "do you have an AI policy?" but "how do you enforce it?" If the honest answer is through employee training and the honour system, that answer will not survive regulatory scrutiny in 2026.

The shift from documented policy to technical enforcement is not an upgrade to your AI governance programme. It is the point at which you actually have one.

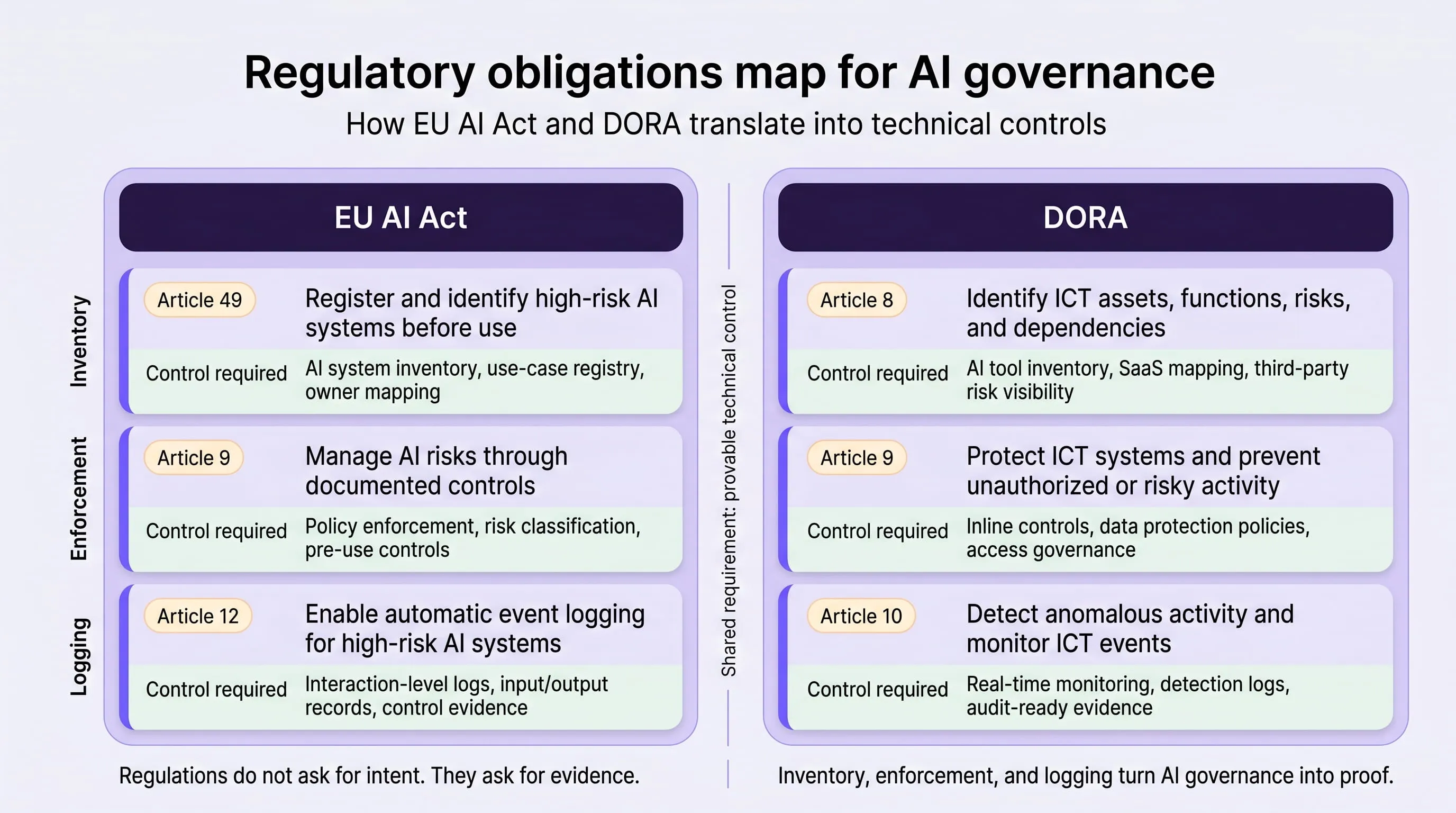

Which AI Adoption Risks Are Now Legally Your Problem Under the EU AI Act and DORA?

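From August 2, 2026, ungoverned AI adoption is not just a security risk; it is an enforceable regulatory liability. Fines under the EU AI Act reach up to €35 million or 7% of worldwide annual turnover, whichever is higher, for prohibited practices, and up to €15 million or 3% for breaches of most other obligations, including those governing high-risk systems.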

For most enterprise security leaders, the shift from voluntary governance best practice to mandatory legal obligation happened faster than internal governance programmes could keep pace with. Understanding exactly which obligations apply, and what evidence satisfies them, is no longer optional preparation for a future audit. It is the immediate operational priority.

What Does the EU AI Act Actually Require From Enterprise AI Deployments?

The EU AI Act organises its requirements around risk classification. Not every AI tool your employees use falls under the same obligation, but the tools that do carry the heaviest regulatory burden are precisely the ones most commonly found in ungoverned enterprise AI adoption: HR screening tools, credit scoring systems, legal document processing, and AI used in public sector decision-making all qualify as high-risk under Annex III of the EU AI Act.

For high-risk AI systems, two articles carry the most immediate operational weight for enterprise security teams.

EU AI Act Article 9: Risk Management System

Article 9 requires a documented, technically implemented risk management system for every high-risk AI system in use. The critical word is system: a written policy does not satisfy this obligation. The requirement is for a continuous process that identifies, evaluates, and mitigates risks throughout the AI system's lifecycle, not at a point in time.

In practical terms, Article 9 compliance requires:

- A live inventory of every high-risk AI system in operation, not a spreadsheet updated quarterly

- Documented risk assessments for each system, covering data inputs, model behaviour, and potential failure modes

- Technical controls that actively mitigate the identified risks, not controls that rely on employee behaviour

- Evidence that the risk management system is operating continuously, not episodically

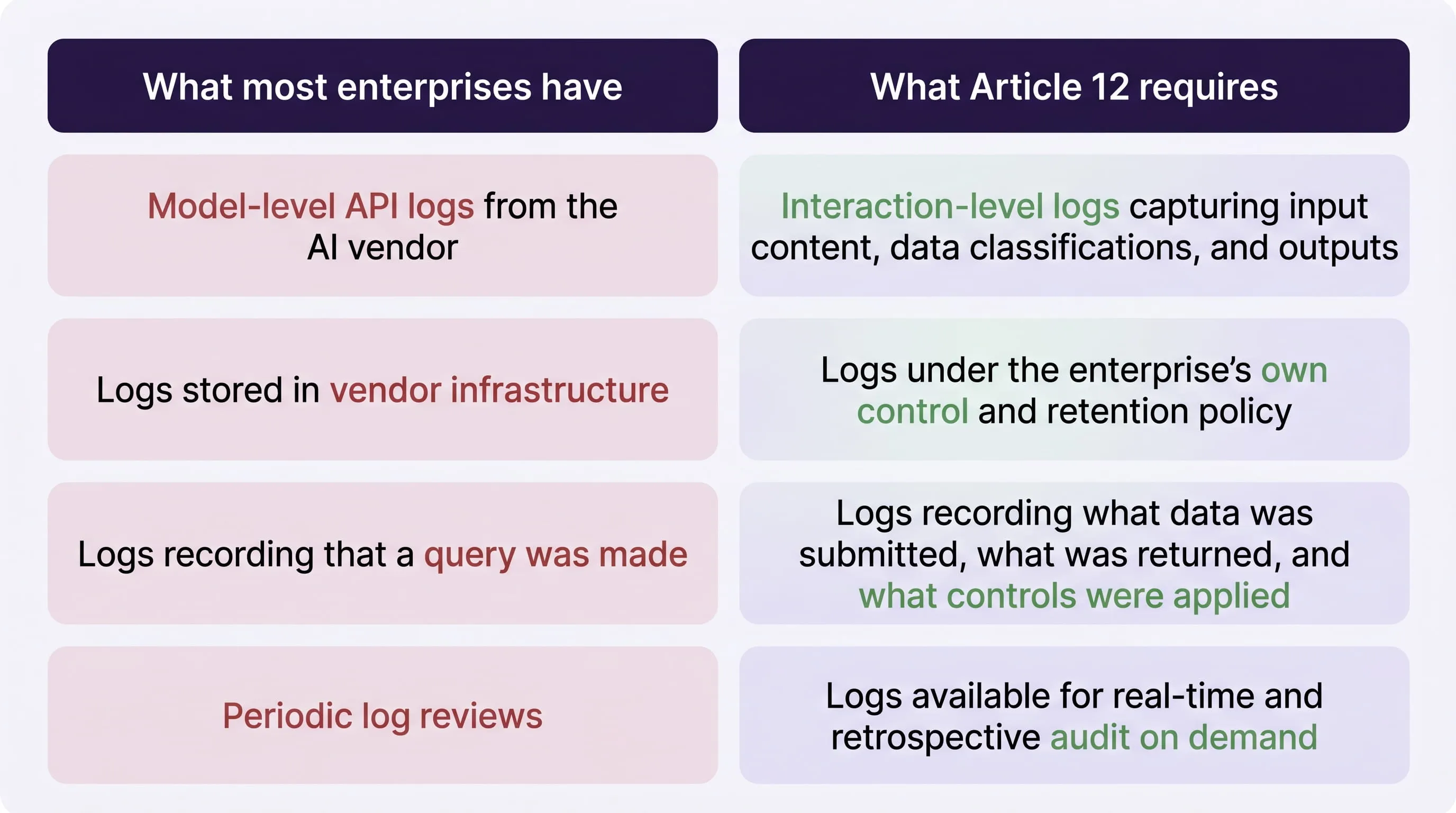

EU AI Act Article 12: Logging and Record-Keeping

Article 12 requires that high-risk AI systems automatically generate logs sufficient to enable post-hoc assessment of system behaviour. This is where the gap between what most enterprises currently have and what regulators will ask for is most pronounced.

The AI security ethics and traceability guide covers the forensic logging architecture in detail, including how interaction-level logs satisfy the auditability requirement without storing raw PII, which is the compliance tension most enterprises encounter when implementing Article 12.
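A minimal sketch of an interaction-level log record that avoids storing the raw prompt might look like the following. The field names are hypothetical, and keeping a SHA-256 digest in place of the original text is an assumption about one way to ease the tension between auditability and data minimisation. Whether a digest is acceptable in your jurisdiction is a legal question, and digests of short or predictable inputs can be guessed by brute force.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_log_record(user_id: str, tool: str, raw_prompt: str,
                     redacted_prompt: str, findings: dict) -> str:
    """Serialise one AI interaction as a JSON line.

    The raw prompt is never written to the record; only its SHA-256
    digest is kept so the entry can later be matched to a known input.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "tool": tool,
        "prompt_sha256": hashlib.sha256(raw_prompt.encode("utf-8")).hexdigest(),
        "detected": findings,              # categories found and how many
        "redacted": bool(findings),        # whether anything was replaced
        "sent_to_model": redacted_prompt,  # what actually left the organisation
    }
    return json.dumps(record, sort_keys=True)


raw = "Email jane.doe@example.com the signed contract"
redacted = "Email [EMAIL] the signed contract"
line = build_log_record("u-17", "summariser-extension", raw, redacted, {"EMAIL": 1})
print(line)
```

Each line answers the four questions an assessor is likely to ask about a single interaction: who, through which tool, what was detected, and what reached the model.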

What Does DORA Require That Most AI Governance Programmes Are Missing?

The Digital Operational Resilience Act has applied since 17 January 2025 and covers financial entities operating in the EU (banks, insurers, and investment firms) and their critical third-party ICT providers. For enterprises in scope, DORA introduces two specific obligations that most AI governance programmes are not currently meeting.

Third-party ICT risk management

DORA requires continuous monitoring of every third-party technology provider, which now includes every AI vendor whose tools your employees are using. If an employee is routing prompts through a browser extension connected to a third-party model, that vendor is an ICT provider under DORA. If you cannot demonstrate ongoing oversight of that provider's security posture, data handling terms, and model updates, you are non-compliant, regardless of whether the tool was formally approved.

ICT incident reporting

DORA requires notification within specific timeframes when an ICT-related incident causes or could cause significant harm. An AI-related data exposure, such as an employee submitting client financial data to an unvetted model or a prompt injection attack exfiltrating internal information, qualifies as an ICT incident under DORA. Without interaction-level logging, you cannot determine the scope of the incident, which makes the notification timeline impossible to meet.

For a detailed mapping of DORA's ICT risk management requirements to specific security controls in financial services environments, the EBA's official DORA guidance provides the authoritative regulatory framework. The AI risks in fintech guide covers how these obligations interact with SOX, PCI DSS 4.0, and GDPR in practice.

What Will a Regulatory Audit Actually Ask For?

Understanding the abstract obligation is one thing. Understanding what an auditor from a National Competent Authority will specifically request in a 2026 review is another, and the gap between the two is where most enterprises are currently most exposed.

A regulatory audit of AI adoption governance will typically open with four requests:

- A current AI system inventory — not a historical register, but a live list of every AI tool in active use, including those adopted without formal review

- Evidence of risk assessments for tools operating in high-risk categories under Annex III

- Interaction logs demonstrating that data controls were operating during a specified review period

- Incident records showing how any AI-related data exposure events were identified, contained, and reported

The first request alone, a current AI system inventory, is the one most enterprises cannot satisfy immediately. Shadow AI discovery, as covered in the first section of this guide, is the foundation that makes everything else possible. Without a live, accurate inventory, risk assessments cannot be completed, logging cannot be attributed correctly, and incident scope cannot be determined.

For organisations building this capability from scratch, the LangProtect enterprise AI governance framework and the Shadow AI detection solution provide the inventory and monitoring layer that audit readiness requires.

The NIST AI Risk Management Framework provides the governance backbone that both EU AI Act Article 9 and DORA ICT risk management are broadly aligned with, making it the most efficient single framework to build compliance evidence against.

The August 2, 2026 Deadline Is Not Contingent on Guidance

As of the time of writing, the European Commission's proposed Digital Omnibus package, which would delay some EU AI Act obligations, has not been confirmed. Enterprises should treat August 2, 2026 as the binding enforcement date for high-risk AI system obligations. Building your compliance programme around a delay that may not materialise is the highest-risk planning assumption available.

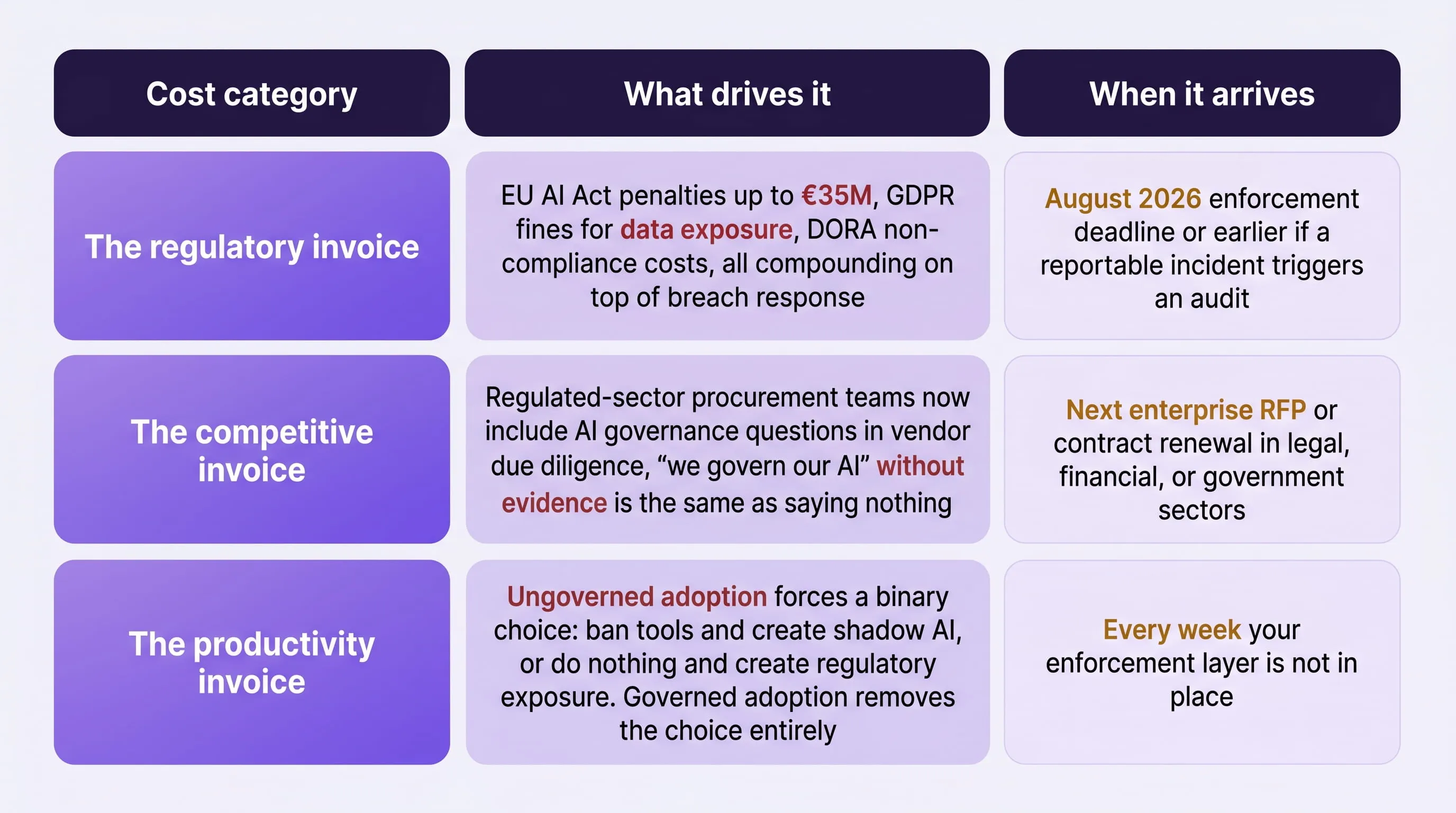

What Does Ungoverned AI Adoption Actually Cost, Beyond the Breach?

A shadow AI breach costs an average of $4.63 million, $670,000 more than a standard data breach, according to IBM's 2025 Cost of a Data Breach Report, but the breach is only the first invoice. The total cost of ungoverned AI adoption compounds across three distinct categories, each of which lands independently of whether a breach ever occurs.

The Three Invoices Ungoverned AI Adoption Generates

The number worth taking to the board is this: enterprises with mature AI security postures are 2.8x less likely to experience a material AI-related data breach than those relying on perimeter-level controls, according to the Cisco State of AI Security 2026 report.

That is not a security statistic; it is a business case. For a full breakdown of how these cost categories compound specifically in financial services environments, including the interaction between DORA penalties and GDPR fines, the AI risks in fintech guide covers the regulatory cost stack in detail.

The Cost of Inaction Is Not Zero, It Is Just Deferred

Every week without an enforcement layer is a week where the regulatory invoice is accumulating, the competitive disadvantage is widening, and the audit evidence trail is not being built.

The question is not whether ungoverned AI adoption costs money. It is whether you pay on your terms or on the regulator's.

How Do You Govern AI Adoption That's Already Happening, Without Starting Over?

You do not need to replace your existing AI tools, rebuild your security stack, or pause adoption while building a governance programme from scratch. You need to add an interaction-layer governance capability on top of what already exists, one that covers every tool simultaneously, including the ones employees adopted without review.

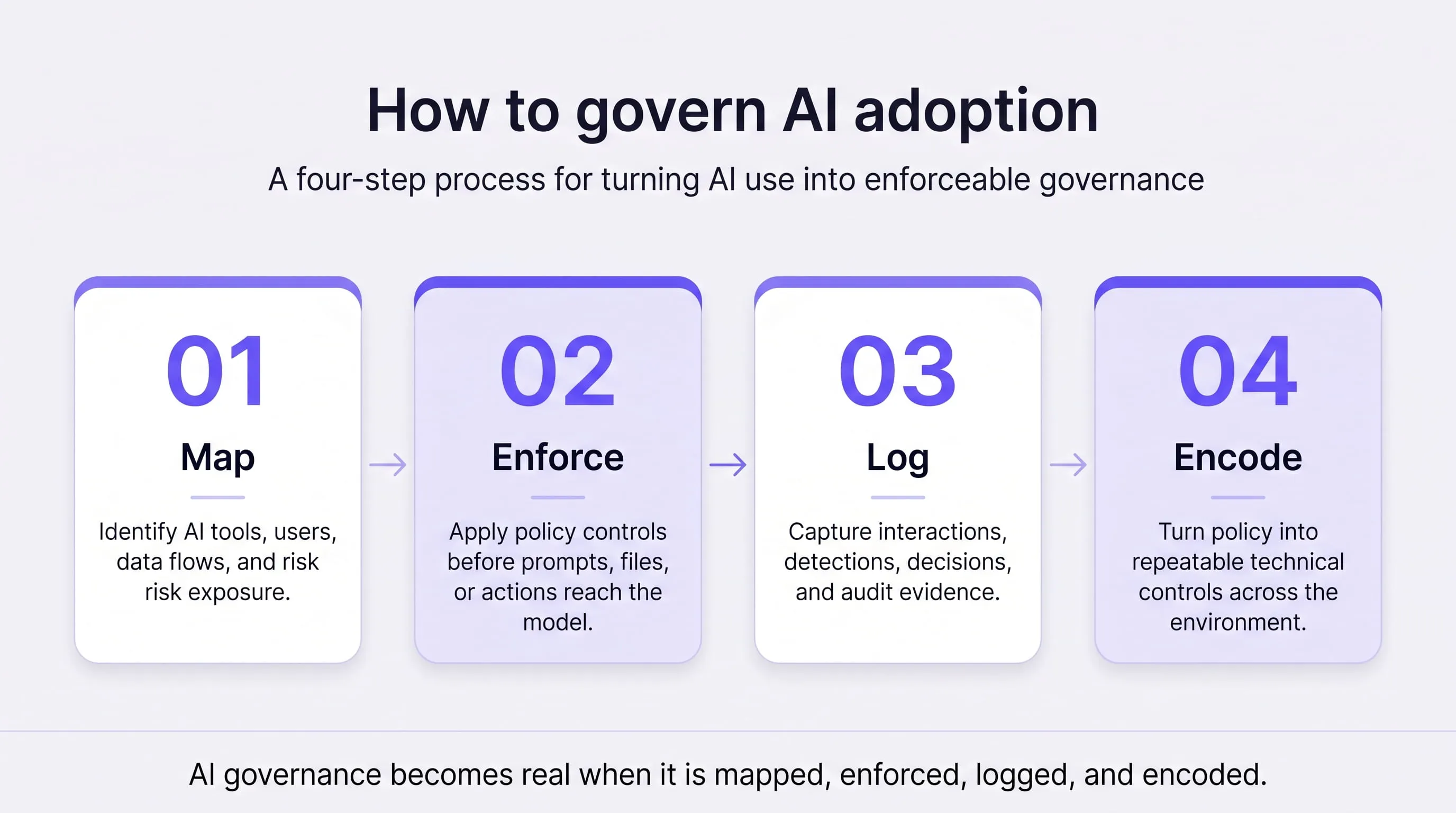

The Four-Step Governance Framework for AI Adoption Already in Progress

Step 1: Map Before You Manage

Run a continuous shadow AI discovery scan to produce a live inventory of every AI tool currently in active use. Sort results by data access permissions, not user count. This inventory is the foundation every subsequent step is built on, and, as covered in section one of this guide, it is the first thing a regulator will ask for.

Step 2: Enforce at the Interaction Layer, Not the Perimeter

Apply prompt-level policy enforcement across all tools simultaneously, sanctioned and unsanctioned. This does not require replacing any existing tool. The enforcement layer sits between the employee and every AI tool they use, classifying and redacting sensitive data before it reaches the model. For a full technical walkthrough of how this operates in practice, the real-time prompt filtering guide covers the architecture in detail.

Step 3: Build the Compliance Evidence Trail

Deploy interaction-level logging that captures what was prompted, what data was detected, what was redacted, and what reached the model. This is the specific evidence that satisfies EU AI Act Article 12 and DORA's ICT incident reporting requirements, and it is what your board needs to see before the next audit.

Step 4: Encode the Policy, Don't Just Document It

Your AI usage policy needs to be the configuration of your enforcement layer, not a separate PDF. When the policy changes, new data classification rules, new tool approvals, new regulatory requirements, the enforcement layer updates with it automatically. This is the point at which you move from having an AI policy to having AI governance.
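A small sketch can show what "the policy is the configuration" means in practice. Below, the policy is plain data and a single `decide` function reads it, so changing the policy changes enforcement without touching code. The policy keys, category labels, and the three actions are hypothetical, chosen only to illustrate the idea.

```python
# Hypothetical policy expressed as data rather than prose.
POLICY = {
    "version": "2026-01",
    "default_action": "redact",      # applied when sensitive data is found but not blocked
    "blocked_categories": {"PHI"},   # never allowed out, even redacted
    "department_extra_blocked": {"legal": {"PRIVILEGED"}},
}


def decide(policy: dict, department: str, detected: set) -> str:
    """Return 'allow', 'redact', or 'block' for one prompt."""
    extra = policy["department_extra_blocked"].get(department, set())
    blocked = policy["blocked_categories"] | extra
    if detected & blocked:
        return "block"
    if detected:
        return policy["default_action"]
    return "allow"


print(decide(POLICY, "legal", {"PRIVILEGED"}))  # block
print(decide(POLICY, "marketing", {"PII"}))     # redact

# A policy change is a data change: the same code now blocks PII everywhere.
UPDATED = {**POLICY, "version": "2026-02", "blocked_categories": {"PHI", "PII"}}
print(decide(UPDATED, "marketing", {"PII"}))    # block
```

Because the policy carries a version, each enforcement decision can also record which version was in force, which ties the decision back to the evidence trail from Step 3.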

What About AI Agents Running Alongside Employee-Facing Tools?

AI agents introduce a separate governance dimension that the four steps above do not fully address on their own. Unlike employee-facing tools where a human submits each prompt, agents operate autonomously, making tool calls, accessing data sources, and taking actions without human initiation at each step.

The AI agents security risk guide covers why agents require a separate identity and access governance layer on top of the interaction controls described here. For organisations with agentic AI already in production, LangProtect Vector provides the runtime protection layer specifically designed for agent and MCP environments.

The NIST AI Risk Management Framework provides the governance backbone that ties all four steps together, mapping to both EU AI Act Article 9's risk management system requirement and DORA's continuous oversight obligation within a single framework.

What Do You Tell the Board in Three Weeks?

The board does not need a technical briefing. They need three numbers, one sentence, and a deadline.

The Three Numbers That Tell the Governance Story

- Total AI tools currently running: your discovery scan provides this. Present the full number, including shadow AI. Underreporting here creates a worse problem when the real number surfaces later.

- Percentage currently governed: meaning covered by interaction-level enforcement and logging. This is your current compliance posture expressed as a ratio. Most enterprises discover this number is below 30% on first assessment.

- Cost of the unaddressed gap: the $4.63M average breach cost plus the applicable regulatory penalty ceiling for your sector and jurisdiction. This converts a security problem into a financial exposure the board can evaluate against the cost of the governance investment.
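The arithmetic behind the second and third numbers is simple enough to sketch. The inputs below are illustrative: the tool counts echo the 47-versus-4 example from the introduction, the turnover figure and exchange rate are placeholders, and the €35 million / 7% pair is the ceiling quoted earlier in this guide. Substitute the penalty tier and figures that actually apply to your organisation.

```python
def governed_percentage(total_tools: int, governed_tools: int) -> float:
    """Share of discovered tools covered by enforcement and logging."""
    if total_tools <= 0:
        raise ValueError("total_tools must be positive")
    return 100.0 * governed_tools / total_tools


def penalty_ceiling(fixed_cap: float, turnover_share: float,
                    annual_turnover: float) -> float:
    """'Whichever is higher': the greater of a fixed cap and a share of turnover."""
    return max(fixed_cap, turnover_share * annual_turnover)


# Placeholder inputs; every value here is an assumption for illustration.
TOTAL_TOOLS = 47
GOVERNED_TOOLS = 4
ANNUAL_TURNOVER_EUR = 1_000_000_000
USD_TO_EUR = 1.0  # placeholder rate, replace with a current one
AVG_BREACH_COST_USD = 4_630_000

governed = governed_percentage(TOTAL_TOOLS, GOVERNED_TOOLS)
ceiling_eur = penalty_ceiling(35_000_000, 0.07, ANNUAL_TURNOVER_EUR)
gap_eur = AVG_BREACH_COST_USD * USD_TO_EUR + ceiling_eur

print(f"Tools running: {TOTAL_TOOLS}")
print(f"Governed: {governed:.1f}%")
print(f"Unaddressed gap (EUR): {gap_eur:,.0f}")
```

The breach figure is an industry average and the penalty is a ceiling rather than an expected fine, so the result is best presented to the board as an upper bound on exposure, not a forecast.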

The One Sentence They Need to Hear

*"We are deploying interaction-layer governance across all AI tools in the environment, sanctioned and unsanctioned, over the next 30 days. By the end of Q3, we will have a compliant AI inventory, audit-ready interaction logs, and technical enforcement against our EU AI Act and DORA obligations."*

This sentence works because it is specific, time-bounded, and maps directly to regulatory language the board's legal and compliance advisors will recognise. What the board actually wants, and what most CISO briefings fail to provide, is not an assessment of the problem. It is evidence that the person in the room has a plan that does not require them to make a decision they are not equipped to make.

AI Adoption Is Not Waiting for Your Governance Programme to Catch Up

The enterprises that will come out of 2026 in the strongest position are not the ones that adopted AI most aggressively; they are the ones that governed it most effectively while everyone else was still debating policy documents.

The discovery scan, the enforcement layer, the compliance evidence trail, and the board communication framework covered in this guide are not a 12-month transformation roadmap. They are the minimum viable governance posture for an organisation that already has AI running across its environment and a regulatory deadline on the calendar.

The window between where most enterprises are today and where EU AI Act and DORA enforcement will require them to be is narrowing faster than most internal governance programmes are moving.

The organisations that close that gap on their own terms, with a live inventory, technical enforcement, and audit-ready logs already in place, will spend August 2026 demonstrating compliance. The ones that do not will spend it explaining exposure.

Your AI adoption is already running. The only question left is whether it is running with governance or without it.

Ready to govern the AI adoption that's already happening in your organisation?

Get a complete shadow AI inventory, interaction-layer enforcement, and audit-ready compliance logs, deployed across your environment in days, not months.