What is Shadow AI and Why It's Costing Enterprises Millions

In enterprise security, the most dangerous breaches are not the ones that trigger alerts. They are the ones that do not.

As organisations accelerate AI adoption across every business function (finance, legal, HR, sales, operations), a structurally invisible risk category has emerged. Employees are submitting sensitive enterprise data to unsanctioned AI platforms at scale, generating no file transfers, no unusual login patterns, and no signals that conventional security infrastructure is designed to detect. The threat does not look like an intrusion. It looks like productivity.

According to IBM's 2025 Cost of a Data Breach Report, the first industry study to isolate AI-specific breach costs across 600 organisations globally, enterprises with high levels of shadow AI exposure incur an average breach cost of $4.63 million: a $670,000 premium over standard data breaches.

The driver of that premium is not the volume of data exposed. It is the detection gap.

Shadow AI breaches take an average of 247 days to identify, during which regulatory notification deadlines expire, data potentially enters third-party model training pipelines, and forensic reconstruction becomes progressively more expensive in the absence of prompt-layer audit logs.

To illustrate the scale of the exposure, consider what a standard enterprise morning looks like from two perspectives simultaneously:

SIEM Dashboard, Tuesday, 09:14

✓ No unusual authentication activity detected

✓ No anomalous data transfer volumes flagged

✓ No DLP policy violations triggered

✓ No endpoint security alerts generated

Status: Environment nominal

Actual activity, same 4-hour window:

→ HR Business Partner submitted 1,200 employee performance records to ChatGPT for review summary generation

→ Finance Director uploaded Q3 P&L to an AI writing platform for board report drafting

→ Legal Counsel submitted a confidential client NDA to a public LLM for contract redlining

→ Sales Lead exported full CRM database to an AI personalisation tool for outreach sequencing

Security alerts generated: 0

Audit log entries created: 0

This is not a hypothetical scenario constructed for illustrative effect. It is the operational reality documented across organisations in IBM's research, the 2026 CISO AI Risk Report, and Lenovo's April 2026 enterprise AI survey, in which 70% of enterprise employees reported using AI tools weekly, with up to one-third doing so outside any form of IT oversight.

The fundamental problem is architectural, not behavioural.

SIEM platforms, DLP systems, and endpoint monitoring tools were engineered for a threat model defined by file-based data transfers, credential-based intrusions, and software installation events.

Shadow AI operates across none of those surfaces. It operates through browser sessions, pasted text fields, and API connections to legitimate, trusted vendor domains, producing activity that is indistinguishable from normal enterprise productivity in every log that security teams currently monitor.

This analysis examines the full financial anatomy of a shadow AI breach: the direct costs, the regulatory penalties that compound on top, and the forensic consequences of operating without prompt-layer visibility.

It also addresses why conventional security architecture is structurally insufficient to detect this exposure class, and what control framework enterprises must implement before regulatory scrutiny makes the absence of audit evidence a liability in itself.

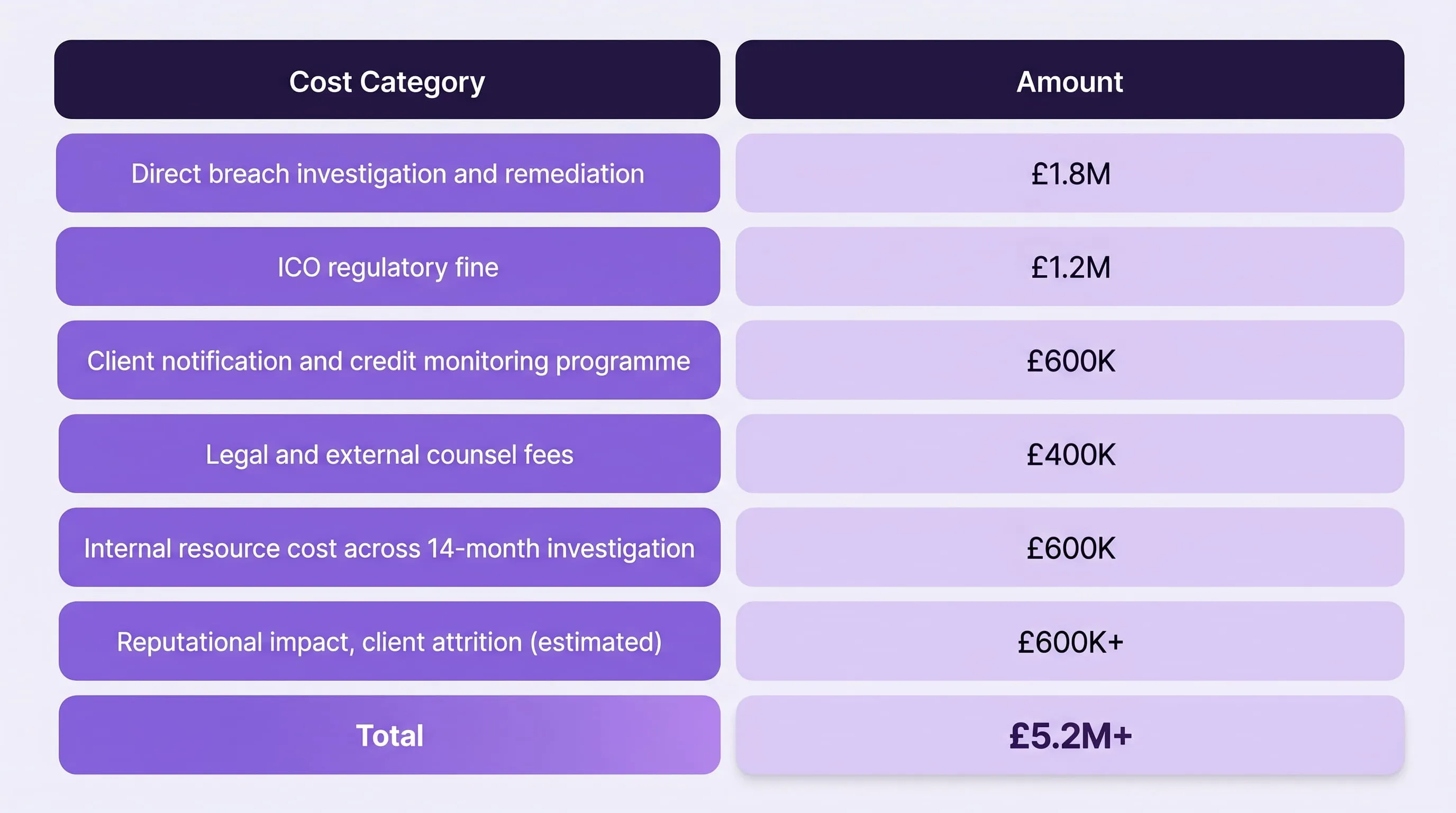

The Breach That Cost £5M and Generated Zero Alerts

Shadow AI breaches are expensive not because attackers are sophisticated, but because the breach happens in plain sight, through tools employees trust, on networks security teams monitor, and generates no signal that existing controls are designed to catch.

Most organisations find out about shadow AI exposure one of three ways: a third-party vendor notifies them of a breach, a regulator initiates an inquiry, or an employee self-reports after reading a news story about a similar incident. In none of these scenarios does the organisation's own security stack raise the first flag.

The following scenario is a composite based on documented breach patterns across regulated industries. It reflects how shadow AI incidents actually unfold, not how security teams assume they do.

A 14-Month Exposure with No Internal Detection

A UK-based financial services firm employs a compliance team responsible for processing client Know Your Customer (KYC) documentation. The approved workflow (document review, manual summarisation, compliance sign-off) is slow, and the backlog is persistent.

At some point in Q1, one analyst discovers that a publicly available AI summarisation tool processes KYC documents in seconds. The output quality is good. The tool is free. Nobody formally sanctions it. Nobody formally bans it. It occupies the grey zone that most enterprise AI governance policies leave unaddressed: the space between "approved" and "prohibited" where most shadow AI actually lives.

Over the following 14 months, the tool becomes standard practice across the compliance team. Client names, passport numbers, income verification records, and financial history are submitted regularly. The tool's interface looks professional. The domain passes every proxy filter. The activity is indistinguishable from legitimate browser-based productivity work. Then the tool's vendor suffers a breach.

What the Investigation Actually Found

When the vendor notifies the firm of the incident, the compliance and security teams face a problem that is more damaging than the breach itself: they cannot answer the questions that regulators will immediately ask.

- When was client data first submitted to this platform?

- How many client records were affected across the 14-month period?

- Was the data used in the vendor's model training pipeline?

- Can the firm demonstrate that the exposure is now contained?

The answer to every question is the same: we do not know. There are no prompt-layer logs. No DLP alerts were ever triggered because no file transfers occurred. No endpoint records exist because the tool required no installation. The firm cannot reconstruct the exposure timeline, cannot produce evidence of containment, and cannot demonstrate that it had any governance framework in place for AI tool usage.

Under Article 33 of the UK GDPR, the firm had 72 hours from becoming aware of the breach to notify the ICO. That obligation applies regardless of whether the firm can fully characterise the scope, and the inability to characterise scope is itself treated as evidence of inadequate controls.

Final cost breakdown:

None of this cost was driven by a sophisticated attacker. All of it was driven by a productivity tool that nobody thought to govern.

Why the Security Stack Missed It

This is the part most post-breach analyses understate. The firm's security infrastructure was not inadequate by conventional standards. SIEM was deployed and monitored. DLP policies covered email, file sharing, and removable media. Endpoint protection was current. Proxy filtering was active.

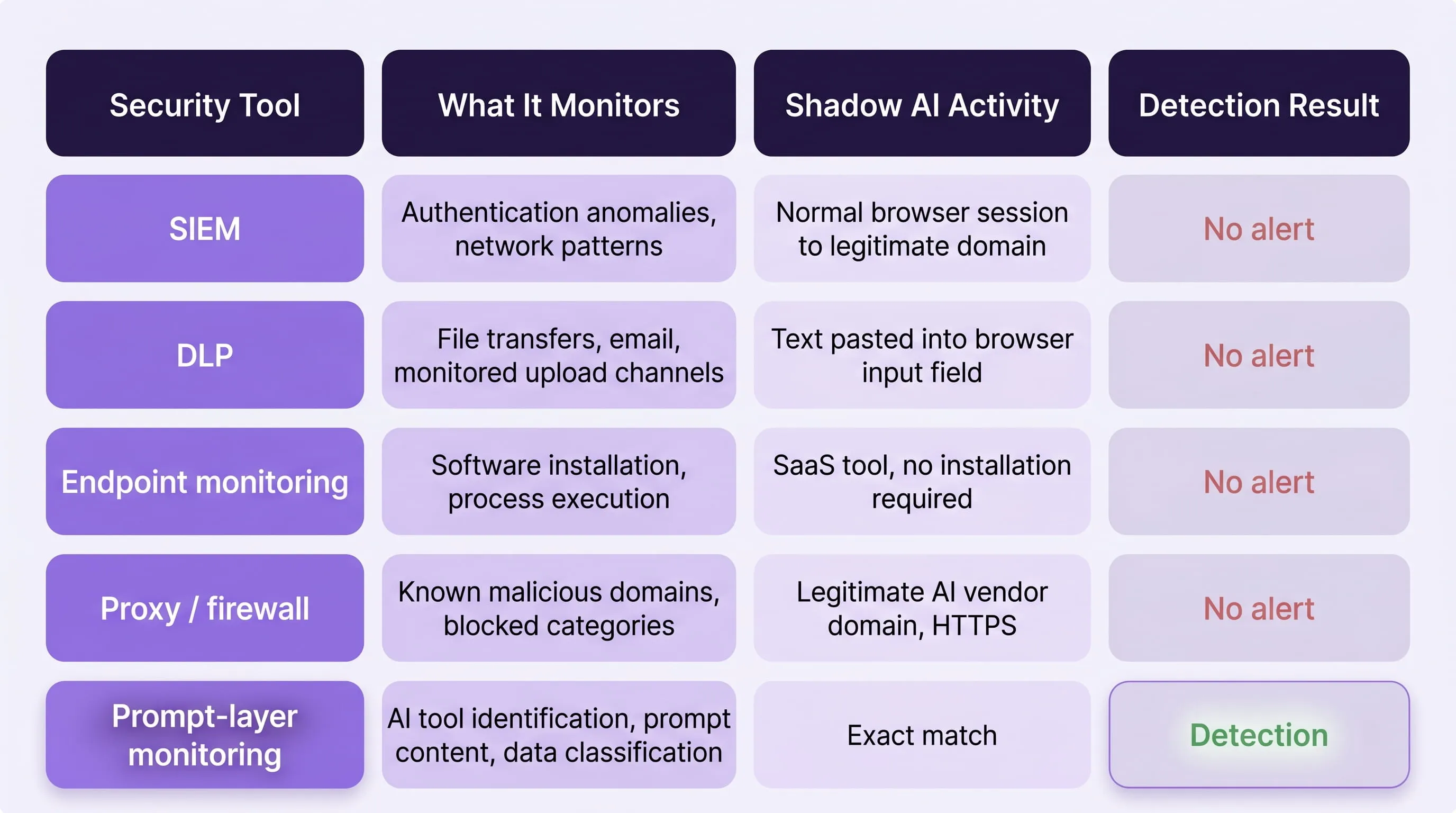

Every one of those controls failed to detect this exposure class because every one of them was designed to monitor surfaces that shadow AI does not use:

- SIEM monitors authentication anomalies and network traffic patterns. The analyst's session looked identical to any other browser-based workday. No anomaly, no alert.

- DLP inspects file transfers, email attachments, and content uploaded through monitored channels. Text pasted into a browser-based input field is architecturally invisible to standard DLP deployment. No inspection, no flag.

- Endpoint monitoring detects software installation events and process execution. The tool was SaaS, accessed entirely through a browser, requiring no installation. No footprint, no detection.

- Proxy filtering identifies connections to known malicious or prohibited domains. The vendor's domain was legitimate, HTTPS-encrypted, and not on any blocklist. No signal, no block.

The exposure was not hidden. It was simply operating on a surface that the entire security stack was not built to see.

The Regulatory Exposure That Compounds Every Day

The most important number in the breach timeline is not the fine. It is the 247 days.

IBM's 2025 research shows shadow AI breaches take an average of 247 days to identify. For a firm operating under GDPR, the EU AI Act, and FCA individual accountability obligations, each one of those days represents compounding liability:

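- Days 1–3 after awareness: the GDPR 72-hour notification window runs from the moment the firm becomes aware of the breach, not from the moment it can characterise it. A firm reconstructing scope without logs still has to notify within that window. Missing the deadline is a separate breach of its notification obligations, regardless of what the underlying incident cost.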

- Days 1–247: Data that was submitted to the vendor's platform may have entered model training cycles. Deletion requests, if made, apply to stored data only, not to model weights that have already been updated.

- From August 2, 2026: EU AI Act Article 12 mandates continuous logging of high-risk AI system activity. Organisations that cannot produce retrospective audit evidence for AI tool usage will face this obligation without the infrastructure to meet it.
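The interaction between the detection gap and the notification clock comes down to date arithmetic. The sketch below assumes a hypothetical first-exposure date and applies the 247-day average detection gap. It illustrates that the 72-hour Article 33 window only opens once the firm becomes aware, and by then the exposure has already been live for months. The dates and constant names are illustrative, not drawn from any real incident.

```python
from datetime import datetime, timedelta, timezone

DETECTION_GAP = timedelta(days=247)         # IBM 2025 average time to identify a shadow AI breach
NOTIFICATION_WINDOW = timedelta(hours=72)   # GDPR Article 33(1): notify within 72 hours of awareness


def notification_deadline(aware_at: datetime) -> datetime:
    """The 72-hour clock starts when the controller becomes aware, not at first exposure."""
    return aware_at + NOTIFICATION_WINDOW


# Hypothetical date on which client data was first pasted into the unsanctioned tool.
first_exposure = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
aware_at = first_exposure + DETECTION_GAP
deadline = notification_deadline(aware_at)

print("Exposure before awareness:", (aware_at - first_exposure).days, "days")
print("Aware at:", aware_at.isoformat())              # 2025-09-10T09:00:00+00:00
print("Notify regulator by:", deadline.isoformat())   # 2025-09-13T09:00:00+00:00
```

By the time the clock starts, the firm has roughly eight months of undocumented exposure to characterise in three days.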

The 247-day detection gap is not a number to cite in a board presentation. It is a description of how long your organisation's exposure compounds before anyone with the authority to act becomes aware of it.

Why a Shadow AI Breach Costs $670,000 More

The $670,000 premium that shadow AI breaches carry over standard enterprise breaches is not a rounding error or a statistical outlier. It is a structural cost driven by three compounding factors that conventional breach response processes are not equipped to handle:

- a detection gap that keeps exposure live for more than eight months
- a complete absence of the audit evidence that regulators require
- a data exposure profile concentrated in the highest-penalty categories under every major privacy framework

Understanding where the $4.63 million comes from, and what sits on top of it, is the difference between a security budget conversation and a board-level risk conversation.

Layer 1 - The Direct Breach Cost ($4.63M Average)

IBM's 2025 Cost of a Data Breach Report, the first study to isolate AI-specific breach data across 600 organisations globally, establishes the baseline. Organisations with high levels of shadow AI incur average breach costs of $4.63 million, compared to $3.96 million for standard breaches. The $670,000 premium is consistent across industries and geographies.
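The premium is the difference between the two IBM averages quoted above. Expressing it as a percentage of the standard-breach figure shows the size of the uplift. This is a minimal calculation using only those two cited figures, kept in integer dollars to avoid floating-point rounding.

```python
# Figures from IBM's 2025 Cost of a Data Breach Report, as cited above (USD).
HIGH_SHADOW_AI_COST = 4_630_000
STANDARD_COST = 3_960_000

premium = HIGH_SHADOW_AI_COST - STANDARD_COST
premium_pct = 100 * premium / STANDARD_COST

print(f"Shadow AI premium: ${premium:,}")        # Shadow AI premium: $670,000
print(f"Relative uplift: {premium_pct:.1f}%")    # Relative uplift: 16.9%
```

On these averages, a shadow AI breach costs roughly a sixth more than a standard one before any regulatory penalty is added.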

Three factors drive that premium directly:

- Detection delay

Shadow AI breaches take an average of 247 days to identify, six days longer than the 241-day average for standard breaches. While six days sounds marginal, the compounding effect of an additional six days of active, undetected exposure across a high-volume data environment is significant.

More importantly, the 247-day figure reflects the complete absence of prompt-layer telemetry: organisations are not detecting these breaches slowly; they are not detecting them at all until an external party notifies them.

- Absence of audit logs

When a standard breach occurs, forensic investigators reconstruct timelines from SIEM logs, DLP records, and endpoint telemetry. Shadow AI breaches generate none of these records at the point of exposure.

Forensic reconstruction from a zero baseline is three to five times more expensive than a standard breach investigation. In many cases full reconstruction is impossible, which regulators treat as evidence of sustained, uncontained exposure.

- Data exposure profile

IBM's research found that 65% of shadow AI breaches expose customer PII, the data category carrying the highest penalty exposure under GDPR, HIPAA, and state privacy laws. 40% expose intellectual property, the category most damaging to competitive position and most difficult to remediate once it has entered a third-party AI platform's data pipeline.

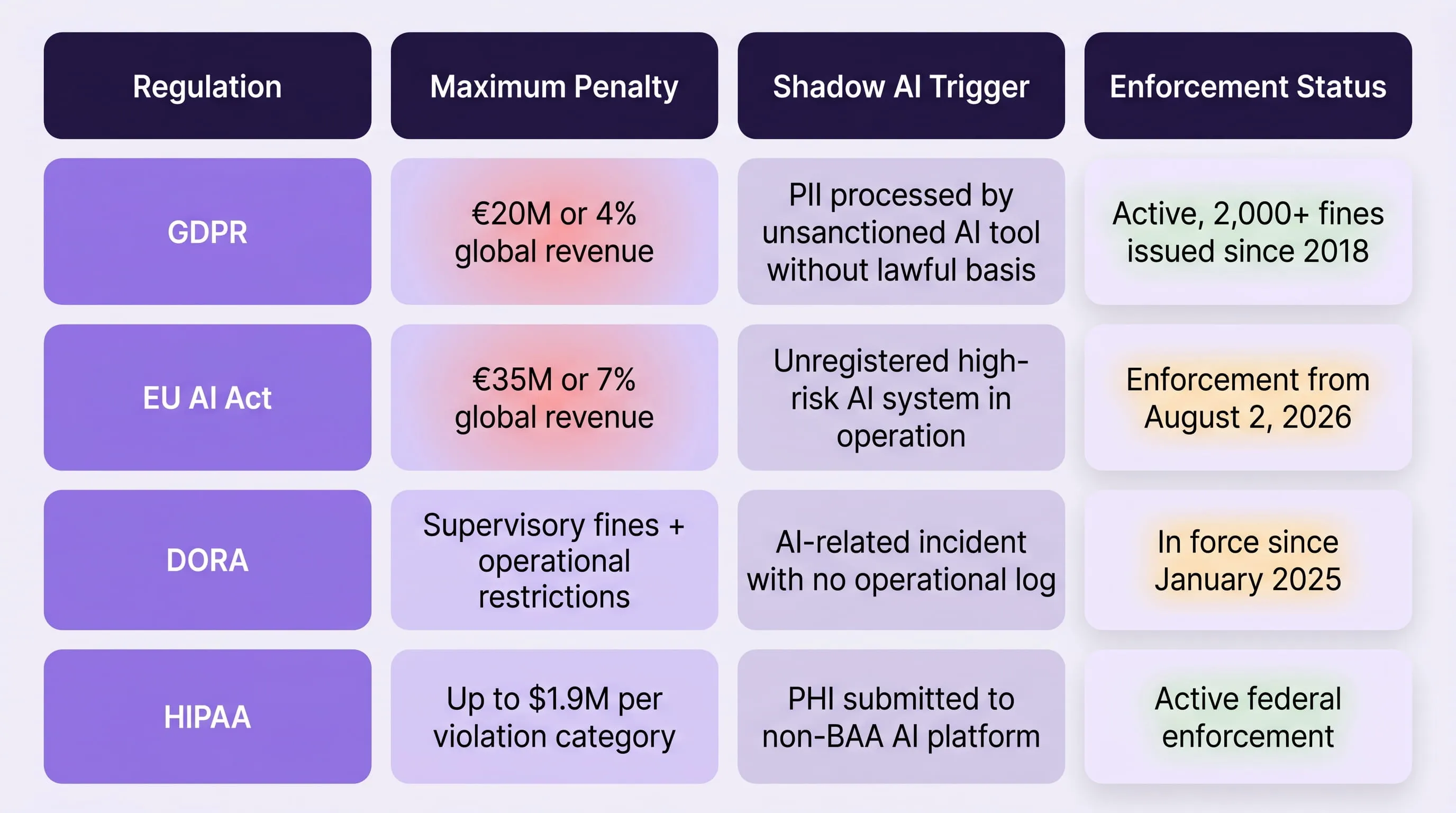

Layer 2 - Regulatory Penalties: The Number Most Boards Haven't Calculated

The $4.63 million direct cost is the floor, not the ceiling. For organisations operating in regulated industries or jurisdictions, the regulatory penalties that attach to a shadow AI breach, particularly where no audit logs exist, can exceed the direct breach cost itself.

The penalty exposure is not theoretical. It is defined, published, and actively enforced:

Two of these frameworks deserve specific attention for organisations evaluating their shadow AI exposure now.

The EU AI Act deadline is August 2, 2026, not a future planning horizon.

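From that date, the EU AI Act's obligations for high-risk AI systems apply. These include Article 12's requirement that such systems automatically record events (logs) over their lifetime. The Act's scope is not limited to EU-based organisations: it also covers non-EU providers and deployers whose AI output is used in the EU.

Organisations in scope that cannot demonstrate AI usage governance face penalties of up to €15 million or 3% of global annual turnover, whichever is higher, for breaching high-risk system obligations. The ceiling rises to €35 million or 7% where the use amounts to a prohibited practice.

For a mid-market enterprise with €500 million in annual revenue, that is up to €15 million for a high-risk obligation breach and up to €35 million for a prohibited practice. Shadow AI governance directly determines both liabilities.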
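Article 99 expresses each fine ceiling as a fixed amount or a share of worldwide annual turnover. For most undertakings the higher of the two applies; for SMEs and start-ups the lower applies. The sketch encodes two tiers as we read Article 99(3), (4) and (6), and the tier names are our own labels. Which tier a specific failure falls into is a legal question, so check the regulation text before relying on these numbers.

```python
# EU AI Act Article 99 fine ceilings: (fixed cap in EUR, percentage of total
# worldwide annual turnover for the preceding financial year).
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),   # Art. 99(3): breaches of Article 5
    "operator_obligation": (15_000_000, 3),   # Art. 99(4): e.g. provider/deployer duties for high-risk systems
}


def max_fine_eur(turnover_eur: int, tier: str, sme: bool = False) -> int:
    """Upper bound of the fine for one tier.

    Large undertakings: whichever is HIGHER of the fixed cap and the turnover share.
    SMEs and start-ups (Art. 99(6)): whichever is LOWER.
    """
    fixed_cap, pct = FINE_TIERS[tier]
    turnover_cap = turnover_eur * pct // 100
    return min(fixed_cap, turnover_cap) if sme else max(fixed_cap, turnover_cap)


turnover = 500_000_000  # the mid-market example above
for tier in FINE_TIERS:
    print(f"{tier}: up to €{max_fine_eur(turnover, tier):,}")
```

At €500 million the fixed cap and the turnover share coincide in both tiers. Above that, the percentage dominates: at €2 billion the prohibited-practice ceiling is €140 million.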

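Critically, the EU AI Act does not require a breach to have occurred for penalties to apply. Using an AI system for a high-risk purpose listed in Annex III without meeting the Act's obligations is itself a violation. Annex III includes purposes such as evaluating employee performance or assessing creditworthiness, and some of the tools employees currently use without approval may be used for exactly those purposes. The shadow AI in your environment may already constitute non-compliance, independent of whether any data has been exposed.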

DORA has applied since 17 January 2025, and most financial institutions are not ready.

For financial institutions operating under DORA's ICT risk management framework, ICT-related incidents, including those involving AI tools, must be logged and classified, and major incidents must be reported within defined timelines. Shadow AI activity, by definition, generates no operational logs.

A shadow AI incident in a DORA-regulated environment is therefore simultaneously an ICT security incident and a reporting failure, compounding the regulatory exposure on two axes.

To understand how shadow AI enters regulated financial environments and what governance frameworks currently fail to contain it, see our analysis of why AI adoption is outpacing enterprise security architecture.

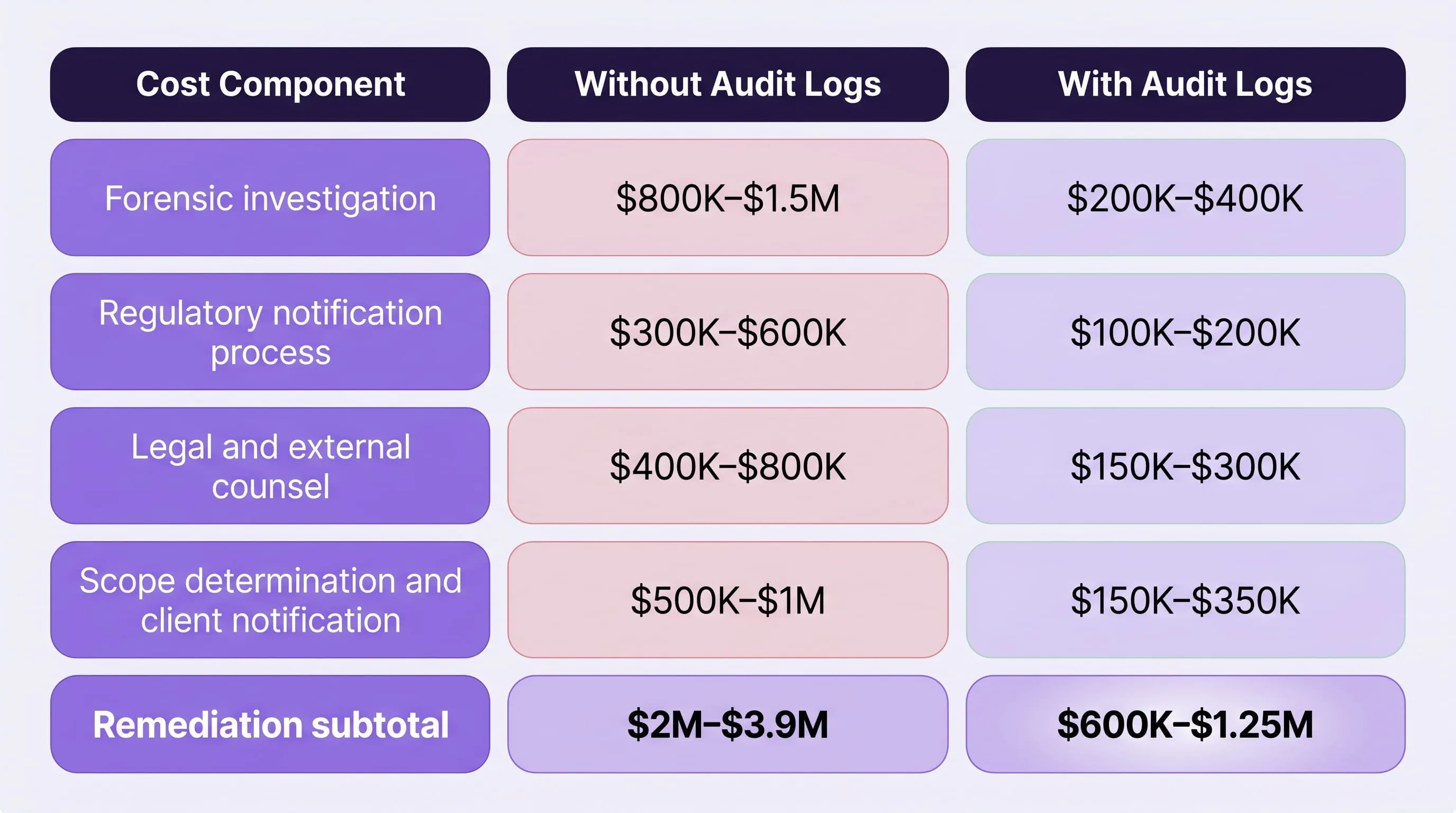

Layer 3 - Remediation Costs When There Are No Logs

Standard breach remediation follows a defined playbook: isolate the affected system, reconstruct the timeline from logs, notify affected parties, demonstrate containment to regulators. Each step depends on the existence of records that shadow AI breaches do not produce.

When forensic investigators arrive at a shadow AI incident with no prompt-layer logs, no DLP records, and no audit trail of AI tool usage, the remediation process changes fundamentally:

- Timeline reconstruction shifts from log analysis to employee interviews, browser history review, and vendor data requests, a process that is slower, less precise, and less credible to regulators.

- Scope determination (how many records were affected, across what time period) becomes an estimate rather than an evidence-based finding, which regulators treat with appropriate scepticism.

- Containment demonstration, the requirement to prove that exposure has ended, is impossible without records showing when it began.
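With a prompt-layer log, the regulator's first questions become queries rather than interviews. The sketch below assumes a hypothetical log schema (`PromptEvent`) and placeholder tool domains. It derives the exposure window, the number of submissions, the distinct client records affected, and the users involved for one tool.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class PromptEvent:
    """One prompt-layer log record (hypothetical schema)."""
    timestamp: datetime
    user: str
    tool: str
    record_ids: tuple[str, ...]   # client records referenced in the prompt


def exposure_summary(events: list[PromptEvent], tool: str) -> dict:
    """Answer the regulator's first questions about one tool directly from the log."""
    hits = sorted((e for e in events if e.tool == tool), key=lambda e: e.timestamp)
    if not hits:
        return {"tool": tool, "submissions": 0}
    return {
        "tool": tool,
        "first_submission": hits[0].timestamp,    # when did the exposure begin?
        "last_submission": hits[-1].timestamp,    # latest observed use (supports containment)
        "submissions": len(hits),
        "distinct_records": len({r for e in hits for r in e.record_ids}),  # scope
        "users": sorted({e.user for e in hits}),
    }


events = [
    PromptEvent(datetime(2025, 2, 3, 10, 5), "analyst1", "kyc-summariser.example", ("C-101", "C-102")),
    PromptEvent(datetime(2025, 2, 10, 14, 30), "analyst2", "kyc-summariser.example", ("C-102", "C-205")),
    PromptEvent(datetime(2025, 2, 11, 9, 0), "analyst1", "approved-assistant.example", ("C-300",)),
]
print(exposure_summary(events, "kyc-summariser.example"))
```

The same records answer timeline, scope, and containment together. That is why their absence multiplies remediation cost rather than merely adding to it.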

OWASP's framework for sensitive information disclosure in LLM applications classifies the absence of output and input logging as a primary risk amplifier in LLM deployments, not a secondary concern. The absence of logs does not reduce the cost of a breach. It multiplies it.

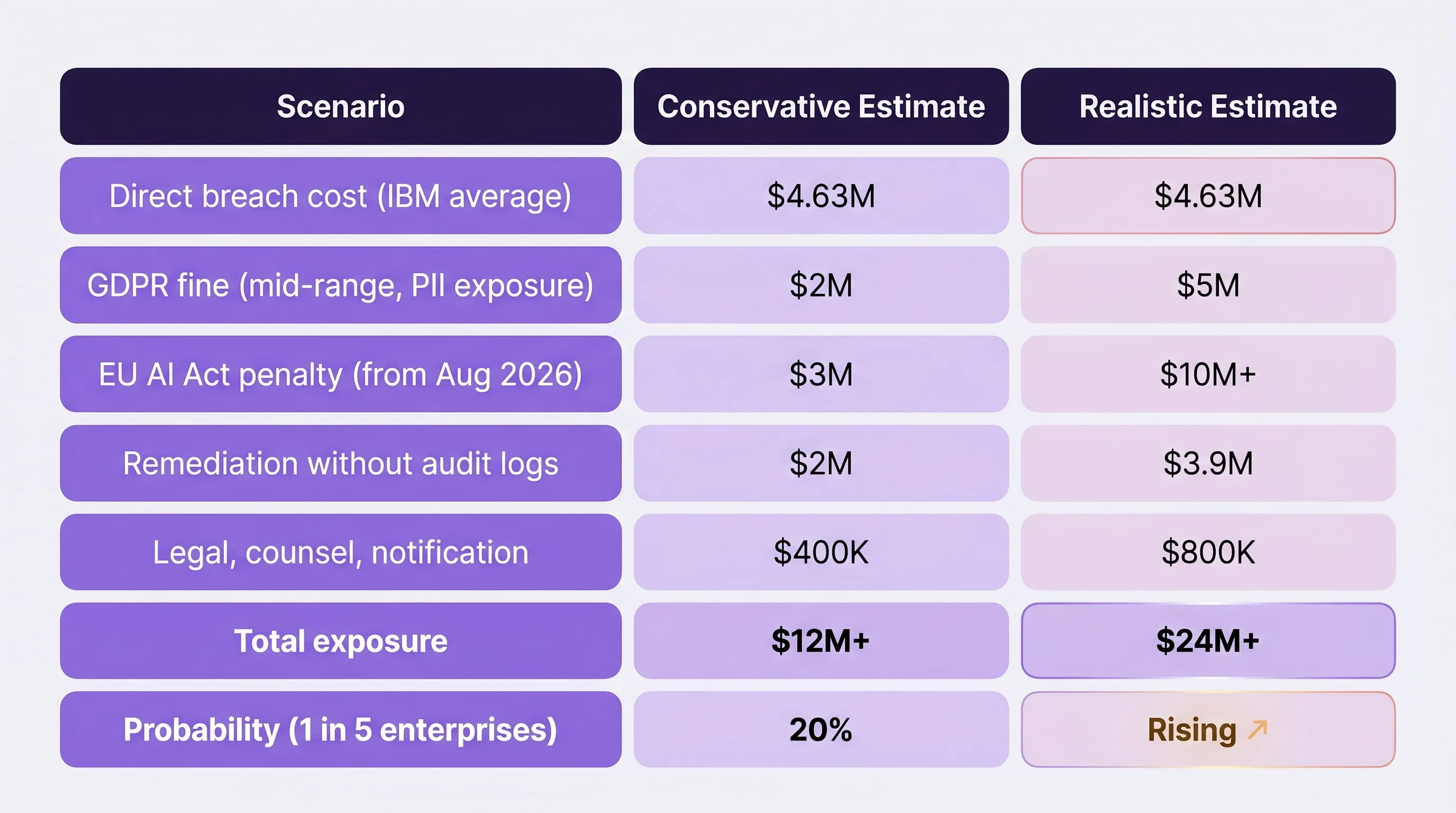

The compounding remediation math looks like this for a realistic mid-market scenario:

The difference between these two columns is not determined by the severity of the breach. It is determined entirely by whether prompt-layer logging was in place before the incident occurred.

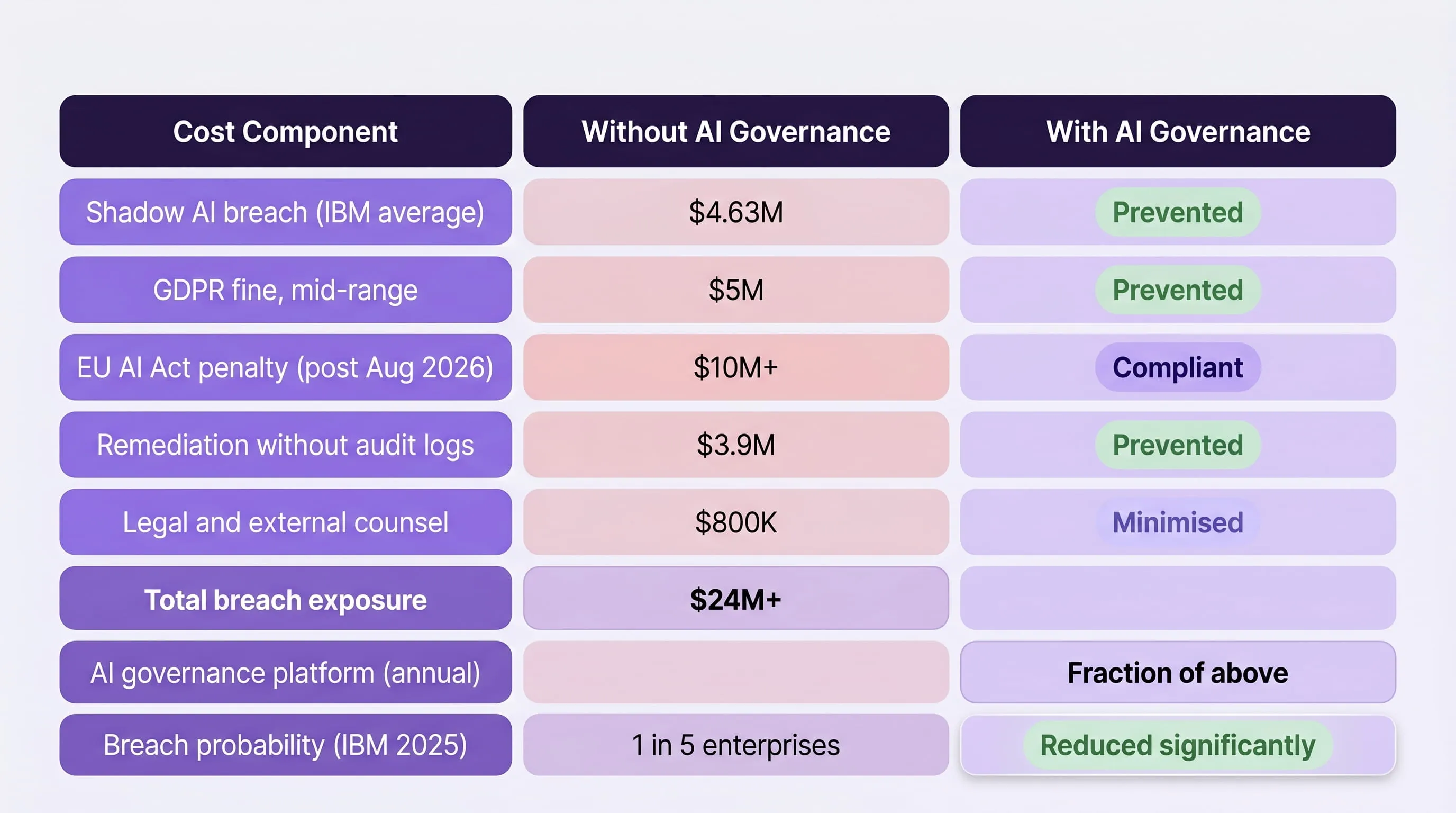

The Compounding Total - What the Board Should Be Looking At

When direct breach costs, regulatory penalties, and remediation are calculated together, the financial exposure profile of an unaddressed shadow AI environment looks like this:

The standard framing of shadow AI risk ("this might happen") understates the exposure. IBM's data establishes that 1 in 5 organisations has already experienced a shadow AI breach. Only 37% have policies to manage or detect shadow AI usage. Only 17% have technical controls that can prevent employees from uploading confidential data to public AI platforms.

This is not a risk category that improves passively over time. Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026. As adoption accelerates, the number of unsanctioned AI touchpoints in a typical enterprise environment will multiply. The probability of a shadow AI incident will rise with each new tool that employees adopt without security review.

A $4.63M Breach Starts With One Unsanctioned AI Tool

See exactly how LangProtect detects shadow AI data flows and stops them before they reach a regulator.

Why Your Security Stack Is Architecturally Blind to Shadow AI

Most organisations responding to shadow AI risk make the same assumption: that their existing security tools, properly configured and actively monitored, should be capable of detecting it. That assumption is wrong, and it is wrong for a specific architectural reason, not a configuration one.

SIEM platforms, DLP systems, endpoint monitoring tools, and proxy filters were engineered during a period when enterprise data exposure followed predictable patterns. Data left the organisation through email attachments, file transfers, removable media, or cloud storage uploads. Threats arrived through credential compromise, malicious software, or network intrusion. Security tooling was built to monitor those surfaces, and it does so effectively.

Shadow AI does not use any of those surfaces.

It uses browser sessions. Pasted text fields. API connections to legitimate vendor domains over encrypted HTTPS. Activity that is architecturally indistinguishable from standard enterprise productivity, because in most cases, it is standard enterprise productivity. The employee is not trying to exfiltrate data. They are trying to do their job faster. The data leaves anyway.

Understanding exactly where each tool fails, and why, is the prerequisite for building a control framework that actually addresses the exposure.

The Four Tools That Fail - and the Specific Reason Each One Misses Shadow AI

SIEM - Built for Anomaly Detection, Blind to Normal Behaviour

Security Information and Event Management platforms operate on a core principle: establish a baseline of normal activity, then alert on deviations. An employee submitting client data to an AI summarisation tool at 9 AM on a Tuesday generates no deviation from baseline. The authentication event is normal. The network destination is a legitimate, trusted domain. The session duration is consistent with standard working patterns. The data volume, text pasted into a browser field, registers as negligible compared to the file transfers and database queries that SIEM traffic analysis is calibrated to flag.

From the SIEM's perspective, nothing happened. From a data governance perspective, 14 months of client records just entered a third-party AI pipeline.
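The baseline-and-deviation principle can be shown with a simplified z-score check. This stands in for the much richer baselining real SIEM platforms perform. The hourly traffic figures are hypothetical and chosen only to illustrate the point: a few kilobytes of pasted client data disappear inside normal variance.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` if it sits more than `threshold` standard deviations from the baseline."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical baseline: one analyst's outbound HTTPS volume per hour, in KB.
baseline_kb = [820, 760, 905, 870, 790, 840, 880, 810]

# Tuesday 09:00-10:00: ordinary browsing plus roughly 6 KB of pasted KYC text in prompts.
observed_kb = 845 + 6
print(is_anomalous(baseline_kb, observed_kb))   # False: the prompt is lost in the noise
print(is_anomalous(baseline_kb, 50_000))        # True: a bulk transfer would stand out
```

The check works as designed. Sensitivity of content is simply not an input to a volume-based baseline.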

DLP - Designed for Files, Invisible to Prompts

Data Loss Prevention systems are built around a file-centric model of data transfer. They inspect email attachments, monitor file uploads to cloud storage, scan documents transferred via USB, and flag content matching defined sensitivity patterns when it moves through monitored channels.

Shadow AI does not move files. It moves text typed or pasted directly into browser-based input fields. That interaction occurs entirely within the browser session, outside the inspection surface that DLP systems monitor. OWASP's Top 10 for LLM Applications lists sensitive information disclosure (LLM02 in the 2025 edition) among the most critical LLM risks. Prompt inputs are a primary channel for that disclosure precisely because they bypass the file-based controls that most organisations rely on for data protection.

Standard DLP deployment does not see a prompt. It never will, unless it is specifically extended to operate at the AI interaction layer.
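Extending DLP to the AI interaction layer means running content detectors on the prompt text itself before it is sent. The sketch below is a minimal pattern-based classifier. The detectors (an email pattern, a simplified UK National Insurance number format that ignores the official prefix rules, and Luhn-checked card numbers) and the label names are illustrative assumptions, far narrower than a production classifier.

```python
import re

# Illustrative detectors only: production classifiers need far broader coverage
# (names, addresses, health terms, source code, deal names, and so on).
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")
# Simplified UK National Insurance number format; ignores the official prefix rules.
NI_NUMBER = re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b", re.IGNORECASE)
# Candidate card numbers: 13-19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to discard random digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:      # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def classify_prompt(text: str) -> set[str]:
    """Return the sensitive-data labels detected in a prompt before it is sent."""
    labels = set()
    if EMAIL.search(text):
        labels.add("email")
    if NI_NUMBER.search(text):
        labels.add("national_insurance_no")
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            labels.add("payment_card")
    return labels


prompt = "Summarise KYC for jane.doe@example.com, NI QQ 12 34 56 C, card 4111 1111 1111 1111"
print(sorted(classify_prompt(prompt)))  # ['email', 'national_insurance_no', 'payment_card']
```

The inspection target is a string in memory at the moment of submission, not a file in transit. That is the surface file-centric DLP does not cover.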

Endpoint Monitoring - Detects Installation, Misses SaaS

Endpoint Detection and Response tools identify threats by monitoring process execution, software installation events, and behavioural patterns on managed devices. They are highly effective at detecting malware, unauthorised application installation, and suspicious process activity.

Shadow AI tools are predominantly SaaS platforms, accessed entirely through a web browser, requiring no installation, creating no process footprint, and leaving no executable on the endpoint. From an EDR perspective, an employee accessing Claude, Gemini, or any of the thousands of AI-powered SaaS tools available today is indistinguishable from accessing any other website.

Proxy and Firewall Filtering - Only Blocks What It Knows Is Bad

Proxy filters and next-generation firewalls operate on domain reputation, threat intelligence feeds, and category-based blocking policies. They are effective at preventing access to known malicious infrastructure.

The AI platforms employees use for shadow AI are not malicious infrastructure. They are legitimate, well-resourced, security-conscious vendors with clean domain reputations and valid SSL certificates. No threat intelligence feed flags ChatGPT, Google Gemini, or Grammarly as a security risk. No category filter marks them as prohibited. The connection passes every check, because the tool itself is not the threat. The threat is what the employee submits to it.

The result is a complete visibility gap at the AI interaction layer:

What Monitoring at the AI Layer Actually Detects

The architectural gap is not addressable by reconfiguring existing tools. It requires a control operating at the surface where shadow AI activity actually occurs, the AI interaction layer itself.

Effective AI-layer monitoring operates across four dimensions that conventional tools cannot reach:

- Tool identification. Not just whether an employee accessed a domain, but which AI platform received the data, whether that platform is sanctioned, and whether it has a data processing agreement with your organisation. This distinction, between a tool appearing in a browser history and a tool being identified as an AI system receiving enterprise data, is what separates visibility from intelligence.

- Prompt content classification. Real-time classification of what data is being submitted in each interaction (PII patterns, financial data identifiers, health information markers, intellectual property signals) before that data leaves the enterprise environment. This is the capability that makes prevention possible rather than retrospective.

- Policy-based enforcement. The ability to apply graduated responses based on data classification and tool sanction status: warning employees on low-risk submissions, blocking high-risk data transfer in real time, and logging all activity regardless of outcome. Blocking alone is insufficient; as our analysis of why banning AI tools increases shadow AI risk demonstrates, restriction without governance pushes usage to surfaces with even less visibility.

- Tamper-proof audit logging. Every AI interaction is recorded with timestamp, user identity, tool identification, data classification result, and policy enforcement decision, producing the audit trail that GDPR Article 33, EU AI Act Article 12, and DORA's operational logging requirements demand, and that shadow AI breaches currently make impossible to produce.
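One common way to make an audit log tamper-evident is a hash chain: each entry stores the hash of the previous one, so editing any past entry breaks every link after it. The sketch below records the fields listed above in a hypothetical `AuditLog` class. A hash chain is tamper-evident rather than tamper-proof. Someone able to rewrite the whole chain could recompute it, so real systems typically anchor the latest hash externally, for example in write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes identically.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the previous one (hash chain)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, user: str, tool: str, classification: list[str], decision: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "tool": tool,
            "classification": sorted(classification),
            "decision": decision,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "0" * 64,
        }
        entry["hash"] = _digest(entry)  # hash covers every field above, including prev_hash
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; any edited entry breaks the chain."""
        prev = "0" * 64
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True


log = AuditLog()
log.append("analyst1", "kyc-summariser.example", ["national_insurance_no"], "block")
log.append("analyst2", "approved-assistant.example", [], "allow")
print(log.verify())                   # True
log.entries[0]["decision"] = "allow"  # simulate after-the-fact tampering
print(log.verify())                   # False
```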

For a detailed examination of how prompt-level monitoring prevents data leakage in real-time AI workflows, see How Real-Time Prompt Filtering Prevents Data Leaks.

The Scale of the Exposure

The architectural gap described above is not a theoretical future problem. It is actively producing exposure across enterprise environments at significant scale.

Exposure landscape that is actively expanding

- 70% of enterprise employees now use AI tools on a weekly basis, with up to one in three doing so outside any form of IT oversight, figures drawn from Lenovo's April 2026 survey of 6,000 enterprise employees across organisations with 1,000 or more staff.

- 75% of CISOs surveyed in the 2026 CISO AI Risk Report had already discovered unsanctioned AI tools running in their environments, with a further 16% indicating uncertainty, which in practice means the same problem undiscovered.

- 63% of organisations that experienced a breach either lacked an AI governance policy or were still developing one at the time of the incident, according to IBM's 2025 research.

These figures describe an exposure landscape that is actively expanding. Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026. As that projection materialises, the number of AI interaction surfaces across a typical enterprise environment will increase by an order of magnitude. Each one is a potential shadow AI touchpoint that conventional security infrastructure will not see.

The organisations that close this architectural gap before that expansion completes will do so with audit logs, governance evidence, and regulatory defensibility in place. The organisations that do not will discover the gap the way most organisations currently do, through an external notification, after 247 days.

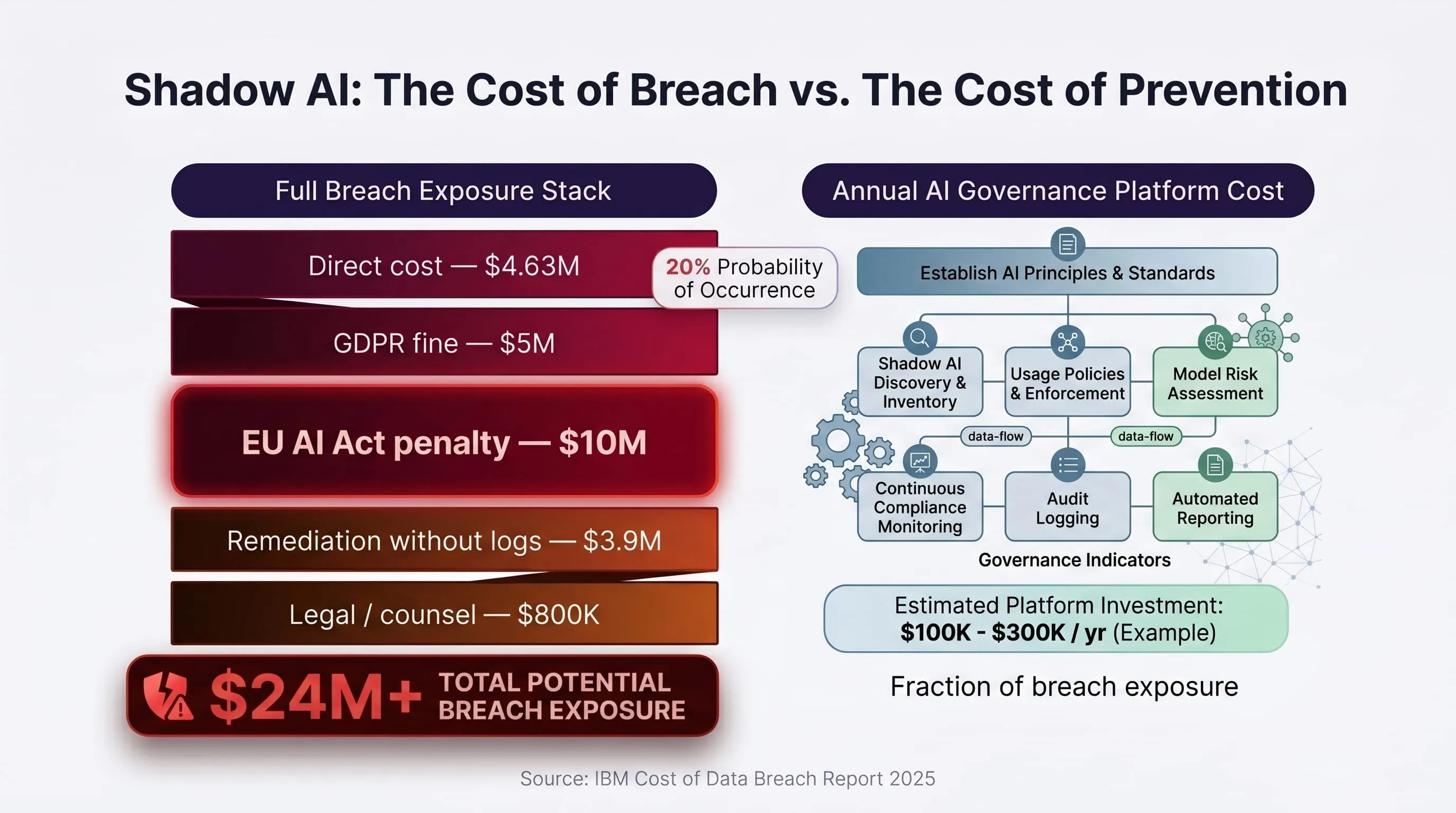

Prevention ROI - What It Costs to Govern Shadow AI vs. What It Costs to Breach

The business case for AI-layer security governance does not require sophisticated financial modelling. It requires one number from IBM's 2025 research, one probability figure, and an honest conversation about what your current stack can and cannot see.

The average shadow AI breach costs $4.63 million before regulatory penalties. One in five enterprises has already experienced one. The cost of preventing it is a fraction of that figure, and unlike breach costs, prevention costs are fixed, predictable, and entirely within your control.

The ROI Calculation Your CFO Actually Needs

Most security budget conversations fail because they present risk in abstract terms. Shadow AI risk is not abstract. It is quantified, documented, and actively compounding in environments that have not yet implemented AI-layer controls.

The comparison your board should be evaluating is not "should we invest in AI security", but "which of these two cost profiles is acceptable to us":

The Question to Put to Your CFO

"Would you accept a 20% probability of a $24 million liability, with no audit evidence and no insurance coverage, when the cost of eliminating that exposure is a fraction of a single incident?"

That is not a rhetorical question. It is the exact framing that moves shadow AI security from a security team concern to a board-level budget decision.
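The CFO question reduces to an expected-loss calculation: probability multiplied by impact. The sketch takes the 20% figure from IBM's one-in-five rate and the $24 million from the question above. Treating that rate as the probability for your planning period is an assumption, and the risk-reduction percentages are hypothetical inputs, not measured control effectiveness.

```python
def expected_loss(probability_pct: int, liability_usd: int) -> int:
    """Expected loss over the planning period: probability x impact (integer USD)."""
    return liability_usd * probability_pct // 100


def max_justified_spend(probability_pct: int, liability_usd: int, risk_reduction_pct: int) -> int:
    """Most a control is worth over the same period if it removes risk_reduction_pct of the risk."""
    return expected_loss(probability_pct, liability_usd) * risk_reduction_pct // 100


el = expected_loss(20, 24_000_000)
print(f"Expected loss: ${el:,}")   # Expected loss: $4,800,000
for reduction in (100, 80, 50):
    print(f"{reduction}% risk reduction justifies up to ${max_justified_spend(20, 24_000_000, reduction):,}")
```

Framed this way, the board compares a known control cost against a break-even figure rather than against an abstract risk.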

Why Governance Beats Blocking Every Time

Before examining how LangProtect addresses this exposure, one critical misconception needs to be addressed directly:

Blocking AI tools does not reduce shadow AI risk. It increases it.

When organisations prohibit access to AI platforms, employees do not stop using AI. They use:

- Personal devices, outside endpoint monitoring entirely

- Mobile browser sessions, invisible to proxy controls

- Less reputable AI tools, with weaker data handling and no enterprise data agreements

- Peer-sharing workarounds, where one unsanctioned tool is shared informally across a team

The result is not less shadow AI exposure. It is the same exposure, with less visibility and worse data handling on the receiving end.

This is a pattern we examine in detail in Why Banning ChatGPT Creates Shadow AI Risk. The evidence is consistent: restriction without governance produces more exposure, not less.

Effective prevention requires a fundamentally different approach, one that governs AI usage rather than attempting to prohibit it. That is the architectural position LangProtect is built on.

How LangProtect Closes the Shadow AI Security Gap

LangProtect is an enterprise AI security platform built specifically to address the exposure class that conventional security infrastructure cannot see: the AI interaction layer.

Where SIEM monitors network anomalies, DLP monitors file transfers, and endpoint tools monitor software installation, LangProtect monitors the surface where shadow AI actually operates: the prompt, the response, the tool, and the data flowing between them.

LangProtect's Three-Layer AI Security Platform

LangProtect addresses AI security exposure across three distinct surfaces, each one corresponding to a different way enterprises interact with AI:

LangProtect Guardia - Security for Employee AI Usage

What it addresses: The shadow AI exposure created when employees use GenAI tools, sanctioned or unsanctioned, to process enterprise data.

How it works:

- Continuous shadow AI discovery: identifies every AI tool in use across your environment in real time, including browser-based SaaS tools that leave no installation footprint. Not a quarterly audit, but persistent, live telemetry.

- Prompt-level data classification: classifies data being submitted in each AI interaction before it leaves the enterprise environment. PII patterns, financial data, health information, and intellectual property indicators are all identified at the prompt layer, before transmission.

- Policy-based enforcement: a graduated response framework that warns employees on low-risk submissions, blocks high-risk data transfer in real time, and allows governed usage to proceed uninterrupted. Employees continue working; risk is controlled at the interaction layer.

- Tamper-proof audit logging: every AI interaction is recorded with full context (timestamp, user identity, tool identification, data classification result, policy enforcement outcome). This automatically generates the audit trail that GDPR Article 33, EU AI Act Article 12, and DORA operational logging require.
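A graduated response can be expressed as a small decision function over two inputs: the classifier's labels and the tool's sanction status. The sketch below is a generic illustration, not LangProtect's implementation, whose internals this article does not describe. The label set, the decision table, and the `enforce` helper are hypothetical policy choices. Every interaction is logged, whatever the outcome.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"


# Example policy: which classifier labels count as high-risk is an organisational choice.
HIGH_RISK_LABELS = {"payment_card", "national_insurance_no", "health_record"}


def decide(labels: set[str], tool_sanctioned: bool) -> Action:
    """Graduated response based on data classification and tool sanction status."""
    high_risk = bool(labels & HIGH_RISK_LABELS)
    if not tool_sanctioned:
        return Action.BLOCK if high_risk else Action.WARN
    return Action.WARN if high_risk else Action.ALLOW


def enforce(user: str, tool: str, labels: set[str], tool_sanctioned: bool, log: list[dict]) -> Action:
    """Decide, then log the interaction regardless of outcome."""
    action = decide(labels, tool_sanctioned)
    log.append({"user": user, "tool": tool, "labels": sorted(labels), "action": action.value})
    return action


audit: list[dict] = []
print(enforce("analyst1", "kyc-summariser.example", {"national_insurance_no"}, False, audit))  # Action.BLOCK
print(enforce("analyst2", "approved-assistant.example", set(), True, audit))                   # Action.ALLOW
print(len(audit))  # 2: blocked and allowed interactions are both recorded
```

Keeping the decision separate from logging means the audit trail is complete even when the policy changes.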

Key outcome for CISOs:

- Shadow AI exposure identified and governed without restricting legitimate productivity

- GDPR, EU AI Act, and DORA audit evidence generated as a byproduct of normal operation

- The 247-day detection gap eliminated: exposure is identified at the moment of occurrence, not months later

See how Guardia protects enterprise data across employee AI interactions: LangProtect Shadow AI Detection →

LangProtect Armor - Runtime Security for AI Applications

What it addresses: The security exposure created by internally built or vendor-deployed AI applications processing sensitive enterprise data.

How it works:

- Real-time prompt inspection: inspects every prompt submitted to your AI applications for injection attempts, jailbreak patterns, and policy violations before the model processes it

- Output classification and filtering: classifies model outputs for sensitive data, harmful content, and policy violations before they reach the end user

- Runtime enforcement: blocks, rewrites, or flags policy-violating interactions in real time without degrading model performance

- Compliance evidence generation: produces the input/output logs that regulated industries require for AI application audit trails
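The "rewrite" option for output filtering can be sketched as pattern-based redaction applied to a model response before it is returned. This is a generic illustration under stated assumptions, not Armor's implementation. The two redaction rules and the placeholder tokens are hypothetical. The function returns the rewritten text plus a count per placeholder, which can feed the input/output log.

```python
import re

# Hypothetical redaction rules: (pattern, placeholder). A real deployment would reuse
# the same classifiers that inspect prompts.
REDACTIONS = [
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"), "[EMAIL]"),
    (re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b", re.IGNORECASE), "[NI_NUMBER]"),
]


def filter_output(response: str) -> tuple[str, dict[str, int]]:
    """Rewrite sensitive spans in a model response; return the text and what was redacted."""
    findings: dict[str, int] = {}
    for pattern, placeholder in REDACTIONS:
        response, count = pattern.subn(placeholder, response)
        if count:
            findings[placeholder] = count
    return response, findings


text, found = filter_output("The client is reachable at jane.doe@example.com; NI number QQ123456C.")
print(text)   # The client is reachable at [EMAIL]; NI number [NI_NUMBER].
print(found)  # {'[EMAIL]': 1, '[NI_NUMBER]': 1}
```

Rewriting preserves the useful part of the answer. Blocking is the fallback when the whole response is unsafe.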

Key outcome for CISOs:

- Prompt injection and jailbreak attempts blocked at the application layer

- Sensitive data prevented from appearing in model outputs

- Audit-ready evidence of AI application governance for regulatory review

LangProtect Vector - Security for AI Agents and MCP Connections

What it addresses: The emerging exposure created by autonomous AI agents and Model Context Protocol (MCP) connections that can access, process, and transmit enterprise data without direct human oversight.

How it works:

- Agent action monitoring: every action taken by AI agents operating in your environment is tracked, including tool calls, data retrievals, and external API connections

- MCP connection governance: MCP server connections are identified and governed, preventing agents from accessing data sources outside their defined operational scope

- Privilege drift detection: flags AI agents that accumulate or exercise permissions beyond their intended function, the agentic equivalent of privilege escalation

- Action-level audit logging: creates a complete, tamper-proof record of every agent action for forensic reconstruction and regulatory compliance
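A minimal Python sketch of action-level monitoring against a declared scope, assuming each agent action (tool call, MCP connection, data read) can be observed as a simple event. The agent name, the scope table, and the `mcp://` target strings are hypothetical. The sketch detects only permissions an agent has exercised beyond its scope; spotting permissions it has accumulated but not yet used would also require comparing granted credentials against the declared scope.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    kind: str    # hypothetical taxonomy: "tool_call", "mcp_connect", "data_read"
    target: str  # tool name, MCP server, or data source the action touches


# Hypothetical declared operational scope per agent.
DECLARED_SCOPE = {
    "invoice-bot": {"erp.read_invoices", "mcp://finance-docs"},
}


class AgentMonitor:
    def __init__(self, scopes: dict[str, set[str]]):
        self.scopes = scopes
        self.log: list[tuple[AgentAction, bool]] = []  # every action, in or out of scope
        self.out_of_scope: defaultdict[str, set[str]] = defaultdict(set)

    def record(self, action: AgentAction) -> bool:
        """Log the action; return True if its target is inside the agent's scope."""
        in_scope = action.target in self.scopes.get(action.agent_id, set())
        self.log.append((action, in_scope))
        if not in_scope:
            self.out_of_scope[action.agent_id].add(action.target)
        return in_scope

    def drift_report(self) -> dict[str, list[str]]:
        """Agents that have exercised access beyond their declared scope."""
        return {agent: sorted(targets) for agent, targets in self.out_of_scope.items()}
```

Here an out-of-scope action is recorded and reported rather than stopped; an enforcing deployment would refuse the call whenever `record` returns False.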

Key outcome for CISOs:

- Autonomous AI agents operating within defined access boundaries

- MCP connections governed and audited in real time

- Agent activity reconstructible from logs, which is critical for regulatory defensibility as EU AI Act enforcement expands to agentic systems

What LangProtect Specifically Provides That Your Current Stack Cannot

To make the architectural distinction concrete, this is the direct comparison between what conventional security tools provide and what LangProtect adds at the AI layer:

This table represents the architectural gap that produces the $670,000 shadow AI breach premium. Every cell in the LangProtect column that reads ✓ corresponds to a control that was absent in the UK financial services scenario described earlier, and absent in the environments of the 20% of organisations that have already experienced a shadow AI breach.

The 4-Step Control Framework LangProtect Implements

For security teams building the case for AI-layer security investment, the implementation framework maps directly to the four requirements that shadow AI breach post-mortems consistently identify as missing:

Step 1: Continuous Shadow AI Discovery

The gap it closes: Most organisations discover shadow AI tools during a breach investigation, not before one.

What LangProtect does:

- Provides persistent, real-time telemetry of every AI tool in use across the environment

- Identifies browser-based SaaS AI tools that leave no installation footprint

- Classifies discovered tools by sanction status, data handling policy, and risk profile

- Surfaces the complete AI tool inventory that EU AI Act compliance requires, automatically
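As a rough illustration of the classification step, the sketch below turns observed traffic into one inventory entry per AI tool, with a sanction status and a coarse risk rating. It assumes the observed domains come from some network or browser telemetry source. The register entries (which use reserved `.example` domains), the `retains_prompts` attribute, and the three-level risk rule are hypothetical placeholders rather than LangProtect's data model.

```python
from dataclasses import dataclass

# Hypothetical register of known AI tools (reserved example domains, not real services).
AI_TOOL_REGISTER = {
    "chat.llm.example": {"name": "Public chatbot", "sanctioned": False, "retains_prompts": True},
    "write.ai-tool.example": {"name": "AI writing tool", "sanctioned": False, "retains_prompts": False},
    "copilot.corp.example": {"name": "Internal assistant", "sanctioned": True, "retains_prompts": False},
}


@dataclass
class ToolRecord:
    domain: str
    name: str
    sanctioned: bool
    retains_prompts: bool
    hits: int = 0

    @property
    def risk(self) -> str:
        """Coarse, illustrative risk rule based on sanction status and data handling."""
        if self.sanctioned:
            return "low"
        return "high" if self.retains_prompts else "medium"


def build_inventory(observed_domains: list[str], register: dict) -> dict[str, ToolRecord]:
    """Aggregate observed requests into one inventory record per known AI tool."""
    inventory: dict[str, ToolRecord] = {}
    for domain in observed_domains:
        meta = register.get(domain)
        if meta is None:
            continue  # not a known AI tool; a fuller system might queue it for review
        record = inventory.get(domain)
        if record is None:
            record = inventory[domain] = ToolRecord(domain=domain, **meta)
        record.hits += 1
    return inventory
```

Re-running `build_inventory` over each new window of telemetry, rather than once a quarter, is what turns this from a snapshot into continuous discovery.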

Why it matters: A quarterly shadow IT audit produces a point-in-time snapshot. AI tool adoption moves weekly. Continuous discovery is the only approach that matches the pace of employee AI adoption.

Step 2: Prompt-Level Data Classification

The gap it closes: Conventional, file-based DLP cannot inspect text typed or pasted into browser fields. LangProtect operates at the layer DLP cannot reach.

What LangProtect does:

- Classifies prompt content against defined sensitivity policies before transmission

- Identifies PII, PHI, financial data, and IP indicators in real time

- Applies classification outcomes to enforcement decisions immediately, not retrospectively
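Prompt-level classification can be sketched as a function that labels prompt text before it is sent. The Python below is a minimal example built on stated assumptions: it detects email addresses as a stand-in for PII, uses a Luhn checksum to separate plausible payment card numbers from arbitrary digit runs, and matches a hypothetical keyword list for financial and IP indicators. Real classifiers combine many more detectors (and usually ML models), and the label names here are illustrative.

```python
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
# 13-19 digits, optionally separated by spaces or hyphens (card-number shape).
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
# Hypothetical keyword indicators per label.
KEYWORDS = {
    "financial": ("p&l", "ebitda", "revenue forecast"),
    "ip": ("trade secret", "confidential"),
}


def luhn_ok(digits: str) -> bool:
    """Luhn checksum: double every second digit counting from the right."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def classify_prompt(text: str) -> set[str]:
    """Return the sensitivity labels found in a prompt before transmission."""
    labels = set()
    if EMAIL.search(text):
        labels.add("pii")
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_ok(digits):
            labels.add("pci")
            break
    lowered = text.lower()
    for label, terms in KEYWORDS.items():
        if any(term in lowered for term in terms):
            labels.add(label)
    return labels
```

The Luhn check is a cheap way to cut false positives: a prompt containing the standard test number `4111 1111 1111 1111` is labelled `pci`, while the same digits with the last one changed are not.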

Why it matters: By the time a file-based DLP system detects sensitive data transfer, the data has already left. Prompt-level classification intercepts the exposure before it occurs.

Step 3: Policy-Based Enforcement

The gap it closes: Blocking AI tool access pushes usage underground. Graduated enforcement governs usage without destroying productivity.

What LangProtect does:

- Applies per-user, per-tool, per-data-type enforcement policies

- A graduated log → warn → block enforcement spectrum, selected by data sensitivity and tool sanction status

- Allows approved AI usage to proceed uninterrupted; only high-risk submissions are blocked

- Employee-facing notifications explain why an action was blocked, reducing friction and building awareness
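Graduated enforcement amounts to a lookup from (tool sanction status, data label) to an action, where the strictest applicable rule wins and per-user exceptions can replace the default for a given label. The sketch below assumes labels come from a prompt classifier like the one described in Step 2. The policy table, the override format, the label names, and the message wording are hypothetical examples, not LangProtect's policy syntax.

```python
from enum import IntEnum
from typing import Optional


class Action(IntEnum):
    # Ordered by severity so max() picks the strictest outcome.
    ALLOW = 0
    LOG = 1
    WARN = 2
    BLOCK = 3


# Hypothetical default policy: (tool status, data label) -> action.
POLICY = {
    ("sanctioned", "pii"): Action.LOG,
    ("sanctioned", "pci"): Action.WARN,
    ("unsanctioned", "financial"): Action.WARN,
    ("unsanctioned", "pii"): Action.BLOCK,
    ("unsanctioned", "pci"): Action.BLOCK,
}

MESSAGES = {
    Action.WARN: "This prompt appears to contain {labels} data. Confirm it is approved for this tool.",
    Action.BLOCK: "Blocked: {labels} data cannot be sent to an unapproved AI tool. Use an approved alternative.",
}


def decide(user: str, tool_sanctioned: bool, labels: set[str],
           overrides: Optional[dict] = None) -> Action:
    """Pick the strictest action across all labels; a per-user override replaces the default for its label."""
    status = "sanctioned" if tool_sanctioned else "unsanctioned"
    overrides = overrides or {}
    # Unsanctioned use is always at least logged, even with no sensitive data.
    decision = Action.ALLOW if tool_sanctioned else Action.LOG
    for label in labels:
        default = POLICY.get((status, label), Action.ALLOW)
        decision = max(decision, overrides.get((user, status, label), default))
    return decision


def user_message(decision: Action, labels: set[str]) -> Optional[str]:
    """Employee-facing explanation for warn/block outcomes (None otherwise)."""
    template = MESSAGES.get(decision)
    return template.format(labels=", ".join(sorted(labels))) if template else None
```

Ordering the actions as an `IntEnum` keeps the "strictest rule wins" logic to a single `max()`, and a clean prompt to a sanctioned tool resolves to `ALLOW`, so approved usage is not interrupted.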

Why it matters: Governance that employees understand and can work within produces compliance. Governance that simply blocks produces shadow AI on personal devices.

Step 4: Audit-Ready Logging

The gap it closes: Shadow AI breaches produce no audit evidence. Regulatory defensibility requires a complete interaction log that does not exist without purpose-built AI monitoring.

What LangProtect does:

- Logs every AI interaction with its timestamp, user identity, tool name, data classification result, policy decision, and outcome

- Produces tamper-proof audit trails formatted for GDPR Article 30, EU AI Act Article 12, DORA, and HIPAA requirements

- Enables forensic reconstruction of any AI interaction, the capability that transforms a $3.9M remediation into a $400K one
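One common way to make an interaction log tamper-evident is a hash chain: each entry stores the SHA-256 of its own contents, which include the previous entry's hash. Editing any record, or deleting one from the middle of the log, then breaks verification. The Python sketch below records the interaction fields listed above; the field names and the in-memory storage are assumptions. A hash chain on its own detects tampering rather than preventing it (and cannot detect removal of the newest entries), so deployments typically also anchor the latest hash externally or write entries to append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only interaction log where each entry commits to its predecessor."""

    def __init__(self):
        self.entries: list[dict] = []

    def append(self, user: str, tool: str, classification: set[str],
               decision: str, outcome: str, timestamp: Optional[str] = None) -> dict:
        record = {
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "user": user,
            "tool": tool,
            "classification": sorted(classification),
            "decision": decision,
            "outcome": outcome,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        record["hash"] = _digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the genesis value."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

During a forensic review, a passing `verify()` is what lets the log be presented as an unaltered record of who sent what to which tool, and what the policy did about it.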

Why it matters: The difference between the two remediation cost columns in the table above is entirely determined by whether this step was implemented before the incident occurred.

Shadow AI Is Already in Your Environment - The Only Question Is Whether You Can See It

The $4.63 million average cost of a shadow AI breach is not a projection. It is a measured outcome across 600 organisations that have already experienced what most enterprises are currently building toward: an AI adoption curve that has outpaced the security architecture designed to govern it.

The architectural gap is specific and well-defined. Employees are submitting sensitive enterprise data to AI platforms through browser sessions that SIEM cannot profile, text fields that DLP cannot inspect, and SaaS tools that endpoint monitoring cannot see. The exposure is happening during normal business hours, on managed devices, through legitimate vendor domains, and generating zero alerts in the process.

Three things determine whether your organisation pays the $4.63 million or prevents it:

- Whether you have visibility into which AI tools are operating in your environment right now, not from a quarterly audit, but continuously

- Whether you have controls operating at the AI interaction layer, not the network layer, not the file layer, but the prompt layer where the exposure actually occurs

- Whether you have logs: the audit evidence that transforms a $3.9 million forensic investigation into a $400,000 one, and that satisfies the regulatory obligations your organisation already carries

LangProtect provides all three, across employee AI usage through Guardia, AI applications through Armor, and autonomous AI agents through Vector. The platform closes the architectural gap that conventional security tools leave open, produces the regulatory audit evidence that the EU AI Act, GDPR, and DORA require, and does so without restricting the AI productivity your organisation has already built its workflows around.

The 247-day detection window is not a fixed feature of shadow AI risk. It is a consequence of the absence of AI-layer monitoring. With the right controls in place, that window closes to zero.

Your security stack cannot see the AI interaction layer. LangProtect can.

Stop shadow AI breaches before they become a $4.63M problem.

Frequently Asked Questions About Shadow AI Breaches

Q: What is the average cost of a shadow AI breach?

A shadow AI breach costs enterprises an average of $4.63 million, $670,000 more than a standard data breach, according to IBM's 2025 Cost of a Data Breach Report. That premium rises significantly when regulatory penalties under GDPR, EU AI Act, and DORA are added on top. Without prompt-layer audit logs in place, remediation costs alone can add another $2–4 million to that figure.

Q: Why can't my existing security tools detect shadow AI?

SIEM, DLP, and endpoint monitoring tools were built to detect file transfers, credential anomalies, and software installation events. Shadow AI operates through browser sessions and text pasted into web forms: surfaces these tools were never designed to inspect. LangProtect operates specifically at the AI interaction layer, classifying prompt content and identifying unsanctioned AI tools in real time, on the surface your current stack cannot see.

Q: How does LangProtect detect shadow AI in my environment?

LangProtect Guardia provides continuous, real-time telemetry of every AI tool in use across your environment, including browser-based SaaS platforms that leave no installation footprint. Unlike a quarterly audit that produces a point-in-time snapshot, Guardia monitors AI tool usage persistently, classifies the data being submitted at the prompt layer, and surfaces your complete AI tool inventory automatically. See how shadow AI detection works →

Q: Can my organisation be fined for shadow AI even if no data was confirmed stolen?

Yes. Under GDPR, a personal data breach covers any unauthorised disclosure of, or access to, personal data, not just confirmed theft. If an employee submits personal data to an unsanctioned AI platform without a lawful basis, that disclosure can constitute a personal data breach regardless of whether the data is later misused, and it must be notified to the supervisory authority within 72 hours of becoming aware of it unless it is unlikely to result in a risk to individuals' rights and freedoms. Because shadow AI environments typically lack audit logs, containment is impossible to prove, and regulators can treat that as evidence of sustained exposure.

Q: Does the EU AI Act apply to shadow AI tools employees use without approval?

Yes. Most of the EU AI Act's obligations apply from August 2, 2026, including automatic event logging for high-risk AI systems under Article 12. Meeting those obligations depends on knowing which AI systems are operating in your environment, and unsanctioned AI tools processing sensitive data sit outside any governed inventory. Non-compliance exposes organisations to penalties of up to €35 million or 7% of global annual turnover for the most serious violations, and up to €15 million or 3% for most other obligations, independent of whether a breach has occurred. LangProtect generates the AI inventory and audit evidence the EU AI Act requires as a byproduct of normal operation.

Q: How long does it take to detect a shadow AI breach without AI-layer monitoring?

Without prompt-layer visibility, the average shadow AI breach takes 247 days to detect, more than eight months, according to IBM's 2025 research. During that window, GDPR's 72-hour notification deadline expires, regulatory liability compounds daily, and data may enter third-party AI model training pipelines. LangProtect eliminates this detection gap by identifying exposure at the moment it occurs, not months later through an external notification.

Q: Is blocking AI tools an effective way to prevent shadow AI breaches?

No, and the evidence is consistent on this point. Blocking AI tools outright pushes employees toward unsanctioned alternatives accessed through personal devices and mobile browsers, outside any security monitoring. The result is the same data exposure with less visibility and weaker data handling on the receiving end. LangProtect's approach is governance, not restriction: approved AI usage proceeds uninterrupted while classification and enforcement happen at the prompt layer, where the actual risk occurs.

Q: What audit evidence does LangProtect produce for regulatory compliance?

LangProtect generates a tamper-proof audit log of every AI interaction, capturing timestamp, user identity, tool identification, data classification result, and policy enforcement outcome. This evidence satisfies the logging and monitoring requirements of GDPR Article 30, EU AI Act Article 12, DORA operational resilience obligations, and HIPAA audit controls, all produced automatically, without manual compilation. For organisations facing a regulatory inquiry, this is the difference between demonstrating governance and being unable to account for 14 months of AI tool usage.
