Enterprise AI Adoption Barriers: Why Security Stalls the Rollout

In most enterprises right now, there are two conversations about AI happening simultaneously, and neither side knows exactly what the other is afraid of. In the boardroom, AI is a competitive obligation. Rivals are shipping AI-assisted products, compressing timelines, and reallocating headcount. Every quarter without a formal AI strategy is a quarter the gap compounds.

One floor down, in the security and compliance function, the picture looks different. Vendor agreements are unsigned. Data handling terms are unclear. Compliance obligations under the EU AI Act and DORA are still being interpreted by legal teams who received the frameworks months after the business had already started asking for tool approvals.

The result is a deadlock that neither side is wrong to hold. Boards are not being reckless when they push for speed, and security teams are not being obstructionist when they push back. The problem is structural: enterprise security processes were designed for a world where software moved slowly enough that a 90-day review was a reasonable safeguard.

That world no longer exists. Employees are not waiting for the formal decision to be made. The Stanford AI Index 2025 reports that 78% of organisations were already using AI in 2024, and much of that use runs through tools that were never reviewed, approved, or logged by anyone in IT or security.

This post examines the four security barriers that most commonly stall formal enterprise AI adoption, explains why each one is a legitimate concern rather than simple obstruction, and sets out what a structured path forward actually requires, so the answer to the board stops being "not yet" and starts being "yes, with these controls in place."

Why Does AI Adoption Outpace Enterprise Security Reviews?

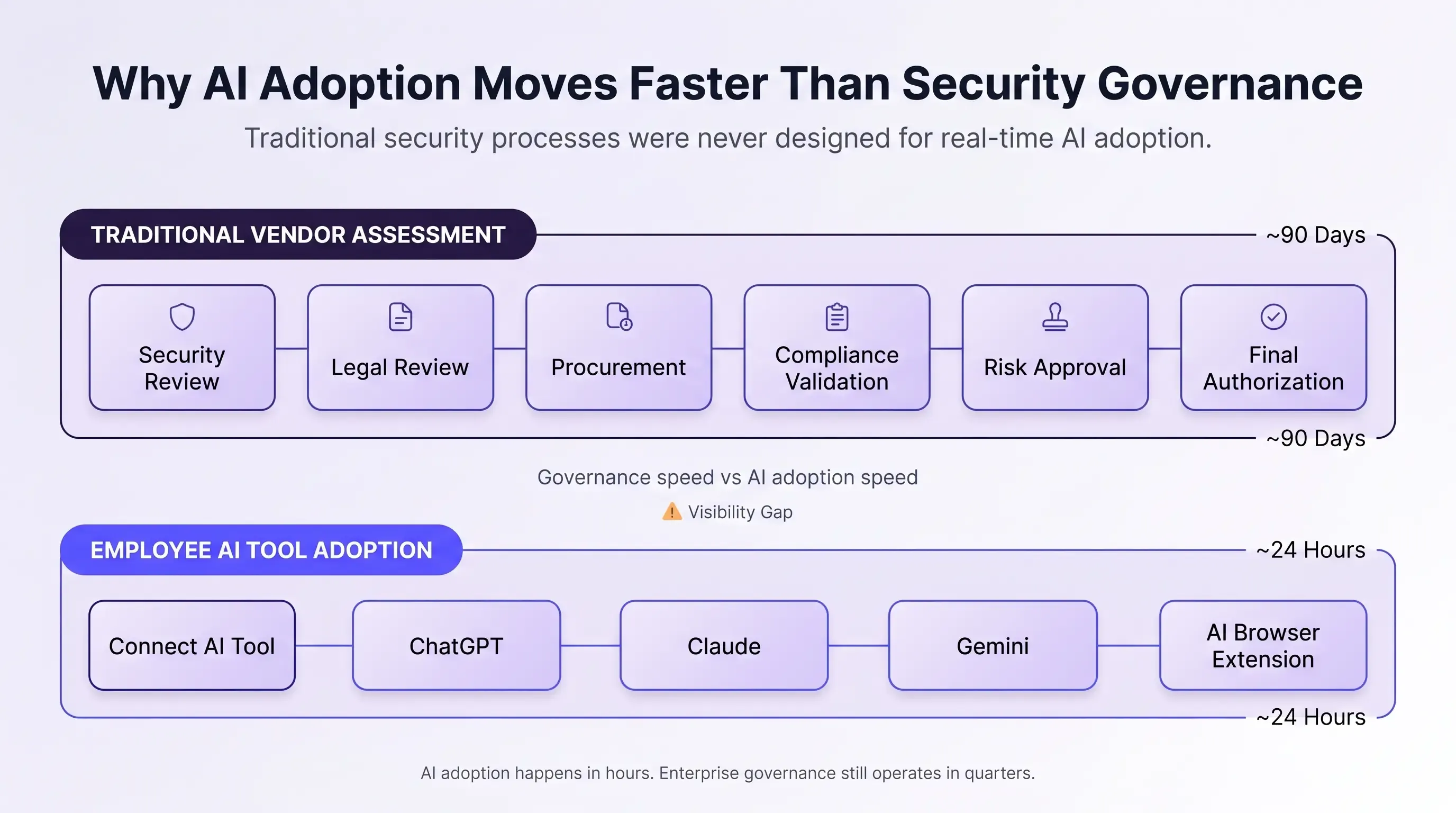

Enterprise security teams take 60 to 90 days to complete a vendor risk assessment. Employees discover, download, and start using AI tools in under 24 hours. That gap is not a process failure or a staffing problem; it is a structural mismatch between a security framework built for quarterly software release cycles and a category of technology that ships model updates weekly.

The traditional software procurement timeline was designed as a security control. Each stage (vendor security review, legal DPA negotiation, IT provisioning, compliance sign-off) existed to ensure that no tool touched company data before it had been evaluated.

That sequence worked because software moved slowly enough for the process to keep pace. AI adoption has inverted that relationship entirely, and most enterprise security architectures have not been updated to reflect it.

The Real Scale of the Problem

The volume of AI tools now available makes the traditional review process impossible to apply uniformly. Bessemer Venture Partners identified over 10,000 AI tools in active enterprise use in 2026. No security team has the capacity to run a full vendor assessment on each one before an employee installs it. The bottleneck is not resources; it is the architecture of the process itself.

What this looks like in practice

A product manager at a 2,000-person B2B software company discovers an AI meeting summarizer on a Monday. By the following Wednesday, it has automatically transcribed and processed 40 internal meetings, containing unreleased product roadmap details, client names, and Q3 revenue projections.

None of it sits in the vendor inventory. None of it has a signed DPA. The security team learns about the tool six weeks later during a routine software audit.

The data has already been processed and retained, and it is governed by terms no one in the organisation has reviewed.

This scenario is not unusual. It is the default outcome when the approval process moves slower than the tools it is supposed to govern.

What Should Replace the Vendor Approval Process for AI?

The answer is not a faster version of the same 90-day review; it is a different control mechanism entirely. Continuous AI discovery identifies tools in real time as they appear in the environment, rather than catching them weeks after data has already moved.

This shifts the security posture from reactive audit to active governance, and it produces the tool inventory that every subsequent control (DPA review, compliance mapping, prompt monitoring) depends on.
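To make the idea concrete, here is a minimal sketch of discovery as a comparison between observed traffic and the approved inventory. Everything named in it is an assumption for illustration: the `EgressEvent` shape, the `.example` domains, and the two domain sets stand in for whatever proxy logs and inventory your environment actually has.

```python
from dataclasses import dataclass

# Hypothetical inventory of approved AI tool domains (placeholder names).
APPROVED_AI_DOMAINS = {"assistant.approved.example"}

# Hypothetical watchlist of domains known to host AI tools.
KNOWN_AI_DOMAINS = {
    "assistant.approved.example",
    "summarizer.example",
    "codegen.example",
}


@dataclass(frozen=True)
class EgressEvent:
    """One outbound request seen in a proxy or DNS log (assumed shape)."""
    user: str
    domain: str


def discover_unapproved(events):
    """Map each unapproved AI domain to the sorted list of users seen on it."""
    findings = {}
    for event in events:
        is_ai_tool = event.domain in KNOWN_AI_DOMAINS
        if is_ai_tool and event.domain not in APPROVED_AI_DOMAINS:
            findings.setdefault(event.domain, set()).add(event.user)
    return {domain: sorted(users) for domain, users in findings.items()}


events = [
    EgressEvent("pm-1", "summarizer.example"),
    EgressEvent("dev-7", "assistant.approved.example"),
    EgressEvent("pm-2", "summarizer.example"),
    EgressEvent("dev-7", "intranet.example"),
]
print(discover_unapproved(events))
```

Run on a schedule against fresh logs, a check like this surfaces the meeting summarizer on the day it first appears rather than six weeks later. A real deployment would also need a way to keep the watchlist current, which this sketch does not attempt.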

According to Gartner's September 2025 Innovation Insight on AI Usage Controls, existing enterprise security controls are "generally not optimised for specific AI threats or controls." That assessment applies directly here: the vendor assessment framework was not designed to evaluate AI-specific risks such as prompt data retention, model training opt-ins, or output behaviour changes after model updates. Even when reviews do happen, the frameworks being applied were built for a different category of software.

The NIST AI Risk Management Framework addresses this directly: its Govern function establishes that AI-specific governance structures must be in place before deployment begins, not retrofitted after tools are already live. That principle is the foundation of a security posture that can actually keep pace with AI adoption speed.

The practical first step for security teams:

- Deploy continuous AI discovery before the next tool request arrives to find what is already running, not just what has been formally requested

- Tier all AI tools by data sensitivity on discovery, not after a full assessment: tools that process no regulated data get a fast-track review; tools that process PII, PHI, or financial records get the full process

- Set a maximum review window per tier (five business days for low-sensitivity tools, 30 days for regulated data tools) so that "under review" has a defined end date the business can plan around
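The tiering and review windows above can be sketched in a few lines. This is an illustration under stated assumptions, not a prescribed implementation: the function names and data-type labels are hypothetical, the 30-day window is treated as calendar days, and public holidays are ignored.

```python
from datetime import date, timedelta

# Data types that push a tool into the full-review tier (labels are assumptions).
REGULATED_DATA = {"PII", "PHI", "financial"}


def tier_for(data_types):
    """Assign a tier on discovery, based only on the data the tool touches."""
    return "regulated" if REGULATED_DATA & set(data_types) else "low"


def add_business_days(start, days):
    """Step forward day by day, counting Monday to Friday only (no holidays)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


def review_deadline(discovered_on, data_types):
    """Return (tier, latest date by which the review must conclude)."""
    tier = tier_for(data_types)
    if tier == "low":
        return tier, add_business_days(discovered_on, 5)
    # Assumption: the 30-day window is counted in calendar days.
    return tier, discovered_on + timedelta(days=30)


# A tool discovered on Monday 2 March 2026:
print(review_deadline(date(2026, 3, 2), []))       # low tier, due 9 March 2026
print(review_deadline(date(2026, 3, 2), ["PII"]))  # regulated tier, due 1 April 2026
```

The point of computing the date at discovery is that the business gets an end date immediately, before any assessment work has started.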

The goal is not to eliminate security review. It is to make the review process fast enough to be the first thing that happens, rather than the sixth thing employees find out about after they have already adopted the tool on their own.

Why Do Boards and CISOs Disagree on AI Adoption?

Boards and CISOs disagree on AI adoption not because one side is wrong, but because each is working with a different definition of risk. The board measures the cost of not adopting, the CISO measures the cost of adopting without controls, and neither calculation is incorrect.

From the board's perspective, AI is a competitive obligation, not an optional investment. Rivals are compressing product timelines, reducing operational costs, and building AI-assisted workflows at a pace where quarterly delays translate directly into market share.

When a board pushes for faster AI adoption, it is not dismissing security risk; it is pricing the risk of inaction and finding it higher than the risk of moving forward.

The CISO's calculation is equally grounded. Unreviewed AI adoption means unsigned vendor agreements, unmonitored data flows, and no audit trail when something goes wrong. When a breach does occur under those conditions, the security team absorbs the accountability for a decision it was never given the authority to shape. Board pressure to move faster, in that context, is not support; it is exposure without authority.

Both positions are accurate. Neither is complete without a shared framework that defines what approval actually means.

The cost of getting this wrong

A CISO at a 2,500-person financial services firm blocks an AI contract review tool for two quarters. The security concern is legitimate: the vendor assessment is incomplete.

During those six months, 11 lawyers in the legal department find a free consumer version of a competing product and start using it immediately. They submit client contracts, NDAs, and live deal terms — no DPA, no audit trail, no IT visibility. The blocked enterprise tool had a signed BAA and full GDPR-compliant data handling. The consumer version the lawyers chose had neither.

Six months of careful security review produced a materially worse outcome than a 48-hour conditional approval would have. The delay did not prevent the risk. It just moved it somewhere no one could see it.

What Should an Enterprise AI Approval Standard Include?

The deadlock breaks when "not yet" becomes a defined threshold rather than an indefinite position. A CISO who can say "yes, when these five criteria are met" is not blocking adoption — they are governing it, on record, with accountability on both sides.

Those five criteria need to be specific enough that both the security team and the business can verify them without interpretation:

- Vendor data handling confirmed — retention period, training data opt-out, and sub-processor list documented in a signed DPA before any employee accesses the tool

- Prompt-level controls active — sensitive data classified and filtered at the point of input, before it reaches the model's servers

- Compliance obligations mapped — the tool's use case assessed against EU AI Act Annex III, DORA ICT risk requirements, and GDPR data processing obligations relevant to the organisation's sector

- Audit logging live — every AI interaction producing a tamper-evident record that satisfies regulatory evidence requirements

- Re-assessment date set — a defined 90-day review window post-deployment, triggered automatically when the vendor announces a model update

When these criteria are documented and met, the answer is yes. When they are not, the answer is "not yet, and here is exactly what changes that." That distinction turns a recurring argument into a resolved process.
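As a rough sketch of how that yes / not yet distinction could be made mechanical, the five criteria can be recorded as a checklist whose unmet items become the "here is exactly what changes that" part of the answer. The class and field names are hypothetical, chosen only to mirror the list above.

```python
from dataclasses import dataclass, fields


@dataclass
class ApprovalChecklist:
    """The five criteria from this post, one flag each (names are assumptions)."""
    vendor_dpa_signed: bool = False
    prompt_controls_active: bool = False
    compliance_mapped: bool = False
    audit_logging_live: bool = False
    reassessment_date_set: bool = False


def decide(checklist):
    """Return ("yes", []) when all criteria are met, else ("not yet", unmet)."""
    missing = [f.name for f in fields(checklist) if not getattr(checklist, f.name)]
    if not missing:
        return "yes", []
    return "not yet", missing


request = ApprovalChecklist(vendor_dpa_signed=True, compliance_mapped=True)
decision, missing = decide(request)
print(decision, missing)
```

Because the unmet criteria come back by name, the business sees the remaining work rather than an open-ended "under review".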

How Do CISOs Enable AI Adoption Without Losing Accountability?

Forrester's 2026 research found that CISOs must shift from "technology gatekeeper to strategic enabler of AI adoption", and that shift is as much about positioning as it is about process.

The CISO who builds the AI approval framework owns the company's AI strategy. Every tool that goes live does so on documented terms the security team defined. The CISO who only says no owns nothing except the blame when employees adopt tools informally — which, as the scenario above shows, they will.

A written approval standard, consistently applied across every tool request, is the mechanism that makes that shift possible. It gives the board a process it can trust and gives the security team a defensible record regardless of outcome.

LangProtect's enterprise AI governance framework operationalises those five criteria across every tool request, producing a documented approval trail without a manual assessment every time a new request lands on the security team's desk.

Which Compliance Laws Actually Apply to Enterprise AI Tools?

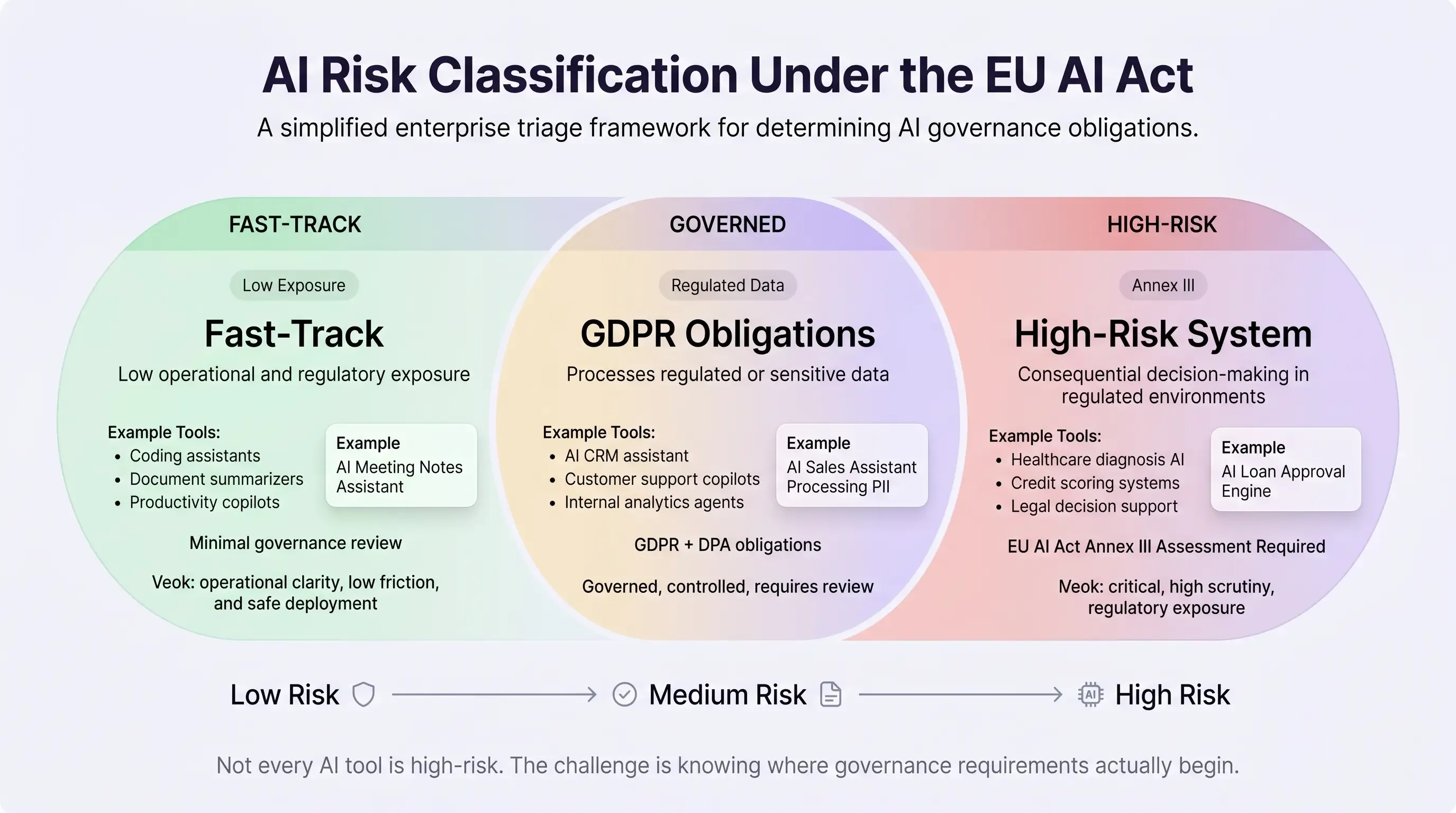

Most enterprise AI deployments do not trigger the high-risk compliance obligations that legal and security teams are most afraid of, but without a structured method for determining which regulation applies to which tool, every deployment looks equally risky, and the safest decision keeps being no decision at all.

This is compliance paralysis. It is not irrational. The EU AI Act, DORA, and GDPR all intersect on AI, all carry significant penalties, and all arrived as frameworks that legal teams are still interpreting while the business is already asking for tool approvals. The paralysis is not caused by the regulations themselves; it is caused by the absence of a triage method that separates tools requiring full regulatory treatment from tools that do not.

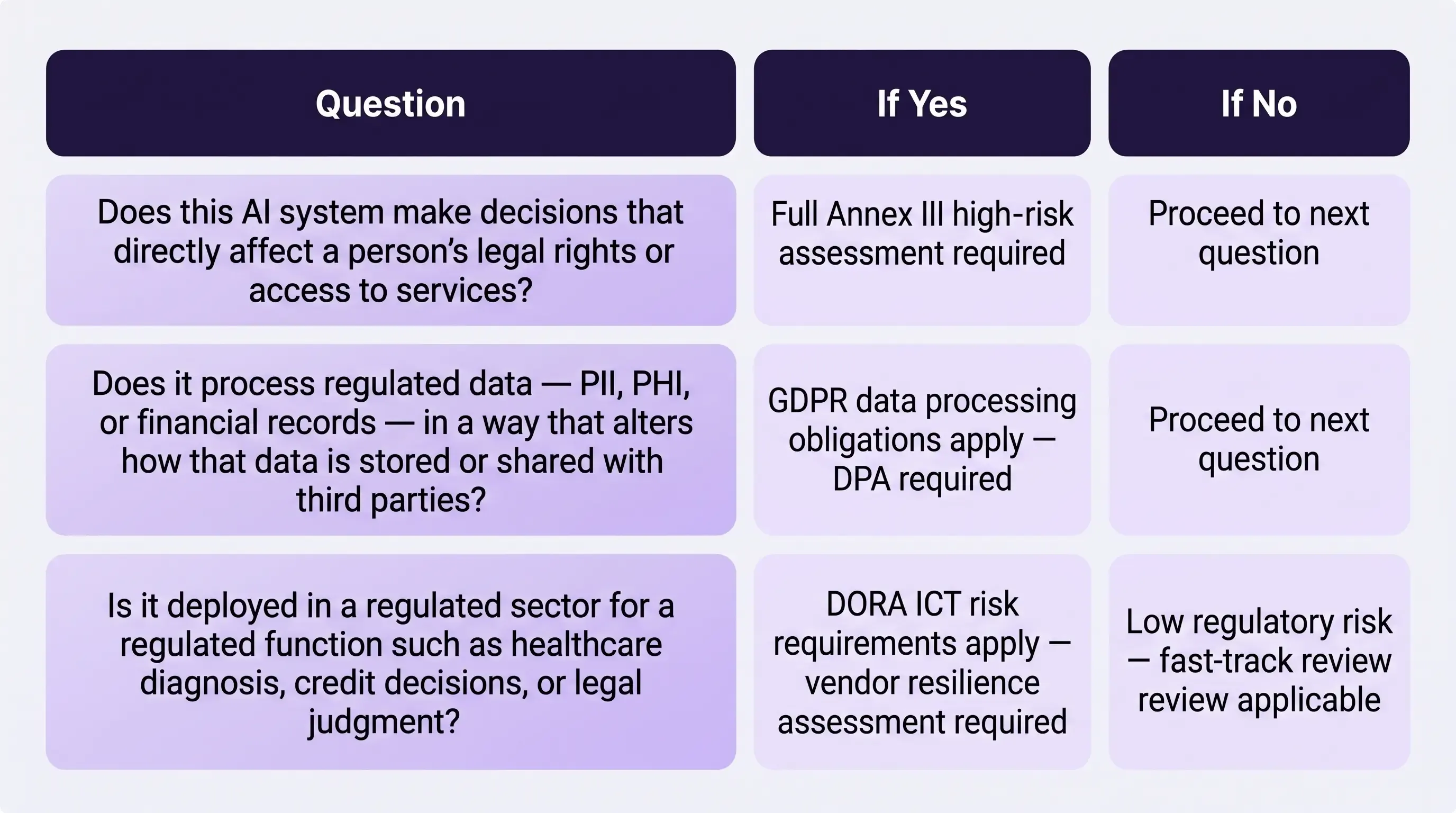

How Do You Know If Your AI Tool Is High-Risk Under the EU AI Act?

The EU AI Act defines high-risk AI systems under Annex III as those making consequential decisions about people in regulated contexts — hiring, credit scoring, healthcare triage, law enforcement. Most internal enterprise AI tools sit entirely outside that definition. The problem is that most compliance teams are applying the full weight of the regulation to tools that Annex III was never designed to govern.

A structured three-question triage separates high-risk tools from low-risk tools in minutes rather than months:

The NIST AI Risk Management Framework Playbook provides the methodological foundation for this kind of tiered risk triage, establishing that not all AI systems carry equivalent risk and that governance effort should be proportional to the risk level of each specific deployment.
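A triage of this kind might look like the sketch below. The three questions it asks are assumptions drawn from how this post describes each regulation; they are not the official classification test, the context list is only the examples named above rather than the full Annex III text, and the output is a starting point for legal review, not a substitute for it.

```python
# Example contexts named in this post; NOT the complete Annex III list.
ANNEX_III_CONTEXTS = {
    "hiring",
    "credit scoring",
    "healthcare triage",
    "law enforcement",
}


def triage(decides_about_people_in, processes_personal_data, financial_services_entity):
    """Return the regulations that plausibly apply to one tool deployment.

    decides_about_people_in: contexts in which the tool makes consequential
        decisions about people (empty for a summarizer or coding assistant).
    processes_personal_data: whether any personal data passes through the tool.
    financial_services_entity: whether the deploying organisation is in scope
        for DORA as a financial services firm.
    """
    obligations = []
    if ANNEX_III_CONTEXTS & set(decides_about_people_in):
        obligations.append("EU AI Act high-risk (Annex III)")
    if processes_personal_data:
        obligations.append("GDPR")
    if financial_services_entity:
        obligations.append("DORA ICT risk management")
    return obligations


# An internal meeting summarizer at a software company:
print(triage([], True, False))
# A CV-screening tool at a bank:
print(triage(["hiring"], True, True))
```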

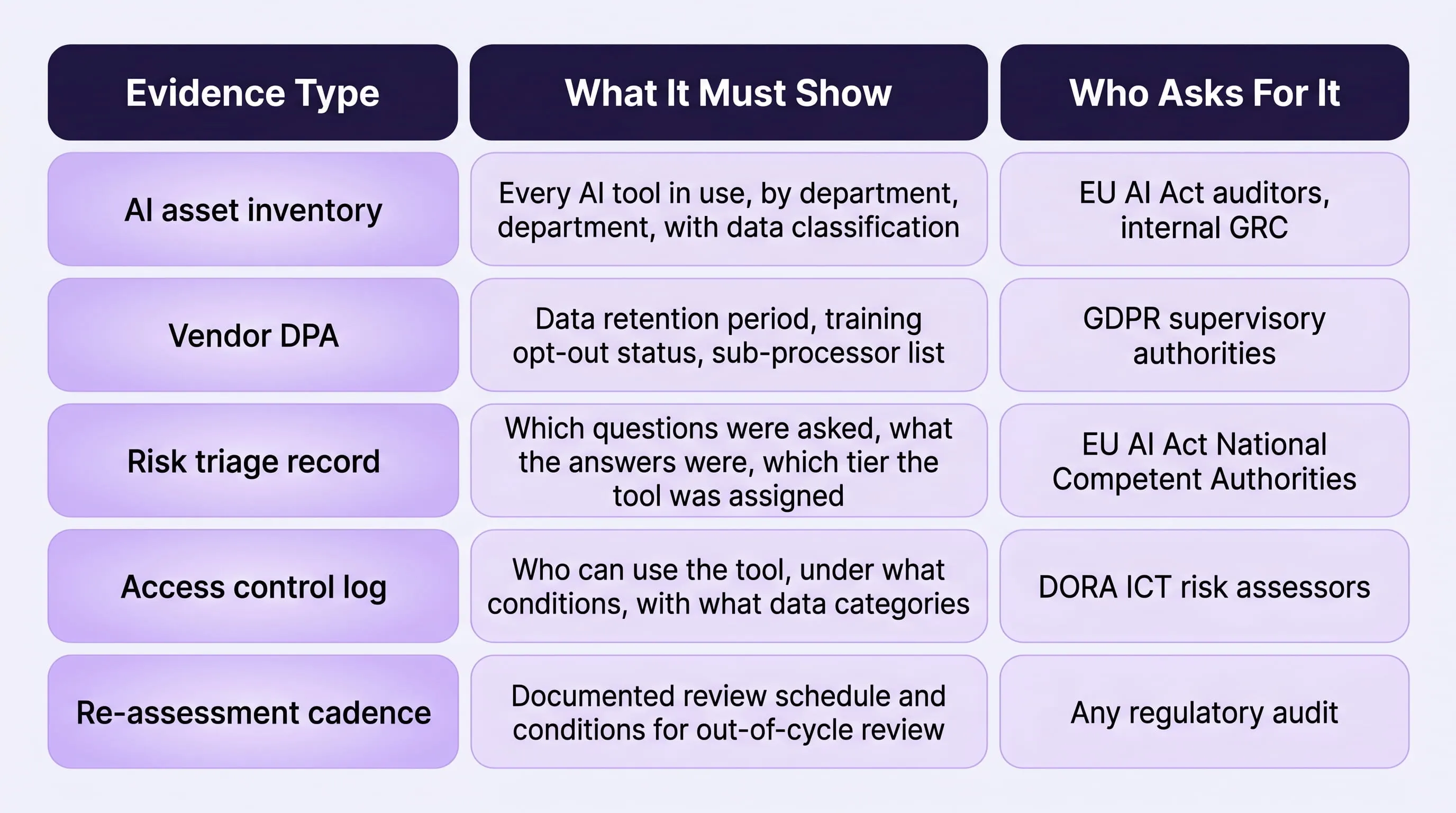

What AI Compliance Evidence Must Enterprises Produce in 2026?

Knowing which regulation applies is the first step. Producing evidence that satisfies it is the second. Most enterprises approaching their first AI compliance audit discover the same gap: the tools are defensible, but the documentation trail does not exist.

The minimum evidence set that every enterprise needs in place before any AI tool goes live, regardless of risk tier, covers five areas:

This documentation does not require a four-month assessment. It requires a defined process applied consistently from the point a tool is first requested, not assembled retrospectively after a regulator asks for it.
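One part of that trail, the tamper-evident interaction record listed among the approval criteria, can be illustrated with a simple hash chain. This is a sketch of the general technique only: the record fields are hypothetical, and whether a given log design satisfies a specific regulator is a question this code does not answer.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, record):
    """Hash the previous entry's hash together with this record's contents."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append a record whose hash depends on every entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    })


def verify(log):
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_record(log, {"user": "pm-1", "tool": "summarizer", "action": "prompt"})
append_record(log, {"user": "pm-2", "tool": "summarizer", "action": "prompt"})
print(verify(log))

log[0]["record"]["user"] = "someone-else"  # simulate after-the-fact editing
print(verify(log))
```

A chain like this only shows tampering if the latest hash is also stored somewhere the log writer cannot modify; otherwise the whole chain could be regenerated.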

For enterprises operating in financial services, the intersection of DORA's ICT risk requirements and EU AI Act obligations creates a compliance architecture that benefits from sector-specific guidance. LangProtect's AI compliance framework for financial services maps both frameworks to a single governance layer.

Legal sector teams working through attorney-client confidentiality and privilege obligations alongside EU AI Act classification can find sector-specific triage guidance at LangProtect's legal AI compliance resource.

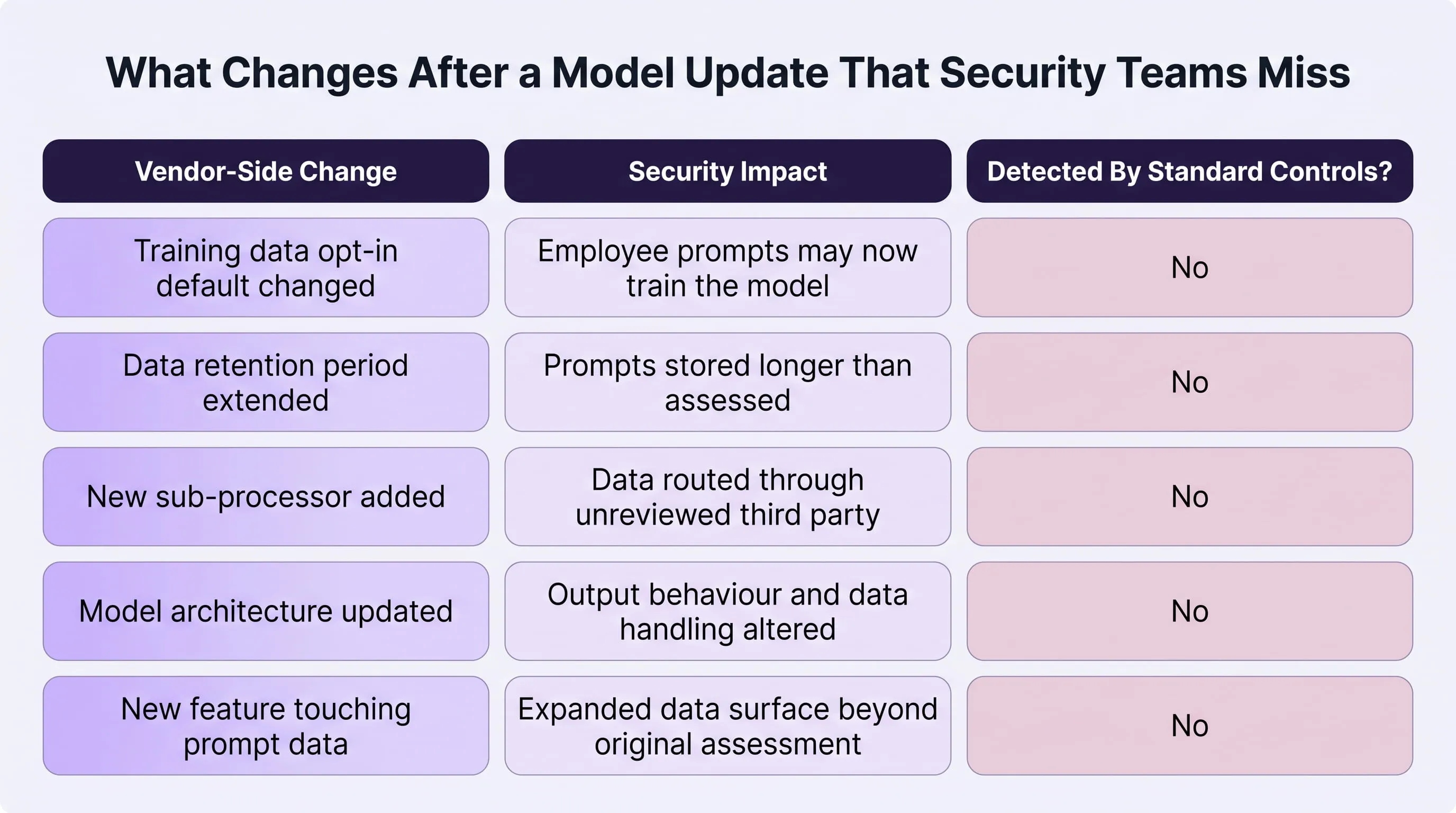

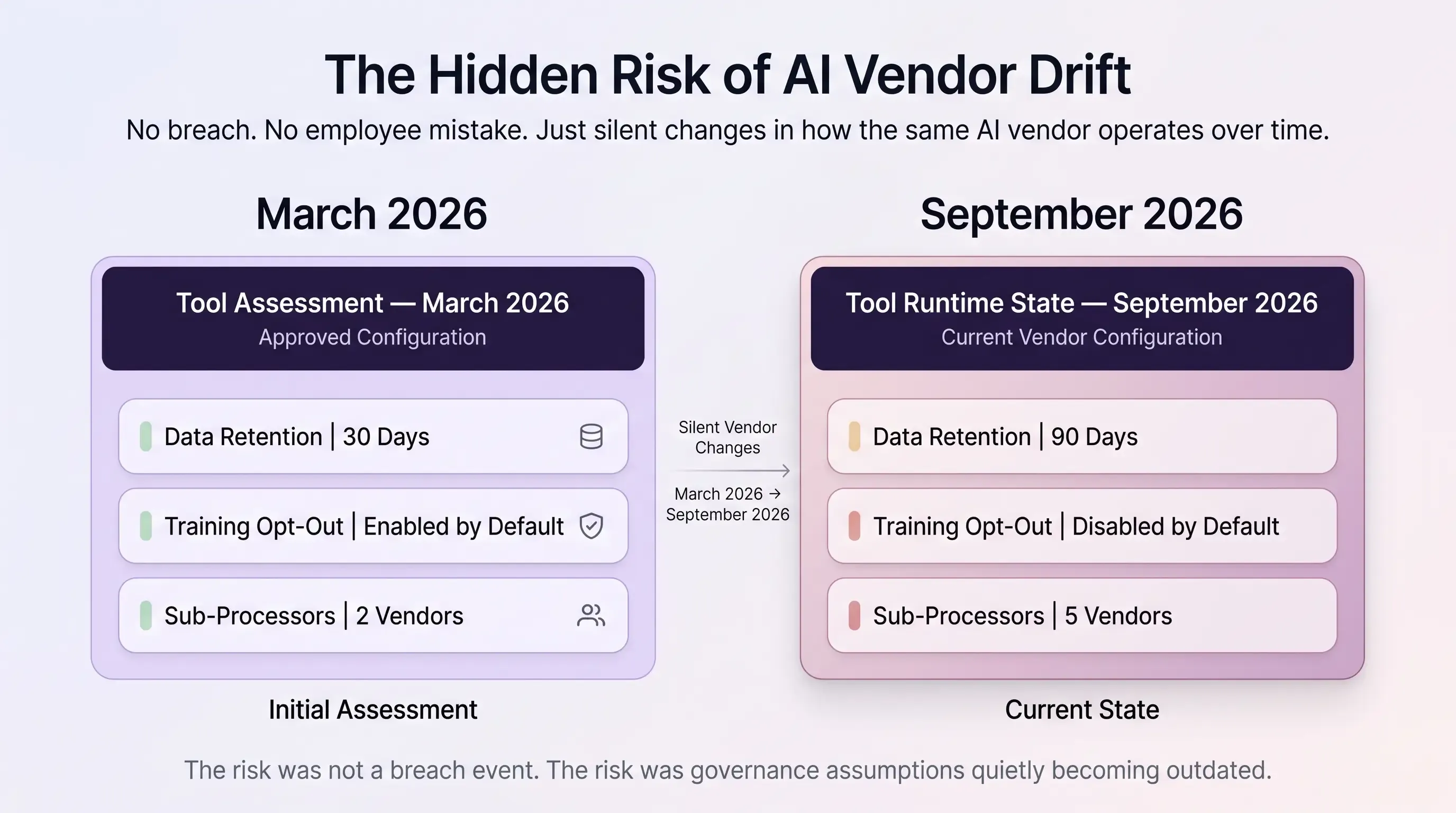

What Happens to AI Security When a Vendor Updates Their Model?

The most rational AI adoption fear is not what happens on day one; it is what happens on day 180, when a vendor silently updates their model, revises their data retention policy, or changes how employee prompts are handled, without sending a single notification to enterprise customers.

This risk is categorically different from employee prompt leakage or shadow AI. It does not require any employee to behave carelessly. It does not require any internal control to fail. It happens entirely on the vendor's side, on the vendor's timeline, and it invalidates the security assessment your team completed months ago without anyone in your organisation knowing it has happened.

By the end of 2026, Bessemer Venture Partners estimates that 40% of enterprise applications will embed AI agents directly into their workflows. That is not 40% of standalone AI tools; it is 40% of the software stack your organisation already runs. The vendor update surface is no longer a handful of dedicated AI tools. It is your entire application layer.

Can an AI Vendor Change Data Policies After You Sign the Contract?

Yes, and most enterprise contracts provide no protection against it. Standard AI vendor agreements include clauses that allow unilateral changes to terms of service with notice periods as short as 30 days, published in changelog pages that no security team monitors as a routine control. Model updates, training data opt-in default changes, and new data retention periods all fall within those clauses.
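Since those pages are rarely watched, one lightweight control is to fingerprint the policy text that was in force when the tool was approved and compare it on a schedule. The sketch below assumes you already have the text in hand; how it is fetched, and the function names themselves, are left as assumptions.

```python
import hashlib


def fingerprint(text):
    """Hash the policy text after collapsing whitespace and case, so that
    pure formatting changes do not raise an alert."""
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def has_changed(approved_fingerprint, current_text):
    """True when the current text no longer matches what was approved."""
    return fingerprint(current_text) != approved_fingerprint


approved = fingerprint("Prompts are retained for 30 days.")

# Reflowed text with the same wording: no alert.
print(has_changed(approved, "Prompts are retained\n  for 30 days."))
# A changed retention period: alert.
print(has_changed(approved, "Prompts are retained for 90 days."))
```

A hash only says that something changed, not what. Its job is to start a human review within the vendor's notice period rather than after it.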

OWASP LLM02 — Sensitive Information Disclosure establishes the technical framework for why this matters: model behaviour changes directly affect what data the model retains, surfaces, or exposes in outputs. A model update is not a cosmetic change. It can materially alter how the tool handles the sensitive inputs your employees submit every day.

What Changes After a Model Update That Security Teams Miss

None of these changes require a security incident to occur. All of them invalidate the assumptions your approval was based on.

How Often Should Enterprises Re-Assess Approved AI Tools?

A 90-day re-assessment cadence is the minimum defensible standard for any AI tool processing regulated data. That cadence should not be calendar-based alone — it should include defined out-of-cycle triggers that initiate an immediate re-assessment regardless of when the last review occurred:

- Any announced model update — treat it as a new tool assessment, not a routine software patch

- Any vendor privacy policy change — even if the change appears minor, assess whether it alters data handling within your compliance obligations

- Any new product feature that touches prompt data — expanded functionality often expands data surface in ways the original assessment did not account for

- Any change to the vendor's sub-processor list — each new sub-processor is a new data flow that requires its own DPA evaluation
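The cadence and the four triggers above combine into a single check, sketched here with hypothetical event labels. How those events are detected (vendor announcements, policy diffs, sub-processor notices) is outside this example.

```python
from datetime import date, timedelta

# One label per out-of-cycle trigger in the list above (labels are assumptions).
OUT_OF_CYCLE_TRIGGERS = {
    "model_update",
    "privacy_policy_change",
    "prompt_feature_added",
    "subprocessor_change",
}
CADENCE = timedelta(days=90)


def reassessment_due(last_review, today, events_since_review):
    """Return (due, reason). Any trigger wins over the calendar."""
    triggers = sorted(OUT_OF_CYCLE_TRIGGERS & set(events_since_review))
    if triggers:
        return True, "trigger: " + ", ".join(triggers)
    if today - last_review >= CADENCE:
        return True, "90-day cadence reached"
    next_review = last_review + CADENCE
    return False, "next scheduled review " + next_review.isoformat()


# Reviewed on 5 January 2026, checked on 1 February 2026:
print(reassessment_due(date(2026, 1, 5), date(2026, 2, 1), []))
print(reassessment_due(date(2026, 1, 5), date(2026, 2, 1), ["model_update"]))
```

Checking triggers before the calendar reflects the point of the list: a model update three weeks after approval should not wait for day 90.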

Monitoring AI tool behaviour continuously after vendor model updates gives security teams the signal they need to detect behavioural changes in how approved tools handle sensitive inputs, without waiting for a scheduled re-assessment date to find out the tool they approved six months ago is no longer the tool their employees are using today.

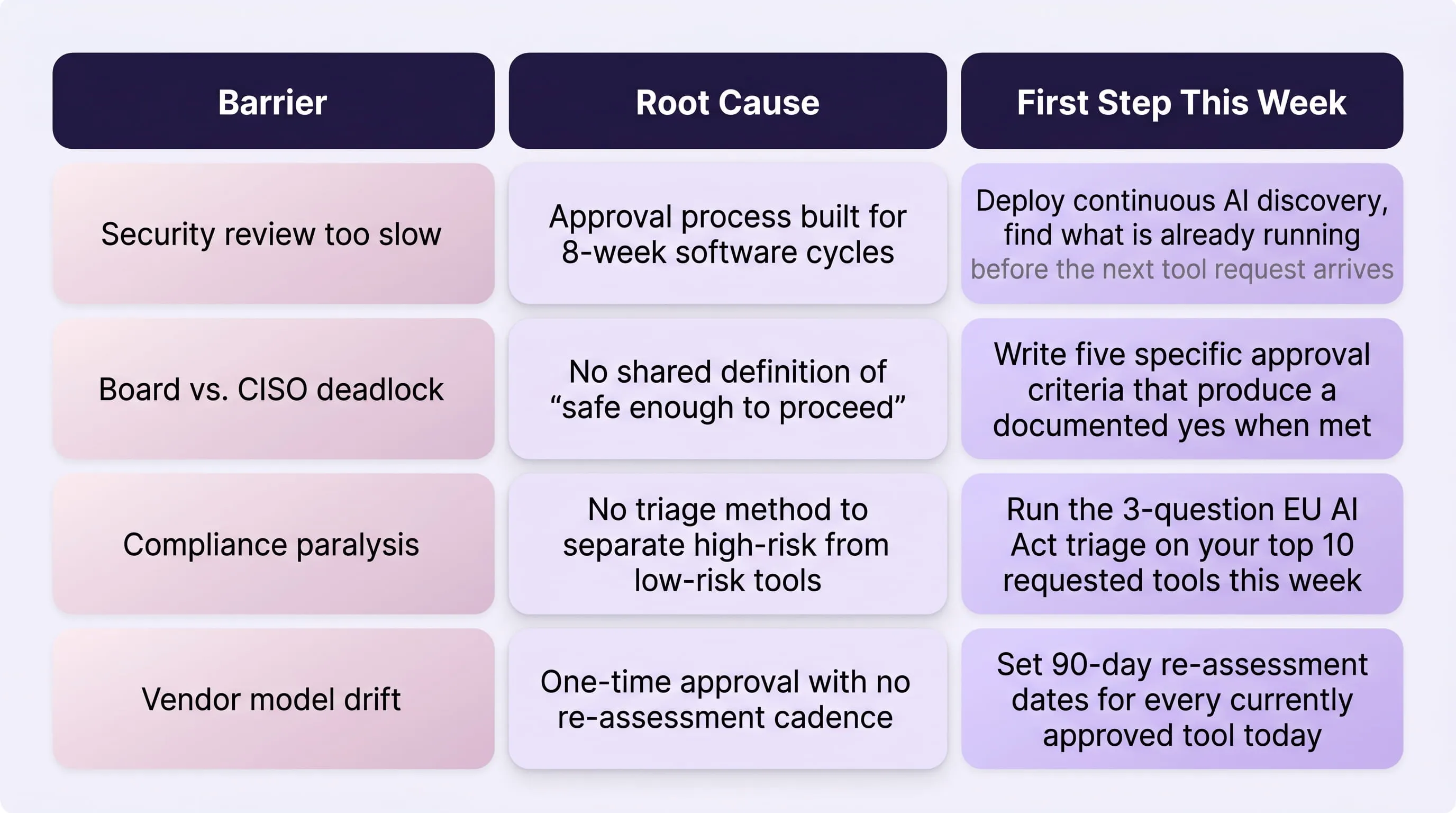

How Do Enterprises Start AI Adoption Without Creating Security Risk?

Enterprises that successfully adopt AI do not wait until every risk is resolved; they define the minimum viable governance that lets them start with accountability and build controls from there.

Every barrier covered in this post has a structural root cause and a defined first step that addresses it without requiring a full security overhaul before a single tool goes live. The table below maps each barrier to its root cause and the specific action that breaks it open, written for security teams who need to move a decision forward this week, not next quarter.

The Compounding Cost of Waiting

None of these first steps require a new budget line, a vendor contract, or a board approval. They require a decision to shift from a posture of indefinite caution to one of structured governance, and that decision has a measurable cost attached to delaying it.

The enterprises losing competitive ground to AI are not the ones that adopted recklessly. They are the ones still waiting for the conditions that would make adoption feel completely safe — conditions that do not exist and will not arrive before competitors have compounded their advantage for another quarter.

The related post "Why blocking AI tools accelerates the risk rather than reducing it" covers the downstream consequence of indefinite delay in detail. The pattern is consistent: the longer formal adoption is deferred, the larger the informal adoption becomes, and informal adoption is the version with no controls, no logs, and no audit trail when a regulator eventually asks for one.

Runtime enforcement that stays current as vendors update their models gives security teams the ongoing governance layer that makes structured AI adoption sustainable — not just defensible on the day a tool is first approved, but across every model update, policy change, and new feature the vendor ships after that.

The decision is not whether to adopt AI. It has already been made, by your employees.

The only question left is whether your organisation governs that adoption or inherits the consequences of not governing it.

Frequently Asked Questions

Q: What are the biggest security barriers to enterprise AI adoption?

A: The four most common barriers are the speed gap between AI deployment and security review, the internal conflict between board pressure and CISO caution, compliance uncertainty about which regulations govern specific tools, and fear of vendor behaviour changing after a tool is approved. Each is addressable with governance controls established before adoption begins — not after a breach forces the conversation.

Q: Which compliance regulations apply to enterprise AI tools in 2026?

A: The three primary regulations are the EU AI Act, DORA, and GDPR. The EU AI Act's high-risk obligations under Annex III apply to AI systems making consequential decisions about people — not to internal productivity tools, coding assistants, or document summarizers. GDPR applies to any personal data processed through AI tools regardless of use case. DORA applies to ICT risk management in financial services, which includes third-party AI tools used by employees.

Q: How do you resolve the conflict between board pressure for AI and CISO security concerns?

A: Replace the binary yes or no decision with a written adoption standard. Define the specific criteria — vendor DPA signed, prompt-level controls deployed, compliance obligations mapped, audit logging active, re-assessment date set — that must be met before any tool is approved. When those criteria are met, the answer is yes. When they are not, the answer is "not yet, and here is exactly what changes that." This shifts the CISO from blocker to adoption framework owner.

Q: What is vendor model drift and why does it matter for AI security?

A: Vendor model drift occurs when an AI tool's underlying model, data handling practices, or output behaviour changes, without notification, after your organisation approved it. Most enterprise contracts do not include automatic alerts for model updates or data retention policy changes. A tool approved six months ago may be operating under materially different terms today, which means the one-time approval that works for traditional software is not sufficient for AI tools.

Q: Is it safer to delay AI adoption until regulations are clearer?

A: No. Delay does not prevent AI usage — it drives employees to unsanctioned tools outside IT visibility, creating the exact exposure the delay was intended to prevent. Enterprises that move forward with a defined governance framework build a documented, auditable record of controls. Enterprises that wait build neither safety nor documentation — and face the same regulatory questions without the evidence to answer them.