What Does AI Governance Actually Require in 2026?

Picture this. Your legal team's AI contract review tool processes 200 NDAs over a Tuesday afternoon. It summarises key clauses, flags termination terms, and returns clean outputs to six senior associates. No alert fires. No policy is violated on paper. Your governance document says "AI tools approved for internal use." Everyone moves on.

What actually happened: the model is hosted by a third-party provider. The output contains client names, revenue figures, and termination clauses, unredacted. No output classification layer exists. No agent access scope was defined. The data left your environment before anyone thought to ask whether it should.

Your AI governance framework said everything was fine. Your data told a different story.

This is the core problem with AI governance in 2026. Most enterprises have governance documents. Acceptable use policies. AI ethics statements. Vendor approval checklists. Almost none have governance controls: technical mechanisms that actually enforce those policies at the model, prompt, and output layer where breaches happen.

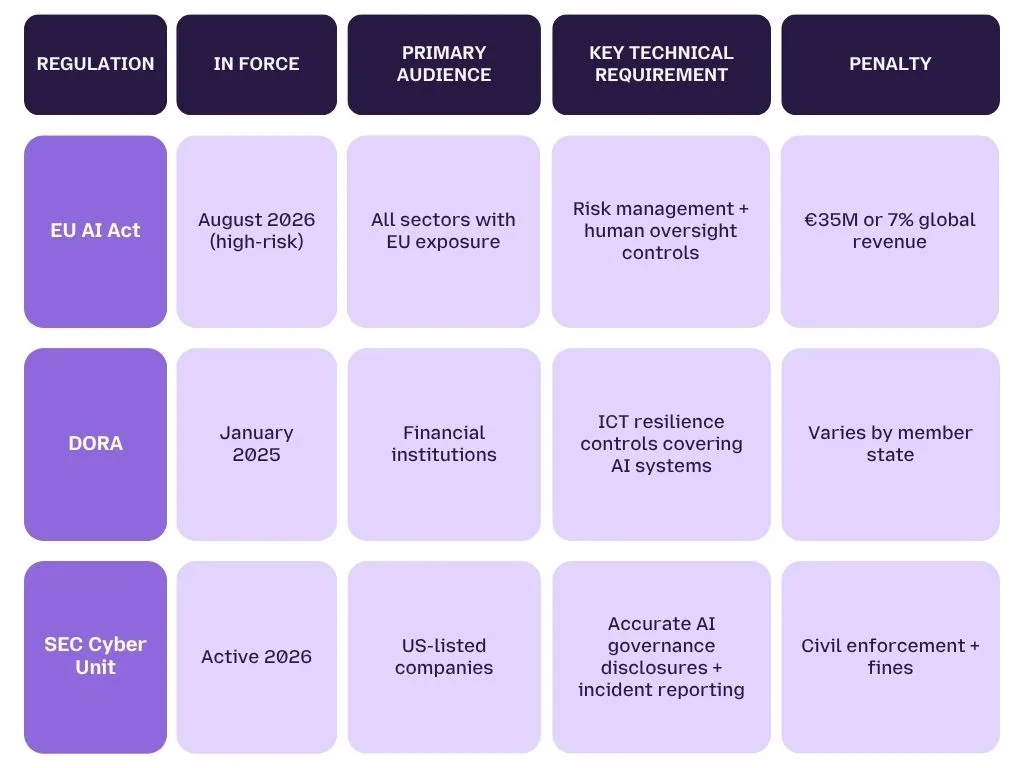

With the EU AI Act's high-risk compliance deadline hitting August 2, 2026, and the SEC actively pursuing AI washing cases against organisations that misrepresent their governance posture, that gap is no longer just a security risk. It is a regulatory liability with a hard deadline and eight-figure penalties.

In this post, you will learn exactly what AI governance requirements in 2026 look like in practice, the six controls that actually matter, how three active regulations are defining enforcement, and where most enterprise governance programs fall short before an auditor finds it first.


What Is AI Governance in 2026?

AI governance is the combination of policies, technical controls, and enforcement mechanisms that determine what your AI systems can access, do, and output, and who is accountable when they fail.

That definition matters because it is fundamentally different from what most enterprises are actually running today.

Ask a compliance team to show you their AI governance program and they will send you a PDF. An acceptable use policy. A vendor approval checklist. Maybe an AI ethics statement drafted by legal in 2023. What they will not show you, because it almost certainly does not exist, is a technical control that enforces any of it.

That is the gap. And in 2026, it is the gap regulators are looking for first.

Why the Ethics-First Definition of AI Governance No Longer Works

The original AI governance conversation was built around ethics: bias, fairness, transparency, societal impact. That framing made sense when AI was experimental. It does not make sense when your legal team is running contract review agents, your finance team is using LLM-powered forecasting tools, and your customer support stack has three AI layers, none of which your security team can see into.

In that environment, a governance document is not governance. It is documentation of intent. Real governance is what happens at the model layer, at the prompt, the tool call, the output, in real time, automatically, without depending on a human to read a policy and make the right choice.

The shift that 2026 demands is this: from declaring what AI is allowed to do, to technically enforcing it.

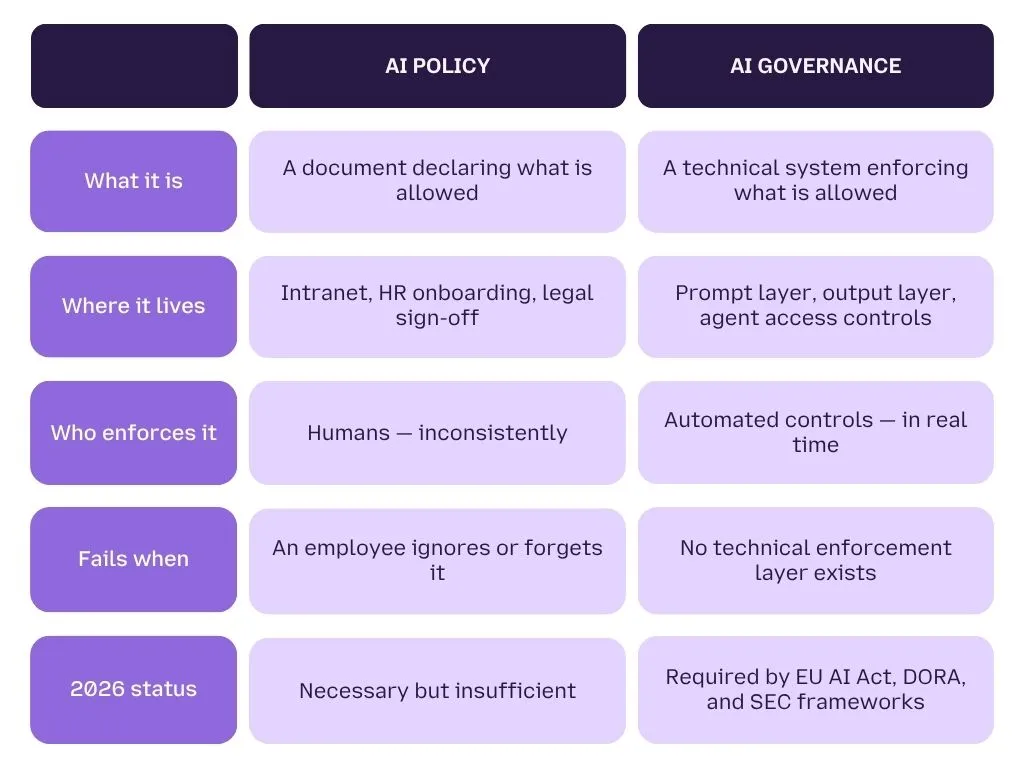

AI Policy vs. AI Governance: Key Differences

The clearest way to understand the gap is through a direct comparison.

Think of it this way: a firewall rule is governance. A network usage policy is not. One enforces. The other informs. The same logic applies to your AI stack.

What an Enforcement-Ready AI Governance Framework Actually Covers

An AI governance framework in 2026 is not a compliance checklist. It is a set of active technical controls. Here is what it must cover to be enforcement-ready:

- Model and agent inventory: a live map of every AI tool, model, and agent in use across your organisation, including shadow AI

- Prompt-level inspection: classification and enforcement at the input layer before sensitive data reaches a model

- Output monitoring and redaction: real-time classification of what models return, with PII, PHI, and confidential data stripped before it reaches users

- Agent access scope: technically enforced boundaries on what each AI agent can access, call, and act on

- Audit trail generation: interaction logs that can be produced on demand for a compliance review, not reconstructed after the fact

- Incident response playbooks: specific response procedures for AI-native failure modes such as prompt injection, data exfiltration, and agent hijack

Without all six, your governance program has gaps. With only a policy document, it has all of them.

Why AI Governance Gaps Are a Business Risk, Not Just a Security Problem

IBM's Institute for Business Value found that 80% of business leaders cite AI explainability and trust as a major roadblock to GenAI adoption, not because the tools do not work, but because there is no technical layer making their behaviour verifiable at scale.

That is a business risk, a regulatory risk, and a reputational risk simultaneously. Under the EU AI Act, fines reach up to €35M or 7% of global annual turnover for prohibited practices, and up to €15M or 3% for failing to meet high-risk system obligations. Under DORA, financial institutions must demonstrate technical controls over AI systems to auditors, not point them to a policy document.

The enterprises that will clear their next compliance audit are not the ones with the most comprehensive AI ethics statement. They are the ones that built enforcement into their AI stack before a regulator asked to see it.

For a deeper look at exactly where legacy security frameworks stop and the LLM attack surface begins, read our analysis of the AI security layer beyond traditional controls.

What Does AI Governance Actually Require?

The 6 Controls That Matter in 2026

Effective AI governance in 2026 requires six technically enforced controls, not six policy sections. Each one maps to a real attack surface that a document alone cannot close. If any of these six are missing from your program, you have a gap that a regulator, or an attacker, will find before you do.

1. AI Inventory and Discovery

Govern What You Can Actually See

You cannot govern what you cannot see. And right now, most enterprises are governing approximately 20% of their actual AI usage.

Shadow AI is the first and largest governance gap in 2026. Employees are using browser-based AI tools, personal accounts, unapproved browser extensions, and team-level SaaS products that IT has never reviewed, and every one of them is a potential data exfiltration channel.

An enforcement-ready governance program starts with a live, continuously updated inventory of every model, agent, and AI-enabled tool in use across your organisation, including the ones your security team did not approve.

What to map for each tool:

- What data can it access?

- What can it output?

- Who has access?

- Is it third-party hosted?

- Does a prompt inspection or output redaction layer exist?

If you cannot answer all five for every AI tool in your environment, your inventory is incomplete and your governance program is blind.
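The five mapping questions translate naturally into a record that can be checked for completeness. The following is a minimal sketch, not a reference implementation: the class name `AIToolRecord`, its field names, and the example tools are all hypothetical, and a real inventory would be fed by discovery tooling rather than typed in by hand.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AIToolRecord:
    """One inventory entry. None means the question has not been answered yet."""
    name: str
    data_access: Optional[list[str]] = None       # What data can it access?
    output_types: Optional[list[str]] = None      # What can it output?
    users: Optional[list[str]] = None             # Who has access?
    third_party_hosted: Optional[bool] = None     # Is it third-party hosted?
    has_inspection_layer: Optional[bool] = None   # Prompt inspection or output redaction?

    def unanswered(self) -> list[str]:
        """Return the mapping questions that are still unanswered for this tool."""
        return [f.name for f in fields(self)
                if f.name != "name" and getattr(self, f.name) is None]


def inventory_gaps(records: list[AIToolRecord]) -> dict[str, list[str]]:
    """Map each incompletely documented tool to its unanswered questions."""
    return {r.name: r.unanswered() for r in records if r.unanswered()}


# Hypothetical entries: one fully mapped tool, one piece of shadow AI.
inventory = [
    AIToolRecord("contract-review", ["nda-archive"], ["summaries"],
                 ["legal-associates"], True, False),
    AIToolRecord("browser-extension-x", users=["unknown"]),
]
print(inventory_gaps(inventory))
```

Note that `False` counts as an answer. A tool known to have no inspection layer is a documented gap, which is different from a tool nobody has looked at.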

2. Prompt-Level Enforcement

Stop Breaches at the Input Layer

Prompt-level enforcement means classifying and inspecting every input before it reaches a model, and blocking or redacting sensitive content before it leaves your environment.

This is the control that makes your acceptable use policy real. Your policy says "do not share confidential data with AI tools." Prompt-level enforcement is the technical mechanism that actually stops it from happening when an employee pastes NDA terms into a model at 11pm on a deadline.

Without this layer, your governance program relies entirely on human compliance, which is not a control. It is a hope.

What prompt enforcement must cover:

- PII and PHI classification before model ingestion

- Confidential document detection: contracts, financial data, legal communications

- Prompt injection attempt detection and blocking

- Real-time logging of every classified prompt for audit trail
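To make the idea concrete, here is a rough sketch of an input-layer check that classifies a prompt, decides whether to block it, and logs the decision. The regular expressions, label names, and the `inspect_prompt` function are illustrative assumptions only. Pattern matching of this kind catches a small fraction of what a production classifier must handle, and it is shown purely to illustrate where the control sits.

```python
import re
from dataclasses import dataclass, field

# Illustrative detectors only; real deployments need far broader coverage.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "confidential_marker": re.compile(r"\b(confidential|privileged)\b", re.I),
    "injection_phrase": re.compile(r"ignore (all )?(previous|prior) instructions", re.I),
}
# Labels that stop the prompt from leaving the environment (an assumed policy).
BLOCKING_LABELS = {"us_ssn", "confidential_marker", "injection_phrase"}


@dataclass
class PromptDecision:
    allowed: bool
    labels: list[str] = field(default_factory=list)


def inspect_prompt(prompt: str, audit_log: list[dict]) -> PromptDecision:
    """Classify a prompt, decide allow or block, and record the decision."""
    labels = sorted(name for name, pat in PATTERNS.items() if pat.search(prompt))
    decision = PromptDecision(allowed=not (set(labels) & BLOCKING_LABELS), labels=labels)
    # Only the labels and the decision are logged here, not the prompt text.
    audit_log.append({"labels": labels, "allowed": decision.allowed})
    return decision


audit_log: list[dict] = []
decision = inspect_prompt("Summarise this CONFIDENTIAL NDA for jane@example.com", audit_log)
print(decision.allowed, decision.labels)
```

The design choice worth noting is that the check runs before any network call to the model, so a blocked prompt never leaves your environment.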

For a detailed breakdown of how prompt injection bypasses governance controls that have no enforcement layer, read our analysis of how real-time prompt filtering prevents data leaks.

3. Output Monitoring and Redaction

Classify What the Model Returns

Prompt inspection controls what goes in. Output monitoring controls what comes out. Both are required. Most enterprise governance programs have neither.

Real-time output classification means every model response is analysed before it reaches the user, and any PII, PHI, revenue data, or confidential clause is redacted automatically, regardless of what the model decided to include.
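As a rough illustration, the sketch below redacts a model response before it reaches the user and counts what was removed. The rule set, the `redact_output` function, and the client list are hypothetical. Real output classification needs much more than regular expressions, so treat this as a picture of the control point rather than a working detector.

```python
import re

# Hypothetical redaction rules, applied in order.
REDACTION_RULES = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("AMOUNT", re.compile(
        r"[$€£]\s?\d[\d,]*(?:\.\d+)?(?:\s?(?:million|billion|[MBK]))?\b", re.I)),
]


def redact_output(text: str, known_clients: list[str]) -> tuple[str, dict[str, int]]:
    """Return the redacted text and a count of redactions per label."""
    counts: dict[str, int] = {}
    for label, pattern in REDACTION_RULES:
        text, n = pattern.subn(f"[{label} REDACTED]", text)
        if n:
            counts[label] = n
    # Client names come from a list you maintain, for example a matter database.
    for client in known_clients:
        text, n = re.subn(re.escape(client), "[CLIENT REDACTED]", text, flags=re.I)
        if n:
            counts["CLIENT"] = counts.get("CLIENT", 0) + n
    return text, counts


raw = ("Acme Corp may terminate with 30 days notice; annual revenue is $4.2M. "
       "Contact jane@acme.example.")
redacted, counts = redact_output(raw, known_clients=["Acme Corp"])
print(redacted)
print(counts)
```

The counts matter as much as the redacted text. They are the enforcement events you can later show an auditor.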

This is not a nice-to-have. Under the EU AI Act's high-risk obligations and DORA's ICT risk requirements, you must demonstrate that sensitive data cannot flow uncontrolled through your AI stack.

4. Agent Access Control

Scope What Every AI Agent Can Touch

Every AI agent in your environment is a non-human identity with persistent access to tools, APIs, databases, and communication systems. Most of them have more access than they need, and none of them can be stopped by MFA, a security awareness reminder, or a usage policy.

An over-permissioned AI agent is the 2026 equivalent of a domain admin account with no logging, no MFA, and no access review. The difference is that the agent acts autonomously, and at a speed no human security team can monitor manually.

Agent access control means every agent has a technically enforced scope: a defined list of tools it can call, data sources it can read, and actions it can take, with everything else blocked by default.
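A default-deny scope can be sketched in a few lines. The `AgentScope` class, the `ScopeViolation` exception, and the paths below are hypothetical names chosen for illustration. A real enforcement point would sit in the tool-calling gateway rather than in application code, and would normalise paths before comparing them.

```python
from dataclasses import dataclass


class ScopeViolation(PermissionError):
    """Raised when an agent attempts something outside its declared scope."""


@dataclass(frozen=True)
class AgentScope:
    agent_id: str
    allowed_tools: frozenset[str]
    allowed_path_prefixes: tuple[str, ...]

    def authorize(self, tool: str, resource: str) -> None:
        """Allow only listed tools and resources; everything else is denied."""
        if tool not in self.allowed_tools:
            raise ScopeViolation(f"{self.agent_id}: tool '{tool}' is not in scope")
        # Naive prefix check; an empty prefix tuple denies every resource.
        if not resource.startswith(self.allowed_path_prefixes):
            raise ScopeViolation(f"{self.agent_id}: resource '{resource}' is not in scope")


scope = AgentScope(
    agent_id="legal-review-bot-v2",
    allowed_tools=frozenset({"document_retrieval"}),
    allowed_path_prefixes=("/contracts/active/",),
)
scope.authorize("document_retrieval", "/contracts/active/nda-001.pdf")  # permitted

try:
    scope.authorize("document_retrieval", "/contracts/nda-archive/2024/")
except ScopeViolation as err:
    print("blocked:", err)
```

With a scope like this in place, a retrieval from an archive directory raises an enforcement event instead of passing silently.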

What an unscoped agent looks like in a real governance log:

[2026-04-14 11:32:07] AGENT: legal-review-bot-v2

[ACTION]: tool_call → document_retrieval

[SOURCE]: /contracts/nda-archive/2024/

[DATA ACCESSED]: client_name, revenue_terms, termination_clauses

[OUTPUT]: Sent to user session — unredacted

[ALERT FIRED]: None

[POLICY VIOLATED]: None on record

[GOVERNANCE GAP]: No agent-level access scope defined.

No output classification layer active.

No alert fired. No policy was violated, because no technical scope had been defined. This is governance theater in a single log entry. The MITRE ATLAS adversarial AI threat framework documents exactly how attackers exploit over-permissioned agents to move laterally through enterprise environments, a threat class that did not exist three years ago and that your existing SIEM was not built to detect.

For a deeper look at how agent autonomy creates security risks your current stack cannot see, read why AI agents increase security risk, and how real-time interaction governance closes that gap.

5. Audit Trail and Explainability

Produce Evidence on Demand

Here is a direct question: if a regulator asked you to produce a complete log of every AI interaction in your environment for the last 30 days, how long would it take?

If the answer is anything other than immediately, your governance program has a critical compliance gap.

Under DORA, financial institutions must demonstrate ICT risk controls to auditors on demand. Under the EU AI Act, high-risk AI systems require documented evidence of human oversight and risk management. Under SEC disclosure rules, AI governance claims must be supportable with technical evidence, not narrative.

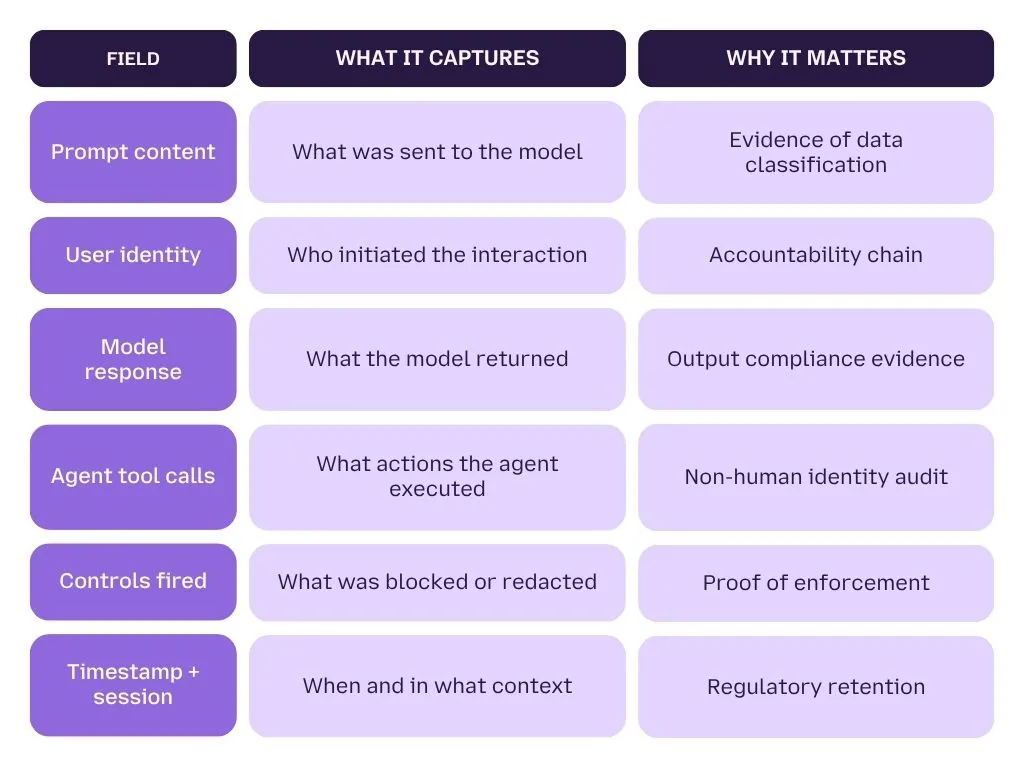

An audit trail is not just a log file. It must capture: who sent the prompt, what the prompt contained, what the model returned, what actions the agent took, and whether any controls fired. Every interaction. In real time. Retained for your regulatory window.
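One way to picture such a record is as a structured entry written at interaction time. The sketch below captures the five elements named above. The field names are hypothetical, and the hash chaining that makes later tampering detectable is an assumption added here for illustration, not something the regulations prescribe. Storing raw prompts and responses also makes the log itself sensitive, so in practice it needs the same access controls as the data it records.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user: str, prompt: str, response: str,
                 agent_actions: list[str], controls_fired: list[str],
                 prev_hash: str = "") -> dict:
    """Build one audit entry, chained to the previous entry's hash."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,                      # who sent the prompt
        "prompt": prompt,                  # what the prompt contained
        "response": response,              # what the model returned
        "agent_actions": agent_actions,    # what actions the agent took
        "controls_fired": controls_fired,  # whether any controls fired
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_chain(records: list[dict]) -> bool:
    """Recompute every hash and check each entry points at its predecessor."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if rec["prev_hash"] != prev or rec["hash"] != hashlib.sha256(payload).hexdigest():
            return False
        prev = rec["hash"]
    return True


first = audit_record("associate-17", "Summarise NDA 42", "[summary]",
                     ["document_retrieval"], ["output_redaction"])
second = audit_record("associate-17", "List termination terms", "[list]",
                      [], [], prev_hash=first["hash"])
print(verify_chain([first, second]))
```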

What a complete AI audit trail must include:

6. AI-Specific Incident Response

Respond to Failures Your IR Team Has Never Seen

Your existing incident response runbooks were written for human-initiated threats: phishing, credential theft, ransomware. They were not written for prompt injection, agent hijacking, model manipulation, or AI-layer data exfiltration.

The OWASP LLM Top 10 documents ten distinct failure classes that are specific to LLM-based systems, none of which map cleanly to a traditional IR playbook. The NIST AI Risk Management Framework identifies AI-specific incident response as a core governance requirement, not an optional maturity level.

Your AI incident response program must include:

- Detection playbooks for prompt injection attempts

- Containment procedures for a compromised or hijacked agent

- Escalation paths for AI-layer data exfiltration events

- Evidence preservation for AI interactions during an active incident

- Post-incident analysis for model behaviour and reasoning chain review

Without these, your security team will respond to an AI-native breach using tools and procedures built for a completely different threat model, and lose critical response time in the gap.


What Do 2026's Regulations Actually Require From Your AI Governance Program?

Three regulatory frameworks are demanding technical AI governance controls in 2026, not just written policies, and two of them are already in active enforcement. If your AI governance program was built around documentation rather than technical controls, all three of these frameworks will find that gap. Here is exactly what each one requires, and what it costs when you cannot demonstrate compliance.

1. EU AI Act: The August 2, 2026 Deadline Every CISO Needs to Know

The EU AI Act is the most significant AI governance regulation in force today, and its most critical deadline is 97 days away.

August 2, 2026 is the date by which every organisation deploying a high-risk AI system must have a fully compliant governance program operational. Not documented. Not planned. Operational.

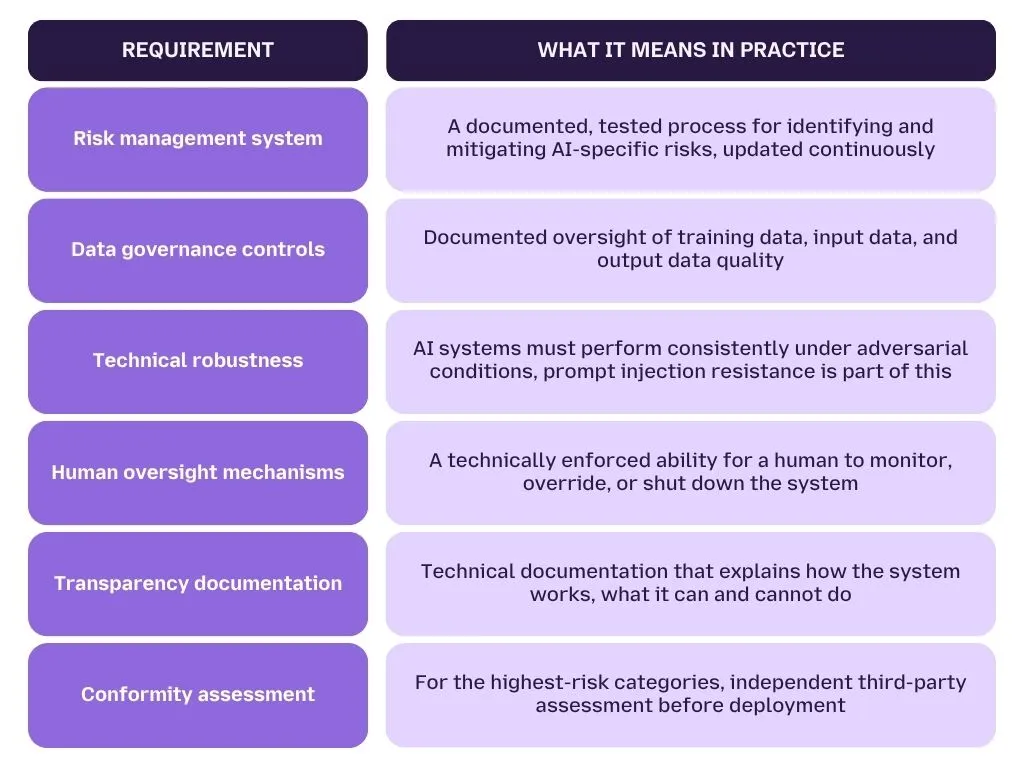

What the EU AI Act technically requires for high-risk AI systems:

Penalties for non-compliance:

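Up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited AI practices. Breaches of high-risk system obligations carry up to €15 million or 3%, whichever is higher.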

This is not a fine structure built for small violations. It is built to make non-compliance more expensive than compliance. For any organisation with significant EU market exposure, the calculus is simple.
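The "whichever is higher" rule is simple arithmetic, and it is worth running against your own turnover. The sketch below assumes the tiering in Article 99 of the Act as we read it, where the top ceiling applies to prohibited practices and lower ceilings apply to other breaches. Treat the figures and tier names as assumptions to verify against the current legal text. The Act also treats SMEs differently, which this sketch ignores.

```python
# Fine ceilings per tier: (fixed cap in EUR, percent of worldwide annual turnover).
# Tier names are our own labels; figures are our reading of Article 99.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_obligation": (15_000_000, 3),
}


def max_fine_eur(annual_turnover_eur: int, tier: str) -> int:
    """Return the fine ceiling: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = FINE_TIERS[tier]
    turnover_share = annual_turnover_eur * percent // 100
    return max(fixed_cap, turnover_share)


# A hypothetical firm with EUR 2 billion in worldwide annual turnover.
print(max_fine_eur(2_000_000_000, "prohibited_practice"))   # 140000000
print(max_fine_eur(2_000_000_000, "high_risk_obligation"))  # 60000000
```

For smaller organisations the fixed cap dominates: at €100 million turnover, 7% is only €7 million, so the ceiling stays at €35 million.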

2. What Qualifies as a High-Risk AI System Under the EU AI Act?

This is the classification question that every legal, compliance, and security team is currently working through, and getting it wrong has consequences in both directions.

Under EU AI Act Annex III, a system qualifies as high-risk if it operates in or makes decisions related to:

- Credit and financial services — AI used in creditworthiness assessment, insurance risk scoring, or investment advisory

- Employment and HR — AI used in recruitment, performance evaluation, or workforce management decisions

- Legal and justice — AI used to assist in legal interpretation, case analysis, or judicial decision support

- Critical infrastructure — AI systems that manage or influence energy, transport, water, or digital infrastructure

- Law enforcement and border control — AI used in risk profiling, evidence analysis, or identity verification

- Education — AI that determines access to education or evaluates student performance

3. DORA: AI Governance Is Now an ICT Risk Management Obligation for Financial Firms

The Digital Operational Resilience Act has applied since January 17, 2025. For financial institutions, AI governance is no longer a separate workstream. It is a direct ICT risk management obligation under a regulation that auditors are actively enforcing.

DORA requires financial entities to demonstrate technical resilience across their entire digital operations stack. That includes every AI and LLM system that processes, stores, or transmits financial data. A generic cybersecurity framework presented to a DORA auditor as your AI governance evidence is not sufficient.

What DORA specifically requires that most financial AI programs currently miss:

- Third-party AI vendor risk management — if your AI tool is third-party hosted, you own the operational risk. DORA requires documented vendor assessment, contractual resilience obligations, and exit strategies for every critical third-party AI provider

- ICT incident classification for AI failures — prompt injection events, data exfiltration via AI agents, and model manipulation must be classified as ICT incidents under DORA's reporting framework

- Resilience testing for AI systems — AI systems that are operationally critical must be included in your digital operational resilience testing program — not excluded because they sit outside the traditional IT perimeter

- Audit trail requirements — DORA auditors are now specifically requesting AI interaction logs as evidence of operational oversight

For a detailed breakdown of how DORA's ICT risk requirements map to LLM-specific security controls in financial environments, read our analysis of AI risks in fintech, including where most financial AI governance programs currently fall short of DORA's technical expectations.

4. SEC Cyber and Emerging Technologies Unit — AI Governance Is Now a Disclosure Risk

The SEC's Cyber and Emerging Technologies Unit, renamed and refocused in early 2025, has made AI governance misrepresentation an active enforcement priority. The first cases are already filed. This is no longer a hypothetical risk.

The enforcement theory is straightforward. If your organisation makes public statements about its AI governance posture, to investors, customers, or in regulatory disclosures, those statements must be supportable with technical evidence. Claiming a "robust AI governance framework" when no technical controls exist is the AI equivalent of securities fraud.

What SEC enforcement is specifically targeting:

- Public claims about AI safety, governance, or risk management that cannot be verified with technical evidence

- AI governance disclosures in SEC filings that overstate the maturity or coverage of existing controls

- Failure to disclose material AI-related cybersecurity incidents within required timeframes

- AI system descriptions in investor materials that misrepresent how the systems actually operate

Pro Tip: Review every public-facing statement your organisation has made about AI governance: product pages, investor decks, press releases, SEC filings. If any claim cannot be directly supported by a technical control in production, it is an enforcement exposure. Fix the control or fix the claim. Do not leave both unresolved.
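That review can be kept as a simple claims register. The following is a hedged sketch: the `PublicClaim` structure, the control identifiers, and the example statements are all invented for illustration. The point is only that every public claim should resolve to at least one control that is actually running.

```python
from dataclasses import dataclass, field


@dataclass
class PublicClaim:
    text: str
    source: str  # where the claim was published
    supporting_controls: list[str] = field(default_factory=list)


def unsupported_claims(claims: list[PublicClaim],
                       controls_in_production: set[str]) -> list[PublicClaim]:
    """Return claims with no supporting control currently in production."""
    return [
        claim for claim in claims
        if not any(ctrl in controls_in_production for ctrl in claim.supporting_controls)
    ]


# Hypothetical register and control inventory.
claims = [
    PublicClaim("All AI outputs are screened for personal data",
                "product page", ["output-redaction"]),
    PublicClaim("Robust AI governance framework",
                "investor deck", []),
    PublicClaim("Every AI agent operates under least privilege",
                "press release", ["agent-scope"]),
]
live_controls = {"output-redaction", "prompt-inspection"}

for claim in unsupported_claims(claims, live_controls):
    print(f"EXPOSURE: '{claim.text}' ({claim.source})")
```

Each item the check returns is a decision to make: build the control, or withdraw the statement.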

How All Three Regulations Intersect, and Why That Matters for Your Governance Program

For organisations operating across jurisdictions, particularly financial and legal firms with EU market exposure, all three frameworks apply simultaneously. That intersection creates a compliance complexity that a single-framework governance program cannot address.

The organisations that will navigate this cleanly are not the ones with the most comprehensive policy documents. They are the ones that built a single, technically enforced governance layer that satisfies all three simultaneously, and can produce evidence of it on demand.

For a broader view of how AI governance intersects with enterprise security ethics and accountability frameworks, read our analysis of AI security ethics, including how the transatlantic regulatory divide is reshaping what governance actually means for global organisations.


How AI Governance Requirements Differ by Industry in 2026

AI governance requirements are not sector-agnostic. The controls a fintech CISO must implement are materially different from what a law firm or a federal agency needs, and regulators are increasingly reflecting that distinction in how they write, interpret, and enforce the rules.

A governance framework built for a general enterprise will satisfy none of these sectors fully. What works for a SaaS company does not work for a bank under DORA, a law firm processing privileged communications, or a defense contractor handling Controlled Unclassified Information. If your AI governance program was not built with your specific regulatory environment in mind, it has gaps you have not found yet.

Here is exactly what each sector requires, technically, operationally, and regulatorily in 2026.

1. Financial Services and Fintech: Dual Enforcement Under DORA and the SEC

Key Stat

Only 6% of organisations report having an advanced AI security strategy in place, despite 78% having adopted AI tools in production environments.

- Stanford AI Index 2025

Financial services firms face the most complex AI governance environment of any sector in 2026. DORA is actively enforced. The SEC's Cyber and Emerging Technologies Unit is filing cases. And the EU AI Act's August deadline applies to every financial AI system that touches credit, insurance, or investment decisions, which, for most firms, is most of their AI stack.

The core financial services governance challenge is dual enforcement. A single AI system, say, an LLM-powered credit risk scoring tool, must simultaneously satisfy DORA's ICT resilience requirements, EU AI Act's high-risk technical obligations, and SEC disclosure accuracy standards. Most financial AI governance programs were built to satisfy one framework. None of them were built to satisfy all three at once.

What Financial Services AI Governance Must Cover

- Algorithmic decision explainability is the first requirement that separates financial governance from generic enterprise governance. When an AI system informs a credit decision, an insurance premium, or an investment recommendation, the reasoning behind that decision must be documentable, auditable, and explainable to a regulator on demand. A black-box model output is not a defensible governance position under any of the three active frameworks.

- Model fairness and bias controls are a direct DORA and EU AI Act obligation for financial AI systems. If your credit scoring model produces statistically disparate outcomes across demographic groups, even unintentionally, that is both a regulatory violation and a litigation exposure. Governance must include ongoing bias monitoring, not just pre-deployment testing.

- Third-party AI vendor risk is the most commonly missed financial governance requirement. DORA explicitly requires documented vendor assessment, contractual resilience obligations, and exit strategies for every critical third-party AI provider. If your AI tool is third-party hosted, which most are, you own the operational risk. Your vendor's governance posture is your governance posture.
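Ongoing bias monitoring can start with a very simple recurring measurement. The sketch below computes a disparate impact ratio, meaning the lowest group approval rate divided by the highest. The 0.8 review threshold is borrowed from the "four-fifths" heuristic used in US employment contexts and is an assumption here, not a figure taken from DORA or the EU AI Act. Group names and counts are invented.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Approval rate per group from (approved, total) counts; empty groups are skipped."""
    return {group: approved / total
            for group, (approved, total) in outcomes.items() if total > 0}


def disparate_impact_ratio(outcomes: dict[str, tuple[int, int]]) -> float:
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no groups with decisions to compare")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so rates are equal
    return min(rates.values()) / highest


REVIEW_THRESHOLD = 0.8  # assumed heuristic; set this with legal and compliance

# Hypothetical monthly credit decisions: (approved, total) per group.
monthly = {"group_a": (80, 100), "group_b": (50, 100)}
ratio = disparate_impact_ratio(monthly)
needs_review = ratio < REVIEW_THRESHOLD
print(round(ratio, 3), needs_review)
```

A single ratio is a tripwire, not a fairness assessment. Its value is that it runs every month on production decisions rather than once before deployment.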

Critical Governance Gap: Financial Services

LLM router attacks, where malicious AI middleware intercepts and alters data flows between a user and a model, are now a documented threat class with no existing DLP coverage.

April 2026 research identified 26 compromised routers actively injecting malicious tool calls into financial AI workflows. Traditional network monitoring does not see this layer. Your governance program must.

What financial services AI governance must include in 2026:

- Live AI system inventory covering every model, agent, and AI-enabled tool in the trading, compliance, credit, and customer service stack

- Prompt-level enforcement on every client-facing and internal AI interaction

- Output classification and redaction for PII, financial data, and regulated content

- Algorithmically documented decision trails for every AI-informed credit or investment outcome

- Third-party AI vendor risk assessments updated at least annually

- DORA-compliant ICT incident classification for AI-specific failure events

- SEC-supportable disclosure documentation for every public AI governance claim

For a detailed breakdown of where financial AI governance programs most commonly fail, and how attackers exploit those gaps, read our analysis of AI risks in fintech.

2. Legal and Compliance: Where Privilege Meets the LLM Layer

Key Stat

AI contract review tools are now embedded in over 60% of AmLaw 100 firms, the majority without a dedicated security control at the model output layer.

- Thomson Reuters Legal AI Report 2025

The legal sector has a governance problem that no other industry shares: attorney-client privilege. When an AI tool processes privileged communication (a legal memo, an NDA, a litigation strategy document), the governance question is not just about data security. It is about whether the act of processing that document through a third-party model constitutes a waiver of privilege.

That question does not have a settled legal answer yet. What is settled is that your governance program must treat it as a live risk, technically and legally.

What Legal Sector AI Governance Must Cover

- Confidentiality enforcement at the output layer is non-negotiable for legal AI governance. Every AI tool that processes client communications, case documents, or privileged materials must have a technically enforced output classification layer that prevents confidential content from being returned unredacted, logged externally, or retained by a third-party model provider.

- A usage policy that says "do not use AI for privileged communications" is not a control. It is an assumption of human compliance in an environment where deadline pressure, billing targets, and workflow convenience consistently override policy intent.

- EU AI Act high-risk classification almost certainly applies to AI systems used in legal decision support. If your firm uses AI to assist in contract interpretation, case outcome prediction, litigation risk scoring, or regulatory compliance assessment, that system is likely operating in the high-risk category under Annex III. That means mandatory risk management, technical documentation, and human oversight controls before August 2, 2026.

- Data residency and model retention are critical legal governance requirements that most firms have not addressed. When a lawyer uses an LLM-powered tool to summarise a client document, where does that document go? Is it retained by the model provider? Is it used for training? Most legal AI tools do not answer these questions clearly, and most firms have not required them to.

Legal Governance Spotlight — Privilege Risk

Using a third-party hosted AI tool to process privileged communications without a documented confidentiality enforcement layer creates a credible privilege waiver argument.

Several US jurisdictions are actively examining cases where AI tool use intersected with privilege claims.

Your governance program must address this before it becomes a motion in a live matter.

What legal sector AI governance must include in 2026:

- Confidentiality classification at the prompt and output layer for all client-facing AI interactions

- Model retention and data residency documentation for every third-party AI vendor

- EU AI Act Annex III risk classification for all legal decision support AI systems

- Human oversight controls for any AI output that informs a legal recommendation or client advice

- Privilege risk assessment for every AI tool that processes client communications

- Audit trail for every AI interaction involving a matter, client, or privileged document

For a broader view of how AI governance intersects with professional ethics, accountability, and the regulatory frameworks reshaping legal AI use, read our analysis of AI security ethics, including the specific liability questions that legal and compliance teams need to resolve before their next AI deployment.

3. Government and Defense: Where Governance Gaps Become National Security Risks

Key Stat

Government and defense sectors saw a 185% year-on-year increase in targeted AI-enabled intrusions in 2025.

- CrowdStrike Global Threat Report 2026

Government and defense AI governance operates under a threat model that no other sector faces at the same scale: nation-state adversaries with the resources, patience, and technical capability to exploit AI governance gaps as a long-term strategic objective. A governance failure in a financial firm costs money. A governance failure in a defense contractor can cost classified intelligence.

The regulatory environment reflects that severity. CMMC 2.0 now applies to any government contractor whose AI tools touch Controlled Unclassified Information. Zero trust frameworks, mandatory across federal agencies, were designed for human identities and were not built for the non-human identity risks that AI agents introduce.

What Government and Defense AI Governance Must Cover

- Non-human identity governance is the most critical and least addressed requirement in government AI programs. AI agents are non-human identities with persistent access to classified systems, secure communications, and sensitive databases. Unlike human identities, they cannot be governed with MFA, security awareness training, or a usage policy. Their access must be technically scoped, continuously monitored, and immediately revocable.

- CMMC 2.0 AI alignment is the emerging compliance obligation that most defense contractors have not yet mapped to their AI stack. CMMC 2.0 requires documented controls over every system that touches CUI, and any AI tool processing, storing, or transmitting CUI is within scope. The DOJ is now holding contractors accountable for AI security misrepresentations under the False Claims Act. This is active, not theoretical.

- AI agent memory poisoning is a threat class specific to government and defense environments that requires governance controls that do not exist in standard enterprise security frameworks. Nation-state actors have been documented planting dormant instructions inside AI agent memory, instructions that remain inactive for weeks before triggering data exfiltration, lateral movement, or system manipulation. Standard SOC monitoring does not detect this. Your governance program must include agent memory inspection and periodic state validation as specific controls.

Defense Governance Alert: MCP Server Risk

Over 13,000 Model Context Protocol (MCP) servers were deployed on GitHub in 2025 alone. The MCP specification enforces no audit logging, no sandboxing, and no verification of server authenticity.

In a government or defense environment, an unvetted MCP server connected to an AI agent represents an uncontrolled tool access point with classified system exposure. Governance must explicitly address MCP server vetting and access control.
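Vetting can be enforced at connection time with an allowlist. The sketch below is an assumption-heavy illustration: it pins each approved host to a SHA-256 fingerprint of a vetted snapshot of the server's tool definitions, so that a server whose tools change after review is refused. The snapshot format, host name, and function names are hypothetical and are not part of the MCP specification.

```python
import hashlib
from urllib.parse import urlparse


def fingerprint(tool_definitions: bytes) -> str:
    """SHA-256 of the tool definitions as they looked when the server was vetted."""
    return hashlib.sha256(tool_definitions).hexdigest()


# Hypothetical vetted snapshot and allowlist, maintained by the security team.
VETTED_TOOLS = b'{"tools": ["search_documents"]}'
ALLOWLIST = {"mcp.internal.example": fingerprint(VETTED_TOOLS)}


def is_server_allowed(url: str, tool_definitions: bytes) -> bool:
    """Allow only HTTPS connections to listed hosts whose tools match the vetted set."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        return False
    expected = ALLOWLIST.get(parsed.hostname or "")
    return expected is not None and expected == fingerprint(tool_definitions)


print(is_server_allowed("https://mcp.internal.example/sse", VETTED_TOOLS))
print(is_server_allowed("https://mcp.internal.example/sse",
                        b'{"tools": ["search_documents", "run_shell"]}'))
```

Unknown hosts, plain HTTP endpoints, and servers whose tool set has drifted all fail closed, which matches the default-deny posture described for agent scope.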

What government and defense AI governance must include in 2026:

- Non-human identity governance framework covering every AI agent's tool access, data scope, and action authority

- CMMC 2.0 compliance mapping for all AI systems processing CUI

- Agent memory inspection controls and periodic state validation protocols

- MCP server vetting and allowlisting for all AI agent tool connections

- Nation-state threat modeling integrated into AI system design and deployment review

- Zero trust extension to non-human AI identities, separate from human IAM frameworks

- Classified data handling protocols specific to LLM prompt and output layers

For a detailed breakdown of how AI agent autonomy creates security risks that existing government frameworks were not designed to address, read why AI agents increase security risk, including how interaction governance closes the non-human identity gap that zero trust leaves open.


What Is the Difference Between AI Governance and AI Security, and Why You Need Both in 2026?

AI governance defines the rules. AI security enforces them at runtime. Without both operating together as a unified control framework, you have either a policy with no technical teeth, or a security tool with no strategic direction telling it what to protect and why.

This is the distinction that most enterprise AI programs miss. And it is the reason organisations can pass a governance documentation audit in the morning and suffer an AI-layer data breach by afternoon.

In 2026, governance and security are not two separate workstreams. They are two layers of the same control system. Here is exactly how they differ, and why both are non-negotiable.

A Direct Comparison of AI Governance vs. AI Security

The fastest way to understand the relationship is to see what each layer covers independently, and where each one fails when it operates alone.

| | AI Governance | AI Security |
|---|---|---|
| Primary function | Defines rules, accountability, and compliance obligations | Enforces rules in real time at the model and agent layer |
| Operates at | Policy, documentation, risk frameworks | Prompt, output, tool call, agent reasoning chain |
| Who owns it | GRC, Legal, Compliance teams | CISO, Security Engineering |
| Visibility into AI behaviour | What should happen | What is happening right now |
| Regulatory evidence produced | Policy documents, risk assessments | Interaction logs, enforcement events, audit trails |
| Fails when | No technical enforcement layer exists | No governance framework directs what to enforce |
| 2026 mandate | EU AI Act, DORA, SEC require documented frameworks | EU AI Act, DORA require technical controls in production |

The table makes the dependency clear. Governance without security enforcement is documentation that does not stop a breach. Security without governance is reactive tooling with no framework to tell it what "secure" actually means for your organisation, your sector, or your regulatory environment.

Why Most Enterprise AI Programs Have One Without the Other

Key Stat:

80% of organisations have adopted AI tools in production, but only 6% report having an advanced AI security strategy in place to govern them technically.

— Stanford AI Index 2025

Most enterprise AI governance programs were built by GRC and legal teams. They were designed to satisfy documentation requirements: risk assessments, vendor approvals, acceptable use policies, ethics statements. They were not designed to enforce anything at the model layer, because the teams building them do not own the security stack.

Most enterprise AI security programs, where they exist at all, were built by security engineering as an extension of existing controls: SIEM rules, DLP policies, CASB configurations. They were not designed with a governance framework in mind, because the teams building them do not own the compliance obligation.

The result is a gap between two teams that are both working on AI risk but producing controls that do not connect.

The Governance-Security Gap in Practice

A financial firm's GRC team documents an AI governance framework that prohibits sharing client data with third-party models. Their security team configures DLP rules to flag large file transfers.

Neither control catches a prompt injection attack that extracts client portfolio data one query at a time, because the governance framework did not define that as a threat scenario, and the DLP rule was not built to inspect prompt-level interactions.

Both teams passed their audits. The breach happened in the gap between them.
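One way to picture a control that would catch this slow, query-by-query extraction is a per-session exposure budget at the output layer. The Python sketch below is illustrative only: the `ACCT-` identifier format, the threshold, and the `SessionExposureTracker` class are assumptions, not a description of any real firm's data or tooling.

```python
import re
from collections import defaultdict

# Assumption: client account identifiers look like "ACCT-" followed by six digits.
ACCOUNT_ID = re.compile(r"\bACCT-\d{6}\b")


class SessionExposureTracker:
    """Counts distinct sensitive identifiers released per session across many responses."""

    def __init__(self, max_distinct_ids: int = 3):
        self.max_distinct_ids = max_distinct_ids
        self._seen = defaultdict(set)  # session_id -> identifiers already released

    def check_output(self, session_id: str, model_output: str) -> bool:
        """Return True if the output may be released, False if it would exceed the budget."""
        ids = set(ACCOUNT_ID.findall(model_output))
        combined = self._seen[session_id] | ids
        if len(combined) > self.max_distinct_ids:
            return False  # blocked, so nothing new is recorded as released
        self._seen[session_id] = combined
        return True
```

No single response here would trip a file-size DLP rule. The control works because it looks at what a session has accumulated rather than at any one transfer.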

What a Unified AI Governance and Security Framework Looks Like

Closing the gap requires a single control framework where governance obligations directly inform security enforcement, and where security telemetry feeds back into governance evidence.

In practice, this means five things:

1. Governance obligations map directly to technical controls. Every policy requirement ("no PII in AI prompts," "agents must operate within defined tool scopes," "all AI interactions must be logged") has a corresponding technical enforcement mechanism in production. Not a reminder. Not a training module. A control that fires automatically.

2. Security controls produce governance evidence. Every prompt inspection event, output redaction, agent tool call block, and audit log entry is evidence of governance in action. Your security telemetry IS your compliance documentation, if your tools are instrumented correctly.

3. AI inventory is shared across both functions. GRC cannot govern AI systems they do not know exist. Security cannot protect AI systems that are not in scope. A unified, continuously updated AI inventory, covering every model, agent, and AI-enabled tool, is the foundation both functions share.

4. Incident response bridges governance and security. When an AI-native incident occurs (a prompt injection attempt, a compromised agent, an AI-layer data exfiltration), the response must simultaneously address the security event and the governance obligation. Who is accountable? What must be disclosed? Which regulatory framework triggered a reporting obligation? These questions must be answered in the same playbook.

5. Regulatory evidence is produced automatically. Under DORA, the EU AI Act, and SEC disclosure frameworks, governance evidence must be producible on demand. A unified framework produces that evidence continuously, through audit trails, enforcement logs, and control telemetry, rather than assembling it retrospectively when an auditor asks.
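The first two points above can be sketched in a few lines of Python: a single policy requirement ("no PII in AI prompts") is bound to a control that fires automatically, and every firing leaves an audit record. The policy identifier, the email-only definition of PII, and the in-memory `AUDIT_LOG` are simplifying assumptions for illustration.

```python
import json
import re
from datetime import datetime, timezone

# Simplification: treat email addresses as the only PII type for this sketch.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store


def enforce_no_pii(prompt: str, user: str) -> str:
    """Redact email addresses from a prompt and record the enforcement event."""
    redacted, count = EMAIL.subn("[REDACTED_EMAIL]", prompt)
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "policy_id": "POL-001-no-pii-in-prompts",  # hypothetical policy identifier
        "control": "prompt_email_redaction",
        "user": user,
        "action": "redacted" if count else "allowed",
        "matches": count,
    }
    # The same event is both security telemetry and governance evidence.
    AUDIT_LOG.append(json.dumps(event))
    return redacted
```

Because each event carries the policy identifier, the log can later be filtered per policy or per regulation without any manual reconstruction.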

How NIST, OWASP, and ISO 42001 Define the Governance-Security Relationship

The three most authoritative frameworks for enterprise AI risk all converge on the same conclusion: governance and security must be integrated, not parallel.

- NIST AI Risk Management Framework. The NIST AI Risk Management Framework organises AI risk management into four functions: Govern, Map, Measure, and Manage. Security controls sit within Manage, but they are explicitly directed by the governance function. Without the governance layer defining what risks matter and what outcomes are acceptable, the Manage function has no strategic direction.

- OWASP LLM Top 10. The OWASP LLM Top 10 defines the ten most critical security risks specific to LLM-based systems, from prompt injection and insecure output handling to over-permissioned agents and model denial of service. Every item on the OWASP LLM Top 10 is a security failure that a governance framework must explicitly address. If your governance policy does not reference these threat classes, your security team does not know which controls to prioritise.

- ISO 42001, AI Management Systems Standard. The ISO 42001 standard is the first international standard specifically for AI management systems. It explicitly integrates governance obligations (risk assessment, accountability structures, transparency requirements) with operational controls. ISO 42001 certification is already being used as evidence of governance maturity in EU AI Act compliance assessments. It is the clearest external signal that your governance and security programs are operating as a unified system.

The LangProtect Position: Governance and Security as a Single Layer

This is the gap LangProtect was built to close.

Most AI security tools operate at the perimeter: they watch network traffic, flag large file transfers, and report on user behaviour. They do not sit between the user and the model. They do not inspect prompt content. They do not classify output before it reaches the user. They do not enforce agent access scope in real time.

LangProtect's Armor operates at the interaction layer, the exact point where governance obligations and security enforcement must meet. Every prompt is inspected. Every output is classified. Every agent tool call is evaluated against a defined scope. Every interaction is logged for audit. Governance defines what the controls enforce. Security makes sure they fire.

For a full breakdown of how the interaction governance layer works, and how it maps to the threat classes your existing stack cannot see, read our analysis of why AI agents increase security risk and how real-time prompt filtering prevents data leaks at the layer where governance actually needs to operate.

Governance and Security: What Your Program Needs to Cover Together

Here is the integrated control map every AI governance and security program needs in 2026:

| Control | Governance layer | Security enforcement layer |
|---|---|---|
| AI inventory | Policy: what systems are in scope | Technical: continuous discovery of all AI tools |
| Data classification | Policy: what data AI can process | Technical: prompt inspection and output redaction |
| Agent access | Policy: what agents are permitted to do | Technical: tool scope enforcement in real time |
| Audit trail | Policy: what must be logged and retained | Technical: interaction logging for every AI event |
| Incident response | Policy: who is accountable and what is reportable | Technical: detection, containment, and evidence capture |
| Regulatory evidence | Policy: what frameworks apply | Technical: automated evidence generation per framework |

Every row where your governance layer has a policy but your security layer has no technical enforcement is a gap. Every row where your security layer has a control but your governance layer has no policy directing it is wasted tooling.

The goal is a complete row for every control. In 2026, anything less is an exposure.
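The row-by-row test described above is simple enough to automate. As a minimal sketch, assuming each control is recorded with two hypothetical boolean fields (`has_policy` and `has_enforcement`), a gap report might look like this in Python:

```python
from dataclasses import dataclass


@dataclass
class ControlRow:
    name: str
    has_policy: bool       # governance layer: a written policy exists
    has_enforcement: bool  # security layer: a technical control is live in production


def classify(row: ControlRow) -> str:
    """Label a control row according to which layers are present."""
    if row.has_policy and row.has_enforcement:
        return "complete"
    if row.has_policy:
        return "gap: policy without enforcement"
    if row.has_enforcement:
        return "wasted tooling: enforcement without policy"
    return "missing: no policy and no enforcement"


def audit_control_map(rows: list[ControlRow]) -> dict[str, str]:
    """Produce a per-control status report for the whole map."""
    return {row.name: classify(row) for row in rows}
```

For example, `audit_control_map([ControlRow("Agent access", True, False)])` labels that row as a policy without enforcement, which is exactly the kind of exposure the table is meant to surface.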

Governance Documents Are Not Enough. Let Us Show You What Enforcement Looks Like.

LangProtect closes the gap between your AI governance obligations and your security controls, in a single enforcement layer built for your regulatory environment.

AI Governance in 2026 Is an Enforcement Problem — Not a Policy Problem

The shift that defines AI governance in 2026 is not regulatory; it is architectural. The organisations that will satisfy EU AI Act auditors, pass DORA reviews, and survive SEC scrutiny are not the ones with the most comprehensive AI ethics statements. They are the ones that built enforcement into their AI stack before a regulator asked to see it.

Three things are true at once: the EU AI Act's August 2 deadline is real, the penalties are material, and most enterprise AI governance programs are still built around documentation rather than controls. That gap closes in one of two ways: by choice before the deadline, or by enforcement after it.

The six controls covered in this post (AI inventory, prompt enforcement, output redaction, agent access control, audit trails, and AI-specific incident response) are not a maturity roadmap. They are the minimum viable governance program for any organisation deploying AI in a regulated environment in 2026. Below this baseline, you are not governing your AI. You are hoping it behaves.

The difference between a governance document and a governance program is a technical enforcement layer. That layer sits between your users and your models. It classifies every prompt. It redacts every sensitive output. It scopes every agent. It logs every interaction. And when an auditor asks to see your AI governance evidence, it produces it automatically, because it has been producing it continuously, from the moment you turned it on.

That is what AI governance actually requires in 2026. Not a better policy. A working control.

Your AI Governance Policy Is Not Enough. Let Us Show You What Enforcement Looks Like.

Book a 30-minute demo with our security team and we will show you exactly where your stack is exposed before your next audit finds it first.

Frequently Asked Questions

Q: What does AI governance actually require in 2026?

A: In 2026, AI governance requires six technically enforced controls: AI inventory and discovery, prompt-level enforcement, output monitoring and redaction, agent access control, audit trail generation, and AI-specific incident response. A written policy document alone does not constitute governance — each control must be technically active at the LLM or agent layer in production. Organisations without these controls in place are exposed to regulatory action under the EU AI Act, DORA, and SEC oversight frameworks — all of which are actively enforcing in 2026.

Q: Is AI governance the same as AI compliance?

A: No — and the difference matters significantly in 2026. Compliance means meeting the minimum documented requirements of a regulation. Governance is the ongoing system of policies and technical controls that ensures your AI behaves as intended across its entire lifecycle — before, during, and after deployment. You can be fully compliant on paper and still have a critical AI security gap. Governance is what compliance is supposed to produce. In most enterprises today, only the paperwork exists.

Q: What regulations require AI governance controls in 2026?

A: Three regulations are actively driving AI governance enforcement in 2026. The EU AI Act requires high-risk AI systems to have documented risk management, data governance, technical robustness controls, and human oversight mechanisms in place by August 2, 2026. Breaches of these high-risk obligations carry penalties of up to €15M or 3% of worldwide annual turnover; the €35M or 7% tier applies to prohibited AI practices. DORA has applied since January 2025, requiring financial institutions to demonstrate ICT risk controls that explicitly cover AI systems. The SEC's Cyber and Emerging Technologies Unit is actively pursuing AI washing cases against organisations that misrepresent their AI governance posture in public disclosures.

Q: What is the EU AI Act deadline in 2026 and what happens if I miss it?

A: August 2, 2026 is the compliance date for organisations deploying high-risk AI systems under the EU AI Act. By this date, affected organisations must have compliant risk management systems, data governance controls, technical robustness measures, and human oversight mechanisms documented and operational. Missing this deadline exposes organisations to penalties of up to €15 million or 3% of worldwide annual turnover, whichever is higher; the €35 million or 7% tier is reserved for prohibited AI practices. National market surveillance authorities across EU member states have the power to require non-compliant systems to be withdrawn from the market entirely. Early assessment and documented remediation progress are the strongest risk mitigation position available today.

Q: What is the difference between AI governance and AI security?

A: AI governance defines the rules — what your AI systems are permitted to access, process, and output, and who is accountable when those boundaries are crossed. AI security enforces those rules in real time at the model and agent layer, intercepting threats like prompt injection, data exfiltration, and unauthorised tool calls before they complete. Governance without security enforcement is documentation. Security without governance is reactive tooling with no strategic framework. In 2026, both must operate together as a unified control system — governance directing what the controls enforce, security making sure they fire automatically.

Q: How do I know if my AI system qualifies as high-risk under the EU AI Act?

A: If your AI system makes or informs decisions about credit, employment, legal outcomes, or access to public services, or operates within critical infrastructure, it almost certainly qualifies as high-risk under EU AI Act Annex III. High-risk systems require mandatory conformity assessment, technical documentation, a functioning risk management system, and human oversight controls before deployment. If you are unsure of your classification, treating the system as high-risk and documenting your rationale is the most defensible compliance position available. Waiting for a regulator to classify it for you is not.

Q: What is governance theater in AI security — and how do I know if my program has it?

A: Governance theater is when an organisation has written AI policies, acceptable use guidelines, and compliance checklists — but has no technical controls enforcing those policies at the LLM or agent layer in production. The policy says "do not share confidential data with AI tools." The AI agent has no mechanism to know or enforce that instruction. The fastest way to identify governance theater in your own program is to ask one question: "Show me a control that fired last week." If your governance program cannot point to a blocked prompt, a redacted output, or a flagged agent tool call as evidence of enforcement, it is not a governance program — it is a governance intention.

Q: Do I need a separate tool for AI governance or does my existing SIEM cover it?

A: Your existing SIEM does not cover AI governance requirements — and neither does your DLP or CASB. Traditional security tools operate at the network and file layer. They were not built to inspect prompt content, classify model outputs, monitor agent reasoning chains, or detect prompt injection in real time. AI governance in 2026 requires a dedicated enforcement layer positioned between your users and your models — at the interaction layer where AI-specific threats actually occur. This is a fundamentally different control surface from what any existing enterprise security tool was designed to address.

Q: How do I audit my AI systems for compliance before a regulator does?

A: Start with an AI inventory — identify every model, agent, and AI-enabled tool in use across your organisation, including shadow AI and browser-based tools your security team has not approved. For each system, document what data it can access, what it can output, who has access, and what controls exist at the prompt and output layer. Map each system against EU AI Act Annex III risk categories. Then test whether your governance controls are technically enforced — not just documented. The critical question is this: if a regulator asked you to produce a complete AI interaction log for the last 30 days right now, could you? If not, your governance program has a gap that needs to be closed before your next audit.

Q: What happens if my organisation misrepresents its AI governance posture publicly?

A: Misrepresenting your AI governance posture in public disclosures — investor materials, product pages, SEC filings, or press releases — is an active enforcement target for the SEC's Cyber and Emerging Technologies Unit in 2026. The enforcement theory is straightforward: if your organisation makes public statements about AI safety, governance maturity, or risk management that cannot be supported by technical controls in production, those statements constitute a material misrepresentation. The SEC has already filed cases under this theory. Every public-facing AI governance claim your organisation has made should be reviewed against what your technical controls can actually demonstrate — and either the claim or the control needs to be fixed.
