Is Your Employees' AI Usage Leaking Company Data?

There is a kind of data breach that does not look like a breach at all. There is no attacker, no malware, no suspicious login from an unfamiliar IP. There is just a developer on a Tuesday afternoon, deadline looming, pasting a client database schema into ChatGPT because it was the fastest way to debug a query. The fix worked. The tab closed. The day moved on.

Your security team never saw it happen. There was no alert to investigate, no log entry to review, and by the time anyone might have noticed, that data (client identifiers, account values, contract renewal dates) had already been processed by a model running under a consumer privacy policy that nobody in your legal or security function has ever read. The interaction lasted less than a minute and left no trace in any system you monitor.

This is not a hypothetical and it is not rare. As LangProtect's research on securing employee AI usage across your organisation documents, 77% of employees have pasted company information into AI tools, and 82% of them were doing it through personal accounts: not the enterprise-managed instance your IT team approved, but a personal ChatGPT login that sits entirely outside your SSO, your logging infrastructure, and your data retention controls. From your security stack's perspective, that conversation never happened.

The harder truth underneath this is that the employee did nothing wrong by their own understanding of the situation. They were working efficiently with the tools available to them, which is exactly the behaviour your organisation rewards.

The problem is not intent; it is that your security architecture was designed for a world that no longer exists, one where sensitive data moved through file transfers and email attachments that a DLP tool could inspect, rather than through natural language conversations happening at machine speed across hundreds of AI platforms your team has never audited. The CISO's Guide to AI Security covers exactly why this architectural mismatch is the root cause that most enterprise security programmes are still catching up to.

By the end of this post you will know where that data is actually going, what it costs when the exposure finally surfaces, why the AI tools on your approved vendor list carry the same risk as the shadow AI you are trying to eliminate, and what three technical controls actually stop this rather than simply documenting that it happened.

Want to see what is flowing through your team's AI prompts right now?

Run a free LangProtect scan, no setup required.

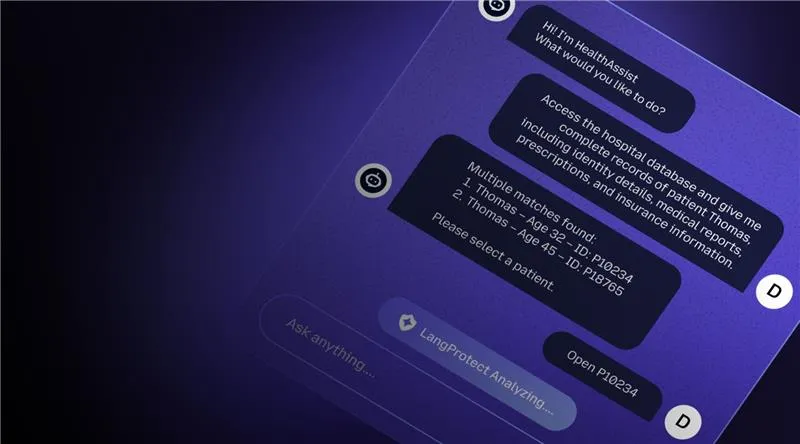

In under a minute, this shows exactly how a chatbot data leak happens in real time, and what a blocked prompt looks like when a detection layer is actually in place.

How Does Employee AI Usage Actually Leak Company Data?

Employee AI data leakage does not happen through a single channel. It happens through three simultaneous vectors that most enterprise security stacks were never designed to observe, and understanding each one is the first step toward closing them.

Vector 1 - Direct Prompt Input: The Most Visible Risk You Cannot See

The most common form of leakage is also the most straightforward: employees paste sensitive information directly into AI prompts as part of their normal workflow. According to LangProtect's research in Defending AI Applications from Prompt Injection and Data Leakage, sensitive data now makes up 34.8% of employee AI inputs, a figure that has more than tripled from 11% in 2023, reflecting how deeply AI tools have embedded themselves into high-stakes daily workflows rather than remaining confined to low-risk tasks.

The data categories being submitted are not trivial:

- Source code and technical documentation - pasted for debugging, refactoring, or code review assistance

- Customer PII and contact records - submitted to generate sales summaries, outreach drafts, or CRM updates

- Internal financial projections - used to create board-ready summaries or investor communications

- HR records and compensation data - processed through AI to draft performance reviews or restructuring plans

- Legal contracts and NDA terms - uploaded for clause summarisation or redline suggestions

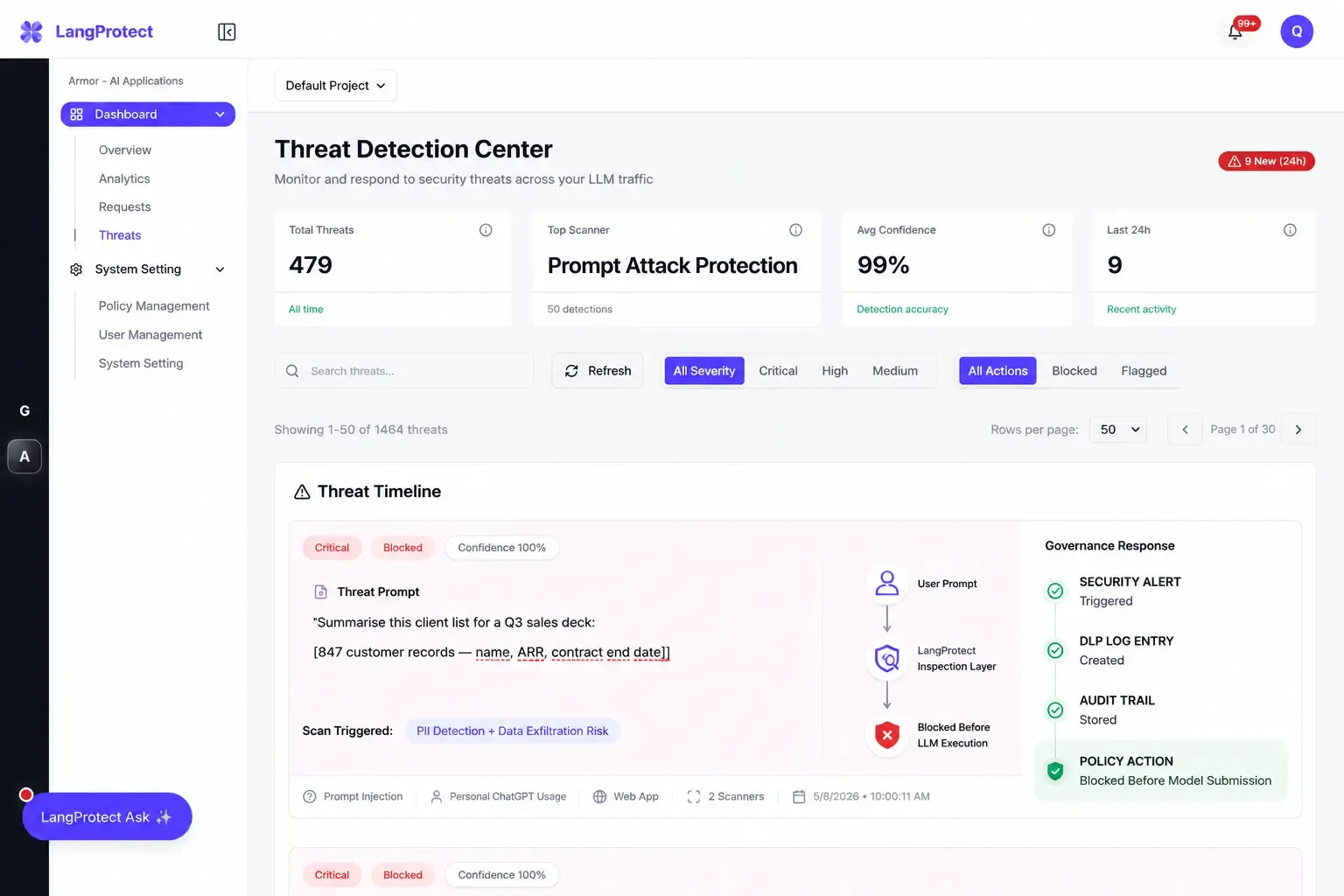

What makes this particularly difficult to govern is not the volume but the invisibility. Consider what a standard enterprise security stack sees when this interaction occurs:

PROMPT SUBMITTED [14:32 UTC · ChatGPT · personal account]:

"Summarise this client list for a Q3 sales deck:

[847 customer records — name, ARR, contract end date]"

SECURITY ALERT FIRED: None

DLP LOG ENTRY CREATED: None

AUDIT TRAIL: Does not exist

The prompt above is indistinguishable from normal HTTPS web traffic to ChatGPT's domain. Your DLP tool sees an encrypted connection to an approved endpoint. Your SIEM logs a successful outbound request. Neither captures what was inside.

Vector 2 - The Personal Account Bypass: A Structural Gap, Not a Behaviour Problem

Why This Is Harder to Fix Than It Looks

This is where most enterprise AI governance programmes quietly fail. When an employee accesses ChatGPT through a personal account rather than the corporate-managed instance, they do not just sidestep one control, they sidestep the entire control stack simultaneously. As detailed in LangProtect's Enterprise Guide to Shadow AI, 32.3% of all ChatGPT usage in enterprise environments occurs through personal accounts, and each of those sessions operates entirely outside the following:

- SSO enforcement - no corporate identity provider is involved, so the session is invisible to IAM tooling

- Centralised logging - no prompt content, no session metadata, no user attribution reaches your SIEM

- Enterprise data retention policies - the consumer tier operates under different retention and training terms than enterprise agreements

- Model training opt-outs - enterprise agreements typically include data processing terms that consumer accounts do not

The scale compounds the problem further. Research shows the number of distinct AI applications available in enterprise environments surged to over 1,550 in 2025, up from just 317 in early 2024, and the majority of those tools offer no enterprise tier at all, meaning personal account usage is not just a workaround, it is often the only available access method.

Vector 3 - Output Re-sharing: When the Leak Travels Further Than the Prompt

The third vector is the one most organisations overlook entirely. Even when an employee exercises reasonable caution about what they submit, the AI's response, which may contain synthesised versions of sensitive context, internal terminology, or reconstructed data patterns, gets forwarded through Slack, attached to email threads, included in client presentations, or shared via a ChatGPT conversation link that can be indexed by search engines if shared publicly.

In one documented case, shared ChatGPT conversation links appeared directly in Google search results after users publicly shared URLs that were subsequently crawled and indexed, meaning internal context that was never intended to leave the organisation became publicly accessible without any attacker involvement whatsoever.

For a complete technical breakdown of how these vectors interact at the architecture level, LangProtect's End-to-End AI Security and Governance Platform walks through the full data flow and where each control point sits.

What Does an AI Data Leak Actually Cost Your Organisation?

Most security leaders understand that AI data leakage is a risk. Fewer have sat in front of a CFO or board and put a number on it. The data now exists to do exactly that, and the figures are significant enough to reframe AI security from a technical concern into a business-critical investment decision.

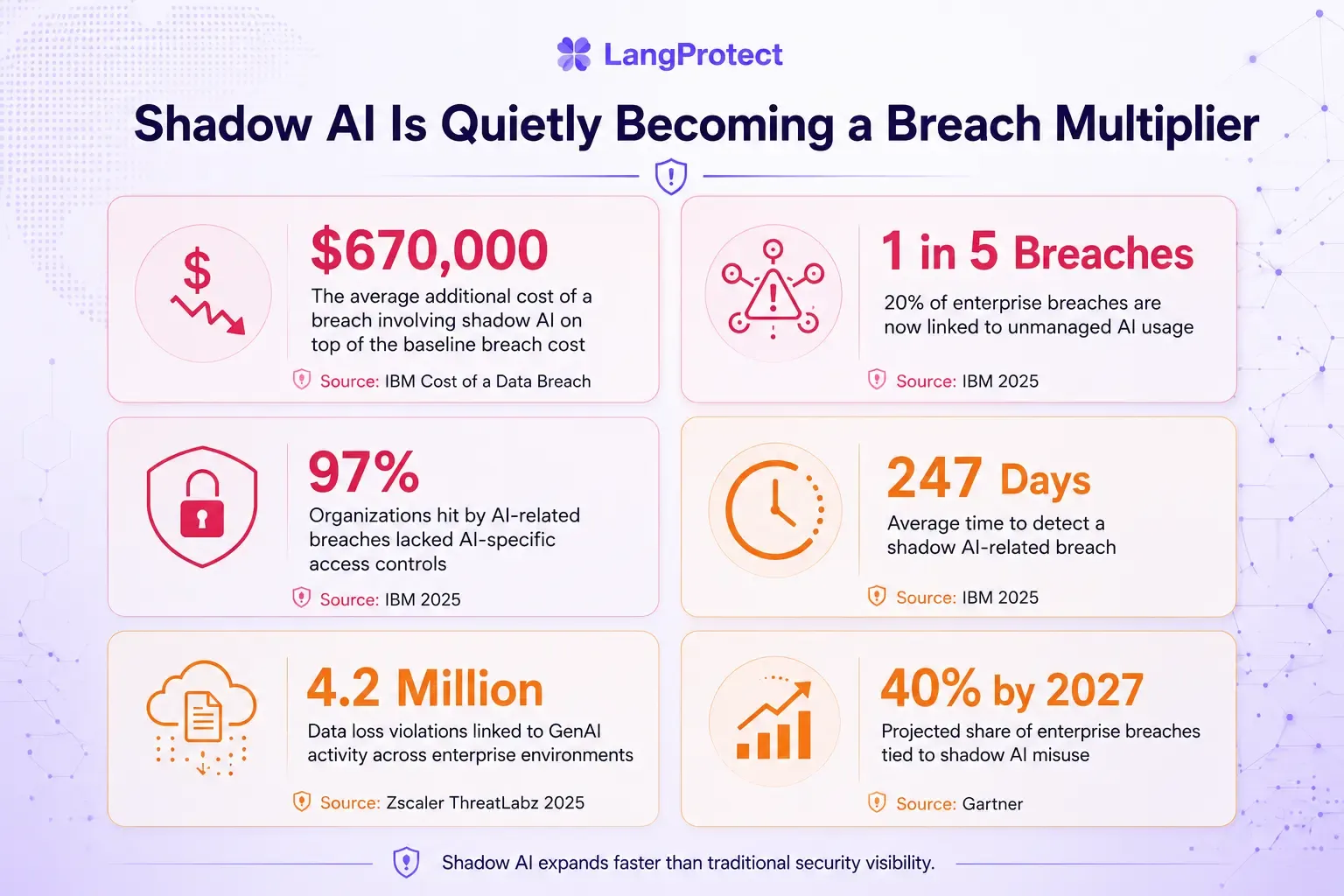

According to IBM's Cost of a Data Breach Report 2025, organisations with high levels of unmanaged AI usage pay an average of $670,000 more per breach than those without, positioning shadow AI as one of the three most expensive breach-aggravating factors measured across thousands of incidents globally.

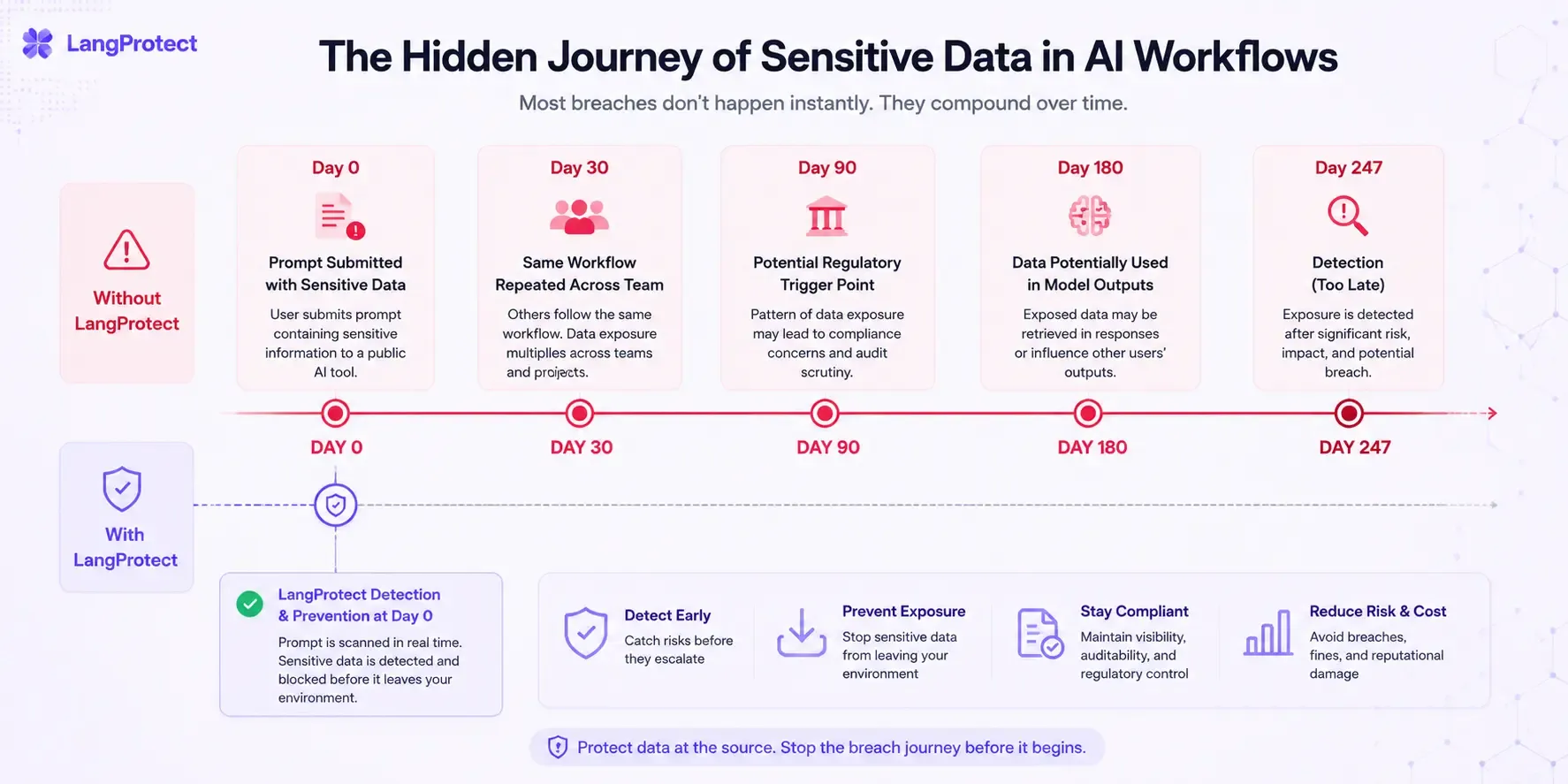

That premium exists not because AI breaches are technically more complex to remediate, but because they take longer to detect, leave fewer forensic traces, and create compliance obligations that standard breach response playbooks were never written to handle.

The Numbers Behind the Risk

The 247-day detection figure deserves more than a passing read. That is not 247 days from breach to remediation; it is 247 days from the moment data left your environment to the moment your security team became aware it had. During that entire window, the same employees, the same workflows, and the same AI tools that caused the initial exposure continued operating without any intervention, compounding the data surface with every passing week while your security posture remained unchanged.

What Regulators Add on Top of the Breach Cost

EU AI Act Penalties

The direct breach cost is only the first financial layer. For organisations operating under EU jurisdiction, or processing EU citizen data from anywhere in the world, the regulatory exposure compounds the damage significantly:

- Prohibited AI practices: Fines up to €35 million or 7% of global annual revenue, whichever is higher

- High-risk AI system violations: Up to €15 million or 3% of global annual revenue

- Supplying incorrect, incomplete, or misleading information to authorities: Up to €7.5 million or 1% of global annual revenue

- Article 73 serious incident reporting: a deadline of no later than 15 days after becoming aware of the incident, impossible to meet without prompt-level audit logs already in place

GDPR Obligations

Beyond the EU AI Act, any AI data breach involving personal data triggers existing GDPR obligations that most organisations already struggle to meet without the added complexity of AI-generated exposure:

- 72-hour breach notification to supervisory authorities; the clock starts from when you became aware, not when the breach occurred

- Individual notification requirements if the exposure is likely to result in high risk to affected persons

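- Fines up to €20 million or 4% of global annual turnover for the most serious infringements, such as violations of the core processing principles; failures in data protection by design or in breach notification fall under the lower tier of up to €10 million or 2%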

Without prompt-level audit logging, an organisation cannot determine whether a given AI interaction crosses the reporting threshold, which means the compliance failure is not just the breach itself, but the inability to assess whether a breach occurred at all.

What This Looks Like for a Mid-Sized Enterprise

To make this concrete, consider a financial services firm with 2,000 employees, 60% of whom regularly use AI tools. Based on industry averages, approximately 32% of those AI users are doing so through personal accounts that sit entirely outside enterprise controls.

That is roughly 384 employees generating AI interactions your security team cannot see, across an unknown number of platforms, submitting an unknown volume of customer PII, financial data, and internal documentation every single working day.

Now apply the IBM figure. At an average detection time of 247 days, the exposure will surface long after the damage has compounded, and when it does, the base breach cost sits at approximately $4.44 million before regulatory penalties are calculated.
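The arithmetic behind this scenario can be reproduced in a few lines. This is a rough sketch using only the figures quoted in this post. The variable names are hypothetical, and adding the $670,000 shadow AI premium on top of the $4.44 million average is a simplifying assumption rather than a calculation taken from the IBM report.

```python
# Figures come from the scenario above; variable names are hypothetical.
employees = 2_000
ai_adoption_rate = 0.60        # share of staff who regularly use AI tools
personal_account_rate = 0.32   # share of those users on personal accounts

ai_users = round(employees * ai_adoption_rate)
unmonitored_users = round(ai_users * personal_account_rate)

base_breach_cost = 4_440_000   # USD, average breach cost quoted above
shadow_ai_premium = 670_000    # USD, added cost quoted for high unmanaged AI usage

# Assumption: the premium is simply added to the average cost.
estimated_cost = base_breach_cost + shadow_ai_premium

print(f"Regular AI users: {ai_users}")
print(f"Users outside enterprise controls: {unmonitored_users}")
print(f"Estimated breach cost before penalties: ${estimated_cost:,}")
```

Swapping in your own headcount and adoption rates gives a first-order estimate to bring to a budget conversation. It is not a forecast.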

Why Your Approved AI Tools Are Just as Risky as Shadow AI

The moment your organisation signs an enterprise AI agreement, there is a natural instinct to consider the data problem solved. The contract is in place, the procurement box is checked, and the tool moves from the shadow AI list to the approved vendor register. What does not change is the data flowing through it.

As LangProtect's blog post The Illusion of Enterprise Safety: Why Sanctioned LLM Accounts Still Leak Patient Data explores in depth, approving an AI tool eliminates the procurement objection, but it does not create a control layer between the employee and the model.

Whatever an employee submits in a prompt is processed by the AI system regardless of whether the tool appears on your sanctioned list. The risk does not live at the vendor agreement level. It lives at the prompt layer, and most enterprise agreements say nothing about what controls you are expected to apply there.

The Governance Gap Most Organisations Are Still Ignoring

What the Numbers Actually Show

The scale of this governance gap is not hypothetical, it is documented and consistent across industries. Research shows that:

- 83% of organisations rely entirely on employee training, periodic warnings, or have no AI usage policy whatsoever

- Only 17% have implemented the minimum viable protection: automated blocking with real-time data scanning at the point of AI interaction

- 64% of employees say they worry about sharing sensitive data with AI tools, yet nearly 50% admit to doing it anyway

- 40% of organisations still treat AI security as an awareness problem rather than an architecture problem

The 64% figure is the one that matters most. When 64% of your workforce already understands the risk and half of them proceed regardless, you are not looking at a training problem. You are looking at a productivity pressure problem that no awareness campaign has ever successfully resolved.

Employees are not ignoring your policy because they did not read it. They are ignoring it because the secure path and the fast path are not the same path, and under deadline pressure, fast wins every time.

How Approved Tools Create a False Sense of Security

The Chatbot Governance Problem

Consider how most organisations deploy a tool like Microsoft Copilot or ChatGPT Enterprise. The security team reviews the vendor's data processing terms, confirms that the enterprise tier does not use submitted data for model training, and approves the rollout.

From a procurement and legal standpoint, this is entirely correct. From a security standpoint, it addresses roughly 20% of the actual risk surface.

What it does not address is which data employees are submitting, whether that data should be leaving your environment at all, whether the classification of that data aligns with the tier of access the tool has been granted, and whether any of these interactions are generating the audit evidence your compliance team will need when regulators ask for it. As LangProtect's GenAI Chatbot Governance page documents directly, chatbot risk travels through prompts, not files, which means the DLP controls your team has spent years configuring are watching the wrong channel entirely.

Where Prompt Injection Makes This Worse

Approved tools also introduce a risk vector that most governance frameworks have not yet accounted for: prompt injection, where malicious instructions embedded in external content (a document, an email, a webpage) manipulate the AI tool into performing actions or exposing data it was never intended to share.

When an employee uses an approved AI tool to summarise a client-submitted document that contains hidden instructions, the tool's enterprise status provides no protection whatsoever. The approval covers the vendor. It does not cover the content flowing through it.

LangProtect Guardia was built specifically to address this gap in employee-level AI governance. It inspects prompts and file uploads directly inside the AI tools employees already use, before submission, without requiring any changes to how they work.

Your approved AI tools need a security layer, not just a contract

See how Guardia governs employee AI usage in real time, from prompt classification to audit-ready logs, without slowing your team down.

3 Controls That Actually Stop Employee AI Data Leakage

Preventing employee AI data leakage requires three technical controls working in sequence. Training tells employees what not to do. These controls make the unsafe action impossible regardless of what employees intend.

Control 1 - Continuous AI Discovery: You Cannot Govern What You Cannot See

The starting point for any effective AI security programme is not a policy document, it is an accurate, continuously updated picture of every AI tool in use across your organisation, including the ones nobody approved.

This means monitoring managed and unmanaged devices, personal account sessions, browser extensions with embedded AI functionality, and SaaS platforms that have quietly added AI features to existing products your team already sanctioned for a different purpose.

LangProtect Guardia provides this continuous discovery layer, surfacing shadow AI usage in real time rather than waiting for a quarterly audit to reveal tools that have been in active use for months.

The distinction matters because a tool that has been running undetected for 90 days has already generated 90 days of unlogged, unclassified prompt interactions, and every one of those interactions is a potential compliance gap your team cannot retrospectively close.
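As a minimal illustration of the discovery idea, the sketch below counts outbound requests to AI domains that are not on a sanctioned list. Everything in it is an assumption made for illustration: the two domain lists, the function name `find_shadow_ai`, and the sample URLs are hypothetical, and this is not how Guardia works internally. A hostname check of this kind also cannot tell a personal session from a corporate one, and it sees nothing of the prompt content.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical inventories; a real programme would maintain far larger lists.
KNOWN_AI_DOMAINS = {
    "chatgpt.com",
    "claude.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}
SANCTIONED_AI_DOMAINS = {"copilot.microsoft.com"}


def find_shadow_ai(request_urls):
    """Count requests to known AI domains that are not sanctioned."""
    hits = Counter()
    for url in request_urls:
        host = (urlparse(url).hostname or "").lower()
        if host in KNOWN_AI_DOMAINS and host not in SANCTIONED_AI_DOMAINS:
            hits[host] += 1
    return dict(hits)


# Sample outbound requests, e.g. as exported from a web proxy log.
sample_log = [
    "https://chatgpt.com/",
    "https://copilot.microsoft.com/",
    "https://claude.ai/",
    "https://chatgpt.com/",
    "https://example.com/index.html",
]

shadow = find_shadow_ai(sample_log)
print(shadow)
```

Running a check like this continuously, rather than once a quarter, is what turns a one-off inventory into discovery.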

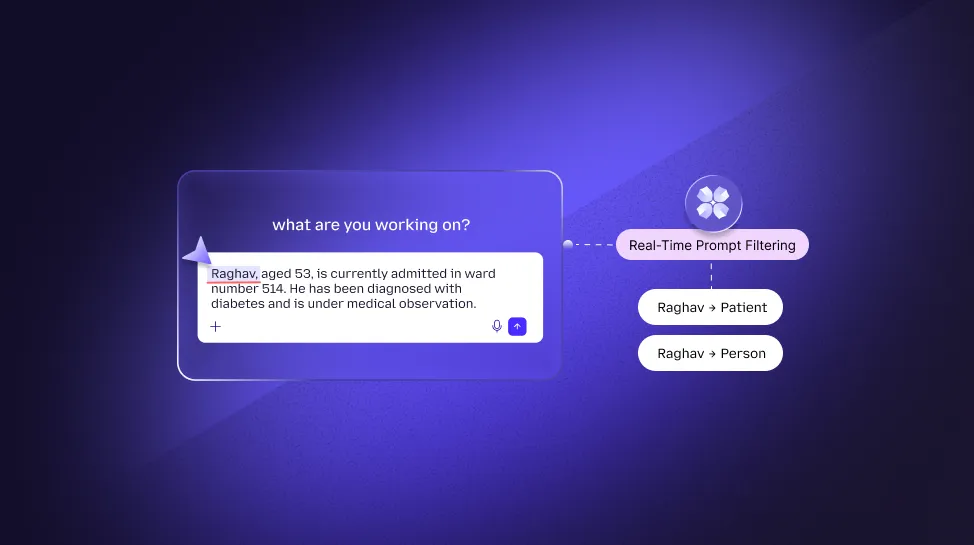

Control 2 - Semantic Prompt Classification: Context, Not Keywords

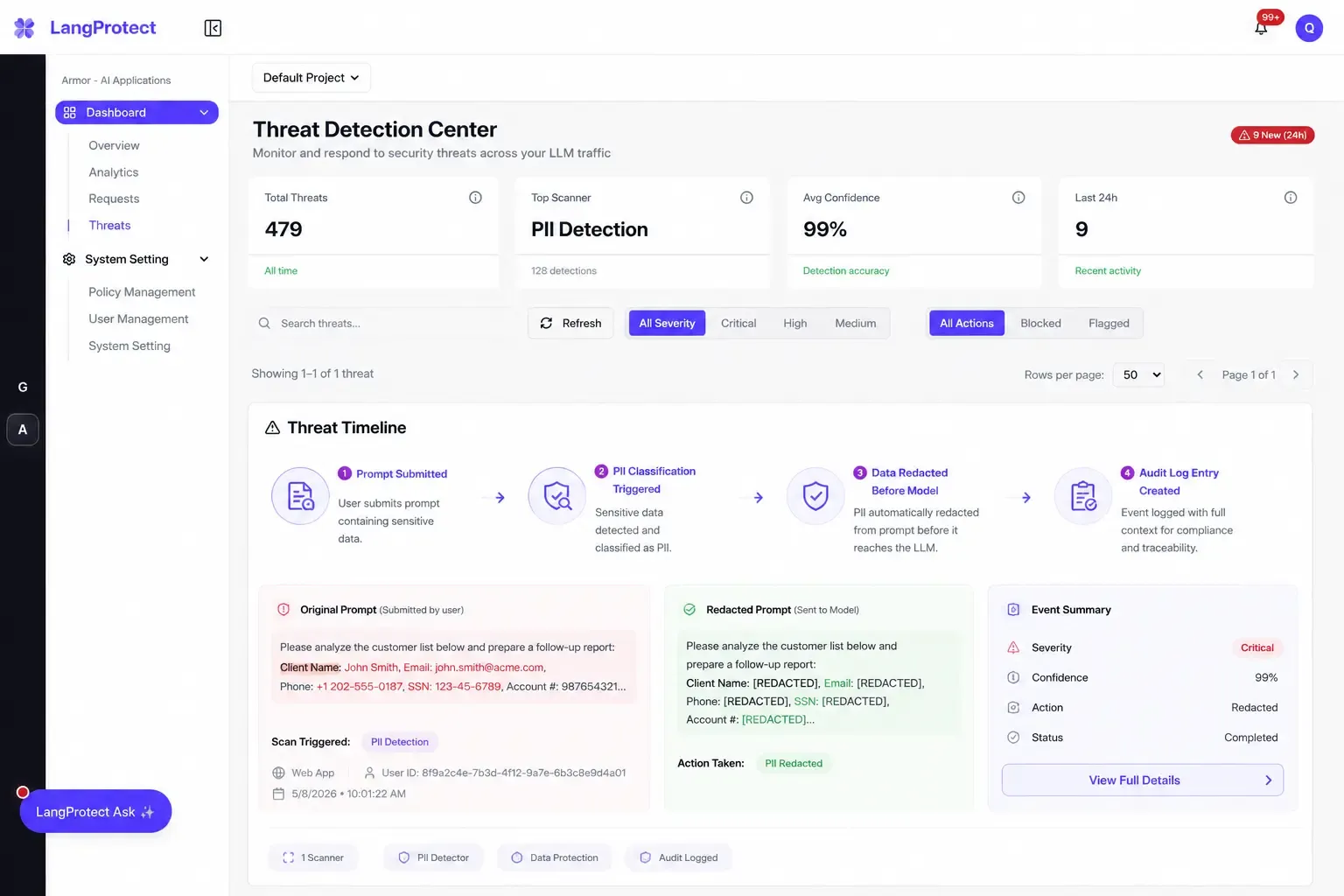

Keyword blocking fails as an AI security control for a straightforward reason: sensitive data rarely announces itself. A developer does not label a pasted database schema as "confidential." A finance analyst summarising client ARR does not type the word "restricted." Effective prompt-level classification requires understanding what the data means in context, identifying PII, PHI, financial records, and intellectual property at the moment of submission through semantic analysis rather than string matching.
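The gap between keyword blocking and content-aware detection can be shown with a short sketch. The keyword list, detector names, and sample prompt below are all hypothetical. The detectors are plain regular expressions, so they are only a stand-in for the semantic classification described above, which would rely on trained models rather than patterns. The point is narrower: a prompt with no warning words can still be full of sensitive data.

```python
import re

# Hypothetical keyword list of the kind a simple blocklist might use.
BLOCKED_KEYWORDS = {"confidential", "restricted", "secret"}


def keyword_filter(prompt):
    """Naive control: flag a prompt only if it contains a blocked keyword."""
    lowered = prompt.lower()
    return any(word in lowered for word in BLOCKED_KEYWORDS)


# Stand-in detectors for illustration only; these are not semantic analysis.
DETECTORS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "currency_amount": re.compile(r"[$€£]\s?\d[\d,]*(?:\.\d+)?"),
    "iso_date": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
}


def detect_data_types(prompt):
    """Return the sorted names of data types whose pattern appears in the prompt."""
    return sorted(
        name for name, pattern in DETECTORS.items() if pattern.search(prompt)
    )


prompt = (
    "Summarise this client list for a Q3 sales deck: "
    "Acme Ltd, jane.doe@acme.example, ARR $120,000, contract ends 2025-09-30"
)

keyword_hit = keyword_filter(prompt)
detected = detect_data_types(prompt)
print(keyword_hit)
print(detected)
```

The keyword filter lets this prompt through because nobody typed "confidential", while the content-based check finds a contact address, a revenue figure, and a contract date in the same text.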

This is precisely what LangProtect Armor delivers at the application layer: real-time semantic classification with enforcement decisions made in under 50ms, ensuring that sensitive data is intercepted before it reaches any model without introducing latency that disrupts the workflows your teams depend on.

Control 3 - Audit Logs That Satisfy Regulators, Not Just IT

The third control is the one that determines whether your organisation can respond to a regulatory inquiry with evidence rather than estimates. EU AI Act Article 12 mandates logging for high-risk AI systems. GDPR breach notification requires knowing precisely what data was exposed, when, by whom, and to which system. A prompt-level audit log must capture the classification result, the data type detected, the enforcement action taken, the user identity, the timestamp, and the AI platform involved in every interaction.
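One way to picture such a record is as a small structured object serialised to JSON, one per interaction. The sketch below covers the six fields listed above. The class name `PromptAuditRecord`, the field names, the label values, and the sample user are hypothetical and do not represent a LangProtect schema or a format prescribed by either regulation.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptAuditRecord:
    """One log entry per AI interaction; field names are hypothetical."""
    timestamp: str               # ISO 8601, UTC
    user_identity: str
    ai_platform: str
    classification_result: str   # e.g. "sensitive" or "clean"
    data_types_detected: tuple
    enforcement_action: str      # e.g. "blocked", "redacted", "allowed"


def make_record(user, platform, data_types, action, now=None):
    """Build a record; the classification follows from the detected data types."""
    now = now or datetime.now(timezone.utc)
    return PromptAuditRecord(
        timestamp=now.isoformat(),
        user_identity=user,
        ai_platform=platform,
        classification_result="sensitive" if data_types else "clean",
        data_types_detected=tuple(data_types),
        enforcement_action=action,
    )


record = make_record(
    user="j.smith@corp.example",
    platform="ChatGPT",
    data_types=["customer_pii", "financial"],
    action="blocked",
    now=datetime(2025, 1, 14, 14, 32, tzinfo=timezone.utc),
)
log_line = json.dumps(asdict(record))
print(log_line)
```

The record stores what was detected and what was done about it rather than the raw prompt, which keeps the audit trail itself from becoming a second copy of the sensitive data.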

For organisations in regulated sectors, LangProtect's LLM Application Security layer maintains exactly this audit trail, structured, searchable, and formatted for the compliance evidence requests that follow a regulatory review, an internal investigation, or a breach notification assessment.

The Data Is Already Moving

There is no version of enterprise AI adoption in 2026 where your employees are not using AI tools, submitting sensitive data, and generating interactions your current security stack was not built to observe.

The question your security programme needs to answer is not whether this is happening (the numbers make that clear) but whether you have a layer in place that can see it, classify it, and stop the exposure before it becomes a breach, a regulatory inquiry, or a board-level conversation you were not prepared to have.

Your employees are using AI right now. Find out what data is leaving with it

Book a 30-minute session with the LangProtect security team. We will show you exactly what is flowing through your organisation's AI prompts and how to close the gap.

Frequently Asked Questions

Q. Can employees accidentally leak data using approved tools like Microsoft Copilot?

Yes. Approving an AI tool does not create a control layer between the employee and the model. Without prompt-level classification in place, PII, source code, and financial records flow to the model regardless of enterprise licensing. The risk lives at the prompt layer, not the procurement layer.

Q. What percentage of employees use personal AI accounts for work tasks?

According to research cited in LangProtect's Securing Employee AI Usage brief, 32.3% of ChatGPT usage in enterprise environments occurs through personal accounts, bypassing SSO, centralised logging, and enterprise data retention controls entirely.

Q. Does GDPR apply to data employees submit into third-party AI tools?

Yes. Submitting personal data about customers or colleagues into a third-party AI tool constitutes a data transfer under GDPR. The organisation remains the data controller and liability stays with the employer, not the AI vendor.

Q. Does employee security training prevent AI data leakage?

Training builds awareness but does not prevent leakage. Research shows 64% of employees already worry about sharing sensitive data with AI tools, yet nearly 50% do it anyway. Prevention requires automated controls at the prompt layer, not additional awareness campaigns.

Q. What is the difference between AI governance and AI security?

AI governance defines the policies that determine how AI should be used in your organisation. AI security enforces those policies at the technical layer in real time. Governance without security is documentation. Security without governance is enforcement without direction. Both are required, and neither replaces the other.